AI in Biotechnology: The Big Interview with Dr Fred Jordan, Co-Founder of FinalSpark

Of course, the ethical consideration is increased because we are using human

cells. From an ethical perspective, what is interesting is that all this

wouldn’t be possible without the iPSCs. Ethically, we don’t need to take the

brain of a real human being to conduct experiments. ... The ultimate goal is to

develop machines with a form of intelligence. We want to create a real function,

something useful. Imagine inputting a picture to the organoid, and it responds,

recognizing objects like cats or dogs. Right now, we are focusing on one

specific function – the significant reduction in energy consumption, potentially

millions to billions of times less than digital computers. As a result, one

practical application could be cloud computing, where these neuron-based systems

consume significantly less energy. This offers an eco-friendly alternative to

traditional computing processing. Ultimately, the future of AI in biotechnology

holds huge potential for various applications because it’s a completely new way

of looking at neurons. It’s like the inventors of the transistor not knowing

about the internet.

AI regulatory landscape and the need for board governance

“We all need to have a plan in place, and we need to be thinking about how are

you using it and whether it is safe.” She underscored the urgency, noting that

journalists are investigating where AI has gone wrong and where it’s

discriminating against people. Additionally, there are lawyers who seize

potential litigation opportunities against ill-prepared, deep-pocketed

organizations. "Good AI hygiene is non-negotiable today, and you must have good

oversight and best practices in place," she asserted. Despite a lack of

comprehensive Congressional AI legislation, Vogel clarified that AI is not

without oversight. Four federal agencies recently committed to ensuring fairness

in emerging AI systems. In a recent statement, agency leaders committed to using

their enforcement powers if AI perpetuates unlawful bias or discrimination. AI

regulatory bills have been proposed by over 30 state legislatures, and the

international community is also ramping up efforts. Vogel cited the European

Union's AI Act as the AI equivalent of the GDPR, which established strict

data privacy regulations affecting companies worldwide.

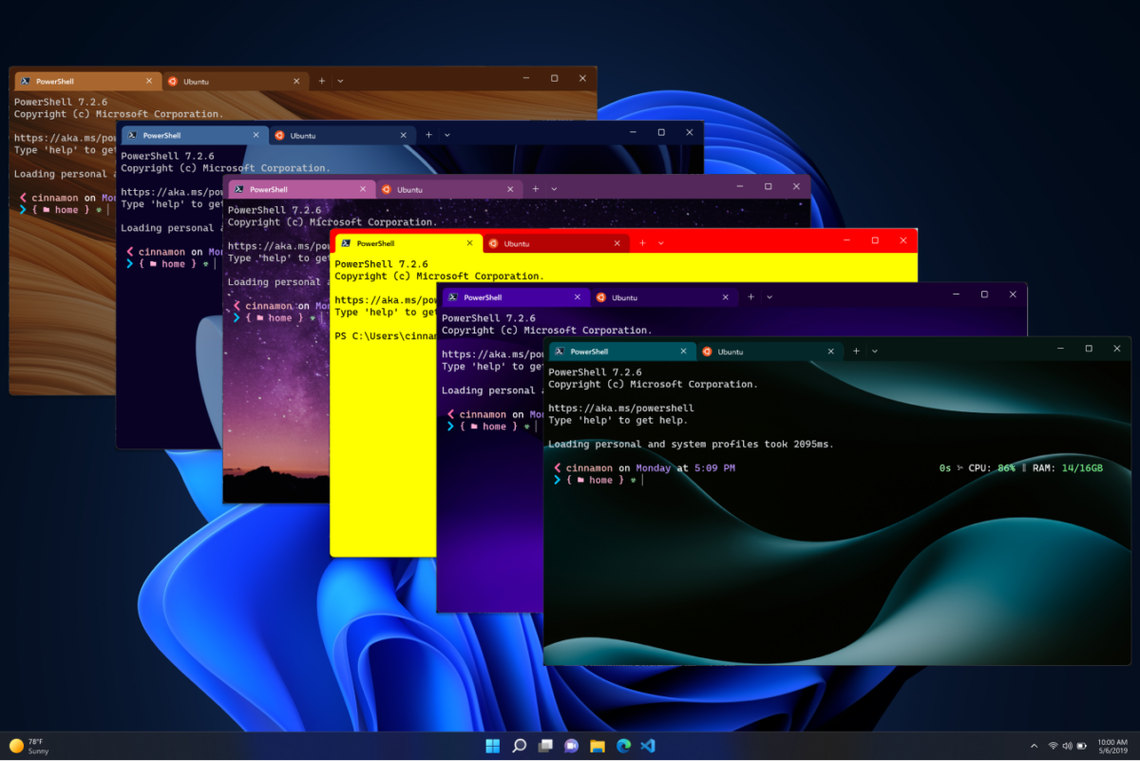

Data Management, Distribution, and Processing for the Next Generation of Networks

Investments in cloud architectures by CSPs span their own resources – but they

also extend to third parties; federated cloud architectures are the result.

These interconnected cloud assets allow CSPs to extend their reach, share

resources and collaborate with other stakeholders to secure desired outcomes.

Why do we combine this with edge computing? Because resources at the edge may

not be in the CSP’s own domain. Edge systems may be a combination of CSP-owned

and other resources that are used in parallel to deliver a particular service.

And, regardless of overall pace towards 5G SA, edge computing is now firmly in

demand by enterprises (and CSPs), to support a new generation of

high-performance, low-latency services. This demand won’t only be served by

CSPs, however. Many enterprises are seeking to deploy private networks – and the

resources required to support their applications may be accessed via federated

clouds. This user may not need its own UPF, but it may benefit from one offered

by another provider in an adjacent edge location, or delivered by a systems

integrator that runs multiple private networks with shared resources, available

on demand.

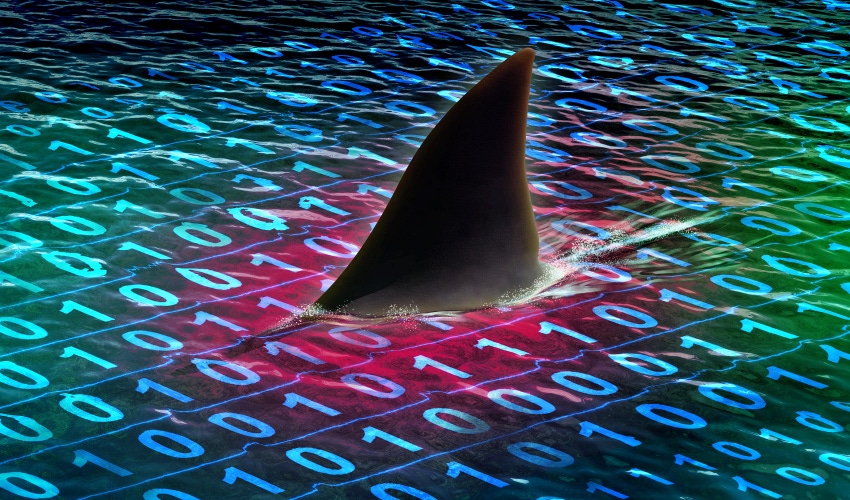

Understanding Each Link of the Cyberattack Impact Chain

There are two ways to assess the cyberattack impact chain: Causes and effects.

To build stakeholder support for CSAT, CISOs have to show the board how much

damage cyberattacks are capable of causing. Beyond the fact that the average

cost of a data breach reached an all-time high of $4.45 million in 2023, there

are many other repercussions: Disrupted services and operations, a loss of

customer trust and a heightened risk of future attacks. CSAT content must inform

employees about the effects of cyberattacks to help them understand the risks

companies face. It’s even more important for company leaders and employees to

have a firm grasp on the causes of cyberattacks. Cybercriminals are experts at

exploiting employees’ psychological vulnerabilities – particularly fear,

obedience, craving, opportunity, sociableness, urgency and curiosity – to steal

money and credentials, break into secure systems and launch cyberattacks.

Consider the MGM attack, which relied on vishing – one of the most effective

social engineering tactics, as it allows cybercriminals to impersonate trusted

entities to deceive their victims.

Another Cyberattack on Critical Infrastructure and the Outlook on Cyberwarfare

Critical infrastructure attacks, like the one against the water authority in

Pennsylvania, have occurred in the wake of the Israel-Hamas war. And

geopolitical tension and turmoil extend beyond this conflict. Russia’s invasion

of Ukraine has sparked cyberattacks. Chinese cyberattacks against government and

industry in Taiwan have increased. “This is just going to be an ongoing part of

operating digital systems and operating with the internet,” Dominique Shelton

Leipzig, a partner and member of the cybersecurity and data privacy practice at

global law firm Mayer Brown, tells InformationWeek. While kinetic weapons are

still very much a part of war, cyberattacks are another tool in the arsenal.

Successful cyberattacks against critical infrastructure have the potential for

widespread devastation. “The landscape of warfare is changing,” says Warner. And

the weaponization of artificial intelligence is likely to increase the scale of

cyberwarfare. “We have the normal technology that we use for denial-of-service

attacks, but imagine being able to do all of that on an even greater scale,”

says Shelton Leipzig.

Continuous Testing in the Era of Microservices and Serverless Architectures

Continuous testing is a practice that emphasizes the need for testing at every

stage of the software development lifecycle. From unit tests to integration

tests and beyond, this approach aims to detect and rectify defects as early as

possible, ensuring a high level of software quality. It extends beyond mere bug

detection to take a holistic view of quality. While unit tests scrutinize

individual components, integration tests evaluate the

collaboration between diverse modules. The practice not only minimizes

defects but also strengthens the robustness of the entire system. ...

Decomposed testing strategies are key to effective microservices testing. This

approach advocates for the examination of each microservice in isolation. It

involves a rigorous process of testing individual services to ensure their

functionality meets specifications, followed by comprehensive integration

testing. This methodical approach not only identifies defects at an early stage

but also guarantees seamless communication between services, aligning with the

modular nature of microservices.
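
The two stages described above, testing a service in isolation and then testing it together with its dependency, can be sketched with Python's standard `unittest` module. This is only an illustration: `PriceService`, `OrderService`, `StubPricing` and `run_suite` are hypothetical names that do not come from the article, and real microservices would communicate over a network rather than through direct method calls.

```python
import unittest


class PriceService:
    """Hypothetical pricing service: adds tax to a net amount."""

    def __init__(self, tax_rate: float) -> None:
        self.tax_rate = tax_rate

    def total(self, net: float) -> float:
        return round(net * (1 + self.tax_rate), 2)


class OrderService:
    """Hypothetical order service that depends on a pricing service."""

    def __init__(self, pricing) -> None:
        self.pricing = pricing

    def place_order(self, items: list[float]) -> dict:
        net = sum(items)
        return {"net": net, "total": self.pricing.total(net)}


class StubPricing:
    """Test double so OrderService can be examined in isolation."""

    def total(self, net: float) -> float:
        return net


class OrderServiceUnitTest(unittest.TestCase):
    def test_order_in_isolation(self):
        # Only OrderService logic is exercised; pricing is stubbed out.
        order = OrderService(StubPricing()).place_order([10.0, 5.0])
        self.assertEqual(order, {"net": 15.0, "total": 15.0})


class OrderPricingIntegrationTest(unittest.TestCase):
    def test_services_together(self):
        # Both real services are wired together to check their collaboration.
        order = OrderService(PriceService(tax_rate=0.2)).place_order([10.0, 5.0])
        self.assertEqual(order["total"], 18.0)


def run_suite() -> unittest.TestResult:
    """Run the isolated test first, then the integration test."""
    loader = unittest.TestLoader()
    suite = unittest.TestSuite()
    suite.addTests(loader.loadTestsFromTestCase(OrderServiceUnitTest))
    suite.addTests(loader.loadTestsFromTestCase(OrderPricingIntegrationTest))
    return unittest.TextTestRunner(verbosity=0).run(suite)


if __name__ == "__main__":
    run_suite()
```

In a continuous testing pipeline, a suite like this would typically run on every commit, so a defect in either service surfaces before the integration stage.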

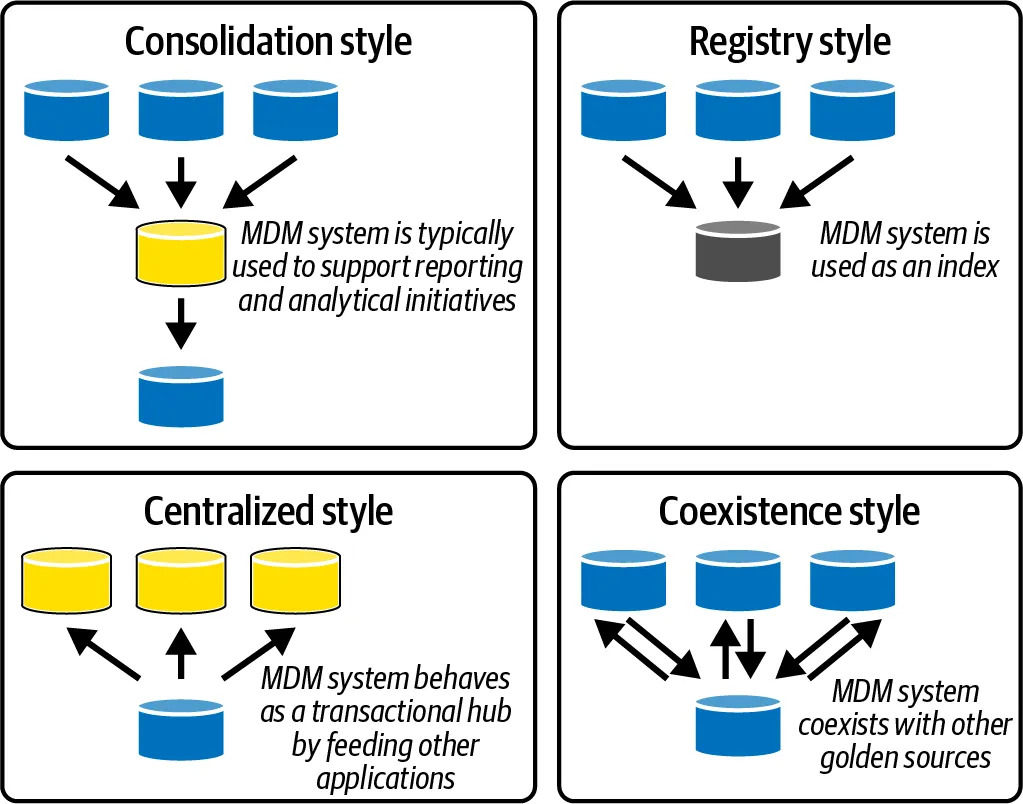

Understanding Master Data Management’s integration challenges

The integration of data within MDM is a very complex task, which should not be

underestimated. Many organizations often have a myriad of source systems, each

with its own data structure and format. These systems can range from commercial

CRM or ERP systems to custom-built legacy software, all of which may use

different data models, definitions, and standards. In addition, organizations

often desire real-time or near-real-time synchronization between the MDM system

and the source systems. Any changes in the source systems need to be immediately

reflected in the MDM system to ensure data accuracy and consistency. Using a

native connector from the MDM system to read data from your operational systems

can provide several benefits, such as ease of integration. This has been

illustrated at the bottom in the image above. However, the choice of using a

native connector or a custom-built one mostly depends on your specific needs,

the complexity of your data, the systems you’re integrating, and the

capabilities of your MDM system.
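
To make the integration problem concrete, the sketch below maps records from two source systems with different layouts onto one master model. Everything in it is an assumption made for illustration: the field names (`Id`, `CUST_NAME`, `MAIL_ADDR` and so on), the `MasterCustomer` model and the e-mail based match rule are hypothetical and do not describe any particular CRM, ERP or MDM product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MasterCustomer:
    """Hypothetical canonical model held by the MDM system."""
    customer_id: str
    name: str
    email: str


def from_crm(record: dict) -> MasterCustomer:
    # Assumed CRM layout: separate first/last name, mixed-case e-mail.
    return MasterCustomer(
        customer_id=f"crm-{record['Id']}",
        name=f"{record['FirstName']} {record['LastName']}".strip(),
        email=record["Email"].strip().lower(),
    )


def from_erp(record: dict) -> MasterCustomer:
    # Assumed ERP layout: "LAST, FIRST" in a single upper-case field.
    last, first = [part.strip().title() for part in record["CUST_NAME"].split(",", 1)]
    return MasterCustomer(
        customer_id=f"erp-{record['CUST_NO']}",
        name=f"{first} {last}",
        email=record["MAIL_ADDR"].strip().lower(),
    )


def merge_by_email(records):
    """Keep the first record seen per e-mail address (a deliberately naive match rule)."""
    golden = {}
    for rec in records:
        golden.setdefault(rec.email, rec)
    return golden


if __name__ == "__main__":
    crm = from_crm({"Id": 7, "FirstName": "Ada", "LastName": "Lovelace",
                    "Email": " Ada@Example.com "})
    erp = from_erp({"CUST_NO": "0042", "CUST_NAME": "LOVELACE, ADA",
                    "MAIL_ADDR": "ada@example.com"})
    print(merge_by_email([crm, erp]))
```

A native connector would normally hide this mapping work, whereas a custom-built integration has to implement and maintain something like it for every source system.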

Aim for a modern data security approach

Beginning with data observability, a “shift left” implementation requires that

data security become the linchpin before any application is put into production.

Instead of being confined to data quality or data reliability, security needs to

become another use case application of the underlying data and be unified into

the rest of the data observability subsystem. By doing this, data security

benefits from the alerts and notifications stemming from data observability

offerings. Data governance platform capabilities typically include business

glossaries, catalogs, and data lineage. They also leverage metadata to

accelerate and govern analytics. In “shift left” data governance, the same

metadata is augmented by data security policies and user access rights to

further increase trust and allow appropriate users to access data. Leveraging

and establishing comprehensive data observability and governance is the key to

data democratization. As a result, these proactive and transparent views over

the security of critical data elements will also accelerate application

development and improve productivity.
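
One way to picture governance metadata augmented with security policies is a catalog entry that carries a classification and allowed roles next to its lineage. The sketch below is a hypothetical data structure, not the API of any governance platform; `CatalogEntry`, `can_read` and `check_before_release` are illustrative names.

```python
from dataclasses import dataclass, field


@dataclass
class CatalogEntry:
    """Hypothetical catalog record: governance metadata plus security policy."""
    name: str
    lineage: list[str]                     # upstream datasets
    classification: str = "public"         # e.g. "public" or "restricted"
    allowed_roles: set[str] = field(default_factory=set)


def can_read(entry: CatalogEntry, role: str) -> bool:
    """Public data is open to everyone; other data needs an explicitly allowed role."""
    if entry.classification == "public":
        return True
    return role in entry.allowed_roles


def check_before_release(entries):
    """Shift-left gate: list non-public entries that have no access policy yet."""
    return [e.name for e in entries
            if e.classification != "public" and not e.allowed_roles]


if __name__ == "__main__":
    catalog = [
        CatalogEntry("web_clicks", lineage=["raw_logs"]),
        CatalogEntry("salaries", lineage=["hr_db"], classification="restricted",
                     allowed_roles={"hr_analyst"}),
        CatalogEntry("patients", lineage=["emr"], classification="restricted"),
    ]
    print(can_read(catalog[1], "hr_analyst"))
    print(check_before_release(catalog))
```

Running a check such as `check_before_release` before deployment is one possible reading of "shift left": the missing policy is caught before the application reaches production.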

Google expands minimum security guidelines for third-party vendors

"The expanded guidance around external vulnerability protection aims to provide

more consistent legal protection and process to bug hunters that want to protect

themselves from being prosecuted or sued for reporting findings," says Forrester

Principal Analyst Sandy Carielli. "It also helps set expectations about how

companies will work with researchers. Overall, the expanded guidance will help

build trust between companies and security researchers." The enhanced guidance

encourages more comprehensive and responsible vulnerability disclosures, says

Jan Miller, CTO of threat analysis at OPSWAT, a threat prevention and data

security company. "That contributes to a more secure digital ecosystem, which is

especially crucial in critical infrastructure sectors where vulnerabilities can

have significant repercussions," he says.

Europe Reaches Deal on AI Act, Marking a Regulatory First

"Europe has positioned itself as a pioneer, understanding the importance of its

role as global standard setter," said Thierry Breton, the European commissioner

for internal market, who had a key role in negotiations. The penalties for

noncompliance with the rules can lead to fines of up to 7% of global revenue,

depending on the violation and size of the company. What the final regulation

ultimately requires of AI companies will be felt globally, a phenomenon known as

the Brussels effect since the European Union often succeeds in approving

cutting-edge regulations before other jurisdictions. The United States is

nowhere near approving a comprehensive AI regulation, leaving the Biden

administration to rely on executive orders, voluntary commitments and existing

authorities to combat issues such as bias, deep fakes, privacy and security.

European officials had no difficulty in agreeing that the regulation should ban

certain AI applications such as social scoring or that regulations should take a

tiered approach that treats high-risk systems, such as those that could

influence the outcome of an election, with greater requirements for transparency

and disclosure.

Quote for the day:

"It is never too late to be what you

might have been." -- George Eliot