Quote for the day:

"The best reason to start an organization is to make meaning; to create a product or service to make the world a better place." -- Guy Kawasaki

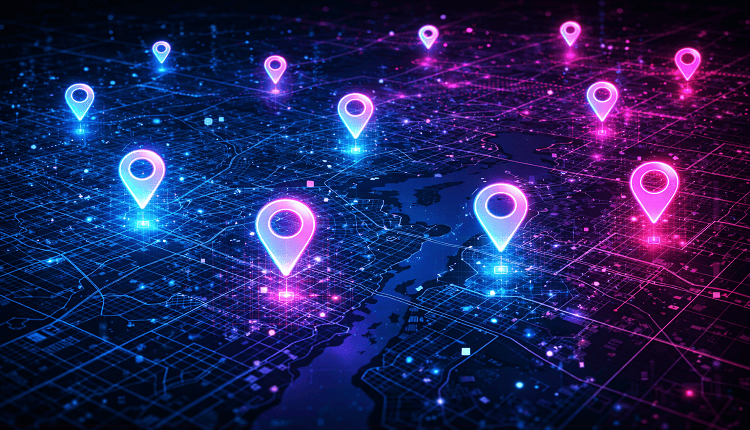

PIN It to Win It: India’s digital address revolution

DIGIPIN is a nationwide geo-coded addressing system developed by the Department

of Posts in collaboration with IIT Hyderabad. It divides India into

grid cells of approximately 4 m x 4 m and assigns each cell a unique 10-character

alphanumeric code based on latitude and longitude coordinates. The ability of

DIGIPIN to function as a persistent, interoperable location identifier across

India’s dispersed public and private networks is what gives it its real power.

Unlike normal addresses, which depend on textual descriptions, a DIGIPIN

condenses the geo-coordinates, administrative metadata and unique spatial

identifiers into a 10-character alphanumeric string. As a result, DIGIPIN

is readable by machines, compatible with maps and unaffected by changes in

naming conventions. When combined with systems like Aadhaar (identity), UPI

(payments), ULPIN (land) and UPIC (property), DIGIPIN can enable seamless KYC

validation, last-mile delivery automation, digital land titling and geographic

analytics. ... For DIGIPIN to become the default address format in India, it has

to succeed across three critical dimensions: A 10-character code might be

accurate, but is it memorable? For a busy delivery rider or a rural farmer,

remembering and sharing it must be easier than reciting a landmark-heavy

address. The code must be accepted across platforms – Aadhaar, land registries,

GST, KYC forms, food delivery apps and banks.
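The idea of turning a latitude/longitude pair into a short grid code can be sketched as repeated 4 x 4 subdivision. The sketch below is illustrative only: the bounding box, the symbol alphabet and the function names `encode` and `decode` are assumptions made for this example, and the official DIGIPIN symbol layout may differ. With an assumed 36-degree span, ten levels of 4-way splitting give cells of 36 / 4^10 ≈ 0.0000343 degrees, which is roughly 3.8 m north to south at about 111 km per degree of latitude, consistent with the "approximately 4 m" figure.

```python
from typing import Tuple

# Illustrative hierarchical grid code. The bounding box and symbol order below
# are assumptions for this sketch, not the official DIGIPIN specification.
LAT_MIN, LAT_MAX = 2.5, 38.5      # assumed southern/northern limits (degrees)
LON_MIN, LON_MAX = 63.5, 99.5     # assumed western/eastern limits (degrees)
SYMBOLS = "0123456789ABCDEF"      # hypothetical alphabet: one symbol per 4x4 cell
LEVELS = 10                       # ten characters, as described in the text


def encode(lat: float, lon: float) -> str:
    """Return a 10-character code for the grid cell containing (lat, lon)."""
    if not (LAT_MIN <= lat < LAT_MAX and LON_MIN <= lon < LON_MAX):
        raise ValueError("coordinate outside the assumed bounding box")
    lat_lo, lon_lo = LAT_MIN, LON_MIN
    lat_size, lon_size = LAT_MAX - LAT_MIN, LON_MAX - LON_MIN
    chars = []
    for _ in range(LEVELS):
        # Split the current cell into a 4x4 grid and pick the sub-cell.
        lat_size /= 4
        lon_size /= 4
        row = min(int((lat - lat_lo) / lat_size), 3)
        col = min(int((lon - lon_lo) / lon_size), 3)
        chars.append(SYMBOLS[row * 4 + col])
        lat_lo += row * lat_size
        lon_lo += col * lon_size
    return "".join(chars)


def decode(code: str) -> Tuple[float, float]:
    """Return the centre (lat, lon) of the cell named by the code."""
    lat_lo, lon_lo = LAT_MIN, LON_MIN
    lat_size, lon_size = LAT_MAX - LAT_MIN, LON_MAX - LON_MIN
    for ch in code:
        row, col = divmod(SYMBOLS.index(ch), 4)
        lat_size /= 4
        lon_size /= 4
        lat_lo += row * lat_size
        lon_lo += col * lon_size
    return lat_lo + lat_size / 2, lon_lo + lon_size / 2


code = encode(17.3850, 78.4867)   # a point in Hyderabad
centre_lat, centre_lon = decode(code)
```

Because every character narrows the cell by a fixed factor, codes that share a prefix name nearby locations, which is one reason a scheme like this is easy for machines to index.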

DIGIPIN is a nationwide geo-coded addressing system developed by the Department

of Posts in collaboration with IIT Hyderabad. It divides India into

approximately 4m x 4m grids and assigns each grid a unique 10-character

alphanumeric code based on latitude and longitude coordinates. The ability of

DIGIPIN to function as a persistent, interoperable location identifier across

India’s dispersed public and private networks is what gives it its real power.

Unlike normal addresses, which depend on textual descriptions, a DIGIPIN

condenses the geo-coordinates, administrative metadata and unique spatial

identifiers into a 10-character alphanumeric string. Because of which, DIGIPIN

is readable by machines, compatible with maps and unaffected by changes in

naming conventions. When combined with systems like Aadhaar (identity), UPI

(payments), ULPIN (land) and UPIC (property), DIGIPIN can enable seamless KYC

validation, last-mile delivery automation, digital land titling and geographic

analytics. ... For DIGIPIN to become the default address format in India, it has

to succeed across three critical dimensions: A 10-character code might be

accurate, but is it memorable? For a busy delivery rider or a rural farmer,

remembering and sharing it must be easier than reciting a landmark-heavy

address. The code must be accepted across platforms – Aadhaar, land registries,

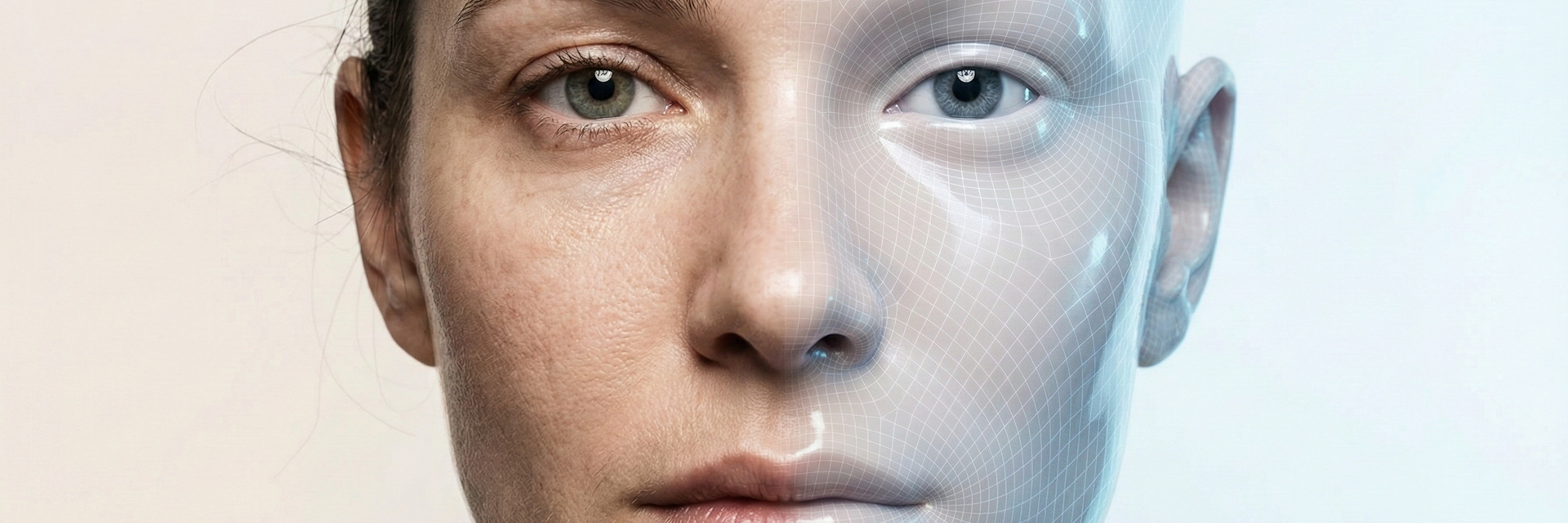

GST, KYC forms, food delivery apps and banks. Deepfakes leveled up in 2025 – here’s what’s coming next

Over the course of 2025, deepfakes improved dramatically. AI-generated faces,

voices and full-body performances that mimic real people increased in quality

far beyond what even many experts expected just a few years

ago. They were also increasingly used to deceive people. For many everyday

scenarios — especially low-resolution video calls and media shared on social

media platforms — their realism is now high enough to reliably fool nonexpert

viewers. In practical terms, synthetic media have become indistinguishable from

authentic recordings for ordinary people and, in some cases, even for

institutions. And this surge is not limited to quality. ... Looking forward, the

trajectory for next year is clear: Deepfakes are moving toward real-time

synthesis that can produce videos that closely resemble the nuances of a human’s

appearance, making it easier for them to evade detection systems. The frontier

is shifting from static visual realism to temporal and behavioral coherence:

models that generate live or near-live content rather than pre-rendered clips.

... As these capabilities mature, the perceptual gap between synthetic and

authentic human media will continue to narrow. The meaningful line of defense

will shift away from human judgment. Instead, it will depend on

infrastructure-level protections. These include secure provenance, such as

cryptographically signed media, and AI content tools that use the Coalition for

Content Provenance and Authenticity (C2PA) specifications.
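To make "media signed cryptographically" concrete, here is a minimal sketch of the sign-then-verify idea using only Python's standard library. It is a stand-in under stated assumptions: the function names `sign_media` and `verify_media` are hypothetical, and a shared-key HMAC is used for brevity, whereas real provenance schemes such as C2PA rely on public-key signatures and signed manifests rather than a shared secret.

```python
import hashlib
import hmac


def sign_media(media_bytes: bytes, key: bytes) -> str:
    """Hash the media, then authenticate the hash with a secret key (HMAC-SHA256)."""
    digest = hashlib.sha256(media_bytes).digest()
    return hmac.new(key, digest, hashlib.sha256).hexdigest()


def verify_media(media_bytes: bytes, key: bytes, signature: str) -> bool:
    """Recompute the signature and compare in constant time."""
    expected = sign_media(media_bytes, key)
    return hmac.compare_digest(expected, signature)


key = b"demo-key-for-illustration-only"   # assumption: key handling is out of scope
original = b"raw bytes of a video frame"
tag = sign_media(original, key)

untouched_ok = verify_media(original, key, tag)              # unchanged media
tampered_ok = verify_media(original + b"edit", key, tag)     # altered media
```

The point of the design is that verification depends on the bytes and a key, not on whether the content looks authentic to a human viewer.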

Your Core Is Being Retired. Now What?

Eventually, all financial institutions will find themselves in the position of

voluntarily or involuntarily going through a core migration. The stock market

recently hammered one of the largest core processing companies in the world,

effectively confirming publicly what most of the industry has known for years:

the company was more concerned about financial engineering of the share price

than about product engineering that delivers a better outcome for its clients.

Unfortunately, the market also learned recently that the largest core processing

provider will soon be making some big changes and consolidating many of its core

systems. It’s hard to imagine how a software company can effectively support and

maintain this many diverse core platforms, so the rationale behind this

decision seems obvious and the consolidation necessary. However, this is an incredibly risky

inflection point for banks and credit unions on platforms targeted for

retirement. The hope and bet is that most clients will be incentivized to

migrate to one of the remaining cores. ... The retirement of your core is

an opportunity to rethink the foundation of your institution’s future. While no

core conversion is easy, those who approach it strategically, armed with data,

foresight, and the right partners, can turn a forced migration into a

competitive advantage. The next generation of cores promises greater

flexibility, integration and scalability, but only for institutions that

negotiate wisely, plan deliberately, and take control of their own timelines

before someone else does.

Eventually, all financial institutions will find themselves in the position of

voluntarily or involuntarily going through a core migration. The stock market

hammered one of the largest core processing companies in the world recently,

effectively admitting publicly what most of the industry has known for years:

They were more concerned about financial engineering of the share price than

they were about product engineering a better outcome for their clients.

Unfortunately, the market also learned recently that the largest core processing

provider will soon be making some big changes and consolidating many of its core

systems. It’s hard to imagine how a software company can effectively support and

maintain this many diverse core platforms – and the rationale behind this

decision seems obvious and needed. However, this is an incredibly risky

inflection point for banks and credit unions on platforms targeted for

retirement. The hope and bet is that most clients will be incentivized to

migrate to one of the remaining cores. ... The retirement of your core is

an opportunity to rethink the foundation of your institution’s future. While no

core conversion is easy, those who approach it strategically, armed with data,

foresight, and the right partners, can turn a forced migration into a

competitive advantage. The next generation of cores promises greater

flexibility, integration and scalability, but only for institutions that

negotiate wisely, plan deliberately, and take control of their own timelines

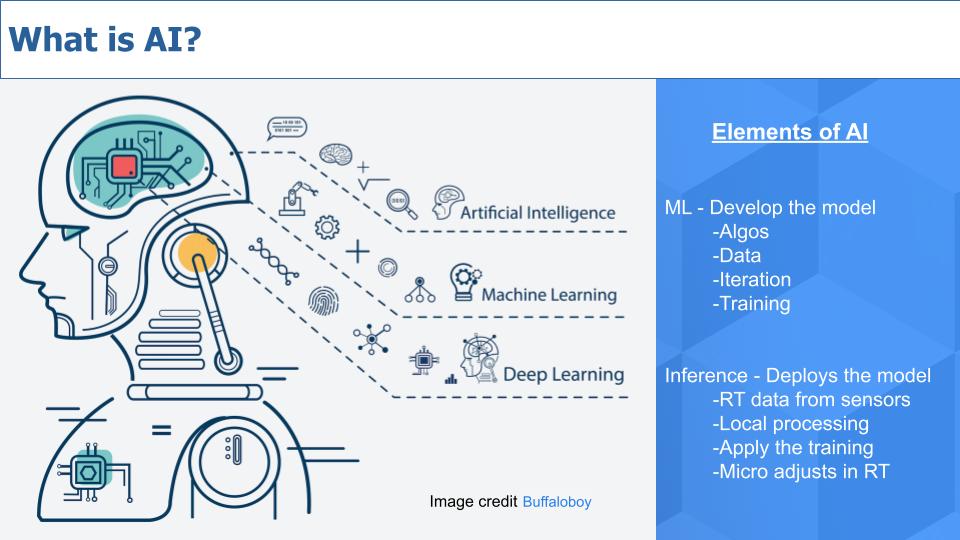

before someone else does.Whether AI is a bubble or revolution, how does software survive?

Bubble or not, AI has certainly made some waves, and everyone is looking to find

the right strategy. It’s already caused a great deal of disruption—good and

bad—among software companies large and small. The speed at which the technology

has moved since its coming-out party has been stunning; costs have dropped,

hardware and software have improved, and the mediocre version of many jobs can

be replicated in a chat window. It’s only going to continue. “AI is positioned

to continuously disrupt itself,” said McConnell. “It's going to be a constant

disruption. If that's true, then all of the dollars going to companies today are

at risk because those companies may be disrupted by some new technology that's

just around the corner.” First up on the list of disruption targets: startups.

If you’re looking to get from zero to market fit, you don’t need to build the

same kind of team you used to. “Think about the ratios between how many

engineers there are to salespeople,” said Tunguz. “We knew what those were for

10 or 15 years, and now none of those ratios actually hold anymore. If we

really are in a position that a single person can have the productivity of 25,

management teams look very different. Hiring looks extremely different.” That’s

not to say there won’t be a need for real human coders. We’ve seen how badly the

vibe coding entrepreneurs get dunked on when they put their shoddy apps in front

of a merciless internet.

Why Windows Just Became Disruptible in the Agentic OS Era

Identity is where the cracks show early. Traditional Windows environments assume

a human logging into a device, launching applications, and accessing resources

under their account. Entra ID and Active Directory groups, role-based access

control across Microsoft 365, and Conditional Access policies all grew out of

that pattern. An agentic environment forces a different set of questions. Who is

authenticated when an agent books a conference room, issues a purchase order

draft, or requests a sensitive dataset? How should policy cope with agents that

mix personal and organizational context, or that act for multiple managers

across overlapping projects? What happens when an internal agent needs to

negotiate with an external agent that belongs to a partner or supplier? ...

Agentic systems improve as they see more behavior. Early customers who allow

their interactions, decisions, and corrections to be observed become de facto

trainers for the platform. That creates a race to capture training data, not

just market share. The same is true for the user experience. How people “vibe

reengineer” processes isn’t optimized yet. The vendor that gets that experience

right will empower AI-savvy users in new ways, and deep knowledge about those

emerging processes will be hard to copy. It is likely, however, that more than

one approach will emerge, which will set up the next round of competition.
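One way to picture the "who is authenticated when an agent acts?" question is as an explicit delegation record that ties each agent to the person it acts for and the actions it may take. The sketch below is purely hypothetical: `Delegation`, `authorize`, the identifiers and the scope names are invented for illustration and do not correspond to any Entra ID, Active Directory or Microsoft 365 API.

```python
from dataclasses import dataclass
from typing import FrozenSet, Iterable


@dataclass(frozen=True)
class Delegation:
    """A hypothetical record: this agent may act for this principal within these scopes."""
    agent_id: str
    principal: str            # the human (or organization) the agent acts for
    scopes: FrozenSet[str]    # actions the principal has delegated


def authorize(delegations: Iterable[Delegation], agent_id: str,
              principal: str, action: str) -> bool:
    """Allow an action only if a matching delegation explicitly covers it."""
    return any(
        d.agent_id == agent_id and d.principal == principal and action in d.scopes
        for d in delegations
    )


# One agent acting for two managers with different delegated scopes.
delegations = [
    Delegation("agent-7", "manager-a", frozenset({"book_room", "draft_purchase_order"})),
    Delegation("agent-7", "manager-b", frozenset({"book_room"})),
]

can_draft_for_a = authorize(delegations, "agent-7", "manager-a", "draft_purchase_order")
can_draft_for_b = authorize(delegations, "agent-7", "manager-b", "draft_purchase_order")
```

Keeping the principal in every check means the same agent can be allowed to draft a purchase order for one manager and refused for another, which is the multi-manager case the questions above raise.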

SaaS attacks surge as boards turn to AI for defence

Why CIOs must lead AI experimentation, not just govern it

The role of IT leadership is undergoing a profound transformation. We were once

the gatekeepers of technology. Then came SaaS, which began to democratize

technology access, putting powerful tools directly into the hands of employees.

AI represents an even more significant shift. It can feel intimidating, and as

leaders, we have a crucial responsibility to demystify it and make it

accessible. Much like the dot-com boom, we're witnessing a transformative

moment, and IT leaders must harness this potential to drive innovation. ... The

key to successful AI adoption is fostering a culture of learning and

experimentation. Employees at all levels, whether developers or non-developers,

executives or individual contributors, must have the opportunity to get their

hands on AI tools and understand how they work. Some companies are having

employees train AI models and learn prompt engineering, which is a fantastic way

to remove the mystery and show people how AI truly functions. We’re encouraging

our own teams to write prompts and train chatbots, aiming for AI to become a

true copilot in their daily tasks. Think of it as akin to an athlete who trains

consistently, refining their skills to achieve better results. That’s the

feeling we want our employees to have with AI — a tool that makes their work

faster, better and, ultimately, more meaningful and joyful. My own mother’s

relationship with her voice assistant, which has become an integral part of her

life, is a simple reminder of how seamlessly technology can integrate when it’s

genuinely helpful.

AI, fraud and market timing drive biometrics consolidation in 2025 … and maybe 2026

Fraud has overwhelmed organizations of all kinds, and Verley emphasizes the

degree to which this has pulled enterprise teams and market players in adjacent

areas together. AI has contributed to this wave of fraud in several important

ways. The barrier to entry has been lowered, and forgeries are now scalable in a

way cybercriminals could only have dreamed of just a few years ago. The

proliferation of generative AI tools has also changed the state of the art in

biometric liveness detection, with injection attack detection (IAD) now table

stakes for secure remote user onboarding the way presentation attack detection

(PAD) has been for the last several years. ... Reducing fraud is part of the

motivation behind the EU Digital Identity Wallet, which launches in the year

ahead and ties digital IDs to government-issued biometric documents with

electronic chips. “That’s going to mean a huge uptick in onboarding people to

issue them these new credentials that are going to be big in identity

verification, and that’s going to be the best way to do that,” Goode says. At

the same time, businesses that had no choice but to pay for identity services

during the pandemic now have more choice, Verley says. So providers are emphasizing

fraud protection to justify the value of their products. ... Uncertainty is a

central feature of the AI market landscape, and Goode notes the possibility that

if predictions of the AI market popping like a bubble in 2026 come true,

restricted credit availability “could put a damper on acquisitions.”

Why Strategic Planning Without CIOs Fails

For large IT projects exceeding $15 million in initial budget, the research

found average cost overruns of 45%, value delivery 56% below predictions, and

17% of projects becoming black swan events with cost overruns exceeding 200%,

sometimes threatening organizational survival. These outcomes are not random.

BCG 2024 research surveying global C-suite executives across 25 industries found

that organizations including technology leaders from the start of strategic

initiatives achieve 154% higher success rates than those that do not. When CIOs

enter after critical decisions are made, organizations discover mid-execution

that constraints render promised features impossible, integration requirements

multiply beyond projections, and vendor capabilities fail to match sales

promises. Direct project costs pale beside the accumulated burden of technical

debt. ... Gartner’s 2025 CIO Survey (released October 2024), which surveyed over

3,100 CIOs and technology executives, revealed that only 48% of digital

initiatives meet or exceed their business outcome targets. However, Digital

Vanguard CIOs, who co-own digital delivery with business leaders, achieve a 71%

success rate. That 48% relative improvement (23 percentage points) represents the difference between coin-flip

odds and a reliable strategic advantage. Failed transformations do not merely

waste money. They consume organizational capacity that could deliver value

elsewhere.
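The number 48% appears twice in the Gartner figures with different meanings, once as the baseline success rate and once as the size of the improvement. A short calculation, using only the two success rates quoted above (the variable names are illustrative), shows how both readings fit together:

```python
baseline = 0.48   # share of digital initiatives meeting targets overall (quoted above)
vanguard = 0.71   # success rate for Digital Vanguard CIOs (quoted above)

# Absolute gap, in percentage points.
point_gain = (vanguard - baseline) * 100

# Relative improvement over the baseline, in percent.
relative_gain = (vanguard - baseline) / baseline * 100

print(f"{point_gain:.0f} percentage points, {relative_gain:.0f}% relative improvement")
# prints "23 percentage points, 48% relative improvement"
```

So the improvement is 23 percentage points in absolute terms, and about 48% when measured relative to the 48% baseline.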

Top 3 Reasons Why Data Governance Strategies Fail

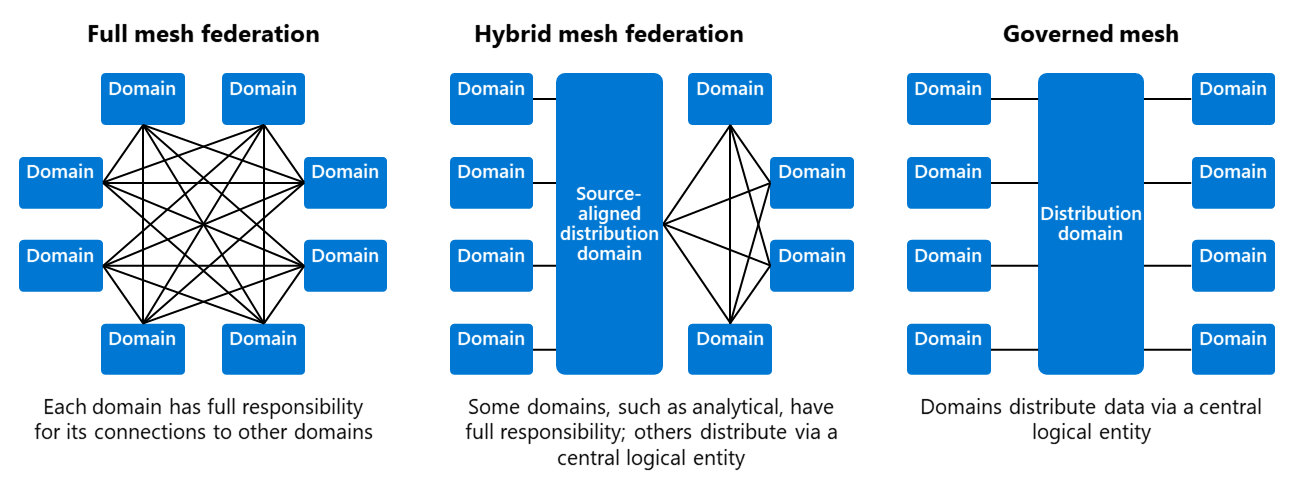

Clearly, data governance is policy, not a solution. It nests within any

organization that has deployed business analytics as part of its overall

strategy – in fact, one of the reasons for data governance failure is that it is

not aligned with an enterprise’s business strategy. Governance is about

ensuring the proper implementation of business rules and controls around your

organization’s data. It involves the wholehearted participation of all company

departments, especially IT and business. Any attempt to run it in a vacuum or

silo means it’s imminently doomed. ... A well-thought-out data governance plan

must have a governing body and a defined set of procedures with a plan to

execute them. To begin with, one has to identify the custodians of an

enterprise’s data assets. Accountability is key here. The policy must determine

who in the system is responsible for various aspects of the data, including

quality, accessibility, and consistency. Then come the processes. A set of

standards and procedures must be defined and developed for how data is stored,

backed up, and protected. Not to be left out, a good data governance plan must also

include an audit process to ensure compliance with government regulations. ...

If an enterprise does not know where it’s headed with its data governance plan,

reflected in black and white, it’s bound to stutter. Things like targets

achieved, dollars saved, and risks mitigated need to be measured and

recorded.
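The accountability step described above, deciding who is responsible for quality, accessibility and consistency of each data asset, can be written down as a simple registry and checked for gaps. This is a minimal sketch under stated assumptions: the asset names, owner addresses and the `accountability_gaps` helper are hypothetical examples, not part of any governance product.

```python
# The three aspects of responsibility named in the text.
REQUIRED_ASPECTS = ("quality", "accessibility", "consistency")

# Hypothetical registry: data asset -> aspect -> accountable custodian.
custodians = {
    "customer_records": {
        "quality": "data-steward@example.com",
        "accessibility": "it-ops@example.com",
        "consistency": "data-steward@example.com",
    },
    "sales_ledger": {
        "quality": "finance-lead@example.com",
        # accessibility and consistency have no named owner yet
    },
}


def accountability_gaps(registry):
    """Return, for each asset, the aspects that have no named custodian."""
    gaps = {}
    for asset, owners in registry.items():
        missing = [aspect for aspect in REQUIRED_ASPECTS if not owners.get(aspect)]
        if missing:
            gaps[asset] = missing
    return gaps


gaps = accountability_gaps(custodians)
# gaps == {"sales_ledger": ["accessibility", "consistency"]}
```

A check like this could also feed the audit process, since an asset with no named custodian is an accountability gap that can be measured and recorded.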
