What It Means to Be a Software Architect — and Why It Matters

One of the misperceptions that architects face is that we are engaging in architecture for architecture’s sake, or that we propose new technologies mainly because of the “coolness” factor. Our challenge is to counter this misperception by arguing not merely for the aesthetic value of good design, but for its pragmatic, economic value. We need to frame intentional design as something that can save the company significant costs by averting disadvantageous technology and design choices, produce a distinct competitive edge through market differentiation, and pave the way for increased customer satisfaction. ...

My commentary on some of Martin Fowler’s views of software architecture is not intended to paint a complete picture of this important role and how it differs from other types of architects. Rather, I’ve sought to highlight the importance of designing the structure of a system at the code level, so that applying the relevant patterns results in a design that can sustain cumulative functionality over time, increasing business value while reducing time to market.

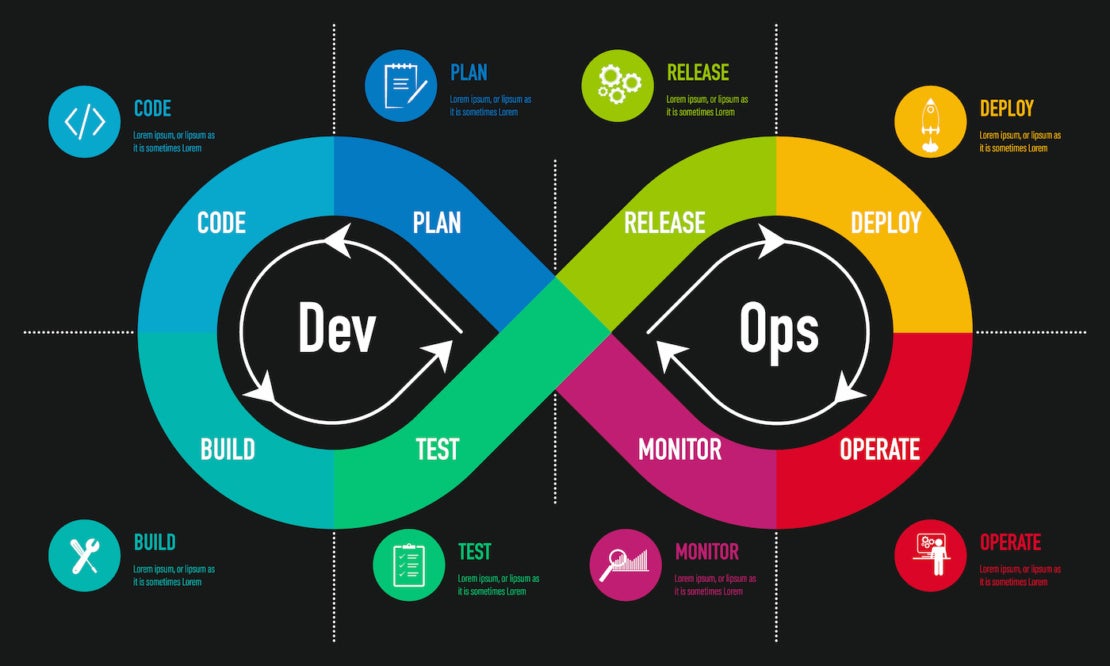

5 Ways to Supercharge Incident Remediation with Automation

Deciding what to automate is a balance between your confidence in the automation, the value or cost of the incident, and how frequently the task occurs. Common incidents with proven automated steps for diagnosis and remediation are good candidates to trigger automatically with AIOps. From there, follow a similar process to prioritize your incident response: automate diagnosis and remediation steps for serious outages to speed resolution, then focus on increasing efficiency by automating recurring diagnostic and remediation actions that occur across many kinds of incidents.
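
As a rough illustration of that balance, the sketch below scores hypothetical automation candidates by multiplying confidence, cost per incident, and monthly frequency. The `Candidate` fields, the multiplicative formula, and the sample numbers are assumptions made for illustration, not a method the article prescribes; a real team would calibrate its own weighting.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Candidate:
    """A remediation task being considered for automation (hypothetical model)."""
    name: str
    confidence: float         # 0.0-1.0: how much we trust the automated steps
    cost_per_incident: float  # business cost of one occurrence, e.g. in dollars
    monthly_frequency: int    # how often the task comes up per month


def priority(c: Candidate) -> float:
    # Multiplicative score: an untrusted automation (confidence 0) scores 0
    # no matter how costly or frequent the incident is.
    return c.confidence * c.cost_per_incident * c.monthly_frequency


def rank(candidates: List[Candidate]) -> List[Candidate]:
    """Highest-priority automation candidates first."""
    return sorted(candidates, key=priority, reverse=True)


if __name__ == "__main__":
    backlog = [
        Candidate("restart stuck worker", 0.9, 200.0, 30),
        Candidate("fail over primary database", 0.6, 50_000.0, 1),
        Candidate("collect diagnostics bundle", 0.95, 50.0, 120),
    ]
    for c in rank(backlog):
        print(f"{c.name}: {priority(c):.0f}")
```

A multiplicative score was chosen so that low confidence pulls a task down the list even when the incident is expensive; an additive score would let cost alone dominate.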

You can safely automate and trigger lower-risk actions, such as read-only diagnostic pulls, with AIOps, giving downstream personnel the information they need by the time they are paged. You can also automate common remediation actions and make them available for responders to run. This automation can use secrets management tools such as Vault to enable privileged actions in production environments without sharing credentials, making it safer to delegate those actions to responders.
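
A minimal sketch of the risk split described above, assuming a hypothetical `Action` model with two risk levels: read-only actions run automatically when an alert fires, while mutating remediation actions run only once a responder has approved them. Retrieving privileged credentials from a secrets manager such as Vault at execution time is deliberately left out; here the actions are plain callables.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable, Iterable, List, Set


class Risk(Enum):
    READ_ONLY = "read_only"  # e.g. pulling logs, metrics, or config for diagnosis
    MUTATING = "mutating"    # e.g. restarting a service or failing over


@dataclass
class Action:
    name: str
    risk: Risk
    run: Callable[[], str]  # hypothetical: returns a short result summary


def handle_alert(actions: Iterable[Action], approved: Set[str]) -> List[str]:
    """Run read-only actions automatically; run mutating ones only if approved."""
    results = []
    for action in actions:
        if action.risk is Risk.READ_ONLY or action.name in approved:
            results.append(f"{action.name}: {action.run()}")
        else:
            # Surface the action to the on-call responder instead of running it
            results.append(f"{action.name}: awaiting responder approval")
    return results


if __name__ == "__main__":
    actions = [
        Action("pull recent error logs", Risk.READ_ONLY, lambda: "collected logs"),
        Action("restart api service", Risk.MUTATING, lambda: "restarted"),
    ]
    print(handle_alert(actions, approved=set()))
    print(handle_alert(actions, approved={"restart api service"}))
```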

Tech Pros Quitting Over Salary Stagnation, Stress

Gartner Vice President Analyst Lily Mok told InformationWeek via email that CIOs should work with their recruitment and compensation teams to identify IT roles and skills areas facing higher attrition risk and recruitment challenges due to noncompetitive compensation. “This will help pinpoint [where] additional funding will be needed in the short term to address pay gaps,” she says. “Organizations with limited financial resources should prioritize allocating increases to high-risk areas.” She also recommends spot-checking market pay conditions at least quarterly and updating pay benchmarks for key IT roles and skills areas with more recent data. “At [the] very least, I would recommend [an] annual review of market pay levels for key IT jobs and skills area[s],” Mok adds. ...

Another suggestion is to create a separate salary structure for IT, an approach that avoids force-fitting IT jobs into enterprise-wide pay grades, which often place more weight on internal equity than on external competitiveness when valuing jobs across different functions.

Unlocking Cyber Resilience: The Role Of SBOMs In Cybersecurity

Implementing an SBOM strategy is a step toward fortifying your cybersecurity defenses. While having a list of the components that make up your software supply chain is better than not having one, context is also crucial. You want to know not only that you have a given code module, but all of its associated data as well. Vulnerabilities and exploits tend to affect specific versions, so you need to know which versions are in your environment, when that code was released, where and how it is used, and so on.
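
To make the point about version context concrete, the sketch below keeps a small inventory of components with their version, release date, and where they are deployed, then checks which entries fall inside an advisory's affected version range. The `Component` and `Advisory` types, the half-open `[introduced, fixed)` range, and the `example-lib` data are all hypothetical, and `parse_version` assumes plain dotted numeric versions with the same number of parts.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Tuple


def parse_version(version: str) -> Tuple[int, ...]:
    # Assumes plain dotted numeric versions like "1.4.2"; pre-release
    # suffixes such as "-beta" would need a real version parser.
    return tuple(int(part) for part in version.split("."))


@dataclass
class Component:
    name: str
    version: str
    released: date
    used_in: List[str]  # services or hosts where this build is deployed


@dataclass
class Advisory:
    component: str
    introduced: str  # first affected version (inclusive)
    fixed: str       # first fixed version (exclusive)


def affected(inventory: List[Component], advisory: Advisory) -> List[Component]:
    """Return inventory entries whose version falls in [introduced, fixed)."""
    low, high = parse_version(advisory.introduced), parse_version(advisory.fixed)
    return [
        c for c in inventory
        if c.name == advisory.component and low <= parse_version(c.version) < high
    ]


if __name__ == "__main__":
    inventory = [
        Component("example-lib", "1.4.2", date(2022, 3, 1), ["billing-api"]),
        Component("example-lib", "1.6.0", date(2023, 1, 15), ["web-frontend"]),
        Component("other-lib", "1.4.0", date(2022, 8, 9), ["batch-worker"]),
    ]
    advisory = Advisory("example-lib", introduced="1.3.0", fixed="1.5.0")
    for c in affected(inventory, advisory):
        print(c.name, c.version, "released", c.released, "used in", c.used_in)
```

Because each entry records where the component is used, the same query that finds the vulnerable version also tells you which services to patch first.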

Automation is essential. It’s impractical, bordering on impossible, to manage or maintain an accurate SBOM through any manual process. Automating SBOM generation and maintenance reduces the margin for human error, speeds up response, and lets organizations scale their security practices as they grow. Compliance is another piece of the puzzle. Your SBOM solution should align with industry standards and regulatory requirements, ensuring that you aren't just secure, but also compliant.
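
As one hedged example of automated generation, the sketch below builds a minimal CycloneDX-style JSON document from the Python packages installed in the current environment, using the standard library's `importlib.metadata`. It records only a name, version, and Package URL for each component; real SBOM generators also capture hashes, licenses, and the dependency graph, so treat this as an outline of the idea rather than a compliant tool.

```python
import json
from importlib.metadata import distributions
from typing import Iterable, Iterator, Tuple


def purl_for_pypi(name: str, version: str) -> str:
    # Package URL for a PyPI package: name lowercased, "_" replaced by "-"
    return f"pkg:pypi/{name.lower().replace('_', '-')}@{version}"


def build_bom(packages: Iterable[Tuple[str, str]]) -> dict:
    """Build a minimal CycloneDX-style BOM from (name, version) pairs."""
    components = [
        {
            "type": "library",
            "name": name,
            "version": version,
            "purl": purl_for_pypi(name, version),
        }
        for name, version in sorted(set(packages))  # dedupe, stable order
    ]
    return {
        "bomFormat": "CycloneDX",
        "specVersion": "1.5",
        "version": 1,
        "components": components,
    }


def installed_packages() -> Iterator[Tuple[str, str]]:
    """Yield (name, version) for every distribution visible to this interpreter."""
    for dist in distributions():
        name = dist.metadata["Name"]
        if name:  # skip broken installs with no Name metadata
            yield name, dist.version


if __name__ == "__main__":
    print(json.dumps(build_bom(installed_packages()), indent=2))
```

Running a script like this in CI on every build, rather than by hand, is what keeps the inventory current as dependencies change.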

CISOs can marry security and business success

While businesses aim for different outcomes, one goal that the business typically prescribes for cybersecurity is business continuity. This is probably because most executives view cybersecurity only as an operational necessity. At the same time, they fail to see cybersecurity’s essential contribution to the due diligence aspect of the procurement process. Procurement processes have grown more complex and lengthy over the years, as prospective clients use them as part of their third-party risk management. Executives who are aware of clients’ needs can use them to improve the cybersecurity of the organization and its offerings, by translating them into features that strengthen the offering’s competitive advantage.

Traditionally, R&D and innovation teams perceive the CISO’s role as an obstacle to innovation and advancement. Conventional security entities frequently resort to phrases like “this can’t be done due to security protocols,” obstructing changes to existing infrastructure and impeding innovation. If security is confined to an IT concern rather than recognized as a business imperative, CISOs struggle to emerge as strategic partners.

Advanced Applications of Open-Source Technologies

The Evolution of Open-Source Culture

The widespread adoption of open-source technologies is attributed to the culture and philosophy underpinning the open-source movement. Early pioneers in the open-source community championed the transformative power of collaborative, community-driven efforts and unrestricted access to software source code. For young developers exploring careers, open source presents exciting opportunities. Contributing to open-source projects enables developers to hone their skills, gain visibility, and receive mentorship from experienced professionals. ...

As Brazil’s Amazonia-1 satellite program demonstrates, Julia is instrumental in in-orbit sensor calibration, showcasing its adaptability beyond conventional software development. NASA, a leader in space exploration, also uses Julia for various purposes, including gaining insights into the intricacies of Earth’s oceans. This strategic adoption of open-source technology highlights its pivotal role as more than just a developer’s tool: it is a crucial enabler for tackling real-world challenges on a global scale.

Generative AI is a developer's delight. Now, let's find some other use cases

"We aren't surprised that the most common application of generative AI is in programming, using tools like GitHub Copilot or ChatGPT," Mike Loukides, author of the O'Reilly report, writes. "However, we are surprised at the level of adoption." There is also evidence of a healthy tools ecosystem that has already sprung up around generative AI, the report indicates. ...

"Automating the process of building complex prompts has become common, with patterns like retrieval-augmented generation (RAG) and tools like LangChain. And there are tools for archiving and indexing prompts for reuse, vector databases for retrieving documents that an AI can use to answer a question, and much more. We're already moving into the second generation of tooling." ... "Programmers have always developed tools that would help them do their jobs, from test frameworks to source control to integrated development environments. Programmers will do what's necessary to get the job done, and managers will be blissfully unaware as long as their teams are more productive and goals are being met."
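
To illustrate the retrieval step behind RAG, the sketch below ranks documents against a question and places the best matches into a prompt. As a deliberate simplification, it uses word-count vectors and cosine similarity in place of a learned embedding model and a vector database, and it does not use LangChain or any LLM API; `retrieve` and `build_prompt` are hypothetical names.

```python
import math
import re
from collections import Counter
from typing import List


def embed(text: str) -> Counter:
    # Stand-in "embedding": lowercase word counts. Real RAG pipelines use a
    # learned embedding model and keep the vectors in a vector database.
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(count * b[word] for word, count in a.items())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, docs: List[str], k: int = 2) -> List[str]:
    """Return the k documents most similar to the query."""
    q = embed(query)
    return sorted(docs, key=lambda d: cosine(q, embed(d)), reverse=True)[:k]


def build_prompt(query: str, docs: List[str], k: int = 2) -> str:
    """Augment the question with retrieved context before sending it to a model."""
    context = "\n".join(f"- {d}" for d in retrieve(query, docs, k))
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"


if __name__ == "__main__":
    docs = [
        "The VPN requires multi-factor authentication.",
        "Expense reports are due at the end of each month.",
        "Reset your VPN password from the self-service portal.",
    ]
    print(build_prompt("How do I reset my VPN password?", docs, k=1))
```

The model itself never searches the documents; the prompt it receives already contains the retrieved passages, which is the "augmented" part of retrieval-augmented generation.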

In the symphony of enterprise, every business today dances to the silent tune of technology

Not all AI applications have had a positive impact: content writing and the media industry are the worst hit. It was widely believed that creative industries would be the last to be affected by technologies like AI; however, the reality on the ground is very different. One of the demands of the striking Writers Guild of America was that AI would not encroach on writers’ credits and compensation. No matter the core product, all functions of an organization now use technology in some form: planning, organizing, analysis, marketing, sales, customer engagement, and service. Technology has always been a catalyst for progress, often propelling non-tech companies into new realms of efficiency and cost-effectiveness. From the steam engines of the industrial revolution to the computers of the digital age, companies outside the technology sector have harnessed innovation to transform their operations. Today, the conductor of this transformative orchestra is artificial intelligence (AI) and its darling subset, generative AI.

5 pillars of a cloud-conscious culture

“A developer shouldn’t just provision an extra-large server and then leave it running,” says Firment. “Coders have to learn to work in a cloud native way. That requires understanding terms like elasticity, scalability, and resiliency. They need to know what we mean by multiple availability zones. Developers can still leverage their skills in the cloud, but they just have to apply them in the new way.”

Building a culture is like building a tribe, and certificates are a good marker of the new tribe. They create a sense of belonging. Rituals are equally important. “As individuals get certified, create a cloud of fame,” says Firment. “That’s a great way to say you value people who develop the skills. And it’s an artifact of the new culture.” Celebrating certification is also highly effective. “Establish a weekly or monthly cloud hour, where people share what they’re learning on the way to getting certified,” he says. “Ultimately, they should share how they’re applying the knowledge and customer success stories. Storytelling is a big part of creating a culture.”

The SSO tax is killing trust in the security industry

Before some of these solutions are adopted, there are steps we can all take. If you are responsible for identity and access management at an organization, have you audited the authentication tokens you rely on to ensure they operate as expected? Have you considered what compensating controls you could put in place? Are there security products that can do that auditing for you or otherwise mitigate this risk in your environment? Do the security questionnaires your company sends to potential SaaS application providers ask how they configure authentication tokens?

It is going to take a serious collaborative "security by design" effort between SSO providers, application developers, and browser companies to repair the broken SSO environment we currently operate under. We single out application providers for criticism in this article because they so often charge an upgrade fee to integrate with SSO. If they are going to charge us a tax, they need to step up or share in the blame for the compromises that will continue to happen.

Quote for the day:

"Success consists of getting up just one more time than you fall." -- Oliver Goldsmith
