A CDO Call To Action: Stop Hoarding Data—Save The Planet

Many of the implications of rampant data hoarding are reasonably well known, including the compliance and privacy risks of storing petabytes of data you know absolutely nothing about. For many companies, "don’t ask, don’t tell" seems to be the approach to compliant management of their dark data; at the very least, ignoring dark data is a business risk many compliance officers seem willing to take. Other implications, such as the cost of storing dark data or the potential value that could be unlocked by operationalizing it, are also often discussed. For many CDOs, the motivation to store troves of data that may never get used is a form of FOMO: the fear of being unable to support a future request for new analytical insights outweighs the cost of data storage. In these situations, the unwillingness of many CDOs to apply methods for measuring the business value of data is a primary enabler of data hoarding. The idea that "we might need it someday" is then enough to drive millions in annual revenue for cloud service providers.
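
One way to begin measuring dark data is to inventory what nobody has touched in a while. The Python sketch below is a hypothetical illustration, not a method from the article. It walks a directory tree and counts regular files whose last access and last modification are both older than a chosen window. It then multiplies their total size by a placeholder storage rate. The function name, the idle window, and `cost_per_gb_month` are all assumptions, and no real provider's pricing is implied. Cloud object stores would need access logs or storage analytics rather than a file walk.

```python
import os
import stat
import time
from dataclasses import dataclass
from typing import Optional

SECONDS_PER_DAY = 86_400


@dataclass
class StaleDataReport:
    stale_files: int
    stale_bytes: int
    est_monthly_cost: float


def find_stale_data(root: str, max_idle_days: float,
                    cost_per_gb_month: float,
                    now: Optional[float] = None) -> StaleDataReport:
    """Sum regular files under `root` not accessed or modified within `max_idle_days`.

    Hypothetical helper: `cost_per_gb_month` is a placeholder rate, not real pricing.
    """
    now = time.time() if now is None else now
    cutoff = now - max_idle_days * SECONDS_PER_DAY
    count = 0
    total = 0
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            try:
                st = os.stat(os.path.join(dirpath, name), follow_symlinks=False)
            except OSError:
                continue  # file vanished or is unreadable; skip it
            if not stat.S_ISREG(st.st_mode):
                continue  # skip symlinks, sockets, and device files
            # atime can lag behind real reads (e.g. noatime or relatime
            # mounts), so take the later of access and modification times.
            last_used = max(st.st_atime, st.st_mtime)
            if last_used < cutoff:
                count += 1
                total += st.st_size
    # Decimal gigabytes (1e9 bytes) times the hypothetical per-GB-month rate.
    return StaleDataReport(count, total, total / 1e9 * cost_per_gb_month)
```

Calling `find_stale_data(path, 365, rate)` on a hypothetical archive would show how much of it has sat idle for a year. Idleness is only a proxy, though. It says nothing about business value, which the data's owners still have to judge.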

Google, Microsoft, Amazon, Meta Pledge to Make AI Safer and More Secure

Meta said it welcomed the White House agreement. Earlier this week, the company launched the second generation of its AI large language model, Llama 2, making it free and open source. "As we develop new AI models, tech companies should be transparent about how their systems work and collaborate closely across industry, government, academia and civil society," said Nick Clegg, Meta's president of global affairs. The White House agreement will "create a foundation to help ensure the promise of AI stays ahead of its risks," Brad Smith, Microsoft vice chair and president, said in a blog post. Microsoft is a partner on Meta's Llama 2. Earlier this year, it also launched an AI-powered Bing search that makes use of ChatGPT, and it is bringing more and more AI tools to Microsoft 365 and its Edge browser. The agreement with the White House is part of OpenAI's "ongoing collaboration with governments, civil society organizations and others around the world to advance AI governance," said Anna Makanju, OpenAI vice president of global affairs.

UN Security Council discusses AI risks, need for ethical regulation

During the meeting, members stressed the need to establish an ethical, responsible framework for international AI governance. The UK and the US have already started to outline their positions on AI regulation. Meanwhile, at least one arrest has occurred in China this year after the Chinese government began enforcing new laws relating to the technology. Malta is the only current non-permanent council member that is also an EU member state and would therefore be governed by the bloc’s AI Act, the draft of which was confirmed in a vote last month. Although AI can bring huge benefits, it also poses threats to peace, security and global stability because of its potential for misuse and its unpredictability (two essential qualities of AI systems), Clark said in comments published by the council after the meeting. “We cannot leave the development of artificial intelligence solely to private-sector actors,” he said. He added that without investment and regulation from governments, the international community runs the risk of handing over the future to a narrow set of private-sector players.

How to Choose Carbon Credits That Actually Cut Emissions

Risk is the biggest driver in business, and with trillions of dollars in annual climate-related costs and damage, the climate crisis is fast becoming a business crisis. Corporations must act now to minimize losses, demonstrate meaningful climate action to shareholders and comply with fast-approaching climate regulations. Carbon credits are an important approach to scaling climate action globally and a fast-growing strategy for delivering on corporate ESG goals. These offsets are part of nearly every scenario that keeps global warming to 1.5 degrees Celsius, yet legacy carbon markets lack broad public trust. Impactful carbon solutions require clear guidelines and proven, verifiable data. ... This is an all-hands-on-deck moment. We must engage proven, reliable, and equitable methods to meet what may be the greatest threat to the future of humanity and the planet we inhabit. Carbon credits, when implemented responsibly and at scale, can be a very effective tool for limiting the damage from climate change.

BGP Software Vulnerabilities Overlooked in Networking Infrastructure

At the heart of the vulnerabilities was message parsing. Typically, one would expect a protocol implementation to check that a sender is authorized to send a message before processing it. FRRouting did the reverse, parsing before verifying. An attacker who spoofed or otherwise compromised a trusted BGP peer's IP address could therefore have carried out a denial-of-service (DoS) attack, sending malformed packets to render the victim unresponsive indefinitely. ... "Originally, BGP was only used for large-scale routing: Internet service providers, Internet exchange points, things like that," dos Santos says. "But especially in the last decade, with the massive growth of data centers, BGP is also being used by organizations to do their own internal routing, simply because of the scale that has been reached." Organizations use it, for example, to coordinate VPNs across multiple sites or data centers. More than 317,000 Internet hosts have BGP enabled, with the largest concentrations in China (around 92,000) and the US (around 57,000). Just under 2,000 run FRRouting (though not necessarily all with BGP enabled), and only around 630 respond to malformed BGP OPEN messages.
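
The verify-before-parse ordering can be illustrated with a minimal Python sketch. It is not FRRouting's code: the function names, the configured-peer set, and the error handling are hypothetical. It assumes the standard BGP-4 wire format from RFC 4271. That format has a 19-byte header made of a 16-byte all-ones marker, a 2-byte length, and a 1-byte type, where type 1 is OPEN. The sketch checks that the sender is a configured neighbor before touching the payload. Only then does it parse, validating each length field before relying on it.

```python
import ipaddress
import struct
from typing import Optional

# BGP-4 wire-format constants (RFC 4271).
BGP_MARKER = b"\xff" * 16
HEADER_LEN = 19          # 16-byte marker + 2-byte length + 1-byte type
MAX_MSG_LEN = 4096
MSG_OPEN = 1
OPEN_MIN_LEN = 29        # header + fixed OPEN fields


class BGPError(Exception):
    """Raised when a message from a configured peer is malformed."""


def parse_header(data: bytes) -> int:
    """Validate the common header and return the message type."""
    if len(data) < HEADER_LEN:
        raise BGPError("truncated header")
    if data[:16] != BGP_MARKER:
        raise BGPError("bad marker")
    length, msg_type = struct.unpack("!HB", data[16:HEADER_LEN])
    if not HEADER_LEN <= length <= MAX_MSG_LEN or length != len(data):
        raise BGPError("bad length field")
    return msg_type


def parse_open(data: bytes) -> dict:
    """Parse the fixed OPEN fields, checking lengths before reading."""
    if len(data) < OPEN_MIN_LEN:
        raise BGPError("OPEN too short")
    version, my_as, hold_time, bgp_id, opt_len = struct.unpack(
        "!BHHIB", data[HEADER_LEN:OPEN_MIN_LEN]
    )
    if OPEN_MIN_LEN + opt_len != len(data):
        raise BGPError("optional-parameter length mismatch")
    return {
        "version": version,
        "my_as": my_as,
        "hold_time": hold_time,
        "bgp_id": str(ipaddress.IPv4Address(bgp_id)),
    }


def handle_message(peer_ip: str, data: bytes,
                   configured_peers: set) -> Optional[dict]:
    # Verify first: bytes from an unknown sender are dropped unparsed.
    if peer_ip not in configured_peers:
        return None
    # Only now parse; parse_header rejects inconsistent length fields.
    msg_type = parse_header(data)
    if msg_type == MSG_OPEN:
        return parse_open(data)
    return {"type": msg_type}
```

A source-address check alone does not stop the spoofing scenario the article describes. Real deployments also rely on the established TCP session with a configured neighbor and, where available, on TCP MD5 or TCP-AO authentication. The sketch shows only the ordering. Bytes from an unknown sender are never parsed, and malformed bytes from a known sender raise an error instead of being processed further.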

How IT leaders are driving new revenue

CIOs who are driving new revenue are:

- Delivering technologies designed to meet specific business outcomes. For example, Narayaran has seen CIOs focus their teams on creating applications designed not merely for high availability and reliability but for hitting very specific business goals, such as enabling on-time deliveries to customers.
- Unlocking data’s potential. Narayaran says he has also seen CIOs make big plays with their data programs. They invest in the technology infrastructure needed to bring together and analyze data sets, creating new services or products and driving business objectives such as improved customer retention and customer stickiness.
- Co-creating with their business unit colleagues. Notably, Narayaran says, CIOs are approaching their business unit colleagues with such proposals. “CIOs [are saying], ‘Here’s an opportunity. We have this data, and we can make this data do this for you,’ and they then bring that to life. And if they say, ‘This is what we have and this is what we can do,’ then the business, too, can come up with new ideas.”

Data centers grapple with staffing shortages, pressure to reduce energy use

Attracting and retaining new talent, a consistent issue for the past decade, will continue to challenge data center leaders, according to Uptime. About two-thirds of survey respondents said they have “problems recruiting or retaining staff,” but the trend appears to be stabilizing, as the figure hasn’t increased from last year. According to the report, more than one third (35%) of respondents say their staff is being hired away, more than double the 2018 figure of 17%. Many also believe operators are poaching from within the sector: 22% of respondents reported losing staff to their competitors. Staffing challenges are greatest among operations management staff, those specializing in mechanical and electrical trades, and junior-level staff. “It's been challenging the data center industry for about a decade. It has been escalating in recent years. Our survey data this year suggests that it may, at least this year, not be getting worse, maybe stabilizing. And poaching is a problem of people who do get qualified applicants into jobs: they do find them hired away,” said Jacqueline Davis, research analyst at Uptime Institute.

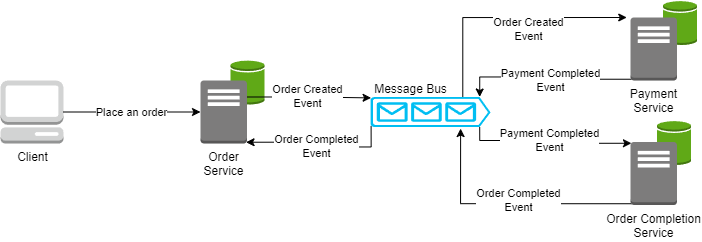

Why API attacks are increasing and how to avoid them

First, exposing APIs to network requests significantly increases the attack surface, says Johannes Ullrich, dean of research at the SANS Technology Institute. “An attack no longer needs access to the local system but can attack the API remotely,” he says. Even worse, APIs are designed to be easy to find and use, Ullrich says. They’re “self-documenting” and are typically based on common standards. That makes them convenient for developers, but also prime targets for hackers. Since APIs are designed to help applications talk to one another, they often have access to core company data, such as financial information or transaction records. It’s not only the data itself that’s at risk. The API documentation can also give outsiders insight into business logic, says Ullrich. “This insight may make finding weaknesses in the business process easier.” Then there’s the quantity issue. Companies deploying cloud-based applications no longer deploy a single monolithic application with a single access point in and out.

Journey to Quantum Supremacy: First Steps Toward Realizing Mechanical Qubits

Research and development in this field is growing at an astonishing pace as competing platforms race to outrun one another. Platforms as diverse as superconducting Josephson junctions, trapped ions, topological qubits, ultracold neutral atoms, and even diamond vacancies make up the zoo of possible ways to make qubits. So far, only a handful of qubit platforms have demonstrated potential for quantum computing by ticking every box on the checklist. That checklist is high-fidelity controlled gates, easy qubit-qubit coupling, and good isolation from the environment, which means sufficiently long-lived coherence. ... A mechanical qubit can be realized if the quantized energy levels of a resonator are not evenly spaced. The challenge is to keep the nonlinear effects large enough in the quantum regime, where the oscillator’s zero-point displacement is minuscule. If this is achieved, the system can be used as a qubit by manipulating it between the two lowest quantum levels without driving it into higher energy states.
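
Why uneven spacing matters, and why a minuscule zero-point displacement makes it hard, can be seen in a textbook calculation. The article does not say which nonlinearity is used. The derivation below assumes a quartic (Duffing-type) term and first-order perturbation theory, and the symbols m, β, and x_zpf are introduced here for illustration only.

```latex
% Harmonic resonator: evenly spaced levels, so a drive at \omega
% excites every transition n -> n+1 equally well.
E_n^{(0)} = \hbar\omega\left(n + \tfrac{1}{2}\right), \qquad
E_{n+1}^{(0)} - E_n^{(0)} = \hbar\omega \quad \text{for all } n.

% Assumed quartic nonlinearity, with x written via ladder operators:
H = \frac{p^2}{2m} + \frac{1}{2} m\omega^2 x^2 + \frac{\beta}{4} x^4,
\qquad x = x_{\mathrm{zpf}}\,(a + a^\dagger), \qquad
x_{\mathrm{zpf}} = \sqrt{\frac{\hbar}{2m\omega}}.

% First-order shift, using \langle n|(a+a^\dagger)^4|n\rangle = 6n^2 + 6n + 3:
\Delta E_n = \frac{\beta}{4}\, x_{\mathrm{zpf}}^4 \left(6n^2 + 6n + 3\right).

% The 0->1 and 1->2 transitions now differ by the anharmonicity \hbar K:
\hbar K = (E_2 - E_1) - (E_1 - E_0) = 3\beta\, x_{\mathrm{zpf}}^4
        = 3\beta \left(\frac{\hbar}{2m\omega}\right)^2.
```

Because ħK scales as the fourth power of x_zpf, a tiny zero-point displacement leaves only a tiny splitting between the 0→1 and 1→2 transitions. Roughly speaking, the resonator behaves as a qubit only when ħK is large compared with the drive's spectral width and the resonator's linewidth. The result also holds only while the quartic term remains a small correction.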

ChatGPT Amplifies IoT, Edge Security Threats

ChatGPT and its ilk are rapidly being integrated into, or embedded in, commercial and consumer IoT devices of all types. Many imagine AI models to be the most sophisticated security threat to date, but most of what is imagined is indeed imaginary. “Now, if an actual AI emerges, be very worried if the kill switch is very far away from humans,” says Jayendra Pathak, chief scientist at SecureIQLab. He, like others in security and AI, agrees that the chances of an actual general artificial intelligence developing any time soon are still very low. But as for the latest AI sensation, ChatGPT, that’s another kind of scare. “ChatGPT poses [insider] threats (similar to the way rogue or ‘all-knowing employees’ pose) to IoT. Some of the consumer IoT vulnerabilities pose the same risk as a microcontroller or microprocessor does,” Pathak says. In essence, ChatGPT’s potential threats spring from its training to be helpful and useful. Such a rosy prime directive can be very harmful, however.

Quote for the day:

"No man can stand on top because he is put there." -- H. H. Vreeland