How the European Energy Crisis Could Impact IT

Enterprises with internal, inefficient data centers will be the organizations most affected by the power crisis. “Enterprises that have already sourced or have moved to the cloud will be less impacted, although they will not escape some cost challenges,” Hall says. “Energy costs are going up across the board, so you can expect those costs to be passed on to customers through existing agreements.” With energy becoming increasingly scarce and expensive, many European enterprises are turning to hyper-scalers, an agile method of processing data via remote data centers that are equipped with horizontally linked servers. Hall forecasts a bigger push to cloud computing in the months ahead, especially toward major hyper-scaler providers -- including Amazon AWS, Microsoft Azure, Google GCP, Alibaba Cloud, IBM, and Oracle -- which tend to offer both lower costs and reduced carbon emissions. “Given the complexity of transitioning workloads, though, we are concerned clients will pull back on technology spend for lower-priority activities,” he says.

A “Green” Quantum Sensor

Zhu and colleagues take a different approach by developing a quantum sensor that generates its own power from a renewable energy source, in this case solar energy. The team’s sensor is made from an ensemble of nitrogen-vacancy (NV) centers in diamond, a well-established solid-state quantum-sensing platform that can operate over a wide range of temperatures (0–600 K), pressures (up to 40 GPa), and magnetic fields (0–12 T). NV centers are defects that are typically created by implanting nitrogen ions into a diamond lattice. The centers confine charge carriers (such as electrons or holes), creating a localized electronic state. Users can read out the spin of this state by exciting the defect with a laser. The NV center then emits radiation, via fluorescence, whose intensity correlates with the system’s spin. Researchers typically use a green laser for this excitation, as that color of light produces the strongest fluorescence in the system (the emitted radiation is red). NV centers are ideal for quantum applications because they operate at room temperature, so no cooling apparatus is required.

How to Achieve API Governance

To scale your developer (i.e., user) experience, you need to look at the bigger picture, the entire API landscape, and not just a single API. Not only will you have different consumers accessing one API, but they will mix and match different APIs to build their own experiences. These experiences will be built by the designers on the consumer side; they will combine the APIs in a way that makes sense for their users. The main question to ask here is “How do you plan for such a scenario?” This is where API governance and scaling come into play, because now we are looking not only at the best way to manage that one API but also at the best way to manage the entire API landscape, so that we can scale those experiences much better. The more we design and optimize the experience for the entire API landscape, the more we can create a better UX that translates to more value generated. One crucial thing to keep in mind is that, in the end, even though we are striving to get the best UX, that’s not all that matters.

7 critical steps to defend the healthcare sector against cyber threats

Internet of Things (IoT)-enabled equipment has been hugely beneficial in enabling healthcare providers to automate and facilitate remote working. But if not properly monitored and patched, these connected devices can also provide threat actors with an easy attack path. Hospitals are likely to have hundreds of devices deployed across their facilities, so keeping them all updated and patched can be an extremely resource-heavy task. Many health providers also struggle to accommodate the required downtime to update vital equipment. Automating device discovery and update processes will make it easier to keep devices secured. Providers should also vet future purchases to ensure they have key security functionality and are accessible for maintenance and updates. Healthcare providers sit in the center of extremely large and complex supply networks. Suppliers for medical materials, consultants, hardware, and facilities maintenance are just a few examples, alongside a growing number of digital services.

Gartner reveals top strategic tech trends for CIOs to watch in 2023

Observable data reflects the digitised artifacts (such as logs, traces, API calls, downloads and file transfers) that appear when any stakeholder takes any kind of action. Applied observability feeds these observable artifacts back to users in a highly orchestrated, integrated approach to accelerate organisational decision-making. ... With AI-related privacy breaches and security incidents becoming more frequent, organisations will need to implement new capabilities to ensure model reliability, trustworthiness, security and data protection. AI trust, risk and security management (TRiSM) requires participants from different business units to work together to implement new measures. ... Digital immune systems implement data-driven insight into operations (including automated and extreme testing and software engineering) to increase the resilience and stability of systems. This emerging capability can help provide a roadmap that CIOs can use to plan out new practices and approaches that their teams can adopt to deliver higher business value, while mitigating risk and increasing customer satisfaction.

Digital transformation: 4 paths to becoming future-ready

Modernizing and transforming while recognizing that many legacy systems serve critical business needs that can’t be seriously disrupted isn’t a new tension. It was a common enough theme in 2015 for terms like bi-modal IT (coined by Gartner), 2nd/3rd platform (IDC), and fast/slow IT to be in common circulation. The basic idea was that you might want to modernize (or not) traditional IT while freeing new cloud and container technologies from having to deal with legacy entanglements. A similar approach to digital transformation, with similar pluses and minuses, is in play with this pathway. The authors write that the motivation for this approach is when senior leaders “believe transforming their current firm will take too long and will require a very different culture, skills, and systems than exist today.” They point to similar organizational hurdles that bimodal IT critics pointed to: The cool new organization gets all the attention and focus while the traditional organization slowly trudges along.

How to turbocharge collaboration in innovation ecosystems

Fail fast, learn fast, succeed faster. These precepts, which emanated from Silicon Valley tech labs, have taken the world of open innovation by storm. Executives who are eager to get to the bottom line of evaluating an innovative idea, product, or technology will likely be told, “Wait for the retrospective!” Even in the most traditional, non-digital-native corporations, innovative collaborations have borrowed heavily from methods such as agile technology and lean startup. From ideating and producing a proof of concept, innovation partners will typically work in sprints, testing and prototyping until they have come up with a minimum viable product. In the meantime, successful pilots may lead to other pilots and create spillover effects. Few best practices or approved scripts are available to follow. Instead, it is crucial to test and experiment with different ways of finding solutions to a problem. Although the search for solutions is typically driven by customer needs, optimizing the internal process can also produce results.

Guilty verdict in the Uber breach case makes personal liability real for CISOs

Going forward, CSOs and CISOs may be at odds with their senior and peer groups of executives when a strategic decision is made that places the company at risk, even a mitigated risk. As every CSO/CISO knows, there is no such thing as 100% secure. Has this verdict opened a door for victims of a corporate data breach to go after not only the company to which they had entrusted their information, but also the executives who shoulder that responsibility? Whether this is a welcome turn of events or a shock to the system will play out in the coming months as legal teams of companies that hold personal data evaluate their positions in the light of this verdict. Another question that must be discussed in corporate C-suites is just how far down the executive chain of responsibility corporate liability insurance coverage should extend, and what guidance human resources and legal are giving executives about personal liability and their need to obtain personal liability insurance.

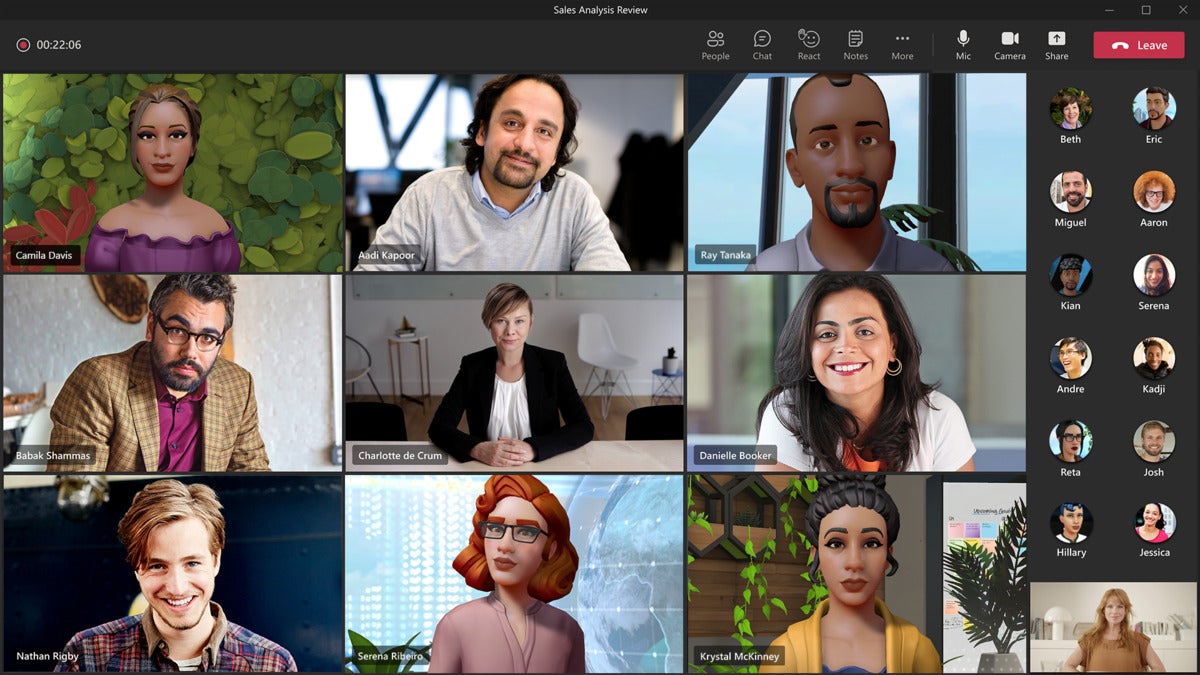

How to Tackle Cyberthreats in the Metaverse

“This is not a new issue to the metaverse, as people have dealt with conversational integrity since the dawn of social interaction on the internet,” he says. However, with more and more social and workplace interaction taking place in places known as a metaverse, there is a new level of awareness required to ensure you are actually speaking with the individual you think you are speaking with. John Bambenek, principal threat hunter at Netenrich, a security and operations analytics SaaS company, agrees, noting that almost all cybersecurity threats start with or are furthered by deception of an individual. “Ultimately, I think most crime on the metaverse will surround deception towards individuals,” he says. “Romance scams entail huge financial losses but are almost completely disregarded when companies consider cybersecurity risks.” He explains that for most social media companies, ensuring that individuals truly exist (i.e., are not bots) and are authentic (i.e., are not scammers running 20 accounts) will remain a problem.

New Data Leaks Add to Australia's Data Security Reckoning

In what may be a world first, the Australian government also pressed Optus to reimburse people for fees incurred related to replacing their passports and driver's licenses. For passports, those eligible must pay for the replacement upfront and then apply for reimbursement from Optus. Optus will apply a credit to customers' bills to cover the cost of replacement driver's licenses, depending on the state or territory. Some states and territories are initially waiving the cost of replacement due to the breach. The government's pressure on Optus to reimburse those affected by the breach is striking and could send a message of increasing intolerance for data breaches and a desire to increase the immediate costs for those responsible for breaches. Consumers often wait years to see any compensation from class action lawsuits as a result of a breach.

Quote for the day:

"Problem-solving leaders have one
thing in common: a faith that there's always a better way." -- Gerald M. Weinberg