Ransomware Attacks Are Evolving. Your Security Strategy Should, Too

Modern ransomware attacks typically include various tactics like social

engineering, email phishing, malicious email links and exploiting

vulnerabilities in unpatched software to infiltrate environments and deploy

malware. What that means is that there are no days off from maintaining good

cyber-hygiene. But there’s another challenge: As an organization’s defense

strategies against common threats and attack methods improve, bad actors will

adjust their approach to find new points of vulnerability. Thus, threat

detection and response require real-time monitoring of various channels and

networks, which can feel like a never-ending game of whack-a-mole. So how can

organizations ensure they stay one step ahead, if they don’t know where the next

attack will target? The only practical approach is for organizations to

implement a layered security strategy that includes a balance between

prevention, threat detection and remediation – starting with a zero-trust

security strategy. Initiating zero-trust security requires both an operational

framework and a set of key technologies designed for modern enterprises to

better secure digital assets.

Stateful Applications in Kubernetes: It Pays to Plan Ahead

Maybe you want to go with a pure cloud solution, like Google Kubernetes Engine

(GKE), Amazon Elastic Kubernetes Service (EKS) or Azure Kubernetes Service

(AKS). Or perhaps you want to use your on-premises data center for solutions

like Red Hat’s OpenShift or Rancher. You’ll need to evaluate all the different

components required to get your cluster up and running. For instance, you’ll

likely have a preferred container network interface (CNI) plugin that meets your

project’s requirements and drives your cluster’s networking. Once your clusters

are operational and you’ve completed the development phase, you’ll begin testing

your application. But now, your platform team is struggling to maintain your

stateful application’s availability and reliability. As part of your stateful

application, you’ve been using a database like Cassandra, MongoDB or MySQL.

Every time a container is restarted, you begin to see errors in your database.

You can prevent these errors with some manual intervention, but then you’re

missing out on the native automation capabilities of Kubernetes.

Understanding Kubernetes Compliance and Security Frameworks

Compliance has become crucial for ensuring business continuity, preventing

reputational damage and establishing the risk level for each application.

Compliance frameworks aim to address security and privacy concerns through

easy monitoring of controls, team-level accountability and vulnerability

assessment—all of which present unique challenges in a K8s environment. To

fully secure Kubernetes, a multi-pronged approach is needed: Clean code, full

observability, preventing the exchange of information with untrusted services

and digital signatures. One must also consider network, supply chain and CI/CD

pipeline security, resource protection, architecture best practices, secrets

management and protection, vulnerability scanning and container runtime

protection. A compliance framework can help you systematically manage all this

complexity. ... The Threat Matrix for Kubernetes, developed from the widely

recognized MITRE ATT&CK (Adversarial Tactics, Techniques & Common

Knowledge) Matrix, takes a different approach based on today’s leading

cyberthreats and hacking techniques.

Authentication in Serverless Apps—What Are the Options?

In serverless applications, there are many components interacting—not only end

users and applications but also cloud vendors and applications. This is why

common authentication methods, such as single-factor, two-factor and

multifactor authentication, offer only a bare minimum foundation. Serverless

authentication requires a zero-trust mentality: no connection should be

trusted, and even communication between internal components of an application

should be authenticated and validated. To properly secure serverless

authentication, you also need to use authentication and authorization

protocols, configure secure intraservice permissions and monitor and control

incoming and outgoing access. ... A network is made accessible through a SaaS

offering to external users. Access will be restricted, and every user will

require the official credentials to achieve that access. However, this brings

up the same problems raised above—the secrets must be stored somewhere. You

cannot manage how your users access and store the credentials that you provide

them with; therefore, you should assume that their credentials are not being

kept securely and that they may be compromised at any point.

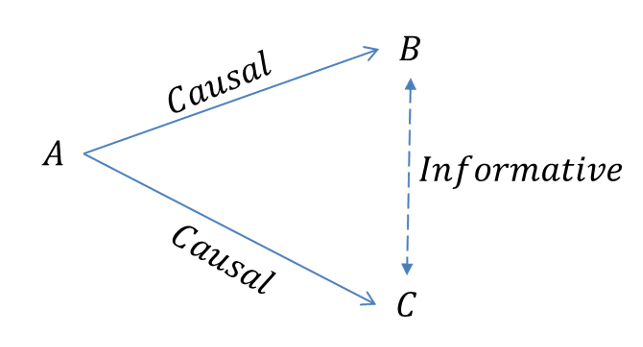

The economics behind adopting blockchain

If we take the insurance sector as a use case, we can see how blockchain

mitigates various issues around information asymmetries. One fundamental

concern in the insurance sector is the principal-agent problem, which stems

from conflicting incentives amidst information asymmetry between the principal

(the insurance company) and the agent (of the company). Some adverse outcomes of

this include unprofessional conduct, agents forging documents to meet assigned

targets and misrepresentation of compliance requirements, often leading to

mis-selling of insurance products. These problems occur primarily due to the

absence of an integrated mechanism to track and prevent fraudulent conduct of

the agent. In such a scenario, blockchain has the ability to bridge the gaps

and enhance the customer experience by providing a distributed,

immutable and transparent rating system that allows agents to be rated

according to their performance by companies as well as clients.

Techstinction - How Technology Use is Having a Severe Impact on our Climate

Like most large organisations, the Financial Services Industry is generally conscious of the impact it is having on the environment. All three of these banks are taking serious measures to reduce their CO2 emissions and to change the behaviours of their staff. The NatWest Group (which owns RBS), for example, recently published a working-from-home guide for its employees containing tips on how to save energy. Whilst this and all sustainability measures should be applauded, it’s important to acknowledge that "sustainability in our workplace" is very different from, and less important than, "sustainability in our work", simply because there is more to be gained by optimising what we are doing as opposed to where we do it, both financially and for the environment. Sustainability in our work involves being lean in everything we do, including the hardware infrastructure being used, being completely digital in the services provided, and how we produce software to deliver these services. All the major cloud providers invest heavily in providing energy-efficient infrastructure as well as using renewable energy sources.

How machine learning speeds up Power BI reports

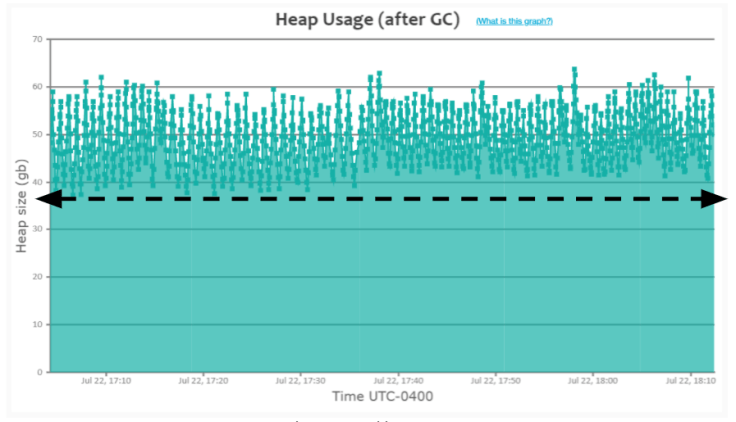

Creating aggregations you don't end up using is a waste of time and money.

"Creating thousands, tens of thousands, hundreds of thousands of aggregations

will take hours to process, use huge amounts of CPU time that you're paying

for as part of your licence and be very uneconomic to maintain," Netz warned.

To help with that, Microsoft turned to some rather vintage database technology

dating back to when SQL Server Analysis Service relied on multidimensional

cubes, before the switch to in-memory columnar stores. Netz originally joined

Microsoft when it acquired his company for its clever techniques around

creating collections of data aggregations. "The whole multidimensional world

was based on aggregates of data," he said. "We had this very smart way to

accelerate queries by creating a collection of aggregates. If you know what

the user queries are, [you can] find the best collection of aggregates that

will be efficient, so that you don't need to create surplus aggregates that

nobody's going to use or that are not needed because some other aggregates can

answer [the query]."
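The idea Netz describes, picking a small collection of aggregates from known user queries, can be sketched as a greedy selection. This is a simplified illustration and not Microsoft's actual algorithm. It assumes each query is just a set of group-by columns, that an aggregate can answer any query whose columns are a subset of its own, and it ignores the storage and processing cost of wider aggregates. The function name `choose_aggregates` is hypothetical.

```python
from collections import Counter


def choose_aggregates(query_log, max_aggregates):
    """Greedily pick column sets that answer the most logged queries.

    query_log: iterable of column-name tuples, one per observed query.
    Returns a list of frozensets, each a chosen aggregate's columns.
    """
    demand = Counter(frozenset(q) for q in query_log)
    candidates = set(demand)   # only consider column sets users actually asked for
    uncovered = dict(demand)   # queries no chosen aggregate can answer yet
    chosen = []

    while uncovered and len(chosen) < max_aggregates:
        def gain(candidate):
            # Number of logged queries this candidate would newly answer.
            return sum(n for cols, n in uncovered.items() if cols <= candidate)

        # Prefer the highest gain, then narrower aggregates, then a stable order.
        best = max(candidates, key=lambda c: (gain(c), -len(c), sorted(c)))
        chosen.append(best)
        candidates.discard(best)
        uncovered = {cols: n for cols, n in uncovered.items() if not cols <= best}

    return chosen


log = [("region",), ("region", "year"), ("region", "year"), ("product",)]
picked = choose_aggregates(log, max_aggregates=2)
```

In this toy log, an aggregate on region and year also answers the region-only query, so a separate region-only aggregate would be surplus.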

How GitOps Benefits from Security-as-Code

The emergence of security-as-code signifies how the days of security teams

holding deployments up are waning. “Now we have security and app dev who are

now in this kind of weird struggle — or I think historically had been — but

bringing those two teams together and allowing flexibility, but not getting in

the way of development is really to me where the GitOps and DevSecOps emerge.

That’s kind of the big key for me,” Blake said. ... Developers today are

deploying applications in an often highly distributed microservices

environment. Security-as-code serves to both automate security for CI/CD with

GitOps while also ensuring security processes are taking interconnectivity

into account. “It’s sort of a realization that everything is so interconnected

— and you can have security problems that can cause operational problems. If

you think about code quality, one of your metrics for ‘this is good code’

doesn’t cause a security vulnerability,” Omier said. “So, I think a lot of

these terms really come from acknowledging that you can’t look at individual

pieces, when you’re thinking about how we are doing? ...”

The role of Artificial Intelligence in manufacturing

A few key use cases make AI adoption a particularly suitable launching pad

for manufacturers embarking on their cognitive computing journey:

intelligent maintenance, intelligent demand planning and

forecasting, and product quality control. The deployment of AI is a complex

process, as with many facets of digitisation, but it has not stopped companies

from moving forward. The ability to grow and sustain the AI initiative over

time, in a manner that generates increasing value for the enterprise, is

likely to be crucial to achieving early success milestones on an AI adoption

journey. Manufacturing companies are adopting AI and ML with such speed

because by using these cognitive computing technologies, organisations can

optimise their analytics capabilities, make better forecasts and decrease

inventory costs. Improved analytics capabilities enable companies to switch to

predictive maintenance, reducing maintenance costs and reducing downtime. The

use of AI allows manufacturers to predict when or if functional equipment will

fail so that maintenance and repairs can be scheduled in advance.

What the metaverse means for brand experiences

The metaverse is best described as a 3D World Wide Web or a digital facsimile

of the physical world. In this realm, users can move about, converse with

other users, make purchases, hold meetings, and engage in all manner of other

activities. In the metaverse, all seats at live performances are front and

center, sporting events are right behind home plate or center court, and of

course, all avatars remain young and beautiful — if that’s what you desire —

forever. As you might imagine, this is a marketer’s dream. Anheuser-Busch

InBev global head of technology and innovation Lindsey McInerney explained to

Built In recently that marketing is all about getting to where the people are,

and a fully immersive environment is ripe with all manner of possibilities,

from targeted marketing and advertising opportunities to fully virtualized

brand experiences. Already, companies like AB InBev are experimenting with

metaverse-type marketing opportunities, such as virtual horse racing featuring

branded NFTs.

Quote for the day:

"Making those around you feel invisible is the opposite of leadership." -- Margaret Heffernan