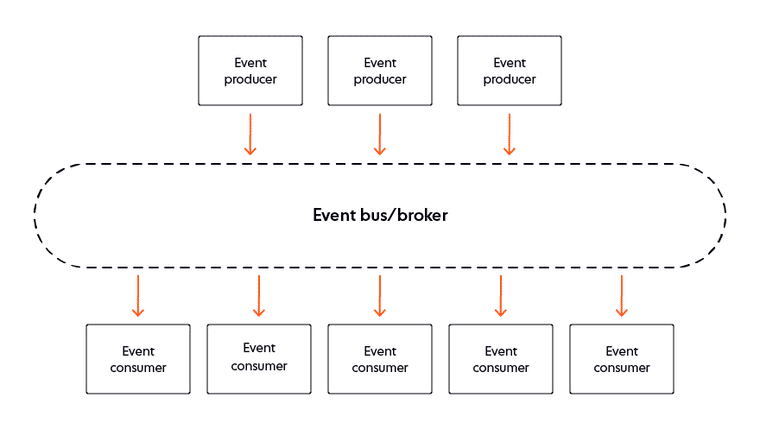

Why you should use a microservice architecture

Simply moving your application to a microservice-based architecture is not sufficient. You can have a microservice-based architecture and still have your development teams working on projects that span services, creating complex interactions between those teams. Bottom line: you can still be in the development muck even after moving to a microservice-based architecture. To avoid these problems, you must have a clean service ownership and responsibility model. Each and every service needs a single, clear, well-defined owner who is wholly responsible for it, and work needs to be managed and delegated at the service level. I suggest a model such as the Single Team Oriented Service Architecture (STOSA). This model, which I talk about in my book Architecting for Scale, provides the clarity that allows your application (and your development teams) to scale to match your business needs.

Microservice architectures do come at a cost. While individual services are easier to understand and manage, a microservices application as a whole has significantly more moving parts and becomes a more complex beast of its own.
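The core rule of a single-owner model can be checked mechanically. The sketch below is one minimal way to do that; it is not STOSA itself. It assumes ownership is recorded as a simple mapping from each team to the services it owns. The team names, service names and the `ownership_problems` helper are all hypothetical. The check flags services that nobody owns and services that more than one team claims.

```python
from collections import defaultdict

# Hypothetical ownership registry: team name -> services that team owns.
OWNERSHIP = {
    "payments-team": ["billing", "invoicing"],
    "catalog-team": ["product-search", "inventory"],
    "platform-team": ["auth", "inventory"],  # "inventory" is claimed twice
}

# Hypothetical list of every service deployed in the application.
ALL_SERVICES = ["billing", "invoicing", "product-search", "inventory", "auth", "notifications"]


def ownership_problems(ownership, services):
    """Return (services with no owner, services with more than one owner)."""
    owners = defaultdict(list)
    for team, owned in ownership.items():
        for service in owned:
            owners[service].append(team)

    # A service with an empty owner list has nobody accountable for it.
    unowned = [s for s in services if not owners[s]]
    # A service with several owners has no single, clear owner.
    shared = {s: sorted(teams) for s, teams in owners.items() if len(teams) > 1}
    return unowned, shared


if __name__ == "__main__":
    unowned, shared = ownership_problems(OWNERSHIP, ALL_SERVICES)
    print("Services with no owner:", unowned)
    print("Services with multiple owners:", shared)
```

Running a check like this in CI against a service catalog is one way to keep the ownership model from drifting as services are added.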

Routine is a new productivity app that combines task management and notes

One of the most opinionated features of Routine is the dashboard. Whatever you’re doing on your computer, you can pull up the Routine dashboard with a simple keyboard shortcut; by default, that shortcut is Ctrl-Space. The Routine app then adds an overlay with a few widgets on top of your screen. It looks a bit like the now-defunct Dashboard on macOS. On that dashboard, you’ll find a handful of things. On the left, you can see the tasks you have to complete today. On the right, you can see how much time you have left before your next meeting, along with some information about that event; this data is pulled directly from your Google Calendar account. In the center of the screen, Routine displays a big input field called the Console. You can type text there and press Enter to create a new task. It works a bit like the ‘Quick Add’ feature in Todoist. The idea is that you can add a task without wasting time opening your to-do app, moving to the right project, clicking the add-task button and entering text into several fields. With Routine, you press Ctrl-Space, type some text, press Enter and you’re done.

3 Lessons I Learned The Hard Way As A Data Scientist

Whatever algorithm you implement or analysis you make, the results are used in subsequent processes or in production. Thus, it is of vital importance to make sure the results are correct. By correct, I do not mean error-free predictions or 100% accuracy, which is neither reasonable nor realistic. In fact, you should be very suspicious of results that seem too good to be true. The mistakes I mean are usually data-related. For instance, you might make a mistake while joining product stock information from an SQL table to your main table. That causes serious problems if your solution is based on product stocks. There are almost always checks in your code meant to prevent mistakes, but it is not possible to anticipate every possible one. Thus, taking a second look is always beneficial. ... The glorious world of machine learning algorithms is very attractive. The urge to use a fancy algorithm and build a model to make predictions might cause you to skip digging into the data.
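Here is a minimal sketch of what a "second look" at the stock join could be. It uses plain Python lists of dicts in place of SQL tables. The column names (`product_id`, `stock`) and the `join_stock` helper are hypothetical. The checks target two common silent failures. Duplicate keys in the lookup table would multiply rows in a SQL-style join. Main-table rows that find no stock record usually point to a key mismatch.

```python
def join_stock(main_rows, stock_rows, key="product_id"):
    """Left-join the stock column onto main_rows, failing loudly on common join mistakes."""
    # Index the stock table by key. A duplicate key would silently multiply
    # rows in a SQL-style join, so refuse it up front.
    stock_by_key = {}
    for row in stock_rows:
        k = row[key]
        if k in stock_by_key:
            raise ValueError(f"duplicate {key} in stock table: {k!r}")
        stock_by_key[k] = row

    # Because keys are unique, each main row yields exactly one output row.
    joined, missing = [], []
    for row in main_rows:
        match = stock_by_key.get(row[key])
        if match is None:
            missing.append(row[key])
        joined.append({**row, "stock": None if match is None else match["stock"]})

    # Products without a stock record usually mean a key mismatch (types, typos).
    if missing:
        raise ValueError(f"no stock record for {key} values: {missing}")
    return joined


main = [{"product_id": 1, "sales": 10}, {"product_id": 2, "sales": 4}]
stock = [{"product_id": 1, "stock": 50}, {"product_id": 2, "stock": 0}]
print(join_stock(main, stock))
# [{'product_id': 1, 'sales': 10, 'stock': 50}, {'product_id': 2, 'sales': 4, 'stock': 0}]
```

If you work in pandas instead, `DataFrame.merge` offers similar safeguards through its `validate` argument (for example `"many_to_one"`) and `indicator=True`.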

Research finds consumer-grade IoT devices showing up... on corporate networks

"Remote workers need to be aware that IoT devices could be compromised and used to move laterally to access their work devices if they're both using the same home router, which in turn could allow attackers to move onto corporate systems," said Palo Alto. Poor IoT device security stems mainly from manufacturers' desire to keep price points low, cutting security out as unnecessary overhead. This approach has inadvertently exposed large numbers of easily pwned devices to the wider internet, causing such a headache that governments around the world are now preparing to mandate better IoT security standards. Even IoT trade groups have woken up to the threat, albeit perhaps the threat of regulation rather than the security threat, but if that's what it takes, the outcome is no bad thing. ... Half of respondents said they worried about attacks against their industrial IoT devices, with 46 per cent similarly worried about connected cameras being compromised. Smart cameras are a tried-and-trusted compromise method for miscreants.

The Rise Of No-Code And Low-Code Solutions: Will Your CTO Become Obsolete?

There are many reasons behind the rise of no-code and low-code tools, but the key one is a large imbalance between the ever-growing demand for software development services and the shortage of skilled developers in the market. For decades, there's been a movement away from complicated coding in favor of easy-to-use visual tools. Over time, no-code and low-code platforms have become more sophisticated, allowing non-developers to build more powerful websites and applications without hiring software specialists. That has even provoked some neo-Luddite concerns and discussions about the potential of such platforms to make good old software developers obsolete. But what’s behind it? Both no-code and low-code approaches hide the complexities of software programming behind high-level abstractions. Low-code reduces programming effort to a minimum, and no-code empowers anyone to create apps without any programming knowledge.

Complex Systems: Microservices and Humans

There is one aspect to this that I think is worth talking about, and that is that we actually already have an organization of people. We work in organizations that are, in general, organized into teams. You see a theoretical org chart here on the left. This might look like something you see in your own company. We have these org charts and these organizations of teams, but that org chart doesn't necessarily map very neatly onto the microservices architecture, and maybe it shouldn't. The interrelationships between these teams are actually more subtle, and often more complicated, than what you see in the org chart. That is because if you have microservices with dependencies and interactions between them, then the teams owning them, by necessity, sometimes need to interact with each other. Microservices are constructed in a way that gives as much independence and as much autonomy as possible to the individual teams.
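The point that service dependencies force team interactions can be made concrete. Take a service-to-owner mapping and a service dependency graph. Every dependency that crosses an ownership boundary implies a pair of teams that must coordinate. The sketch below assumes both maps are available as plain dictionaries. The service names, team names and the `team_interactions` helper are hypothetical.

```python
# Hypothetical ownership: service -> owning team (one owner per service).
OWNER = {
    "checkout": "orders-team",
    "cart": "orders-team",
    "payments": "payments-team",
    "inventory": "catalog-team",
}

# Hypothetical dependency graph: service -> services it calls.
DEPENDS_ON = {
    "checkout": ["cart", "payments", "inventory"],
    "cart": ["inventory"],
    "payments": [],
    "inventory": [],
}


def team_interactions(owner, depends_on):
    """Return the set of (team, team) pairs that must coordinate because a
    service owned by one team depends on a service owned by another."""
    pairs = set()
    for service, deps in depends_on.items():
        for dep in deps:
            a, b = owner[service], owner[dep]
            # Dependencies inside a single team need no cross-team coordination.
            if a != b:
                pairs.add(tuple(sorted((a, b))))
    return pairs


print(sorted(team_interactions(OWNER, DEPENDS_ON)))
```

Here the orders team ends up coordinating with both the payments and catalog teams, even though nothing in a typical org chart would show that relationship.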

Maximizing agile productivity to meet shareholder commitments

Companies’ public commitments to ambitious, and sometimes expansive, goals tend to have multiyear timelines, while agile teams are trained to focus on the next three to six months. In organizations with siloed processes, product owners often feel that they don’t have enough visibility into their organizations’ processes to forecast the timeline for their initiatives, let alone predict the long-term impact of their work. To balance the demands of the near future with longer-term goals, the companies that meet their transformation goals support agile teams with information and expertise. Successful companies provide product owners with relevant financial and operational data for the company, benchmarked against best-in-class organizations, to help them assess the potential value of their work over the next 18–24 months. They also assign initiative owners and relevant subject-matter experts from business functions early in the research and discovery process to help quantify possible improvements to the existing journey.

Satellite IoT dreams are crashing into reality

Even with smaller satellites, building a profitable wireless network is hard. On one side, there’s a capital-intensive phase that requires establishing connectivity (in this case, by building and launching satellites); on the other, these companies must establish a market for that connectivity. But while the economics of building and launching satellites have changed dramatically, demand for devices that rely on satellite networks hasn’t kept up. The biggest growth has come from people-tracking products, such as Garmin inReach satellite communicators, which people can take into the wilderness and use to get help if needed. There are also rumors that Apple may include some form of satellite service in an upcoming iPhone. While this is a real and growing market, it isn’t enough to justify the launch of constellations by almost a dozen companies whose goal is to be IoT connectivity providers. So companies that set out as connectivity players are moving away from selling bandwidth and toward full solutions, in order to provide a service that isn’t a commodity and eke out more revenue per customer.

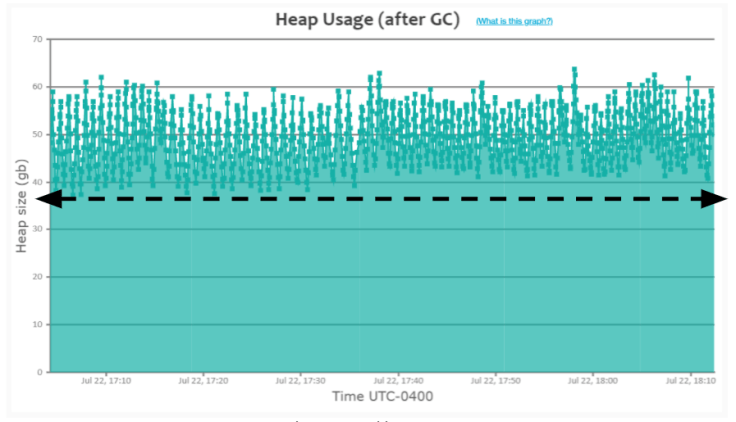

Interesting Application Garbage Collection Patterns

When an application is caching many objects in memory, GC events aren’t able to drop heap usage all the way to the bottom of the graph (as you saw in the earlier ‘Healthy saw-tooth pattern’). ... You can notice that heap usage keeps growing. When it reaches around 60GB, a GC event (depicted as a small green square in the graph) gets triggered. However, these GC events aren’t able to drop heap usage below ~38GB; please refer to the dotted black arrow line in the graph. In contrast, in the earlier ‘Healthy saw-tooth pattern’, you can see heap usage dropping all the way to the bottom, ~200MB. When you see this sort of pattern (i.e., heap usage not dropping all the way to the bottom), it indicates that the application is caching a lot of objects in memory. In that case, you may want to investigate your application’s heap using heap dump analysis tools like yCrash, HeapHero or Eclipse MAT, and figure out whether you really need to cache that many objects in memory. In several cases, you might uncover objects that are being cached unnecessarily. Here is the real-world GC log analysis report, which depicts this ‘Heavy caching’ pattern.
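The distinction between the two patterns can be expressed as a rough heuristic over GC log data. Compare how low the heap drops after GC with the level at which GC was triggered. The sketch below uses a hypothetical helper, not a feature of yCrash, HeapHero or Eclipse MAT. The 0.25 threshold is an arbitrary assumption, and the sample values are illustrative only. The helper merely flags the pattern; deciding whether the cached objects are actually needed still requires heap dump analysis.

```python
def classify_heap_pattern(post_gc_heap_gb, gc_trigger_gb, floor_ratio=0.25):
    """Classify heap behavior as 'heavy caching' or 'healthy saw-tooth'.

    post_gc_heap_gb: heap usage right after each GC event, in GB.
    gc_trigger_gb:   heap usage at which GC events were triggered, in GB.
    floor_ratio:     assumed threshold; if even the lowest post-GC heap stays
                     above this fraction of the trigger level, the heap never
                     drains to the bottom.
    """
    if not post_gc_heap_gb or gc_trigger_gb <= 0:
        raise ValueError("need at least one post-GC sample and a positive trigger level")
    floor = min(post_gc_heap_gb)
    if floor / gc_trigger_gb > floor_ratio:
        return "heavy caching"
    return "healthy saw-tooth"


# Illustrative samples modeled on the example above: GC triggers near 60GB,
# but the heap never drops below roughly 38GB.
print(classify_heap_pattern([38.4, 38.1, 38.6], gc_trigger_gb=60))  # heavy caching
# Hypothetical healthy case: the heap drains to ~0.2GB after each GC.
print(classify_heap_pattern([0.2, 0.21, 0.19], gc_trigger_gb=60))   # healthy saw-tooth
```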

Designing the Internet of Things: role for enterprise architects, IoT architects, or both?

Great use cases, but an architectural nightmare that calls for a new role to plan and piece it all together into a coherent and viable system. This may be someone in a relatively new role, an IoT architect, or enterprise architects whose current roles are expanded. The need for architects of either stripe was recently explored in a Gartner eBook, which looked at the ingredients needed to ensure success with enterprise IoT. ... "Those having such capabilities in two or more of these areas will be in extremely high demand. The good news is that organizations can use existing digital business efforts to train up candidates." Responsibilities for the IoT architect role include the following: "Engaging and collaborating with stakeholders to establish an IoT vision and define clear business objectives"; "Designing an edge-to-enterprise IoT architecture"; "Establishing processes for constructing and operating IoT solutions"; and "Working with the organization's architecture and technical teams to deliver value." Then there are enterprise architects, who are likely to see their roles greatly expanded to encompass the extended architectures the IoT is bringing.

Quote for the day:

"Leadership is familiar, but not well understood." -- Gerald Weinberg
