Micro Frontend Architecture

The idea behind Micro Frontends is to think about a web app as a composition of

features that are owned by independent teams. Each team has a distinct area of

business it cares about and specializes in. A team is cross-functional and

develops its features end-to-end, from database to user interface. ... But why

do we need micro frontends? Let’s find out. In modern web apps, the front end

is becoming bigger and bigger, and the back end is getting less important.

Most of the code now sits in the front end, and the monolith approach doesn’t

work for a larger web application. There needs to be a way of breaking it up

into smaller modules that act independently. The

solution to the problem is the micro frontend. ... It heavily depends on your

business case, whether you should or should not use micro frontends. If you have

a small project and team, micro frontend architecture is not really required.

At the same time, large projects with distributed teams and a large number of

requests benefit a lot from building micro frontend applications. That is why

today, micro frontend architecture is widely used by many large companies, and

that is why you should opt for it too.

CodeSee Helps Developers ‘Understand the Codebase’

As a developer, you’ve likely faced one problem again and again throughout your

career: struggling to understand a new codebase. Whether it’s a lack of

documentation, or simply poorly-written and confusing code, working to

understand a codebase can take a lot of time and effort, but CodeSee aims to

help developers not only gain an initial understanding, but also continually

understand large codebases as they evolve over time. “We really are trying to

help developers to master the understanding of codebases. We do that by

visualizing their code, because we think that a picture is really worth a

thousand words, a thousand lines of code,” said CodeSee CEO and co-founder

Shanea Leven. “What we’re trying to do is really ensure that developers, with

all of the code that we have to manage out there — and our codebases have grown

exponentially over the past decade — that we can deeply understand how our code

works in an instant.” Earlier this month, CodeSee, which is still in beta,

launched OSS Port to bring its code visibility and “continuous understanding”

product to open source projects, as well as give potential contributors and

maintainers a way to find their next project.

Non-Coder to Data Scientist! 5 Inspiring Stories and Valuable Lessons

While looking for inspiring journeys I focus on people coming from a

non-traditional background. People coming from non-technology backgrounds.

People having zero coding experience. I guess this makes their story inspiring.

All those who found their success in data science were willing to learn to code.

They were not intimidated by the Kaggle notebooks that they were not able to

understand initially. They all understood that it takes time to gain knowledge

and persevered until they acquired all the required knowledge. Programming is one of

the biggest show stoppers. It is this particular skill that makes many

frustrated. It even makes them give up their passion for a career in data

science. Programming is not exactly a hard thing to learn. ... Having a growth

mindset plays a major role in data science. There are many topics to learn and

it can be overwhelming. Instead of saying “I can’t learn math,” “I can’t be a good

programmer,” or “I can never understand statistics,” people with a growth mindset tend

to stay positive and keep trying.

How To Stay Ahead of the Competition as an Average Programmer

Apart from getting the satisfaction of being helpful, it has multiple career

benefits too. One, I get to learn a lot more by helping others. Two,

continuously helping others builds trusted relationships within the

organization. In the software industry, your allies come to your help more than

you realize. They can return the favor during application integration, defect

resolution, challenging meetings, or even in promotion discussions. If you

know people and have helped them before, they will be happy to bail you out

from difficult situations. Hence, never hesitate to help others at your

workplace. ... At the same time, it might not be possible for you to be of help

to everyone. But you can justify why you are unable to help. Being arrogant or

repeatedly rejecting the requests as not your responsibility makes others

think you are not a team player. ... While working in a team environment, you

are bound to face challenges. You need to follow company policies and

processes that you might find hindering your productivity. You will have to

work with people who slow down the team’s progress due to their poor

contribution.

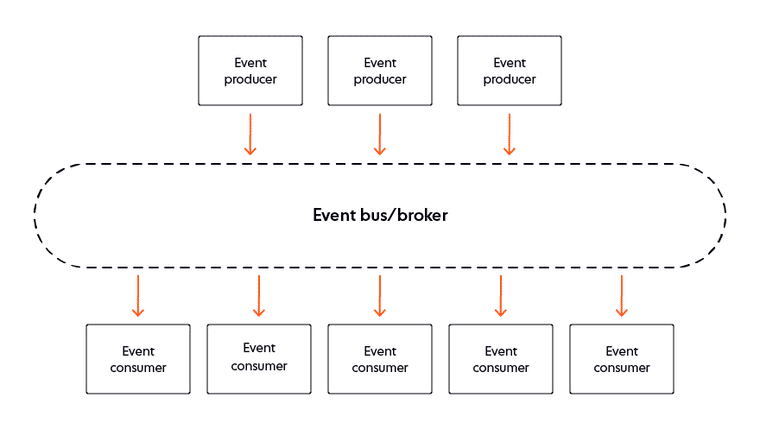

A real-world introduction to event-driven architecture

An event-driven architecture eliminates the need for a consumer to poll for

updates; it instead receives notifications whenever an event of interest

occurs. Decoupling the event producer and consumer components is also

advantageous to scalability because it separates the communication logic and

business logic. A publisher can avoid bottlenecks and remain unaffected if its

subscribers go offline, or if their consumption slows down. If any subscriber

has trouble keeping up with the rate of events, the event stream records them

for future retrieval. The publisher can continue to pump out notifications

without throughput limitations and with high resilience to failure. Using a

broker means that a publisher does not know its subscribers and is unaffected

if the number of interested parties scales up. Publishing to the broker offers

the event producer the opportunity to deliver notifications to a range of

consumers across different devices and platforms. Estimates suggest that 30%

of all global data consumed by 2025 will result from information exchange in

realtime.
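
The publish/subscribe flow described above can be sketched in a few lines of Python. This is a minimal in-memory illustration, not any particular broker product: the `Broker` class, its `subscribe`, `publish` and `replay` methods, and the `"orders"` topic are all hypothetical names chosen for the example. It assumes the broker keeps an append-only log per topic so that a subscriber that was offline can catch up later.

```python
from collections import defaultdict
from typing import Callable, Dict, List

Event = dict
Handler = Callable[[Event], None]


class Broker:
    """Hypothetical in-memory broker: logs events per topic and pushes them to subscribers."""

    def __init__(self) -> None:
        self._log: Dict[str, List[Event]] = defaultdict(list)
        self._subscribers: Dict[str, List[Handler]] = defaultdict(list)

    def subscribe(self, topic: str, handler: Handler) -> None:
        # The publisher never sees this list; it only talks to the broker.
        self._subscribers[topic].append(handler)

    def publish(self, topic: str, event: Event) -> int:
        """Record the event, notify current subscribers, and return the event's offset."""
        self._log[topic].append(event)
        for handler in list(self._subscribers[topic]):
            try:
                handler(event)
            except Exception:
                # Sketch only: a failing subscriber must not stop the publisher.
                continue
        return len(self._log[topic]) - 1

    def replay(self, topic: str, from_offset: int = 0) -> List[Event]:
        """Let a late or slow subscriber read recorded events from a given offset."""
        return list(self._log[topic][from_offset:])


broker = Broker()
live: List[Event] = []
broker.subscribe("orders", live.append)    # receives notifications, no polling
broker.publish("orders", {"id": 1})
broker.publish("orders", {"id": 2})

# A subscriber that was offline catches up from the recorded stream.
catch_up = broker.replay("orders", from_offset=0)
```

In this sketch handlers run synchronously inside `publish`, so a slow handler would still delay the publisher; a real broker would typically deliver asynchronously, which is what gives the publisher the independence the article describes.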

Pros and cons of cloud infrastructure types and strategies

A multi-cloud strategy simply means that an organisation has chosen to use

multiple public cloud providers to host their environments. A hybrid cloud

approach means that a company is using a combination of on-premises

infrastructure, private cloud and public cloud — and possibly more than one of

the latter, meaning that company would be implementing a multi-cloud strategy

with a hybrid approach. At times, these terms are used interchangeably.

Companies choose a multi-cloud strategy for a multitude of reasons, not least

of which is avoiding vendor lock-in. Spreading workloads across multiple cloud

providers increases reliability, as a company is able to fail over to a

secondary provider if another provider experiences an outage. Optionality is a

huge benefit to companies who want to be able to pick and choose which

services will most seamlessly integrate into their environments, as each major

public cloud provider provides some unique services for different types of

workloads. Furthermore, when a company uses multiple public cloud providers,

it retains flexibility and can transfer workloads from one provider to

another.
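
The failover idea mentioned above can be illustrated with a small Python sketch. It assumes each provider is represented by a plain callable; the function name `call_with_failover` and the provider labels are hypothetical, and no real cloud SDK is involved.

```python
from typing import Callable, List, Sequence, Tuple, TypeVar

T = TypeVar("T")


def call_with_failover(providers: Sequence[Tuple[str, Callable[[], T]]]) -> Tuple[str, T]:
    """Try each provider in order and return (provider_name, result) from the first that succeeds."""
    errors: List[str] = []
    for name, call in providers:
        try:
            return name, call()
        except Exception as exc:
            # Remember why this provider failed, then fall through to the next one.
            errors.append(f"{name}: {exc}")
    raise RuntimeError("all providers failed: " + "; ".join(errors))


def primary_upload() -> str:
    # Stand-in for a provider that is currently experiencing an outage.
    raise ConnectionError("region unavailable")


def secondary_upload() -> str:
    return "stored"


used, outcome = call_with_failover(
    [("primary", primary_upload), ("secondary", secondary_upload)]
)
```

Here `used` ends up as `"secondary"` because the primary call raises. Real failover also has to deal with data replication and differences between provider services, which this sketch leaves out.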

Gartner: Top strategic technology trends for 2022

The first of those trends is the growth of the distributed enterprise. Driven

by the massive growth in remote and hybrid working patterns, traditional

office-centric organizations are evolving into geographically distributed

enterprises. “For every organization, from retail to education, their delivery

model has to be reconfigured to embrace distributed services,” Groombridge

said. Such operations will stress the network that supports users and

consumers alike, and businesses will need to rearchitect and redesign to

handle it. ... “Data is widely scattered in many organizations and some of that

valuable data can be trapped in silos,” Groombridge said. “Data fabrics can

provide integration and interconnectivity between multiple silos to unlock

those resources.” Groombridge added that data-fabric deployments will also

force significant network-topology readjustments and in some cases, to work

effectively, could require their own edge-networking capabilities. The result

is that the fabric will unlock data that can be used by AI and analytics

platforms to support new applications and bring about business innovations more

quickly, Groombridge said.

BlackMatter Ransomware Defense: Just-In-Time Admin Access

To be fair, the BlackMatter alert, beyond including intrusion system rules,

also details the group's known tactics, techniques and procedures, and

includes additional recommended defenses, such as implementing "time-based

access for accounts set at the admin-level and higher," due to

ransomware-wielding attackers' propensity to attack organizations after hours,

over weekends, on Christmas Eve or any other inconvenient time. What does

time-based access look like? One approach is just-in-time access, which

enforces least-privileged access except for temporarily granting higher access

levels via Active Directory. "This is a process where a network-wide policy is

set in place to automatically disable admin accounts at the AD level when the

account is not in direct need," according to the advisory. "When the account

is needed, individual users submit their requests through an automated process

that enables access to a system, but only for a set timeframe to support task

completion."
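
As a rough illustration of the just-in-time idea in the advisory, the Python sketch below models admin accounts that are disabled by default and enabled only for a bounded window after a request. It does not call Active Directory or any real directory API; `JitAccessPolicy`, `request_access`, `is_enabled` and the `max_window` cap are hypothetical names and assumptions made for the example.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta
from typing import Dict


@dataclass
class JitAccessPolicy:
    """Hypothetical policy: admin accounts stay disabled unless a time-boxed grant is active."""

    max_window: timedelta = timedelta(hours=1)
    _expiry: Dict[str, datetime] = field(default_factory=dict)

    def request_access(self, account: str, duration: timedelta, now: datetime) -> datetime:
        """Enable the account for the requested duration, capped at max_window."""
        expires_at = now + min(duration, self.max_window)
        self._expiry[account] = expires_at
        return expires_at

    def is_enabled(self, account: str, now: datetime) -> bool:
        """An account is enabled only while its grant has not yet expired."""
        expires_at = self._expiry.get(account)
        return expires_at is not None and now < expires_at


policy = JitAccessPolicy(max_window=timedelta(minutes=30))
start = datetime(2021, 10, 20, 9, 0)

# A two-hour request is trimmed to the 30-minute policy cap.
expires = policy.request_access("admin-jane", timedelta(hours=2), now=start)
during = policy.is_enabled("admin-jane", now=start + timedelta(minutes=10))
after = policy.is_enabled("admin-jane", now=start + timedelta(minutes=45))
```

Passing `now` explicitly keeps the sketch deterministic; an actual deployment would rely on the directory service's own clock and an approval workflow in front of `request_access`.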

Why is collaboration between the CISO and the C-suite so hard to achieve?

Poor communication between the CISO and business unit heads is a major barrier

to safe and successful business transformation. To properly educate people

within the organisation about the realities of a cyber attack, the CISO must

move beyond data, buzzwords and technical jargon and tell a story that brings

the threat to life for those without subject-matter insight. If the CISO can

intelligibly and clearly articulate the threats and the steps necessary to

mitigate them, they are much more likely to capture executives’ attention and

help ensure that all key stakeholders understand the trade-offs between new

technology and added risk. If they’re able to adapt their language to specific

individuals and business functions, they’ll have even greater success. For

instance, a chief marketing officer is most likely interested in the risks to

customer data, while chief financial officers will want to better understand

how to secure banking information. ... “CISOs still have more work to do

in breaking down the communication barriers by talking in less technical

language for boards to better understand potential business risks.”

Developer Learning isn’t just Important, it’s Imperative

Every company that isn’t consistently upgrading its codebase or shifting to

new frameworks is facing a serious business problem. If your codebase is

getting older and older, you face the risk of massive future migrations. And

if you’re not moving to new framework versions, you’re missing important

benefits that your team could otherwise leverage. Technical debt naturally

increases over time. The longer it goes unaddressed, the sooner you’ll get

stuck paying high costs in migration, hiring, or massive upskilling efforts

that take weeks or months. Like saving for retirement, incremental upskilling

pays dividends in the long run. Every industry leader I’ve talked to worries

about the scarcity of high-quality software engineers. That means companies

feel serious pressure to constantly hire new, better developers. But rather

than looking externally for a solution, what if companies looked internally?

Here’s the reality: meaningful developer learning helps companies convert

silver medalists into gold medalists.

Quote for the day:

"A true dreamer is one who knows how

to navigate in the dark" -- John Paul Warren
