Why Your Next CIO Will Be a CPO

The role of the CIO was to deploy technology efficiently to support the company’s strategies and plans. The role of the CPO, as inherited from pure technology companies, is to develop and maintain a deep understanding of the customer and market and to guide the delivery of products that best meet and monetize customer needs, ahead of any and all competition. The traditional CIO derives the why and the what from other parts of the organization and supplies the how. Transitional versions of the CIO, the CDO, and other neologisms may start to encroach on the what. But the true CPO drives the why and the what. They also drive the how if they have engineering; if not, they collaborate on the how with a CTO or head of development. Does this sound broad, even encroaching on CEO territory? Well, yes. It’s no accident that former product chiefs are the new CEOs of Google and Microsoft. So what does that mean for you if you are in an IT organization? Well, first, whether or not your organization changes the actual title from CIO to CPO, it’s important for your career to recognize when the CIO’s job comes to be defined as what a CPO would do in a “pure” software company.

The most fundamental skill: Intentional learning and the career advantage

Stanford psychologist Carol Dweck’s popular work on mindset suggests that people hold one of two sets of beliefs about their own abilities: a fixed mindset or a growth mindset. A fixed mindset is the belief that personality characteristics, talents, and abilities are finite or fixed resources; they can’t be altered, changed, or improved. You simply are the way you are. People with this mindset tend to take a polar view of themselves: they consider themselves either intelligent or average, talented or untalented, a success or a failure. A fixed mindset stunts learning because it eliminates permission not to know something, to fail, or to struggle. Writes Dweck: “The fixed mindset doesn’t allow people the luxury of becoming. They have to already be.”² In contrast, a growth mindset suggests that you can grow, expand, evolve, and change. Intelligence and capability are not fixed points but traits you cultivate. A growth mindset releases you from the expectation of being perfect. Failures and mistakes are not indicators of the limits of your intellect but tools that inform how you develop. A growth mindset is liberating, allowing you to find value, joy, and success in the process, regardless of the outcome.

The Dos and Don’ts for SMB Cybersecurity in 2021

With insider threats accounting for the largest share of cyberattacks, SMBs need to get to the root of the problem: human behavior. Inspiring change begins with raising awareness. To do this effectively, SMBs must first reflect on their business as a whole. This means identifying every “weak point” and addressing every potential impact the business could suffer if those weak points were targeted. For instance, many SMBs operate across supply chains that include various virtual and physical touchpoints. Because of this, if one section of the supply chain were hit by a cyberattack, the entire system could come crumbling down. By gathering and sharing this information in consistent, organization-wide training sessions that inform and entertain, SMBs can give their staff deeper threat awareness and help improve their individual security posture. ... SMBs should consider bringing on external experts to regularly analyze their IT infrastructure. This helps ensure an unbiased assessment of the business’s needs and the strongest protection possible. Alongside this, SMBs should regularly conduct internal security audits to better understand where hidden back doors exist across their organization.

Can Care Robots Improve Quality Of Life As We Age?

The new generation of care robots does far more than manual tasks. These robots provide everything from intellectual engagement to social companionship, roles once reserved for human caregivers and family members. When it comes to replicating or substituting human connection, designers must be intentional about the outcomes these robots are designed to achieve. To what degree are care robots facilitating and maximizing emotional connection with others (a personified AI assistant that helps you call your grandchildren, for example) or providing the connection itself (such as a robot that appears as a huggable, strokable pet)? Research suggests that an extensive social network offers protection against some of the cognitive effects of aging. There could also be legitimate uses for this kind of technology in mental health and dementia therapy, where patients are not able to care for a “real” pet or partner. Some people might also find it easier to bond with, or be vulnerable with, an objective robot than a subjective human. Yet the risks and externalities of robots as social companions are not yet well understood. Would interacting with artificial agents lead some people to engage less with the humans around them, or to develop intimacy with an intelligent robot instead?

IBM and ExxonMobil are building quantum algorithms to solve this giant computing problem

Research teams from energy giant ExxonMobil and IBM have been working together to find quantum solutions to one of the most complex problems of our time: managing the tens of thousands of merchant ships crossing the oceans to deliver the goods we use every day. The scientists lifted the lid on the progress they have made so far and presented the different strategies they have been using to model maritime routing on existing quantum devices, with the ultimate goal of optimizing fleet management. ... Although the theory behind the potential of quantum computing is well established, it remains to be shown how quantum devices can be used in practice to solve a real-world problem such as the global routing of merchant ships. In mathematical terms, this means finding the quantum algorithms that most effectively model the industry's routing problems on current or near-term devices. To do so, the IBM and ExxonMobil teams started with widely used mathematical representations of the problem, which account for factors such as the routes traveled, the potential movements between port locations, and the order in which each location is visited on a particular route.
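To make “the order in which each location is visited” concrete, here is a toy sketch of one common way to pose a sequence-based routing problem for quantum optimizers: as a QUBO (quadratic unconstrained binary optimization), the binary form that algorithms such as QAOA are typically applied to. Everything here is an assumption for illustration: the three-port distances, the penalty weight, and the function names are hypothetical, this is not the IBM/ExxonMobil formulation, and the brute-force search merely stands in for a quantum solver.

```python
import itertools

# Hypothetical toy instance: symmetric sailing distances between three ports.
DIST = [
    [0, 1, 5],
    [1, 0, 1],
    [5, 1, 0],
]
N = len(DIST)  # number of ports, and also number of positions in the route
PENALTY = 10   # constraint weight; any violation costs more than the best valid route here


def var(port, step):
    """Flat index of binary variable x[port, step] (1 = port visited at position step)."""
    return port * N + step


def qubo_energy(bits):
    """QUBO objective: route length plus squared-penalty constraint terms."""
    def x(port, step):
        return bits[var(port, step)]

    # Route cost: distance between the ports occupying consecutive positions.
    energy = sum(
        DIST[p][q] * x(p, s) * x(q, s + 1)
        for s in range(N - 1)
        for p in range(N)
        for q in range(N)
    )
    # Each port appears in exactly one position ...
    energy += PENALTY * sum((1 - sum(x(p, s) for s in range(N))) ** 2 for p in range(N))
    # ... and each position holds exactly one port.
    energy += PENALTY * sum((1 - sum(x(p, s) for p in range(N))) ** 2 for s in range(N))
    return energy


def solve_brute_force():
    """Exhaustively minimize the QUBO; a classical stand-in for a quantum optimizer."""
    best = min(itertools.product((0, 1), repeat=N * N), key=qubo_energy)
    order = [p for s in range(N) for p in range(N) if best[var(p, s)]]
    return order, qubo_energy(best)


order, energy = solve_brute_force()
print("visit order:", order, "energy:", energy)
```

Brute force works only because this toy has 9 binary variables (512 assignments). Real maritime formulations add many more variables and constraints, which is why mapping them compactly onto today's small quantum devices is the hard part.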

Palo Alto Networks Joins Flexible Firewall Party. Will Cisco Follow Suit?

In addition to migrating workloads to public clouds, companies also started demanding a cloud-like experience in their data centers. This includes consumption-based pricing and the flexibility to scale usage and add services on demand. “And what we’re now doing is bringing extreme flexibility, simplicity, and agility to the network security and software firewalls,” Gupta said. “So that’s why we’re reinventing yet again how customers buy these software firewalls and security subscriptions. And I hope that the industry will adopt that model and make it easier for customers.” However, other leading firewall vendors had already adopted similar consumption-based licensing approaches. Fortinet, Forcepoint, and Check Point rolled theirs out last year. Fortinet’s programs aim to give its virtual firewall customers more flexibility in how they consume those products and security services, said Vince Hwang, senior director of products at Fortinet. ... “They can allocate the points to any virtual firewall size and type of security services in seconds without incurring a procurement cycle. These virtual firewalls and security services can be used on any cloud and anytime. Customers can manage their consumption through a central portal available through Fortinet’s FortiCare service.”
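The points model Hwang describes can be pictured as a prepaid balance that customers carve up among virtual firewalls and services, then reallocate without a new purchase. The sketch below is a hypothetical model of that idea only: the point prices, service names, and the `PointPool` class are made up for illustration and do not reflect Fortinet’s actual rate card, portal, or API.

```python
from dataclasses import dataclass, field

# Hypothetical point prices: assumptions for illustration, not any vendor's rate card.
POINTS_PER_VCPU = 1
POINTS_PER_SERVICE_PER_VCPU = {"threat_prevention": 1, "url_filtering": 1, "sandboxing": 2}


@dataclass
class PointPool:
    """A prepaid pool of points shared across virtual firewalls (hypothetical model)."""
    balance: int
    allocations: dict = field(default_factory=dict)

    @property
    def available(self) -> int:
        return self.balance - sum(self.allocations.values())

    @staticmethod
    def cost(vcpus: int, services: list) -> int:
        # Each vCPU pays a base price plus the price of every enabled service.
        per_vcpu = POINTS_PER_VCPU + sum(POINTS_PER_SERVICE_PER_VCPU[s] for s in services)
        return vcpus * per_vcpu

    def allocate(self, name: str, vcpus: int, services: list) -> int:
        """Size or resize a firewall; points freed by a resize are reused immediately."""
        previous = self.allocations.pop(name, None)
        needed = self.cost(vcpus, services)
        if needed > self.available:
            if previous is not None:
                self.allocations[name] = previous  # roll back to the old sizing
            raise ValueError(f"{name} needs {needed} points, only {self.available} free")
        self.allocations[name] = needed
        return needed

    def release(self, name: str) -> None:
        """Decommission a firewall and return its points to the pool."""
        self.allocations.pop(name, None)


pool = PointPool(balance=20)
pool.allocate("fw-branch", vcpus=4, services=["threat_prevention"])
pool.allocate("fw-cloud", vcpus=2, services=["threat_prevention", "url_filtering"])
print("points still free:", pool.available)
```

Treating the entitlement as one shared balance is what turns resizing into a bookkeeping change rather than a procurement cycle; a real service would also meter consumption over time.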

India's Blockchain Ecosystem Is a Hotbed of Crypto Innovation

Advancements in artificial intelligence have led to the development of automated decentralized finance (DeFi) strategies that take over the role of traditional fund managers, monitoring the market to identify the best risk-adjusted assets for delivering investment returns. Rocket Vault Finance uses these AI predictive analysis tools and machine learning algorithms to develop data-driven, intelligent, and automated investment strategies intended to minimize losses and maximize gains. The project says it consistently achieves over 100% APY on stablecoin capital while sparing investors from managing multiple crypto assets across liquidity mining, staking, or other DeFi platforms, which reduces fees and risk. Rocket Vault Finance is free to use for retail investors holding the platform’s RVF tokens, with paid services on offer to institutional investors, positioning it as an automated hybrid alternative to riskier yield farming projects and traditional market returns. Several other projects are also contributing to the rapidly growing Indian blockchain ecosystem, expanding its value proposition as a result.
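“Best risk-adjusted assets” usually means scoring returns relative to their volatility. The text does not say which metric Rocket Vault Finance uses; as a hypothetical sketch, the following ranks two made-up yield pools by the Sharpe ratio (mean excess return divided by the standard deviation of those returns). The pool names and return figures are invented and are not market data.

```python
from statistics import mean, stdev


def sharpe_ratio(returns, risk_free=0.0):
    """Mean excess return per unit of volatility (sample standard deviation)."""
    excess = [r - risk_free for r in returns]
    volatility = stdev(excess)  # requires at least two observations
    if volatility == 0:
        raise ValueError("returns have zero volatility; the ratio is undefined")
    return mean(excess) / volatility


def best_risk_adjusted(pools, risk_free=0.0):
    """Return the name of the pool with the highest Sharpe ratio."""
    return max(pools, key=lambda name: sharpe_ratio(pools[name], risk_free))


# Hypothetical weekly returns for two made-up pools (not real market data).
pools = {
    "steady_pool": [0.010, 0.012, 0.011, 0.009],
    "volatile_pool": [0.030, -0.010, 0.040, -0.020],
}
print(best_risk_adjusted(pools))
```

Both pools average roughly 1% per week, so the ranking is driven almost entirely by volatility, which is the point of a risk-adjusted measure.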

The virtual security guard: AI-based security startups have been the toast of the town, here’s why

As the threat landscape evolves, security providers have to always be on their toes, and businesses have to adopt a more unified approach to cyber risk management. Some of the biggest challenges that security and risk management leaders face are the lack of a consistent view at both micro and macro levels, difficulty prioritising what’s most critical, and maintaining transparency across the organisation when it comes to cybersecurity. “SAFE is built on the premise of these challenges and our ability to provide real-time visibility at both a granular IP level and at an organisational level across people, process, technology, cybersecurity products, and third parties brings a completely new approach to enterprise cyber risk management,” says Saket Modi, Co-founder & CEO, Safe Security, a cybersecurity platforms company. ... Growing at a mind-boggling 450 per cent, WiJungle, another AI-based security startup, uses AI for automation at the network level and for threat detection and analysis. The NetSec (network security) vendor offers a solution for office and remote network security.
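The excerpt does not describe how WiJungle’s AI actually detects threats. As a hedged illustration of the simplest statistical baseline for network-level threat detection, the sketch below flags hosts whose current connection count deviates sharply from their own history using a z-score. The host addresses, counts, and threshold are hypothetical, and commercial AI-based tools use far richer models than this.

```python
from statistics import mean, stdev


def flag_anomalies(history, current, threshold=3.0):
    """Return hosts whose current count lies more than `threshold` standard
    deviations from that host's historical mean (a basic z-score test)."""
    flagged = []
    for host, counts in history.items():
        mu, sigma = mean(counts), stdev(counts)
        observed = current.get(host, 0)
        if sigma == 0:
            # Flat baseline: any change at all is treated as anomalous.
            is_anomalous = observed != mu
        else:
            is_anomalous = abs(observed - mu) / sigma > threshold
        if is_anomalous:
            flagged.append(host)
    return flagged


# Hypothetical outbound-connection counts per hour for two internal hosts.
history = {
    "10.0.0.5": [100, 110, 95, 105, 90],
    "10.0.0.9": [20, 22, 19, 21, 18],
}
current = {"10.0.0.5": 104, "10.0.0.9": 400}
print(flag_anomalies(history, current))
```

Comparing each host against its own baseline, rather than one global threshold, keeps a normally busy server from drowning out a quiet workstation that suddenly starts beaconing.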

How to adopt DevSecOps successfully

The DevSecOps manifesto says that the reason to integrate security into dev and ops at all levels is to implement security with less friction, foster innovation, and make sure security and data privacy are not left behind. Therefore, DevSecOps encourages security practitioners to adapt and change their old, existing security processes and procedures. This may sound easy, but changing processes, behavior, and culture is always difficult, especially in large environments. The basic requirement of DevSecOps is to introduce a security culture and mindset across the entire application development and deployment process. This means old security practices must be replaced by more agile and flexible methods so that security can iterate and adapt to the fast-changing environment. ... Clearly, the biggest and most important change an organization needs to make is to its culture. Cultural change usually requires executive buy-in, because a top-down approach is needed to convince people to commit to the turnaround. You might hope that cultural change will follow naturally once executives are on board, but don't expect smooth sailing: executive buy-in alone is not enough. To help accelerate cultural change, the organization needs leaders and enthusiasts who will become agents of change.
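One concrete way teams put “security at all levels” into practice is an automated gate in the CI pipeline that fails a build when a security scan reports serious findings. The sketch below assumes a hypothetical scanner output (a list of findings with `id` and `severity` fields) and a made-up severity scale; it is not tied to any particular scanner or to the DevSecOps manifesto itself.

```python
import sys

# Hypothetical severity scale; real scanners use their own labels.
SEVERITY_RANK = {"low": 1, "medium": 2, "high": 3, "critical": 4}


def blocking_findings(findings, fail_at="high"):
    """Return the findings at or above the `fail_at` severity."""
    threshold = SEVERITY_RANK[fail_at]
    return [f for f in findings if SEVERITY_RANK[f["severity"].lower()] >= threshold]


def gate_exit_code(findings, fail_at="high"):
    """0 lets the pipeline continue; 1 fails the build (the usual CI convention)."""
    blocked = blocking_findings(findings, fail_at)
    for f in blocked:
        print(f"BLOCKING {f['severity'].upper()}: {f['id']}", file=sys.stderr)
    return 1 if blocked else 0


# Hypothetical findings as a scan step might emit them.
findings = [
    {"id": "VULN-101", "severity": "medium"},
    {"id": "VULN-102", "severity": "critical"},
]
print("exit code:", gate_exit_code(findings))
```

In a pipeline step this would typically end with `sys.exit(gate_exit_code(findings))`, so a non-zero code stops the build. Keeping `fail_at` a parameter lets security and development teams agree on the threshold together instead of having it imposed.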

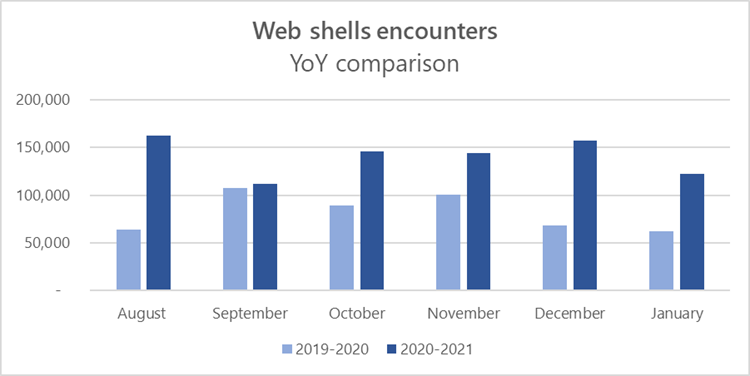

Web shell attacks continue to rise

The escalating prevalence of web shells may be attributed to how simple and effective they can be for attackers. A web shell is typically a small piece of malicious code written in common web development languages (e.g., ASP, PHP, JSP) that attackers implant on web servers to obtain remote access and code execution on server functions. Web shells allow attackers to run commands on servers to steal data or use the server as a launch pad for other activities like credential theft, lateral movement, deployment of additional payloads, or hands-on-keyboard activity, while allowing attackers to persist in an affected organization. As web shells are increasingly common in attacks, both commodity and targeted, we continue to monitor and investigate this trend to ensure customers are protected. In this blog post, we will discuss challenges in detecting web shells, and the Microsoft technologies and investigation tools available today that organizations can use to defend against these threats. We will also share guidance for hardening networks against web shell attacks.

Attackers install web shells on servers by taking advantage of security gaps, typically vulnerabilities in web applications, in internet-facing servers.
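The Microsoft detection technologies mentioned above are not reproduced here. As a deliberately simple, hypothetical illustration of signature-style hunting, the sketch below scans web-server script files for a few patterns often seen in PHP web shells, such as `eval(base64_decode(...))` or shell functions fed directly from request parameters. The pattern list and file extensions are assumptions; this approach is easy to evade and prone to false positives, so it complements rather than replaces behavioral detection.

```python
import re
from pathlib import Path

# Illustrative patterns only; a real rule set would be far larger and tuned.
SUSPICIOUS_PATTERNS = [
    re.compile(r"eval\s*\(\s*base64_decode\s*\(", re.IGNORECASE),
    re.compile(r"(system|shell_exec|passthru|exec)\s*\(\s*\$_(GET|POST|REQUEST)", re.IGNORECASE),
    re.compile(r"assert\s*\(\s*\$_(GET|POST|REQUEST)", re.IGNORECASE),
]
WEB_EXTENSIONS = {".php", ".asp", ".aspx", ".jsp"}


def suspicious_lines(source):
    """Return 1-based line numbers that match any suspicious pattern."""
    return [
        lineno
        for lineno, line in enumerate(source.splitlines(), start=1)
        if any(p.search(line) for p in SUSPICIOUS_PATTERNS)
    ]


def scan_tree(root):
    """Map each web script under `root` that has hits to its suspicious line numbers."""
    results = {}
    for path in Path(root).rglob("*"):
        if path.is_file() and path.suffix.lower() in WEB_EXTENSIONS:
            hits = suspicious_lines(path.read_text(errors="ignore"))
            if hits:
                results[str(path)] = hits
    return results


sample = '<?php\n@eval(base64_decode($_POST["x"]));\n'
print(suspicious_lines(sample))
```

Pointing `scan_tree` at a web root gives a quick triage list of files to inspect by hand; a hit is a lead to investigate, not proof of compromise.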

Quote for the day:

"Change the changeable, accept the unchangeable, and remove yourself from the unacceptable." -- Denis Waitley