E-commerce innovation in 2021 will look like what was projected for 2025

According to McKinsey, over 75% of U.S. consumers have changed shopping

behavior and changed to new brands during the COVID-19 pandemic. The top

three reasons for shopping for a new brand were value, availability and

convenience. The most important filter for discretionary spend is safety.

The ability to offer e-commerce, contactless payments, order-online

curbside pickup, and home delivery are all requirements in order to compete

in the next normal. Salesforce research shows that U.S. retailers offering

creative pickup options experienced 29% growth in sales compared to 22% in

retailers who had a simple fulfillment option. ... Over the past 5 years we

have seen growing investment in social channels as advertising vehicles. In

2021, we will see brands take a step further, adopting commerce capabilities

provided by these social platforms. We also anticipate expanding

relationships with brands and social influencers as an accelerant to grow

sales. This shift will also challenge brands to re-think the traditional

definitions of "omni-channel", expanding the definition to include the

ability to identify customers, at any location, and the ability to deliver

and service their need, independent of time or location, based on the

customer's method of delivery.

Create a DevOps culture with open source principles

We can split remote work into fully remote and hybrid working models. A

fully remote working model means a DevOps team is geographically dispersed.

The members have no desk lying empty back at the office with their name on

it. However, COVID-19 restrictions have made every team a fully remote team,

at least for the time being. A fully remote team’s benefits include

increased agility and playing time zones to the advantage of your delivery

cycle. The challenges of a new remote DevOps team run the gamut right now,

depending on the level of support their organization had for remote workers

pre-COVID. In contrast, a hybrid DevOps team still maintains a presence in a

corporate office. Core team members may have permanent seats inside a

corporate office. Other team members may work from home or a satellite

office full-time or part-time. COVID-19 restrictions add a new factor to

hybrid teams because some companies may stagger returns to offices. A hybrid

DevOps team’s benefits include having the best of both worlds. Team

leadership can still maintain a face in the office. Their developers get the

option to work where they’re the most productive. The challenges of a hybrid

DevOps team can range from communications to system access issues.

Understand the IoT Cybersecurity Improvement Act, now law

"Ultimately, the government wants to put together a strategy on how to

address IoT devices and what those specific security baseline requirements

should be," said Donald Schleede, information security officer at Digi

International. To start, the law requires NIST to develop minimum security

standards for connected devices that the federal government purchases or

uses. It also has the agency develop standards and guidelines for the use

and management of all IoT devices that the government owns or uses. It

further requires NIST to address secure development, identity management,

patching and configuration management as part of its security standards. It

prohibits federal entities from buying or using any IoT device determined to

be noncompliant with the NIST standards. The legislation requires the

Department of Homeland Security to review such measures every five years to

determine any necessary revisions. This ensures the federal requirements for

connected devices remain current as technology, standards and attack

scenarios evolve. The federal law provides more-specific IoT security

standards for connected devices than past industry-led attempts and

legislative measures have, Schleede said.

New ways Google Workspace works with tools you already use

Creating and collaborating on content is at the heart of getting work done.

When working with content received from customers, partners, or teammates,

employees shouldn’t lose time converting files or working in unfamiliar

tools. With Google Drive, you can store and share over 100 different

file types and formats, including Microsoft Word, Excel, and PowerPoint

files, as well as PDFs, images, and videos. And by using intelligent

features like Priority and Quick Access in Drive, you can find files nearly

50% faster. With Office editing, users can also easily edit Microsoft

Office files in Google Docs, Sheets, and Slides without converting them,

with the added benefit of layering on Google Workspace’s enhanced

collaborative and assistive features. From assigning action items via

comment, to writing faster with Smart Compose, to accelerating data entry

with Sheets Smart Fill, Office editing brings Google Workspace functionality

to your Office files. And we recently extended Office editing to the Docs,

Sheets, and Slides mobile apps as well, so you can easily work on Office

files on the go. Starting today, you can also open Office files for

editing directly from a Gmail attachment, further simplifying your

workflows.

‘Smellicopter’ uses a live moth antenna to hunt for scents

“From a robotics perspective, this is genius,” says coauthor and co-advisor

Sawyer Fuller, assistant professor of mechanical engineering. “The classic

approach in robotics is to add more sensors, and maybe build a fancy

algorithm or use machine learning to estimate wind direction. It turns out,

all you need is to add a fin.” Smellicopter doesn’t need any help from the

researchers to search for odors. The team created a “cast and surge”

protocol for the drone that mimics how moths search for smells. Smellicopter

begins its search by moving to the left for a specific distance. If nothing

passes a specific smell threshold, Smellicopter then moves to the right for

the same distance. Once it detects an odor, it changes its flying pattern to

surge toward it. Smellicopter can also avoid obstacles with the help of four

infrared sensors that let it measure what’s around it 10 times each second.

When something comes within about eight inches (20 centimeters) of the

drone, it changes direction by going to the next stage of its cast-and-surge

protocol. “So if Smellicopter was casting left and now there’s an obstacle

on the left, it’ll switch to casting right,” Anderson says.
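
The cast-and-surge behavior described above can be sketched as a small state machine. This is an illustrative sketch only, not the researchers' code: the `Stage` names, the `next_stage` function, its parameters, the choice to check obstacles before odor, and the rule that a surge with no odor falls back to casting left are all assumptions made for the example. Only the left-then-right casting, the odor threshold, and the roughly 20-centimeter obstacle distance come from the description.

```python
from enum import Enum


class Stage(Enum):
    CAST_LEFT = "cast_left"
    CAST_RIGHT = "cast_right"
    SURGE = "surge"


# Assumed stage order used when the drone has to move on to the next stage.
NEXT_STAGE = {
    Stage.CAST_LEFT: Stage.CAST_RIGHT,
    Stage.CAST_RIGHT: Stage.CAST_LEFT,
    Stage.SURGE: Stage.CAST_LEFT,  # assumption: fall back to casting left
}


def next_stage(stage, odor_level, odor_threshold, obstacle_cm, cast_done,
               clearance_cm=20.0):
    """Return the stage to fly next, given the latest sensor readings.

    All parameter names are hypothetical; clearance_cm mirrors the
    "about 20 centimeters" figure in the text.
    """
    # An obstacle inside the clearance distance forces the next stage.
    if obstacle_cm < clearance_cm:
        return NEXT_STAGE[stage]
    # A reading that passes the smell threshold triggers a surge.
    if odor_level > odor_threshold:
        return Stage.SURGE
    # No odor: reverse the cast once the set distance has been covered,
    # or resume casting if a surge has lost the scent.
    if cast_done or stage is Stage.SURGE:
        return NEXT_STAGE[stage]
    # Otherwise keep flying the current cast.
    return stage
```

For instance, `next_stage(Stage.CAST_LEFT, 0.0, 0.5, 10.0, False)` returns `Stage.CAST_RIGHT`, matching the quoted case of an obstacle appearing on the left while casting left.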

Tiny four-bit computers are now all you need to train AI

So what does 4-bit training mean? Well, to start, we have a 4-bit computer,

and thus 4 bits of complexity. One way to think about this: every single

number we use during the training process has to be one of 16 whole numbers

between -8 and 7, because these are the only numbers our computer can

represent. That goes for the data points we feed into the neural network,

the numbers we use to represent the neural network, and the intermediate

numbers we need to store during training. So how do we do this? Let’s first

think about the training data. Imagine it’s a whole bunch of black-and-white

images. Step one: we need to convert those images into numbers, so the

computer can understand them. We do this by representing each pixel in terms

of its grayscale value—0 for black, 1 for white, and the decimals between

for the shades of gray. Our image is now a list of numbers ranging from 0 to

1. But in 4-bit land, we need it to range from -8 to 7. The trick here is to

linearly scale our list of numbers, so 0 becomes -8 and 1 becomes 7, and the

decimals map to the integers in the middle.
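
The linear scaling step above can be written in a few lines. This is a minimal sketch, assuming rounding to the nearest integer (the text only says the decimals map to the integers in the middle); the function name `quantize_4bit` is made up for the example.

```python
def quantize_4bit(pixels):
    """Linearly map grayscale values in [0, 1] to integers in [-8, 7]."""
    levels = []
    for p in pixels:
        if not 0.0 <= p <= 1.0:
            raise ValueError(f"grayscale value out of range: {p}")
        # Stretch [0, 1] onto the 15 steps between -8 and 7, then shift.
        # Rounding to the nearest integer is an assumption of this sketch.
        levels.append(round(p * 15) - 8)
    return levels


print(quantize_4bit([0.0, 0.25, 1.0]))  # prints [-8, -4, 7]
```

Black (0) lands on -8 and white (1) on 7, so every pixel fits in one of the 16 values a 4-bit number can hold.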

How can the cloud industry adapt to a post-COVID world?

Technology will play a major part in instigating the changes needed in

future, with a key role to play for many of the firms that have enjoyed

success during the pandemic. While demand for software such as video

conferencing platforms may not be as sky-high as it was at the beginning of

the pandemic, Wrenn argues the next big step is how cloud companies can eat

further into the market share enjoyed by the traditional telephone industry.

“More and more businesses are using Microsoft Teams or Zoom to interact,” he

explains, “when previously they would have used conference lines or even

called a person directly due to it being more convenient. Cloud providers

need to think about how they can make the most of this opportunity as the

way in which people interact changes.” To some extent, we should all

consider ourselves lucky the global pandemic happened when it did, given

that cloud computing has only recently become as advanced as it is

now. Thus, rather than ‘profiting from the pandemic’, this period has been

the making of the industry. After all, “cloud storage, processing, and

compute facilities are already set up, and ready to expand easily and

automatically, as and when enterprises need,” according to Royston, who

claims this wouldn’t have been the case ten to 15 years ago.

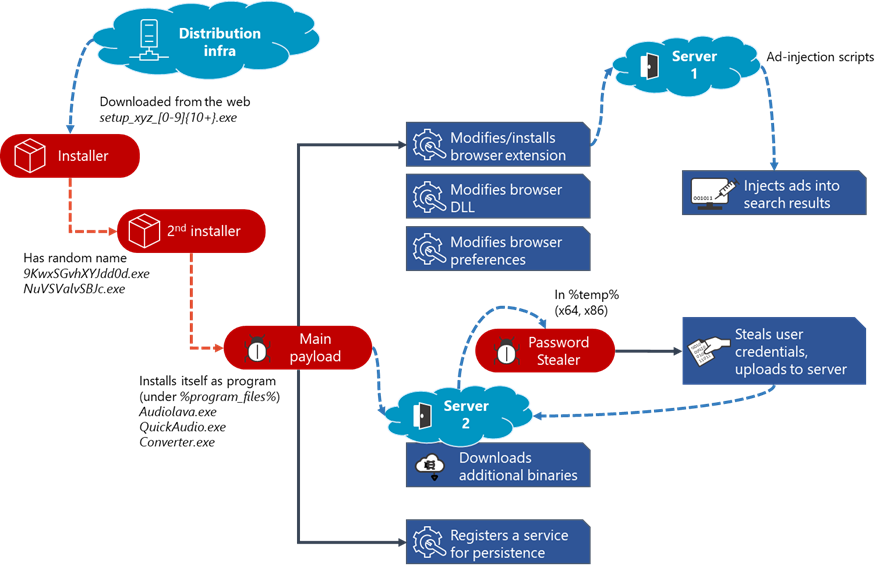

Feds: K-12 Cyberattacks Dramatically on the Rise

“Unfortunately, K-12 education institutions are continuously bombarded with

ransomware attacks, as cybercriminals are aware they are easy targets

because of limited funding and resources,” said James McQuiggan, security

awareness advocate at KnowBe4, via email. “The U.S. government is aware of

the growing need to protect the schools and has put forth efforts to provide

the proper tools for education institutions. A bill has been introduced

called the K-12 Cybersecurity Act of 2019, which unfortunately has not been

passed yet. This type of action by the government will start the process of

protecting school districts from ransomware attacks.” Meanwhile, other

malware types are being used in attacks on schools – with ZeuS and Shlayer

the most prevalent. ZeuS is a banking trojan targeting Microsoft Windows

that’s been around since 2007, while Shlayer is a trojan downloader and

dropper for MacOS malware. These are primarily distributed through malicious

websites, hijacked domains and malicious advertising posing as a fake Adobe

Flash updater, the agencies warned. Social engineering in general is on the

rise in the edtech sector, they added, against students, parents, faculty,

IT personnel or other individuals involved in distance learning.

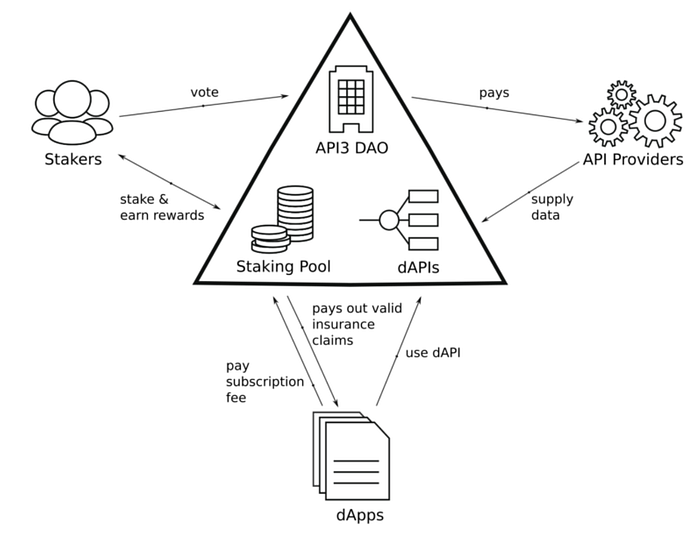

The Security Operations Center is an integrated unit dealing with

high-quality IT security operations. The primary functions of a Security Operations

Center are to monitor, prevent, detect, investigate, and respond to

various cyber threats. SOC teams monitor and protect an organization’s

assets like intellectual property, personnel data, business systems, and

brand integrity. The SOC team plays an important role in organizations by

defending them against incidents and intrusions — regardless of source,

time, or the type of attack — through their 24/7 monitoring. ... An

increase in the usage of cloud-based solutions across SMEs is the crucial

factor driving demand in the global SOC-as-a-Service market. The adoption of

systems like machine learning, artificial intelligence, and blockchain

technologies for cyber defense has further opened new growth avenues in

this market. There is an increased demand for Security Operations Center

analysts across North America, Europe, the Middle East, Africa, Asia

Pacific, and Latin America. Out of these, North America holds a dominant

share in this market.

Australian intelligence community seeking to build a top-secret cloud

The project does not involve agencies collecting any new data. Nor does it expand their remit. All existing regulatory arrangements still apply. Rather, the NIC hopes that a community cloud will improve its ability to analyse data and detect threats, as well as improve collaboration and data sharing. "Top Secret" is the highest level in Australia's Protective Security Policy Framework. It represents material which, if released, would have "catastrophic business impact" or cause "exceptionally grave damage to the national interest, organisations or individuals". Until very recently the only major cloud vendor to handle top secret data, at least to the equivalent standards of the US government, was Amazon Web Services (AWS). AWS in 2017 went live with an AWS Secret Region targeted towards the US intelligence community, including the CIA, and other government agencies working with secret-level datasets. In Australia, AWS was certified to the protected level, two classification levels down from top secret. The "protected" certification came via the ASD's Certified Cloud Services List (CCSL), which was shuttered in June, leaving certifications gained through the CCSL process void.

Quote for the day:

"Leadership is liberating people to do what is required of them in the most effective and humane way possible." -- Max DePree