5 signs your agile development process must change

Agile teams figure out fairly quickly that polluting a backlog with every

idea, request, or technical issue makes it difficult for the product owner,

scrum master, and team to work efficiently. If teams maintain a large backlog

in their agile tools, they should use labels or tags to filter the near-term

versus longer-term priorities. An even greater challenge is when teams adopt

just-in-time planning and prioritize, write, review, and estimate user stories

during the days leading up to sprint start. It’s far more difficult to develop a

shared understanding of the requirements under time pressure. Teams are less

likely to consider architecture, operations, technical standards, and other

best practices when there isn’t sufficient time dedicated to planning. What’s

worse is that it’s hard to accommodate downstream business processes, such as

training and change management, if business stakeholders don’t know the target

deliverables or medium-term roadmap. There are several best practices to plan

backlogs, including continuous agile planning, Program Increment planning, and

other quarterly planning practices. These practices help multiple agile teams

brainstorm epics, break down features, confirm dependencies, and prioritize

user story writing.

How to Align DevOps with Your PaaS Strategy

Some organizations are adopting a multi-PaaS strategy, which typically takes

the form of developing an application on one PaaS and deploying it to multiple

public clouds. However, not all PaaS provide that capability. One reason to

deploy to multiple clouds is to increase application reliability. Despite SLAs,

outages may occur from time to time. Alternatively, different applications may

require the use of different PaaS because the PaaS services vary from vendor

to vendor. However, more vendors mean more complexity to manage. "Tomorrow,

your business transaction is going to be going over SaaS services provided by

multiple vendors so I might have to orchestrate across multiple clouds,

multiple vendors to complete my business transaction," said Chennapragada.

"Tying myself [to] a vendor is going to constrain me from orchestrating, so

our clients are thinking of a more cloud-agnostic, vendor-agnostic solution."

One of the general concerns some organizations have is whether they have the

expertise to manage everything themselves, which has led to a huge

proliferation of managed service providers. That way, DevOps teams have more

time to focus on product development and delivery. PaaS expertise can be

difficult to find because PaaS skills are niche skills.

Low Code: CIOs Talk Challenges and Potential

CIO viewpoints honestly differed. For example, CIO Milos Topic suggests “it is

still early in experimentation in our environment, but it is mostly useful in

automating and provisioning repetitive processes and modules. But it is

essential to stress that low code doesn't mean hands off.” Meanwhile, CIO

David Seidl says “the adoption is big because of the ability to make more

responsive changes. The trade-off is interesting. The open question is: can

you remove one of the cost layers (maintaining code) and trade it for business

logic and platform maintenance? And how do you minimize platform maintenance

and could cloud services help. The big question is: do we consider business

logic code? It can be just as complex to build and debug complex business

logic in a drag and drop as traditional code. So, you win on the

UI/layout/integration components, but core code remains an open question.”

However, CIO Deb Gildersleeve suggests that “low code gives business users

without technical coding expertise the tools to solve their problems. It takes

the burden outside of IT but can be provided with guardrails for security

governance.”

Security Think Tank: Integration between SIEM/SOAR is critical

Security operations teams will have a playbook which details the decisions and

actions to be taken from detection to containment. This may suggest actions to

be taken on detection of a suspicious event through escalation and possible

responses. SOAR can automate this, taking autonomous decisions that support

the investigation, drawing in threat intelligence and presenting the results

to the analyst with recommendations for further action. The analyst can then

select the appropriate action, which would be carried out automatically, or

the whole process can be automated. For example, the detection of a possible

command and control transmission could be followed up in accordance with the

playbook to gather relevant threat intelligence and information on which hosts

are involved and other related transmissions. The analyst would then be

notified and given the option to block the transmissions and isolate the hosts

involved. Once selected, the actions would be carried out automatically.

Throughout the process, ticketing and collaboration tools would keep the team

and relevant stakeholders informed and generate reports as required.
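
The command-and-control example above can be sketched in a few lines of Python. This is only an illustration of the playbook flow (detect, enrich, recommend, let the analyst select, then act), not any SOAR product's API. The `THREAT_INTEL` table, the `Case` class, the `run_playbook` function, and the `approve` callback are all hypothetical names introduced here, and the addresses come from documentation ranges.

```python
from dataclasses import dataclass, field

# Hypothetical threat-intelligence lookup; a real deployment would query a feed.
THREAT_INTEL = {"203.0.113.7": "known command-and-control server"}


@dataclass
class Case:
    destination: str                                  # address flagged by detection
    intel: str = "no match"
    hosts: list = field(default_factory=list)         # internal hosts involved
    recommended: list = field(default_factory=list)   # actions offered to the analyst
    executed: list = field(default_factory=list)      # actions actually carried out
    timeline: list = field(default_factory=list)      # stands in for ticketing updates


def run_playbook(destination, connections, approve):
    """Follow the playbook for a suspected command-and-control transmission.

    `connections` is a list of (source_host, destination) pairs.
    `approve` receives the recommended actions and returns the ones to run;
    passing a function that returns everything automates the whole process.
    """
    case = Case(destination)
    case.timeline.append("detected")

    # Enrichment: gather threat intelligence and find every host involved.
    case.intel = THREAT_INTEL.get(destination, "no match")
    case.hosts = sorted({src for src, dst in connections if dst == destination})
    case.timeline.append("enriched")

    # Recommendation: present the results to the analyst with proposed actions.
    if case.intel != "no match":
        case.recommended = [f"block {destination}"]
        case.recommended += [f"isolate {host}" for host in case.hosts]
    case.timeline.append("analyst notified")

    # Response: whatever the analyst selects is carried out automatically.
    selected = approve(case.recommended)
    case.executed = [action for action in case.recommended if action in selected]
    case.timeline.append("actions executed" if case.executed else "no action taken")
    return case


if __name__ == "__main__":
    seen = [("10.0.0.5", "203.0.113.7"), ("10.0.0.9", "203.0.113.7")]
    result = run_playbook("203.0.113.7", seen, approve=lambda actions: actions)
    print(result.executed)
    print(result.timeline)
```

Keeping the analyst's decision behind a single callback is one way to move between analyst-in-the-loop and fully automated handling without changing the rest of the playbook.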

Low-Code To Become Commonplace in 2021

The citizen developer concept has been gathering marketing steam, but it might

not be just hype. Now, data suggests low-code tools are actually opening doors

for such non-developers. Seventy percent of companies said non-developers in

their company already build tools for internal business use, and nearly 80%

expect to see more of this trend in 2021. It should be noted that low-code

and no-code do not seek to replace all engineering talent, but rather to free

them up to engage in more complex tasks. “With low-code, you free up your

engineers to work on harder problems, instead of having them work on basic

things,” said Arisa Amano, CEO of Internal. She believes this could translate

into more innovation companywide. Surprisingly, bringing non-traditional

engineers into the development fold is not being met with ambivalence—69.2% of

respondents foresee that citizen developers will positively affect engineering

teams, with the rest primarily exhibiting a neutral reaction. The costs of

internal security threats are high. Breaches could decrease customer trust,

harm brand reputation and lead to escalating legal fees. With cyberattacks a

prevalent concern, cybersecurity must come back in style.

People want data privacy but don’t always know what they’re getting

In practice, differential privacy isn’t perfect. The randomization process

must be calibrated carefully. Too much randomness will make the summary

statistics inaccurate. Too little will leave people vulnerable to being

identified. Also, if the randomization takes place after everyone’s unaltered

data has been collected, as is common in some versions of differential

privacy, hackers may still be able to get at the original data. When

differential privacy was developed in 2006, it was mostly regarded as a

theoretically interesting tool. In 2014, Google became the first company to

start publicly using differential privacy for data collection. Since then, new

systems using differential privacy have been deployed by Microsoft, Google and

the U.S. Census Bureau. Apple uses it to power machine learning algorithms

without needing to see your data, and Uber turned to it to make sure their

internal data analysts can’t abuse their power. Differential privacy is often

hailed as the solution to the online advertising industry’s privacy issues by

allowing advertisers to learn how people respond to their ads without tracking

individuals. But it’s not clear that people who are weighing whether to share

their data have clear expectations about, or understand, differential privacy.
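
The calibration trade-off described above can be made concrete with a small sketch. It uses the Laplace mechanism, one common way to add calibrated noise to a count, and is not necessarily the method any of the companies mentioned use. The function names, the sample ages, and the `epsilon` values are assumptions chosen for illustration; a smaller `epsilon` means more randomness and stronger privacy, a larger one means less of both.

```python
import random


def laplace_noise(scale, rng):
    # The difference of two exponential samples with mean `scale`
    # follows a Laplace distribution centred on zero with that scale.
    return rng.expovariate(1 / scale) - rng.expovariate(1 / scale)


def private_count(values, predicate, epsilon, rng):
    """Return a noisy count of the values that satisfy `predicate`."""
    true_count = sum(1 for value in values if predicate(value))
    # Adding or removing one person changes a count by at most 1,
    # so the noise scale is 1 / epsilon.
    return true_count + laplace_noise(1.0 / epsilon, rng)


def mean_abs_error(epsilon, trials=2000, seed=0):
    """Average distance between the noisy and the true count."""
    rng = random.Random(seed)
    ages = [23, 35, 41, 52, 29, 67, 38, 45]   # made-up sample data

    def is_forty_plus(age):
        return age >= 40

    true_count = sum(1 for age in ages if is_forty_plus(age))
    total = 0.0
    for _ in range(trials):
        total += abs(private_count(ages, is_forty_plus, epsilon, rng) - true_count)
    return total / trials


if __name__ == "__main__":
    for epsilon in (0.1, 1.0, 10.0):
        print(f"epsilon={epsilon}: mean error {mean_abs_error(epsilon):.2f}")
```

Running it shows the average error shrinking as `epsilon` grows, which is the accuracy side of the trade-off; the privacy side is that a large `epsilon` leaves each published count close enough to the truth to reveal more about individuals.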

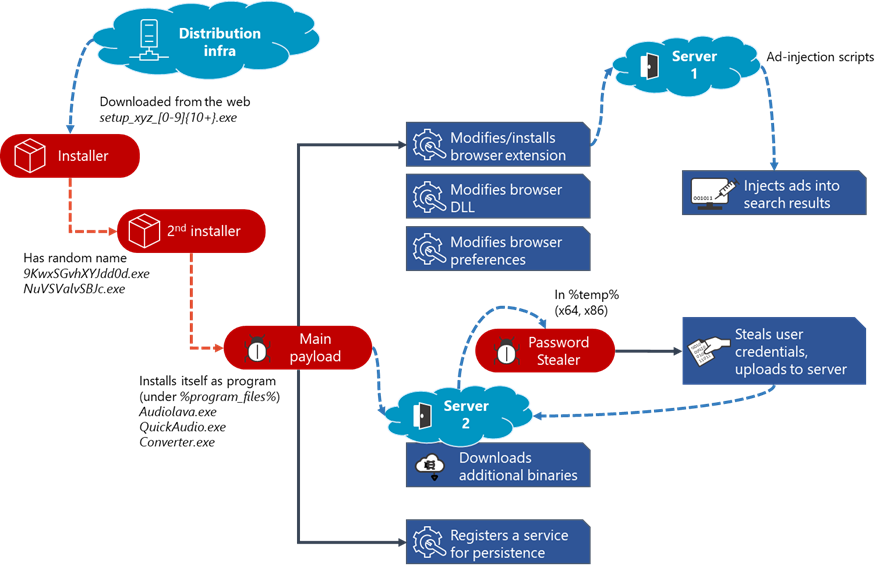

Widespread malware campaign seeks to silently inject ads into search results

The malware makes changes to certain browser extensions. On Google Chrome, the

malware typically modifies “Chrome Media Router”, one of the browser’s default

extensions, but we have seen it use different extensions. Each extension on

Chromium-based browsers has a unique 32-character ID that users can use to

locate the extension on machines or on the Chrome Web store. On Microsoft Edge

and Yandex Browser, it uses IDs of legitimate extensions, such as

“Radioplayer”, to masquerade as legitimate. As it is rare for most of these

extensions to be already installed on devices, it creates a new folder with

this extension ID and stores malicious components in this folder. On Firefox,

it appends a folder with a Globally Unique Identifier (GUID) to the browser

extension. ... Despite targeting different extensions on each browser, the

malware adds the same malicious scripts to these extensions. In some cases,

the malware modifies the default extension by adding seven JavaScript files

and one manifest.json file to the target extension’s file path. In other

cases, it creates a new folder with the same malicious components. These

malicious scripts connect to the attacker’s server to fetch additional

scripts, which are responsible for injecting advertisements into search

results.
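
For readers who want to look at the extension folders described above, here is a minimal sketch that lists them. It assumes Chromium's convention of spelling the 32-character ID with the letters a through p, and it takes the extensions directory as an argument because its location varies by browser, profile, and operating system. The function names are hypothetical, and the script only lists files; it does not decide whether anything is malicious.

```python
import re
from pathlib import Path

# Assumption: Chromium extension IDs are 32 characters drawn from a-p.
EXTENSION_ID = re.compile(r"[a-p]{32}")


def find_extension_dirs(extensions_root):
    """Return the names of subfolders that look like extension IDs."""
    root = Path(extensions_root)
    return sorted(
        entry.name
        for entry in root.iterdir()
        if entry.is_dir() and EXTENSION_ID.fullmatch(entry.name)
    )


def list_script_files(extension_dir):
    """Return relative paths of JavaScript files and manifest.json files."""
    base = Path(extension_dir)
    return sorted(
        path.relative_to(base).as_posix()
        for path in base.rglob("*")
        if path.is_file() and (path.suffix == ".js" or path.name == "manifest.json")
    )
```

Comparing the output for a default extension against a known-clean installation is one way to spot added JavaScript files or a replaced manifest.json.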

Penetration Testing: A Road Map for Improving Outcomes

Traditional penetration testing is a core element of many organizations'

cybersecurity efforts because it provides a reliable measurement of the

organization's security and defense measures. However, because a client can

classify assets as out of scope, the pen test may not give an accurate read on

the organization's full security posture. Because the pen-testing approach,

authorization process, and testing ranges are defined in advance, these

assessments may not measure an organization's true ability to identify and act

on suspicious activities and traffic. Ultimately, placing restrictions on a

test's scope or duration can harm the tested organization. In the real world,

neither time nor scope is a consideration for attackers, meaning the

results of such a test are not entirely reliable. Incorporating

objective-oriented penetration testing can improve typical pen-testing systems

and, in turn, enhance an organization's security posture and incident

response, as well as limit its risk of exposure. The first step is to

agree on attackers' likely objectives and a reasonable time frame. For

example, consider ways attackers could access and compromise customer data or

gain access to a high-security network or physical location.

Facial recognition's fate could be decided in 2021

Several lawsuits filed in 2020 that could see resolution next year may also

have an impact on facial recognition. Clearview AI is facing multiple

lawsuits about its data collection. The company collected billions of public

images from social networks including YouTube, Facebook and Twitter. All of

those companies have sent a cease-and-desist letter to Clearview AI, but the

company maintains that it has a First Amendment right to take these images.

That argument is being challenged by Vermont's attorney general, the American

Civil Liberties Union and two lawsuits in Illinois. Clearview AI didn't

respond to requests for comment. The Clearview decision could play a role in

facial recognition's future. The industry relies on hordes of images of

people, which it gets in many ways. An NBC News report in 2019 called it a

"dirty little secret" that millions of photos online have been getting

collected without people's permission to train facial recognition

algorithms. "We're likely to also see growing amounts of litigation

against schools, businesses and other public accommodations under a new wave

of biometric privacy laws, including New York City's forthcoming ban on

commercial biometric surveillance," said the Surveillance Technology Oversight

Project's Cahn.

Hacking Group Dropping Malware Via Facebook, Cloud Services

While the newly discovered DropBook backdoor uses fake Facebook accounts for its

command-and-control operations, the report notes that both SharpStage and

DropBook utilize Dropbox to exfiltrate the data stolen from their targets, as

well as for storing espionage tools. Once a device is

compromised, the SharpStage backdoor can capture screenshots, check for Arabic

language presence on the victim's device for precision targeting, and download

and execute additional components. DropBook, on the other hand, is used for

reconnaissance and to deploy shell commands, the report notes. The attackers use

MoleNet to collect system information from the compromised devices, communicate

with the command-and-control servers and maintain persistence, according to the

report. Besides the new backdoor components, researchers note the hackers

deployed an open-source remote access Trojan called Quasar, which was previously

linked to a Molerats campaign in 2017. Cybereason researchers note that once the

DropBook malware is on the victims' devices, it begins its operation by fetching

a token from a post on a fake Facebook account.

Quote for the day:

"Example has more followers than reason. We unconsciously imitate what pleases us, and approximate to the characters we most admire." -- Christian Nestell Bovee
