The Past, Present, and Future of API Gateways

AJAX (Asynchronous JavaScript and XML) development techniques became ubiquitous during this time. By decoupling data interchange from presentation, AJAX created much richer experiences for end users. This architecture also created much “chattier” clients, as these clients would constantly send and receive data from the web application. In addition, ecommerce was starting to take off during this era, and secure transmission of credit card information became a major concern for the first time. Netscape introduced Secure Sockets Layer (SSL) -- which later evolved into Transport Layer Security (TLS) -- to ensure secure connections between the client and server. These shifts in networking -- encrypted communications and many requests over longer-lived connections -- drove an evolution of the edge from the standard hardware/software load balancer to more specialized application delivery controllers (ADCs). ADCs included a variety of functionality for so-called application acceleration, including SSL offload, caching, and compression. This increase in functionality meant an increase in configuration complexity.

Adapting Cloud Security and Data Management Under Quarantine

The current state of affairs is not something envisioned by many business continuity plans, says Wendy Pfeiffer, CIO of Nutanix. Most organizations are operating in a hybrid mode, she says, with infrastructure and services running in multiple clouds. This can include private clouds, SaaS apps, Amazon Web Services, Microsoft Azure, and Google Cloud Platform. Though this specific situation may not have been planned for, the cloud allows for unexpected needs to scale and pivot, Pfeiffer says. “Maybe we envisioned a region being inaccessible but not necessarily every region all at once.” Normally it can be easy to declare standards within IT, she says, and instrument an environment to operate in line with those standards to maintain control and security. Losing control of that environment under quarantines can be problematic. “If everyone suddenly pivots to work from home, then we no longer control the devices people use to access the network,” Pfeiffer says. Such disruption, she says, makes it difficult to control performance, security, and the user experience.

While 78 per cent of organisations said they are using more than 50 discrete cybersecurity products to address security issues, 37 per cent used more than 100 cybersecurity products. Organisations who discovered misconfigured cloud services experienced 10 or more data loss incidents in the last year, according to the report. IT professionals have concerns about cloud service providers. Nearly 80 per cent are concerned that cloud service providers they do business with will become competitors in their core markets. "Seventy-five per cent of IT professionals view public cloud as more secure than their own data centres, yet 92 per cent of IT professionals do not trust their organization is well prepared to secure public cloud services," the findings showed. Nearly 80 per cent of IT professionals said that recent data breaches experienced by other businesses have increased their organization's focus on securing data moving forward.

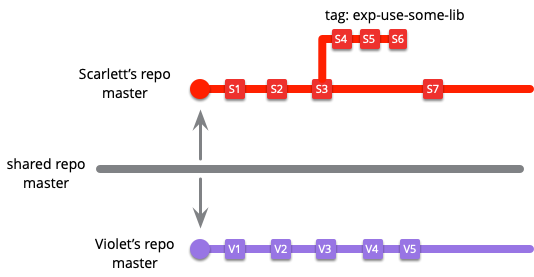

Continuous Security Through Developer Empowerment

Before DevOps kicked in, app performance monitoring (APM) was owned by IT, who used synthetic measurements from many points around the world to assess and monitor how performant an application was. These solutions were powerful, but their developer experience was horrible. They were expensive, which limited the tests developers could run. They excelled at explaining the overall state by aggregating tests, but offered little value to a developer trying to troubleshoot a performance problem. As a result, developers rarely used them. Then New Relic came on the scene, introducing a different approach to APM. Its tools were free or cheap to start with, making them accessible to all dev teams. They used instrumentation to offer rich results in developer terms (call stacks, lines of code), making them better for fixing problems. This new approach revolutionized the APM industry, embedded performance monitoring into dev practices, and made the web faster. The same needs to happen for application security.

Data security guide: Everything you need to know

The move to the cloud presents an additional threat vector that must be well understood with respect to data security. The 2019 SANS State of Cloud Security survey found that 19% of survey respondents reported an increase in unauthorized access by outsiders into cloud environments or cloud assets, up 7% since 2017. Ransomware and phishing are also on the rise and are considered major threats. Companies must secure data so that it cannot leak out via malware or social engineering. Breaches can be costly events that result in multimillion-dollar class action lawsuits and victim settlement funds. If companies need a reason to invest in data security, they need only consider the value placed on personal data by the courts. Sherri Davidoff, author of Data Breaches: Crisis and Opportunity, listed five factors that increase the risk of a data breach: access; amount of time data is retained; the number of existing copies of the data; how easy it is to transfer the data from one location to another -- and to process it; and the perceived value of the data by criminals.

This new, unusual Trojan promises victims COVID-19 tax relief

The malware is unusual as it is written for Node.js, a JavaScript runtime used primarily for web server development. "However, the use of an uncommon platform may have helped evade detection by antivirus software," the team notes. The Java downloader, obfuscated via Allatori in the lure document, grabs the Node.js malware file -- either "qnodejs-win32-ia32.js" or "qnodejs-win32-x64.js" -- alongside a file called "wizard.js." Either a 32-bit or 64-bit version of Node.js is downloaded depending on the Windows system architecture on the target machine. The job of wizard.js is to facilitate communication between QNodeService and its command-and-control (C2) server, as well as to maintain persistence through the creation of Run registry keys. After executing on an impacted system, QNodeService is able to download, upload, and execute files; harvest credentials from the Google Chrome and Mozilla Firefox browsers; and perform file management. In addition, the Trojan can steal system information including IP address and location, download additional malware payloads, and transfer stolen data to the C2.

Quantum computing analytics: Put this on your IT roadmap

"There are three major areas where we see immediate corporate engagement with quantum computing," said Christopher Savoie, CEO and co-founder of Zapata Quantum Computing Software Company, a quantum computing solutions provider backed by Honeywell. "These areas are machine learning, optimization problems, and molecular simulation." Savoie said quantum computing can bring better results in machine learning than conventional computing because of its speed. This rapid processing of data enables a machine learning application to consume large amounts of multi-dimensional data that can generate more sophisticated models of a particular problem or phenomenon under study. Quantum computing is also well suited for solving problems in optimization. "The mathematics of optimization in supply and distribution chains is highly complex," Savoie said. "You can optimize five nodes of a supply chain with conventional computing, but what about 15 nodes with over 85 million different routes? Add to this the optimization of work processes and people, and you have a very complex problem that can be overwhelming for a conventional computing approach."

COBIT Tool Kit Enhancements

The value of this tool is that it provides a convenient means of quickly assessing and assigning relevant roles to practices across the 40 COBIT objectives. COBIT promotes using a common language and common understanding among practitioners. Common terminology facilitates communication and reduces opportunities for error. RACI charts and the new COBIT Tool Kit spreadsheet provide guidance that helps practitioners extract the COBIT practices relevant for each job role. Another benefit of compiling all practices into a single RACI chart is that metrics reporting can be better assessed. A user can filter all practices by the accountability of a single role, compare metrics reporting on those practices, and determine whether sufficient coverage has been created. An assessment of that type is not as effective when RACIs are developed at the higher, objective level. The new spreadsheet can be found in the complimentary COBIT 2019 Tool Kit. The tool kit is available on the COBIT page of the ISACA website.
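
The filter-then-compare step described above can be pictured with a few lines of Python. This is only an illustrative sketch, not the layout of the actual COBIT Tool Kit spreadsheet: the `Practice` record, the placeholder practice IDs ("P1" to "P3"), the role names, and the `has_metric` flag are all assumptions made up for the example.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class Practice:
    """One row of a combined RACI chart (hypothetical layout)."""
    practice_id: str          # placeholder ID, not a real COBIT practice
    raci: Dict[str, str]      # role -> "R", "A", "C", or "I"
    has_metric: bool          # whether a metric is reported for this practice


def accountable_for(practices: List[Practice], role: str) -> List[Practice]:
    """Return the practices for which `role` is marked Accountable."""
    return [p for p in practices if p.raci.get(role) == "A"]


def metric_coverage(practices: List[Practice], role: str) -> float:
    """Fraction of the role's accountable practices that report a metric."""
    owned = accountable_for(practices, role)
    if not owned:
        return 0.0
    return sum(p.has_metric for p in owned) / len(owned)


# Made-up sample data for illustration only.
practices = [
    Practice("P1", {"CIO": "A", "CISO": "R"}, has_metric=True),
    Practice("P2", {"CIO": "A"}, has_metric=False),
    Practice("P3", {"CISO": "A", "CIO": "I"}, has_metric=True),
]

print([p.practice_id for p in accountable_for(practices, "CIO")])  # ['P1', 'P2']
print(metric_coverage(practices, "CIO"))                           # 0.5
```

A coverage value well below 1.0 for a role would flag practices that the role is accountable for but that have no metrics reported against them.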

Build your own Q# simulator – Part 1: A simple reversible simulator

Simulators are a particularly versatile feature of the QDK. They allow you to perform various tasks on a Q# program without changing it. Such tasks include full state simulation, resource estimation, or trace simulation. The new IQuantumProcessor interface makes it very easy to write your own simulators and integrate them into your Q# projects. This blog post is the first in a series that covers this interface. We start by implementing a reversible simulator as a first example, which we extend in future blog posts. A reversible simulator can simulate quantum programs that consist only of classical operations: X, CNOT, CCNOT (Toffoli gate), or arbitrarily controlled X operations. Since a reversible simulator can represent the quantum state by assigning one Boolean value to each qubit, it can run even quantum programs that consist of thousands of qubits. This simulator is very useful for testing quantum operations that evaluate Boolean functions.
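
To make the "one Boolean value per qubit" idea concrete, here is a minimal sketch in Python. It does not use the QDK or the IQuantumProcessor interface; the class and method names are hypothetical and chosen only for illustration. Every supported gate reduces to an arbitrarily controlled X: flip the target bit if all control bits are set.

```python
from typing import Dict, Iterable


class ReversibleSimulator:
    """Tracks one Boolean per qubit; supports only X and controlled-X gates."""

    def __init__(self) -> None:
        self._state: Dict[int, bool] = {}

    def allocate(self, qubit: int) -> None:
        """Add a qubit in the classical state 0 (False)."""
        self._state[qubit] = False

    def controlled_x(self, controls: Iterable[int], target: int) -> None:
        """Flip `target` if every control qubit is currently 1."""
        controls = tuple(controls)
        if target in controls:
            raise ValueError("target must not also be a control")
        if all(self._state[c] for c in controls):
            self._state[target] = not self._state[target]

    def x(self, target: int) -> None:
        self.controlled_x((), target)

    def cnot(self, control: int, target: int) -> None:
        self.controlled_x((control,), target)

    def ccnot(self, control1: int, control2: int, target: int) -> None:
        self.controlled_x((control1, control2), target)

    def measure(self, qubit: int) -> bool:
        """Reading a classical bit is deterministic and non-destructive."""
        return self._state[qubit]


def majority(sim: ReversibleSimulator, a: int, b: int, c: int, out: int) -> None:
    """Compute MAJ(a, b, c) = ab XOR ac XOR bc into `out` (assumed to be 0)."""
    sim.ccnot(a, b, out)
    sim.ccnot(a, c, out)
    sim.ccnot(b, c, out)


sim = ReversibleSimulator()
for q in range(4):
    sim.allocate(q)
sim.x(0)
sim.x(2)
majority(sim, 0, 1, 2, 3)
print(sim.measure(3))  # True: two of the three inputs are set
```

Because each gate touches only a handful of dictionary entries, memory and time grow linearly with the number of qubits and gates, which is why a simulator of this kind can handle thousands of qubits.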

Diligent Engine: A Modern Cross-Platform Low-Level Graphics Library

This article describes Diligent Engine, a light-weight cross-platform graphics API abstraction layer that is designed to solve these problems. Its main goal is to take advantage of next-generation APIs such as Direct3D12 and Vulkan, while at the same time providing support for older platforms via Direct3D11, OpenGL and OpenGLES. Diligent Engine exposes a common C/C++ front-end for all supported platforms and provides interoperability with the underlying native APIs. It also supports integration with Unity and is designed to be used as a graphics subsystem in a standalone game engine, a Unity native plugin, or any other 3D application. ... As mentioned earlier, Diligent Engine follows next-gen APIs to configure the graphics/compute pipeline. One big Pipeline State Object (PSO) encompasses all required states (all shader stages, input layout description, depth stencil, rasterizer and blend state descriptions, etc.). This approach maps directly to Direct3D12/Vulkan, but is also beneficial for older APIs as it eliminates pipeline misconfiguration errors.
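
The benefit of a single pipeline state object can be illustrated with a language-neutral sketch. The Python below is not Diligent Engine's API (which is C/C++); every name in it is hypothetical. It only shows the general pattern: all state is bundled into one immutable description that is validated once at creation and bound as a unit, so a half-configured pipeline cannot reach a draw call.

```python
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass(frozen=True)
class PipelineStateDesc:
    """Hypothetical monolithic pipeline description (illustration only)."""
    vertex_shader: str
    pixel_shader: str
    input_layout: Tuple[str, ...]
    depth_test_enabled: bool = True
    cull_mode: str = "back"
    blend_enabled: bool = False

    def __post_init__(self) -> None:
        # Validate the whole configuration once, when the object is created.
        if not self.vertex_shader:
            raise ValueError("a vertex shader is required")
        if self.cull_mode not in ("none", "front", "back"):
            raise ValueError(f"unknown cull mode: {self.cull_mode!r}")


class DeviceContext:
    """Hypothetical context that binds a complete pipeline state in one call."""

    def __init__(self) -> None:
        self.current: Optional[PipelineStateDesc] = None

    def set_pipeline_state(self, pso: PipelineStateDesc) -> None:
        # All states change together; there is no way to set only one of them.
        self.current = pso


pso = PipelineStateDesc(
    vertex_shader="cube_vs",
    pixel_shader="cube_ps",
    input_layout=("position", "uv"),
)
ctx = DeviceContext()
ctx.set_pipeline_state(pso)
print(ctx.current.cull_mode)  # back
```

With separate setters for each state, as in older APIs, a stale blend or rasterizer setting left over from a previous draw can silently leak into the next one; bundling the states removes that class of error.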

Quote for the day:

"Different times need different types of leadership." -- Park Geun-hye