Is it time to believe the blockchain hype?

While much criticised at the outset and subsequently watered down to appease regulators, Libra has also triggered discussion around central bank digital currencies (CBDCs), with almost every major central bank announcing its intention to explore them. Among the numerous benefits of CBDCs, the most oft-repeated is that they address the decline in the use of cash, something which has accelerated this year with more shopping taking place online and bricks-and-mortar retailers ceasing to accept paper money. According to a recent report by campaign group Positive Money, the disappearance of cash would in effect privatise money, with commercial banks holding an oligopoly over digital money and payment systems. Such a situation would also prove damaging for the unbanked population, which still totals an estimated 1.7 billion people worldwide. While these people lack access to the current financial system because they cannot prove their identities, they could use digital currencies provided they have a mobile phone and an internet connection.

Banks failing to protect customers from coronavirus fraud

A paltry 13 of the 64 banks accredited by the UK government for its Coronavirus Business Interruption Loan Scheme (CBILS) have bothered to implement the strictest level of Domain-based Message Authentication, Reporting and Conformance (Dmarc) protection, which stops cyber criminals from spoofing their identity in phishing attacks. This means that 80% of accredited banks are unable to say they are proactively protecting their customers from fraudulent emails, and 61% have no published Dmarc record whatsoever, according to Proofpoint, a cloud security and compliance specialist. Spoofing a domain to pose as a government body or another respected institution, such as a financial services provider, is a highly popular method cyber criminals use to compromise their targets. With this technique, they can make an illegitimate email appear to come from a completely legitimate address, which neatly gets around one of the most obvious ways people have of spotting a phishing email: a sender address that does not match the institution at all.
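A Dmarc record is a DNS TXT record published at `_dmarc.<domain>`, made of semicolon-separated `tag=value` pairs; its `p=` tag tells receiving mail servers what to do with mail that fails authentication (`none`, `quarantine` or `reject`). The minimal Python sketch below parses such a record string and classifies it. It assumes that Proofpoint's "strictest level" corresponds to `p=reject` applied to all mail (the `pct` tag, which defaults to 100); it does not perform the DNS lookup itself, and the function names and sample records are hypothetical.

```python
from __future__ import annotations


def parse_dmarc(txt: str) -> dict[str, str]:
    """Split a DMARC TXT record into lower-cased tag names and their values."""
    tags: dict[str, str] = {}
    for part in txt.split(";"):
        part = part.strip()
        if not part:
            continue  # tolerate a trailing semicolon
        name, sep, value = part.partition("=")
        if not sep:
            raise ValueError(f"malformed tag: {part!r}")
        tags[name.strip().lower()] = value.strip()
    if tags.get("v") != "DMARC1":
        raise ValueError("not a DMARC record (expected v=DMARC1)")
    return tags


def dmarc_policy(txt: str | None) -> str:
    """Return 'missing', 'none' (monitor only), 'quarantine' or 'reject'."""
    if txt is None:
        return "missing"  # nothing published at _dmarc.<domain>
    policy = parse_dmarc(txt).get("p", "").lower()
    if policy not in {"none", "quarantine", "reject"}:
        raise ValueError(f"invalid or missing p= tag: {policy!r}")
    return policy


def fully_enforced(txt: str | None) -> bool:
    """True only for p=reject applied to all mail (pct defaults to 100)."""
    if txt is None or dmarc_policy(txt) != "reject":
        return False
    return int(parse_dmarc(txt).get("pct", "100")) == 100


if __name__ == "__main__":
    # Hypothetical records for illustration, not taken from any real bank.
    for record in [None,
                   "v=DMARC1; p=none; rua=mailto:reports@example.com",
                   "v=DMARC1; p=reject"]:
        print(dmarc_policy(record), fully_enforced(record))
```

A `p=none` record only collects reports, which is why a bank can publish Dmarc yet still not block spoofed mail.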

Flattening The Curve On Cybersecurity Risk After COVID-19

This is an opportunity but also a big risk for them. Many of them know their digital business system is vital to helping them navigate this change. But periods of disruption, whether driven by good or bad circumstances, present opportunities for hackers. So the cybersecurity risk gap I talked about earlier, between threats and defensibility, isn't going to close on its own; that curve isn't flattening. New cybersecurity risks will continue to emerge, and defensive capabilities will have to keep trying to stay ahead. A common question that a lot of board members ask is, "Are we spending the right amount on cybersecurity?" That's the wrong question. The right question is, "What do we need to protect, what's the value of what we are trying to protect, and how secure is it for what we're spending?" That's their challenge heading into what could be massive waves of systemic change. The business value that their digital business systems drive is only increasing, and the threats to that value are only going to go up.

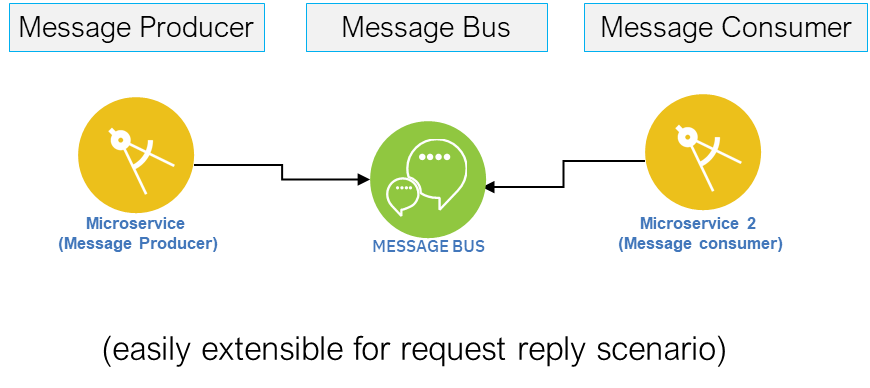

Architecture Decision for Choosing Right Digital Integration Patterns – API vs. Messaging vs. Event

A direct Application Programming Interface (API) allows two heterogeneous applications to talk to each other. For example, each time we use an app on a mobile device, the app is likely making several API calls to various digital services. Direct APIs can be designed to be blocking (synchronous) or non-blocking (asynchronous). Of these, non-blocking APIs are preferred because they ensure resources are not tied up while the consumer waits for a response from the provider. Non-blocking APIs also help create an integration model in which API consumers and API providers can scale independently ... A message, by contrast, is fundamentally an asynchronous mode of communication between two applications: it is an indirect invocation, so the two applications never connect to each other directly. The messaging technique therefore decouples the consumer and provider, and removes the need for the provider to be available at exactly the same time as the consumer. It also addresses the provider's scalability limitations.
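The two styles can be contrasted in a minimal Python `asyncio` sketch. The service names, latencies and message payloads are hypothetical, and `asyncio.sleep` stands in for a real network call. In the first half, the consumer fires several non-blocking API calls at once and waits for all of them together; in the second half, an in-process `asyncio.Queue` plays the role of a message broker (in practice a separate system), so the consumer can send messages before the provider is even running.

```python
from __future__ import annotations

import asyncio
import time

LATENCY = 0.2  # hypothetical per-call provider latency, in seconds


async def call_api(service: str) -> str:
    """Hypothetical direct API call; the sleep simulates network latency."""
    await asyncio.sleep(LATENCY)
    return f"{service}: ok"


async def non_blocking_calls() -> list[str]:
    # All three calls are in flight at once; nothing blocks while waiting.
    return await asyncio.gather(
        call_api("profile"), call_api("orders"), call_api("recommendations")
    )


async def timed_non_blocking_calls() -> tuple[list[str], float]:
    start = time.perf_counter()
    results = await non_blocking_calls()
    return results, time.perf_counter() - start  # roughly one LATENCY, not three


async def consumer_sends(queue: asyncio.Queue, n: int) -> None:
    # The consumer publishes messages without connecting to the provider.
    for i in range(n):
        await queue.put(f"order-{i}")


async def provider_processes(queue: asyncio.Queue) -> list[str]:
    # The provider drains whatever has accumulated, whenever it comes online.
    handled = []
    while not queue.empty():
        handled.append(await queue.get())
        queue.task_done()
    return handled


async def messaging_demo() -> list[str]:
    queue: asyncio.Queue = asyncio.Queue()
    await consumer_sends(queue, 3)          # provider is not running yet
    return await provider_processes(queue)  # provider starts later


if __name__ == "__main__":
    print(asyncio.run(timed_non_blocking_calls()))
    print(asyncio.run(messaging_demo()))
```

The design difference shows up in `messaging_demo`: the queue holds the messages until the provider is ready, which is the temporal decoupling that a direct API call, blocking or not, cannot offer.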

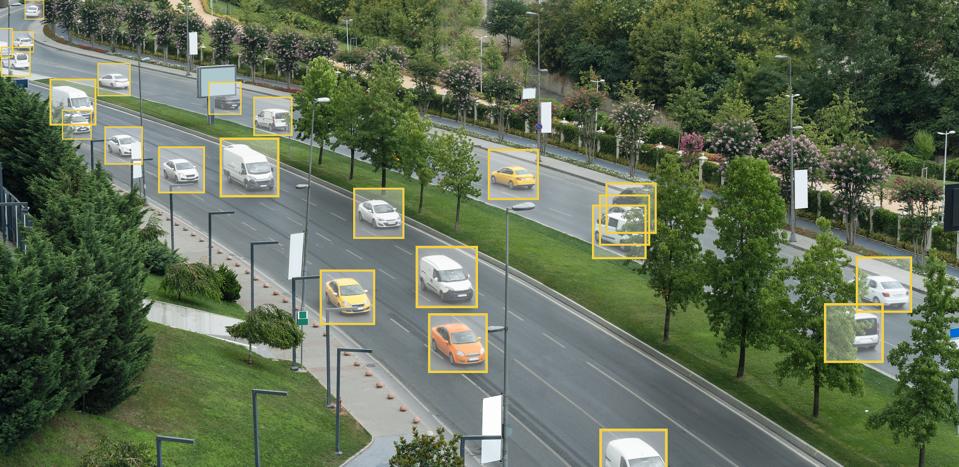

Machine learning algorithms explained

Machine learning algorithms train on data to find the best set of weights for each independent variable that affects the predicted value or class. The algorithms themselves have variables, called hyperparameters. They're called hyperparameters, as opposed to parameters, because they control the operation of the algorithm rather than the weights being determined. The most important hyperparameter is often the learning rate, which determines the step size used when finding the next set of weights to try when optimizing. If the learning rate is too high, gradient descent may overshoot the minimum, or quickly converge on a plateau or suboptimal point. If the learning rate is too low, gradient descent may stall and never completely converge. Many other common hyperparameters depend on the algorithm used. Most algorithms have stopping parameters, such as the maximum number of epochs, the maximum time to run, or the minimum improvement from epoch to epoch. Specific algorithms have hyperparameters that control the shape of their search. For example, a Random Forest Classifier has hyperparameters for minimum samples per leaf, max depth, minimum samples at a split, minimum weight fraction for a leaf, and about eight more.
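To make the learning rate and the stopping parameters concrete, here is a minimal Python sketch of gradient descent on a one-weight toy loss, `(w - 3)**2`, chosen purely for illustration. On this convex loss, a rate that is too high overshoots and diverges, while a rate that is too low exhausts its epoch budget far from the minimum; on real, non-convex losses the failure modes also include settling on a plateau. The function names and default values are assumptions, not taken from any library.

```python
def loss(w: float) -> float:
    """Toy convex loss with its minimum at w = 3."""
    return (w - 3.0) ** 2


def grad(w: float) -> float:
    """Derivative of the toy loss."""
    return 2.0 * (w - 3.0)


def gradient_descent(learning_rate: float, w0: float = 0.0,
                     max_epochs: int = 1000, min_improvement: float = 1e-9):
    """Return (final weight, epochs run).

    learning_rate, max_epochs and min_improvement are hyperparameters:
    they steer the search but are not themselves learned.
    """
    w, prev = w0, loss(w0)
    for epoch in range(1, max_epochs + 1):
        w -= learning_rate * grad(w)           # step size set by the learning rate
        cur = loss(w)
        if abs(prev - cur) < min_improvement:  # stop: too little progress
            return w, epoch
        prev = cur
    return w, max_epochs                       # stop: epoch budget exhausted


if __name__ == "__main__":
    for lr in (0.1, 0.001, 1.1):
        w, epochs = gradient_descent(lr, max_epochs=100)
        print(f"learning_rate={lr}: w={w:.4f} after {epochs} epochs")
```

With `learning_rate=0.1` the weight settles near 3 in a few dozen epochs; with `0.001` it is still far from 3 after 100 epochs; with `1.1` each step overshoots further than the last.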

COVID-19 Impact on the Future of Fintech

Besides their age, scalability and financial condition, the outlook for many fintech organizations will also be driven by the product category they are in. This is especially true in the near term, when the pandemic's impact on consumer behavior is expected to be greatest. According to BCG, the negative impact of COVID-19 will be more severe for fintechs in international payments, unsecured and secured consumer lending, and small business lending, and for those where risks may be highest. Fintech firms focused on B2B banking are believed to be less vulnerable as a group. ... As could be expected, technology providers were some of the early winners when COVID-19 hit, as traditional banking organizations scurried to deploy digital solutions to meet consumer demand. Many of these sales were for initiatives that had already been agreed to but were not implemented until market conditions required immediate action. It will be interesting to see whether investment in technology and digital solutions continues as traditional financial institutions are forced to reduce costs.

Simplicity and Security: What Commercial Providers Offer for the Service Mesh

Whatever the maturity level, one of the advantages of a commercial offering is support. There's no easy way to get advice or troubleshooting help for a purely open-source service mesh. For some organizations that doesn't matter, but for others, knowing there's someone to call in case of a problem is critical, and might even be baked into corporate governance policies. One of the benefits of using a sidecar proxy service mesh with Kubernetes, Jenkins said, is that it allows a smaller central platform team to manage a large infrastructure, and it reduces the burden on application developers to manage anything related to infrastructure. Using a commercial service mesh provider lets organizations reduce the need to manage infrastructure internally even further, he said. Austin agreed that one of the things that makes a service mesh “enterprise-grade” is increased operational simplicity: making it as simple as possible for small platform teams to manage huge application suites. For enterprises, that translates into the ability to spend more engineering resources on feature development and creating business value, and less on infrastructure management.

Sacrificing Security for Speed: 5 Mistakes Businesses Make in Application Development

Data tends to be the most important and valuable aspect of modern web applications, and poor application design and architecture can lead to data and security breaches. Application development teams generally assume that by providing the right authentication and authorization measures for the application, data will be protected. This is a misconception. Proper data security measures involve focusing on data integrity, fine-grained data access, and encryption of data both at rest and in motion. In addition, data security needs to be looked at holistically, across all layers of the application runtime, from the time a request is made to the time the response is sent back. Today's modern web applications are highly sophisticated and built with a big focus on a simple user experience combined with high scalability. This combination can be challenging for application development teams from a security perspective. Most development teams secure the application in silos rather than as a whole.
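Two of the measures named above, data integrity and fine-grained access, can be sketched with the Python standard library alone; encryption at rest and in transit would need TLS and a third-party cryptography library, so it is left out here. In this minimal sketch, the key, the roles and the field policy are hypothetical. An HMAC tag stored alongside a record lets the application detect later tampering, and a per-role field filter means an authenticated user still sees only the fields their role needs.

```python
from __future__ import annotations

import hashlib
import hmac

# Hypothetical key for illustration only; a real system would load it from a secrets manager.
INTEGRITY_KEY = b"demo-key-not-for-production"


def sign(record: bytes, key: bytes = INTEGRITY_KEY) -> str:
    """Compute an HMAC-SHA256 tag so tampering with the stored record is detectable."""
    return hmac.new(key, record, hashlib.sha256).hexdigest()


def verify(record: bytes, tag: str, key: bytes = INTEGRITY_KEY) -> bool:
    """Recompute the tag and compare it in constant time."""
    return hmac.compare_digest(sign(record, key), tag)


# Hypothetical field-level policy: which fields each role may read.
FIELD_POLICY: dict[str, set[str]] = {
    "support": {"customer_id", "name", "email"},
    "billing": {"customer_id", "name", "card_last4"},
}


def read_fields(record: dict, role: str) -> dict:
    """Return only the fields the role may see; unknown roles see nothing."""
    allowed = FIELD_POLICY.get(role, set())
    return {field: value for field, value in record.items() if field in allowed}


if __name__ == "__main__":
    stored = b'{"customer_id": 42, "balance": 100}'
    tag = sign(stored)
    print(verify(stored, tag))                          # True
    print(verify(stored.replace(b"100", b"999"), tag))  # False
```

Denying by default (an unknown role gets an empty dict) is the safer design choice here: a missing policy entry fails closed rather than exposing every field.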

Google vs. Oracle: The next chapter

So, what next? Gesmer speculates: "We will have to see what the parties have to say on this issue when they file their briefs in August. However, a decision based on a narrow procedural ground such as the standard of review is likely to be attractive to the Supreme Court. It allows it to avoid the mystifying complexities of copyright law as applied to computer software technology. It allows the Court to avoid revisiting the law of copyright fair use, a doctrine the Court has not addressed in-depth in the 26 years since it decided Campbell v. Acuff-Rose Music, Inc. It enables it to decide the case on a narrow standard-of-review issue and hold that a jury verdict on fair use should not be reviewed de novo on appeal, at least where the jury has issued a general verdict." In other words, Oracle will lose and Google will win… for now. We still won't have an answer to the legal question programmers want answered: to what extent, if any, does copyright cover APIs? For an answer to that, my friends, we may have to await the results of yet another Oracle vs. Google lawsuit. It may be wiser for Oracle to finally leave this issue alone. As Charles Duan, the director of Technology and Innovation Policy at the R Street Institute, a Washington DC non-profit think tank and Google ally, recently argued: Oracle itself is guilty of copying Amazon's S3 APIs.

2020 State of Testing Report

It is very difficult for us to define exactly what we "see" in the report. The best description for it might be a "feeling" of change, maybe even of evolution. We are seeing many indications of increasing collaboration between test and dev, showing how the lines between our teams are getting blurrier with time. We are also seeing how the responsibilities of testers are expanding, with additional tasks being asked of us across different areas of the team's work and challenges. ... I feel it makes testers think critically about which automation strategy best suits their context, and how to make it reliable and meaningful. The flip side that I sometimes see is that if their automation strategy is not smart enough (or if, say, their CI/CD infrastructure is lame), testers end up just writing more automation and spending an enormous amount of time maintaining it simply to keep the pipeline green. These efforts hardly contribute to the user-facing quality of the product and add no meaningful value.

Quote for the day:

"To have long term success as a coach or in any position of leadership, you have to be obsessed in some way." -- Pat Riley