Digital twins – rise of the digital twin in Industrial IoT and Industry 4.0

Digital twins offer numerous benefits on which we’ll elaborate later. In fact, you might already have seen the concept in action. If you haven’t, the video below, using a bike equipped with sensors, gives you a good idea. However, in real life you’ll notice that digital twins today are predominantly used in the Industrial Internet or Industrial Internet of Things and certainly engineering and manufacturing. If you remember our airplane engine or other complex and technology-intensive physical assets such as IoT-enabled industrial robots and far more, you can imagine why. You can even create a digital twin of an environment with a set of physical assets, as long as you get those data. ... In the future we’ll see twins expand to more applications, use cases and industries and get combined with more technologies such as speech capabilities, augmented reality for an immersive experience, AI capabilities, more technologies enabling us to look inside the digital twin removing the need to go and check the ‘real’ thing and so on.

AI will be the biggest disruptor in our lifetime: Amitabh Kant, CEO, NITI Aayog

India is among the very few countries globally where the government has driven digitization in a big way. For instance, almost 99.3 percent of Indians pay their Income Tax online. Almost 96 percent of these filings are cleared within three months because they are digital. The new Goods & Services Tax (GST) is digital – cashless and paperless. The Ayushman Bharat scheme is portable, paperless and digital. It provides health insurance to 500 million Indians. The number of beneficiaries is greater than the population of the USA, Europe, and Mexico put together. Every single rupee released through the Public Finance Management System (PFMS) is tracked to the last point digitally. By integrating technology into various aspects of the economy, the government has generated vast volumes of data. It is important that we use this data along with computing power and new algorithms to drive huge disruption. That’s the only way we can radically leapfrog and catch up with advanced economies.

European enterprises 'waste' £24,000 a day on unused cloud services, says Insight research

Given the foundational role that cloud is increasingly playing within enterprise digital transformation strategies, these are important areas to address and get right, the report continues, as organisations set about making better use of their data through the deployment of analytics, machine learning and artificial intelligence tools. Indeed, 46% of respondents flagged AI, big data, machine learning and deep learning tools as being “critical” to their digital transformation initiatives over the past two years. “When analysed, shared, and leveraged intelligently, [data] can facilitate more informed decision-making, improve the quality of offerings, and enhance the customer experience,” the report said. “IT professionals express confidence in AI, big data and machine learning because these technologies enable organisations to transform data into business intelligence.” But this confidence could prove to be misplaced unless organisations have a robust cloud strategy in place to underpin their plans, the report added.

There is a real demand for AI in healthcare, but preserving privacy is key

The introduction of AI into healthcare is important for several reasons. The main one, though? Scale. “With the NHS potentially losing up to 350,000 staff by 2030, using AI will be the only way to scale services to match the mounting demand that is hitting the UK with a shrinking workforce,” explains Lorica. The impact of AI in healthcare won’t only be felt in the NHS. Instead, the technology will have a wide range of applications in everything from personal medicine to research, diagnosis and logistics. But, despite a clear desire to integrate AI, it must be done correctly. And before it can effectively disrupt the sector, Lorica suggests that “various organisational and cultural changes need to be implemented.” ... Before AI can truly transform the healthcare sector, the elephant in the room needs to be addressed: patient confidentiality or privacy. “The NHS holds personal information about almost every person living in the country, which means preserving privacy, collecting and cleaning data and data sharing is paramount,” explains Lorica.

The Interesting Case of Who’s Using the IT4IT™ Standard – Part Two

Digitalization is driving the proliferation of Cloud and Mobility and is causing IT organizations to rethink their IT Operating Model to support both the digital workforce and new service delivery models. To exploit the rapid pace of disruptive IT innovation, HCL Technologies chose to adopt The Open Group IT4IT Reference Architecture to design and develop its XaaS-based (Everything as a Service) product and service offering. This meant that HCL Global IT needed to better understand the business requirements of IT to allow it to achieve the agility and velocity the business and end users required of its services. To achieve this, HCL Global IT required a unified and sophisticated IT Operating Model to support the business in its Digital Transformation journey. Therefore, HCL aligned its products and services to the IT4IT Value Stream-based IT Reference Architecture and developed a product and platform named XaaS Service Management (XSM), which can address customer-specific issues and challenges.

BigTech is coming. Is banking ready?

Many banks are responding to the competition from BigTechs (and fintechs) by learning from and co-creating with them to strengthen their client propositions. They are also investing heavily to support new partnerships, acquisitions, and the development of in-house solutions. Supporting this, a recent Bloomberg report that ranked banks by technology spending so far in 2019 showed the top five had invested a combined USD 44 billion. Corporates have also been responding to the ‘uberisation’ of commerce following BigTech’s move from online consumer models further into the B2B arena. As well as re-engineering their physical and financial supply chains, corporates are now also rethinking their relationships with transaction banks. For example, as manufacturers try to replicate BigTech’s speed, they are considering decentralised production. Having multiple yet smaller assembly locations puts companies closer to the end-consumer. It also creates a more conducive environment to react to changing local demand and offer more customisation.

How Artificial Intelligence and IoT are transforming real estate

The trend for workplace flexibility also provides an incentive for rental platforms and Space as a Service. The increase in smaller companies (self-employed persons) is resulting in increased demand for flexible and on-demand workplaces. Many corporates are trying to cut overheads by opting for shared workspaces. As part of this we have developed a software product called Yardi Kube that centres can use to manage members’ space allocations. Yardi Kube folds in a technology management system for shared workspaces, providing IP addresses, Wi-Fi and telephones that are crucial for this sector. Yardi’s coworking module will be released in 2020 across the Middle East. The platform will provide the most comprehensive coworking software on the market as it combines financial, workspace and technology management all in a centralised database. IoT is a technology where systems such as plumbing, electrical outlets, thermostats and lighting are connected and perform smart functions via the internet. From convenient property showing and increased energy efficiency to predictive maintenance, IoT applications are making it easier for people to buy, sell and own rental properties. Smart homes with IoT capabilities usually have a higher market value than those that don’t.

Can Oracle substantiate its cloud bluster?

Commenting on the competition in the cloud, Ellison said the cloud databases were open source-based and a lot more specialised. But he added: “None of them are autonomous. None of them are secure. None of them give you 99.995% availability. I mean, they’re – we’re 100 times more reliable.” But Oracle does face a challenge from these open source competitors. The competition is not coming directly from its previous enterprise customers and CIOs in these organisations. Instead, it is being driven from the bottom up by software developers choosing products they consider more exciting and, arguably, technically superior for the applications that use them, compared to Oracle. A recent Stack Overflow survey of 6,000 developers in the UK and Ireland reported that Oracle was not among the top three database servers being used: MySQL was used by 44.9% of respondents, Microsoft SQL Server by 40.7% and PostgreSQL by 31.4%.

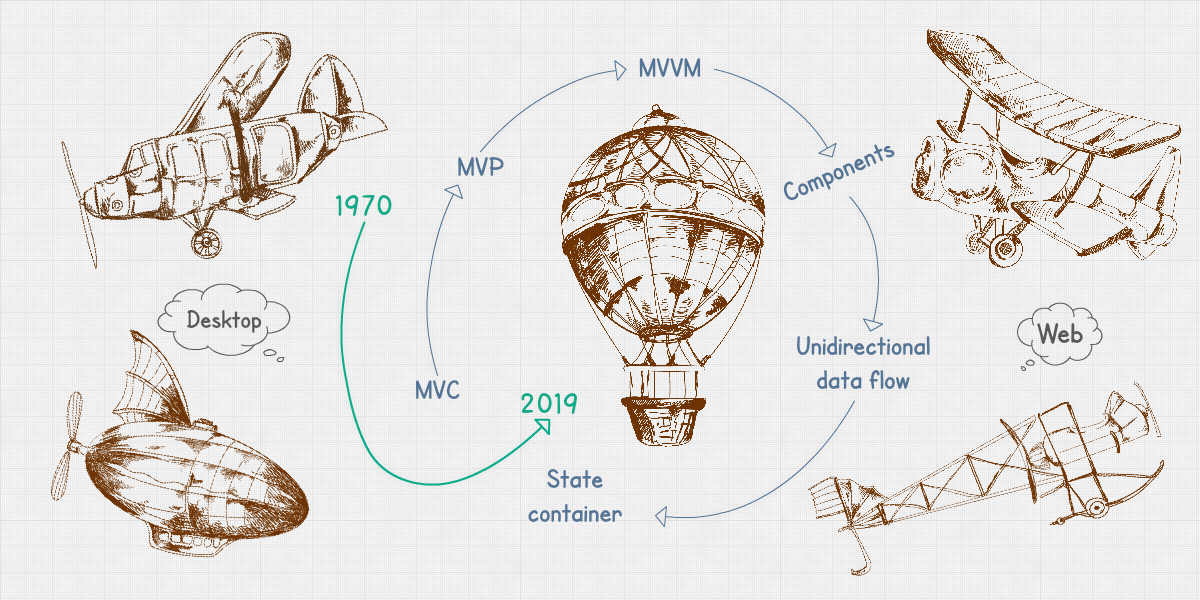

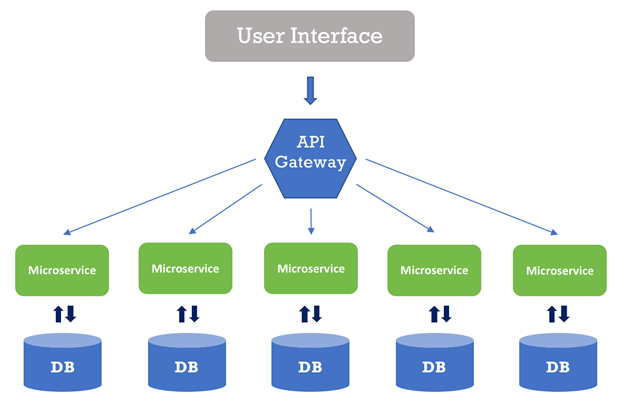

Testing Microservice: Examining the Tradeoffs of Twelve Techniques - Part 2

Most projects need a combination of testing techniques, including test doubles, to reach sufficient test coverage and stable test suites — you can read more about this so-called testing pyramid. You are faster to market with test doubles in place because you test less than you otherwise would have. Costs can grow with complexity. Because you do not need much additional infrastructure or test-doubles knowledge, this technique doesn’t cost much to start with. Costs can grow, however — for example, as you require more test infrastructure to host groups of related microservices that you must test together. Testing a test instance of a dependency reduces the chance of introducing issues in test doubles. Follow the test pyramid to produce a sound development and testing strategy or you risk ending up with big E2E test suites that are costly to maintain and slow to run. Use this technique with caution only after careful consideration of the test pyramid and do not fall into the trap of the inverted testing pyramid.
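To make the test-double idea concrete, here is a minimal sketch of a stub standing in for a dependency microservice. All names (`StubInventoryClient`, `OrderService`, `can_fulfil`, the `bike-bell` SKU) are hypothetical and chosen only for illustration; they do not come from the article.

```python
class StubInventoryClient:
    """Test double standing in for a hypothetical inventory microservice."""

    def __init__(self, stock):
        self._stock = dict(stock)
        self.calls = []  # record every lookup so a test can inspect it

    def available(self, sku):
        self.calls.append(sku)
        return self._stock.get(sku, 0)


class OrderService:
    """Service under test; its dependency is injected via the constructor."""

    def __init__(self, inventory_client):
        self._inventory = inventory_client

    def can_fulfil(self, sku, quantity):
        return self._inventory.available(sku) >= quantity


def run_example():
    # No network and no real inventory service: the stub answers directly.
    stub = StubInventoryClient({"bike-bell": 3})
    service = OrderService(stub)
    enough = service.can_fulfil("bike-bell", 2)
    too_many = service.can_fulfil("bike-bell", 5)
    return enough, too_many, stub.calls
```

Because the stub is hand-written, it can drift from the real service's behaviour. That is the risk the excerpt refers to when it says testing against a test instance of the dependency reduces the chance of introducing issues in test doubles.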

Google Wins 'Right to Be Forgotten' Case

Google commented on the ruling in a statement: "Since 2014, we've worked hard to implement the right to be forgotten in Europe, and to strike a sensible balance between people's rights of access to information and privacy. It's good to see that the court agreed with our arguments." Europe's General Data Protection Regulation, which went into full effect last year, has a separate "right to be forgotten" provision with much broader requirements. ... While ruling that Google does not have to extend the right to be forgotten for European citizens outside of Europe, the court acknowledged that in today's globalized world, information can harm a person's reputation. But it said different countries have different approaches to the right to be forgotten, and hence a universal law cannot be applied. "A global de-referencing would meet the objective of protection referred to in EU law in full. ... Numerous third states do not recognize the right to de-referencing or have a different approach to that right," the court said.

Quote for the day:

"Great leaders go forward without stopping, remain firm without tiring and remain enthusiastic while growing" -- Reed Markham