Cisco will use AI/ML to boost intent-based networking

“By applying machine learning and related machine reasoning, assurance can also sift through the massive amount of data related to such a global event to correctly identify if there are any problems arising. We can then get solutions to these issues – and even automatically apply solutions – more quickly and more reliably than before,” Apostolopoulos said. In this case, assurance could identify that the use of WAN bandwidth to certain sites is increasing at a rate that will saturate the network paths and could proactively reroute some of the WAN flows through alternative paths to prevent congestion from occurring, Apostolopoulos wrote. “In prior systems, this problem would typically only be recognized after the bandwidth bottleneck occurred and users experienced a drop in call quality or even lost their connection to the meeting. It would be challenging or impossible to identify the issue in real time, much less to fix it before it distracted from the experience of the meeting. Accurate and fast identification through ML and MR coupled with intelligent automation through the feedback loop is key to successful outcome.”
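To illustrate the proactive idea in the excerpt, here is a minimal sketch that fits a straight line to recent WAN utilization samples and estimates how many minutes remain before a link saturates. It is not Cisco's assurance implementation. The linear trend, the `LinkSample` type, and the 15-minute reroute horizon are all hypothetical assumptions.

```python
from dataclasses import dataclass


@dataclass
class LinkSample:
    minute: float     # time of the measurement, in minutes
    used_mbps: float  # observed WAN utilization on the path


def minutes_until_saturation(samples, capacity_mbps):
    """Least-squares line through the samples, extrapolated to link capacity.

    Returns None when utilization is flat or falling (no saturation expected).
    """
    n = len(samples)
    if n < 2:
        return None
    mean_t = sum(s.minute for s in samples) / n
    mean_u = sum(s.used_mbps for s in samples) / n
    cov = sum((s.minute - mean_t) * (s.used_mbps - mean_u) for s in samples)
    var = sum((s.minute - mean_t) ** 2 for s in samples)
    if var == 0:
        return None
    slope = cov / var  # growth in Mbps per minute
    if slope <= 0:
        return None
    intercept = mean_u - slope * mean_t
    # Utilization the fitted line predicts at the most recent sample time
    predicted_now = intercept + slope * samples[-1].minute
    return max(0.0, (capacity_mbps - predicted_now) / slope)


def should_reroute(samples, capacity_mbps, horizon_minutes=15):
    """Hypothetical policy: reroute some flows if saturation is within the horizon."""
    eta = minutes_until_saturation(samples, capacity_mbps)
    return eta is not None and eta <= horizon_minutes
```

A path that grows from 400 to 700 Mbps over 15 minutes on a 1,000 Mbps link would be flagged about 15 minutes before saturation. A production system would use richer ML models and feed the decision back into automation, as the excerpt describes.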

DevOps security best practices span code creation to compliance

As software development velocity increases with the adoption of continuous approaches, such as Agile and DevOps, traditional security measures struggle to keep pace. DevOps enables quicker software creation and deployment, but flaws and vulnerabilities proliferate much faster. As a result, organizations must systematically change their approaches to integrate security throughout the DevOps pipeline. ... Software security often starts with the codebase. Developers grapple with countless oversights and vulnerabilities, including buffer overflows; authorization bypasses, such as not requiring passwords for critical functions; overlooked hardware vulnerabilities, such as Spectre and Meltdown; and ignored network vulnerabilities, such as OS command or SQL injection. The emergence of APIs for software integration and extensibility opens the door to security vulnerabilities, such as lax authentication and data loss from unencrypted data sniffing. Developers' responsibilities increasingly include security awareness: They must use security best practices to write hardened code from the start and spot potential security weaknesses in others' code.
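SQL injection, one of the flaws named above, shows what "hardened code from the start" can look like in practice. The sketch below uses Python's standard `sqlite3` module. The `users` table and the payload are hypothetical. It contrasts string-built SQL with a parameterized query.

```python
import sqlite3


def find_user_unsafe(conn, username):
    # VULNERABLE: user input is spliced directly into the SQL text
    query = f"SELECT id, name FROM users WHERE name = '{username}'"
    return conn.execute(query).fetchall()


def find_user_safe(conn, username):
    # Hardened: the driver binds the value, so it is never parsed as SQL
    return conn.execute(
        "SELECT id, name FROM users WHERE name = ?", (username,)
    ).fetchall()


def demo():
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT)")
    conn.executemany("INSERT INTO users (name) VALUES (?)", [("alice",), ("bob",)])
    payload = "nobody' OR '1'='1"  # classic injection string
    return find_user_unsafe(conn, payload), find_user_safe(conn, payload)
```

With the payload, the unsafe version returns every row in the table, while the parameterized version returns nothing. This kind of pattern is also what a reviewer looks for when checking someone else's code.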

Reinforcement learning explained

The environment may have many state variables. The agent performs actions according to a policy, which may change the state of the environment. The environment or the training algorithm can send the agent rewards or penalties to implement the reinforcement. These may modify the policy, which constitutes learning. For background, this is the scenario explored in the early 1950s by Richard Bellman, who developed dynamic programming to solve optimal control and Markov decision process problems. Dynamic programming is at the heart of many important algorithms for a variety of applications, and the Bellman equation is very much part of reinforcement learning. A reward signifies what is good immediately. A value, on the other hand, specifies what is good in the long run. In general, the value of a state is the expected sum of future rewards. Action choices—policies—need to be computed on the basis of long-term values, not immediate rewards. Effective policies for reinforcement learning need to balance greed, or exploitation (going for the action that the current policy thinks will have the highest value), against exploration (trying other actions that may help improve the policy).
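The ideas above (values as long-run reward, the Bellman equation, and balancing exploitation against exploration) fit in a few lines. This is a minimal sketch on a hypothetical four-state corridor where only the final step pays a reward. It uses value iteration, which is one dynamic-programming method, plus an epsilon-greedy action chooser. The discount factor `GAMMA` is an added assumption that makes nearer rewards count more than distant ones.

```python
import random

# Hypothetical 4-state corridor; state 3 is terminal.
# TRANSITIONS[state][action] = (next_state, reward)
TRANSITIONS = {
    0: {"left": (0, 0.0), "right": (1, 0.0)},
    1: {"left": (0, 0.0), "right": (2, 0.0)},
    2: {"left": (1, 0.0), "right": (3, 1.0)},
}
TERMINAL = 3
GAMMA = 0.9  # discount factor (assumption): future rewards count slightly less


def value_iteration(transitions, gamma=GAMMA, tol=1e-9):
    """Repeat the Bellman optimality update until values stop changing."""
    values = {s: 0.0 for s in transitions}
    values[TERMINAL] = 0.0
    while True:
        delta = 0.0
        for s, actions in transitions.items():
            # Best immediate reward plus discounted long-term value of the next state
            best = max(r + gamma * values[s2] for s2, r in actions.values())
            delta = max(delta, abs(best - values[s]))
            values[s] = best
        if delta < tol:
            return values


def greedy_action(state, values, transitions=TRANSITIONS, gamma=GAMMA):
    """Exploitation: pick the action with the highest long-term value."""
    actions = transitions[state]
    return max(actions, key=lambda a: actions[a][1] + gamma * values[actions[a][0]])


def epsilon_greedy(state, values, epsilon=0.1, rng=random):
    """Explore with probability epsilon, otherwise exploit the value estimates."""
    if rng.random() < epsilon:
        return rng.choice(list(TRANSITIONS[state]))
    return greedy_action(state, values)
```

State 0 ends up valued at about 0.81 even though moving right from it earns no immediate reward. That is the difference between a reward and a value that the excerpt draws.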

The Linux desktop's last, best shot

Closer to home in the West, companies are turning to Linux for their engineering and developer desktops. Mark Shuttleworth, founder of Ubuntu Linux and its corporate parent Canonical, recently told me: "We have seen companies signing up for Linux desktop support because they want to have fleets of Ubuntu desktop for their artificial intelligence engineers." Even Microsoft has figured out that advanced development work requires Linux. That's why Windows Subsystem for Linux (WSL) has become a default part of Windows 10. So, the opportunity is there for Linux to grab some significant market share. My question is: "Is anyone ready to take advantage of this opportunity?" All the major Linux companies -- Canonical, Red Hat and SUSE -- support Linux desktops, though it's not a big part of their businesses. The groups that do focus on the desktop, such as Mint, MX Linux, Manjaro Linux, and elementary OS, are small and under-financed. So I can't see them delivering the support most users -- never mind governments and companies -- need.

DNS – a security opportunity not to be overlooked, says Nominet

“We are seeing a lot more breaches, and with many businesses embracing digital transformation, the attack surface is getting wider. But in many cases, having an understanding of what is going on in the DNS layer can reduce the impact of breaches and even prevent them,” said Reed. “DNS has an important role to play because it underpins the network activity of all organisations. And because around 90% of malware uses DNS to cause harm, DNS potentially provides visibility of malware before it does so.” In addition to providing organisations with an opportunity to intercept malware before it contacts its command and control infrastructure, DNS visibility enables organisations to see other indicators of compromise such as spikes in IP traffic and DNS hijacking. “Being able to track and monitor DNS activity is important as it enables organisations to identify phishing campaigns and the associated leakage of data. It also enables them to reduce the time attackers are in the network and spot new domains being spun up for malicious activity and data exfiltration,” said Reed.
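As a rough illustration of "spotting new domains" in DNS activity, here is a minimal sketch that is fed one (client, domain) pair per query. It raises an alert the first time a domain falls outside a learned baseline, and again when a domain's query count in the current window reaches a threshold. This is not Nominet's product. The `DnsMonitor` class, its thresholds, and the log format are hypothetical.

```python
from collections import Counter
from dataclasses import dataclass, field


@dataclass
class DnsMonitor:
    """Hypothetical monitor fed one (client, domain) pair per DNS query."""
    baseline: set = field(default_factory=set)  # domains seen during a learning period
    spike_threshold: int = 100                  # queries per window treated as a spike
    window: Counter = field(default_factory=Counter)

    def observe(self, client, domain):
        domain = domain.lower().rstrip(".")  # normalise case and trailing root dot
        self.window[domain] += 1
        alerts = []
        if domain not in self.baseline:
            alerts.append(("new-domain", client, domain))
            self.baseline.add(domain)  # alert only on the first sighting
        if self.window[domain] == self.spike_threshold:
            alerts.append(("query-spike", client, domain))
        return alerts

    def reset_window(self):
        """Call periodically (e.g. every minute) to start a new counting window."""
        self.window.clear()
```

Real deployments add threat-intelligence feeds, domain-age lookups, and hijacking checks. The point of the sketch is only that the DNS layer already carries enough signal to flag suspicious behaviour early.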

The Sustainability Revolution Hits Retail

Technology is paramount to building a truly sustainable business. Retailers are already applying advanced data analytics to supply chains to make the most of resources and reduce waste, which has a knock-on effect in terms of sustainability. The Industrial Internet of Things (IIoT) will continue to improve operational efficiency across different organisations, cutting down on energy and expenditure. Despite debate over the sustainability of blockchain, distributed ledger technology could bring about the transparency that could kill environmentally or socially questionable products. Blockchain could provide visibility across the entire supply chain, so buyers know exactly where a product came from and how it was made. Richline Group, for example, is already using blockchain to ensure that its diamonds are ethically sourced. Materials science also has an important role in finding new materials that are cheaper and lower maintenance than existing alternatives. 3D printing is key to working with new materials, creating rapid prototypes for testing. It is hoped that the adoption of innovative manufacturing techniques like 3D printing and advanced robotics will make supply chains more efficient.
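The tamper-evidence behind blockchain-based provenance comes from chaining records by hash. Below is a minimal sketch of that idea only. It is a single local hash chain with no distributed consensus, and it is not Richline Group's system. The record fields and site names are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first record


def record_hash(record, prev_hash):
    # Canonical JSON so the same record always hashes the same way
    payload = json.dumps({"record": record, "prev": prev_hash}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_record(chain, record):
    """Add a supply-chain step, linking it to the hash of the previous step."""
    prev = chain[-1]["hash"] if chain else GENESIS
    chain.append({"record": record, "prev": prev, "hash": record_hash(record, prev)})


def verify(chain):
    """Recompute every link; any edited record breaks the chain from that point on."""
    prev = GENESIS
    for entry in chain:
        if entry["prev"] != prev or entry["hash"] != record_hash(entry["record"], prev):
            return False
        prev = entry["hash"]
    return True
```

If someone quietly rewrites where an item was sourced, `verify` fails. A real distributed ledger adds replication across parties so that no single participant can rewrite the whole chain.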

Blazor on the Server: The Good and the Unfortunate

If you're wondering what the difference is between Blazor and BotS ... well, from "the code on the ground" point of view, not much. It's pretty much impossible, just by looking at the code in a page, to tell whether you're working with Blazor-on-the-Client or Blazor-on-the-Server. The primary difference between the two -- where your C# code executes -- is hidden from you. With BotS, SignalR automatically connects activities in the browser with your C# code executing on the server. That SignalR support obviously makes Blazor solutions less scalable than other Web technologies because of SignalR's need to maintain WebSocket connections between the client and the server. However, that scalability issue may not be as much of a limitation as you might think. What BotS does do, however, is "normalize" a lot of the ad hoc ways that have been needed when working with Blazor in previous releases. BotS components are, for example, just another part of an ASP.NET Core project and play well beside other ASP.NET Core technologies like Razor Pages, View Components and good old Controllers+Views.

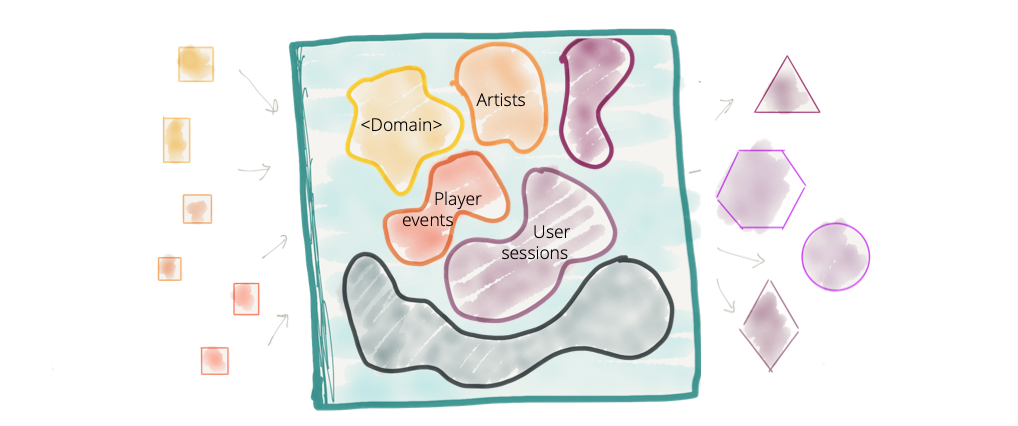

Self-learning sensor chips won’t need networks

Key to Fraunhofer IMS’s Artificial Intelligence for Embedded Systems (AIfES) is that the self-learning takes place at chip level rather than in the cloud or on a computer, and that it is independent of “connectivity towards a cloud or a powerful and resource-hungry processing entity.” But it still offers a “full AI mechanism, like independent learning.” It’s “decentralized AI,” says Fraunhofer IMS. “It’s not focused towards big-data processing.” Indeed, with these kinds of systems, no connection is actually required for the raw data, only for the post-analytical results, if those are needed at all. Swarming can even replace that. Swarming lets sensors talk to one another, sharing relevant information without involving a host network. “It is possible to build a network from small and adaptive systems that share tasks among themselves,” Fraunhofer IMS says. Another benefit of decentralized neural networks is that they can be more secure than the cloud. Because all processing takes place on the microprocessor, “no sensitive data needs to be transferred,” Fraunhofer IMS explains.
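To show what "learning at chip level, sending only results" can mean, here is a minimal sketch of a detector that learns a running mean and variance from sensor readings and reports only an anomaly flag. It does not use the AIfES library (a C framework) or its API, and it deliberately swaps in a simple statistical model (Welford's online algorithm) in place of a neural network. The class name, threshold, and warm-up length are hypothetical.

```python
import math


class OnDeviceDetector:
    """Learns running statistics locally; raw readings never leave the device."""

    def __init__(self, threshold_sigmas=3.0, warmup=10):
        self.n = 0
        self.mean = 0.0
        self.m2 = 0.0  # running sum of squared deviations (Welford)
        self.threshold = threshold_sigmas
        self.warmup = warmup

    def update(self, x):
        """Score x against what has been learned so far, then learn from x.

        Returns True if x looks anomalous; only this flag would be transmitted.
        """
        anomalous = False
        if self.n >= max(self.warmup, 2):  # need at least 2 samples for a variance
            std = math.sqrt(self.m2 / (self.n - 1))
            anomalous = std > 0 and abs(x - self.mean) > self.threshold * std
        # Welford's online update: constant memory, one pass over the data
        self.n += 1
        delta = x - self.mean
        self.mean += delta / self.n
        self.m2 += delta * (x - self.mean)
        return anomalous
```

Memory use stays constant no matter how many readings arrive, which is the property that makes this style of learning plausible on small microcontrollers.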

New RCE vulnerability impacts nearly half of the internet's email servers

In a security alert shared with ZDNet earlier today, Qualys, a cyber-security firm specializing in cloud security and compliance, said it found a very dangerous vulnerability in Exim installations running versions 4.87 to 4.91. The vulnerability is described as a remote command execution -- different, but just as dangerous as a remote code execution flaw -- that lets a local or remote attacker run commands on the Exim server as root. Qualys said the vulnerability can be exploited instantly by a local attacker who has a presence on an email server, even with a low-privileged account. But the real danger comes from remote hackers, who can scan the internet for vulnerable servers, exploit the flaw, and take over systems. "To remotely exploit this vulnerability in the default configuration, an attacker must keep a connection to the vulnerable server open for 7 days (by transmitting one byte every few minutes)," researchers said. "However, because of the extreme complexity of Exim's code, we cannot guarantee that this exploitation method is unique; faster methods may exist."
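For a quick inventory, here is a minimal sketch that checks whether a reported Exim version falls in the affected 4.87 to 4.91 range. It assumes the input is a version string, such as the banner printed by `exim -bV`. The parsing is an assumption about that format. Because distributions sometimes backport fixes without changing the version number, treat a match as a prompt to check your vendor's advisory rather than as proof of exposure.

```python
import re

AFFECTED_MIN = (4, 87)  # first affected release per the advisory
AFFECTED_MAX = (4, 91)  # last affected release per the advisory


def parse_exim_version(text):
    """Extract (major, minor) from text such as 'Exim version 4.89 #2 ...'."""
    match = re.search(r"(\d+)\.(\d+)", text)
    if not match:
        raise ValueError(f"no version found in {text!r}")
    return int(match.group(1)), int(match.group(2))


def in_affected_range(version_text):
    # Tuple comparison orders (4, 87) < (4, 91) < (4, 92) numerically
    return AFFECTED_MIN <= parse_exim_version(version_text) <= AFFECTED_MAX
```

Comparing integer tuples rather than strings avoids the classic bug in which "4.100" would sort before "4.87".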

What is CI/CD? Continuous integration and continuous delivery explained

Continuous integration is a development philosophy backed by process mechanics and some automation. When practicing CI, developers commit their code into the version control repository frequently and most teams have a minimal standard of committing code at least daily. The rationale behind this is that it’s easier to identify defects and other software quality issues on smaller code differentials rather than larger ones developed over extensive periods of time. In addition, when developers work on shorter commit cycles, it is less likely for multiple developers to be editing the same code and requiring a merge when committing. Teams implementing continuous integration often start with version control configuration and practice definitions. Even though checking in code is done frequently, features and fixes are implemented on both short and longer time frames. Development teams practicing continuous integration use different techniques to control what features and code are ready for production.
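Feature flags are one widely used technique for committing unfinished work daily while keeping it out of production. The excerpt does not name a specific technique, so this choice is an assumption. The minimal sketch below uses a hypothetical in-code flag table and a stable hash so that each user consistently lands in or out of a partial rollout.

```python
import hashlib

# Hypothetical flag table; in practice this would live in config or a flag service
FLAGS = {
    "new-checkout": {"enabled": True, "rollout_percent": 10},
    "beta-search": {"enabled": False, "rollout_percent": 100},
}


def is_enabled(flag_name, user_id, flags=FLAGS):
    """Return True if the feature should be active for this user."""
    flag = flags.get(flag_name)
    if flag is None or not flag["enabled"]:
        return False  # unknown or switched-off features stay dark
    # Stable bucket in [0, 100): the same user always gets the same answer
    digest = hashlib.sha256(f"{flag_name}:{user_id}".encode()).hexdigest()
    bucket = int(digest, 16) % 100
    return bucket < flag["rollout_percent"]
```

Code paths guarded by `is_enabled` can be merged to the main branch as soon as they build and pass tests. Whether they run in production becomes a configuration decision rather than a branching decision.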

Quote for the day:

"When building a team, I always search first for people who love to win. If I can't find any of those, I look for people who hate to lose." - H. Ross Perot