Lenovo Partners With Blockchain Platform to Develop Its IoT and AR/VR Hybrid Software

“The Internet of Things and augmented reality are already changing the way we interact with the world. We are excited to partner with AR titan Lenovo New Vision. I see the combination of AI/AR and IoT revolutionizing the business environment,” Credits CEO & founder Igor Chugunov said in the press release. Lenovo New Vision Technology intends to incorporate Credits’ blockchain solution to streamline internal operations and management procedures. “Credits [has] been chosen by Lenovo New Vision Technology thanks to its distinctive technical solutions, such as [a] unique consensus algorithm which consists of dPoS (delegated-proof-of-stake) and BFT (Byzantine Fault Tolerance) features,” says the press release. The blockchain platform that Credits has built is capable of performing up to one million transactions per second and offers a processing time of 0.01 seconds, with commission rates as low as $0.001. The extended functionality of Credits’ smart contracts makes it possible to set cycles and create schedules.

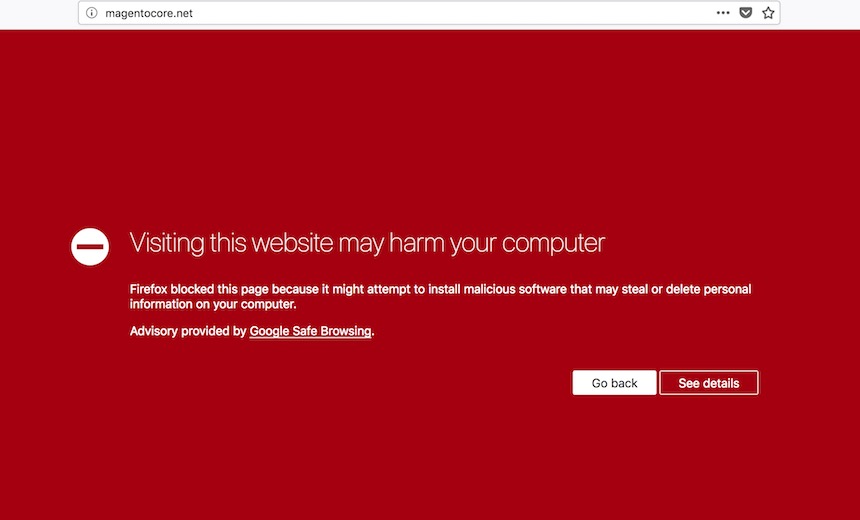

The ultimate guide to finding and killing spyware and stalkerware on your smartphone

Our digital selves, more and more, are becoming part of our full identity. The emails we send, the conversations we have over social media -- both private and public -- as well as photos we share, the videos we watch, and the websites we visit all contribute to our digital forms. ... As mobile devices are now a common tool for social interactions, it is not just ad agencies, data miners, and surveillance-hungry powers that want to keep track of us. When a government agency, country, or cybercriminals decide to peek into our digital lives, there are generally ways to prevent them from doing so. Virtual private networks (VPNs), end-to-end encryption and using browsers that do not track user activity are all common methods. Sometimes, however, surveillance is more difficult to detect -- and closer to home. This guide will run through what spyware is, what the warning signs of infection are, and how to remove such pestilence from your mobile devices.

The How-To: Improving Customer Satisfaction With Digital Leadership

Hiring the right people and giving them a platform doesn’t automatically make them customer service champions. What does your business think about the digital revolution? What ideas do you have in your company on how to satisfy your customers with digital platforms? Do you have a guideline for every department on how they can use digital tools to improve their service to customers? Having a digital culture shows your employees that your business is serious about implementing digital innovations in serving customers. Zappos uses the 'WOW Philosophy' to go the extra mile in ensuring that their customers are happy. The company doesn’t want to be known as the “shoe company” but as “a great customer service company.” Zappos created a fun working environment which leads to workers having fun at and with their tasks, and this rubs off on the customer. A solid digital culture is also a way of showing your customers that you’re trying your best as a business to improve customer service.

Industrial Networking Enabling IIoT Communication

Industrial networking is different from networking for the enterprise or networking for consumers. First there is the convergence of Information Technology (IT) and Operational Technology (OT). Important networking considerations include whether to use wired or wireless, how to support mobility (e.g. vehicles, equipment, robots and workers) and how to reconfigure components. Other factors include the lifecycle of the deployments, physical environmental conditions such as those found in mining and agriculture and electromagnetic conditions where interference from machines and equipment can be a problem. Then we have power. Will it be available? Or will devices need to run on local power such as batteries? Second there are the technical requirements. They include network latency and jitter, throughput needs, reliability and availability. The requirements can vary from being relaxed to highly demanding. The network must meet the end-to-end performance requirements for applications deployed both at the edge and in the cloud.
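As a rough illustration of how latency and jitter requirements might be checked against measurements, here is a minimal Python sketch. The function names, the sample values and the thresholds are assumptions made for this example, not taken from the article. Jitter is computed as the mean absolute difference between consecutive latency samples, which is only one of several definitions in use.

```python
from statistics import mean


def latency_stats(samples_ms):
    """Return (mean latency, jitter) for latency samples in milliseconds.

    Jitter is taken here as the mean absolute difference between
    consecutive samples (an assumption; other definitions exist).
    """
    if len(samples_ms) < 2:
        raise ValueError("need at least two samples")
    diffs = [abs(b - a) for a, b in zip(samples_ms, samples_ms[1:])]
    return mean(samples_ms), mean(diffs)


def meets_requirements(samples_ms, max_latency_ms, max_jitter_ms):
    """Check measured samples against hypothetical application limits."""
    avg, jitter = latency_stats(samples_ms)
    return avg <= max_latency_ms and jitter <= max_jitter_ms


# Illustrative measurements, not real data.
samples = [10.0, 12.0, 11.0, 15.0, 12.0]
avg, jitter = latency_stats(samples)
relaxed_ok = meets_requirements(samples, max_latency_ms=20.0, max_jitter_ms=5.0)
strict_ok = meets_requirements(samples, max_latency_ms=20.0, max_jitter_ms=2.0)
```

The same samples pass the relaxed limits and fail the strict ones, which mirrors the point that requirements can range from relaxed to highly demanding.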

Design Thinking: Future-proof Yourself from AI

Integrating design thinking and machine learning can give you “super powers” that future-proof whatever career you decide to pursue. To meld these two disciplines together, one must: Understand where and how machine learning can impact your business initiatives. While you won’t need to write machine learning algorithms (though I wouldn’t be surprised given the progress in “Tableau-izing” machine learning), business leaders do need to learn how to “Think like a data scientist” in order to understand how machine learning can optimize key operational processes, reduce security and regulatory risks, and uncover new monetization opportunities; Understand how design thinking techniques, concepts and tools can create a more compelling and empathetic user experience with a “delightful” user engagement through superior insights into your customers’ usage objectives, operating environment and impediments to success. Let’s jump into the specifics about what business leaders need to know about integrating design thinking and machine learning in order to provide lifetime job security (my career adviser bill will be in the mail)!

The Pentagon is investing $2 billion into artificial intelligence

Artificial intelligence, which lets machines perform tasks traditionally done by humans, is a trendy topic in technology and business circles. For example, Google recently delighted and alarmed observers when it showed how an AI system could call a restaurant and book a reservation while sounding entirely human. Breakthroughs in the last decade have inspired companies to recruit top AI talent away from academia. Machines are now much more accurate at recognizing speech, understanding images and processing words, leading to products such as Amazon's Alexa, Apple's Siri, and Waymo's self-driving vans. The country's biggest and most innovative companies rely on it to stay ahead of competitors. Waymo's autonomous vehicles have driven more than 9 million miles on US roads thanks to artificial intelligence. National governments, such as Canada, China, India and France, are prioritizing AI now too. They view artificial intelligence as essential to growing their economies in the 21st century. Most notably, China has said it wants to be the global leader by 2030.

How corporations and startups can more effectively work with one another

If the corporate posture of the past around innovation could be described as “not invented here” with a strong bias toward building internally, today’s corporate posture leans in a much different direction, with many thinking about how to disrupt themselves before an external party beats them to it. Not surprisingly, this has created more corporate and startup partnerships. While getting this type of collaboration right is beneficial for both parties, if you speak to most startups selling into large enterprises or corporate executives looking to partner with startups today, you will find many justifiable frustrations on both sides. As the vice president of Business Development at RRE Ventures, an early-stage venture capital firm based in New York, a major part of my role is leading our business development initiatives, where we enable collaboration between corporations and startups. Before this role, I spent time on the corporate side and on the startup side, so I’ve gotten to see this dynamic from both angles throughout my career.

Decentralisation: the next big step for the world wide web

If it is done right, say enthusiasts, either you won’t notice or it will be better. One thing that is likely to change is that you will pay for more stuff directly – think micropayments based on cryptocurrency – because the business model of advertising to us based on our data won’t work well in the DWeb. Want to listen to songs someone has recorded and put on a decentralised website? Drop a coin in the cryptocurrency box in exchange for a decryption key and you can listen. Another difference is that most passwords could disappear. One of the first things you will need to use the DWeb is your own unique, secure identity, says Blockstack’s Ali. You will have one really long and unrecoverable password known only to you but which works everywhere on the DWeb and with which you will be able to connect to any decentralised app. Lose your unique password, though, and you lose access to everything. ... The decentralised web isn’t quite here yet. But there are apps and programs built on the decentralised model.

In the IoT world, general-purpose databases can’t cut it

We live in an age of instrumentation, where everything that can be measured is being measured so that it can be analyzed and acted upon, preferably in real time or near real time. This instrumentation and measurement process is happening in both the physical world and the virtual world of IT. For example, in the physical world, a solar energy company has instrumented all its solar panels to provide remote monitoring and battery management. Usage information is collected from a customer’s panels and sent via mobile networks to a database in the cloud. The data is analyzed, and the resulting information is used to configure and adapt each customer’s system to extend the life of the battery and control the product. If an abnormality or problem is detected, an alert can be sent to a service agent to mitigate the problem before it worsens. Thus, proactive customer service is enabled based on real-time data coming from the solar energy system at a customer’s installation.
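The monitoring flow described above (collect readings, analyze them, alert a service agent on an abnormality) could be sketched roughly as follows. This is a simplified illustration only: the `Reading` fields, the threshold values and the `check_reading` function are hypothetical, and a real system would draw on a time-series database and far richer analysis than fixed limits.

```python
from dataclasses import dataclass


@dataclass
class Reading:
    """One telemetry sample from a customer's installation (hypothetical schema)."""
    customer_id: str
    battery_voltage: float  # volts
    battery_temp_c: float   # degrees Celsius


# Hypothetical limits chosen for illustration only.
MIN_VOLTAGE = 11.5
MAX_TEMP_C = 45.0


def check_reading(reading):
    """Return a list of alert messages for a reading; empty if all is normal."""
    alerts = []
    if reading.battery_voltage < MIN_VOLTAGE:
        alerts.append(
            f"{reading.customer_id}: low battery voltage ({reading.battery_voltage} V)"
        )
    if reading.battery_temp_c > MAX_TEMP_C:
        alerts.append(
            f"{reading.customer_id}: battery overheating ({reading.battery_temp_c} C)"
        )
    return alerts


# Illustrative readings, not real data.
normal = check_reading(Reading("customer-1", 12.4, 30.0))
faulty = check_reading(Reading("customer-2", 11.0, 50.0))
```

In practice each alert would be routed to a service agent rather than returned as a string; the fixed thresholds stand in for whatever analysis the provider actually runs.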

Don’t Rely on the Cloud Provider to Protect Your Data

Unfortunately, many organizations that rely on cloud services for mission-critical applications assume that there’s no need to protect the data and apps that live there. The survey above sheds light on this issue, showing that most IT organizations are either using just scripts (25 percent) or nothing at all (23 percent) to back up their AWS data. And when it comes to disaster recovery for AWS, only 19 percent have plans in place. Thirty-six percent are working on a plan, but an alarming 45 percent have no DR plan at all. Those who are relying on their cloud provider to protect their data are making a huge mistake. Because the big cloud services, such as Amazon EC2 or S3, are exceptionally durable and redundant, they assume that the cloud’s architecture mitigates any risk of downtime from a failure, error, outage or security attack. This is wrong. The end-user license agreements of AWS and most large public clouds put final responsibility for data on the customer. While the “cloud” itself is secured by AWS, everything within that cloud is the customer’s responsibility.

Quote for the day:

"Leadership in the past was a model of direction and control. Now it should help people set directions for the future and facilitate their delivery." -- John Bailey