IBM researchers build AI-powered prototype to help small farmers test soil

The AgroPad is a paper device about the size of a business card. It has a microfluidics chip inside that can perform a chemical analysis of a water or soil sample in less than 10 seconds. A farmer simply places a sample on one side of the card, and a set of circles on the other side displays the colorimetric test results. Using a dedicated smartphone app, the farmer can receive immediate, precise results: the app uses machine vision to translate the color composition and intensity of each circle into a chemical concentration, producing readings that are more reliable than those made by the human eye. The current prototype measures pH, nitrogen dioxide, aluminum, magnesium and chlorine, and the research team is working on extending the library of chemical indicators. AgroPads could be personalized to the needs of the individual farmer. Once the test results are in, the data can be streamed to the cloud and labeled with a digital tag that marks the time and location of the analysis. Results for millions of individual tests could be stored.
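The color-to-concentration step can be illustrated with a minimal sketch. It assumes a hypothetical calibration curve (normalized color intensity against concentration) for a single indicator circle and converts a reading by piecewise-linear interpolation. The calibration values, the RGB-to-intensity rule, and the `TaggedResult` record are all illustrative assumptions, not IBM's actual pipeline.

```python
from bisect import bisect_left
from dataclasses import dataclass
from datetime import datetime, timezone

# Hypothetical calibration curve for one indicator circle:
# (normalized color intensity, concentration in mg/L). Illustrative values only.
CALIBRATION = [(0.0, 0.0), (0.25, 1.0), (0.5, 2.5), (0.75, 5.0), (1.0, 10.0)]


def rgb_to_intensity(r: int, g: int, b: int) -> float:
    """Assumed rule: a darker circle means a stronger reaction (0.0 = white, 1.0 = black)."""
    return 1.0 - (r + g + b) / (3 * 255)


def intensity_to_concentration(intensity: float, curve=CALIBRATION) -> float:
    """Piecewise-linear interpolation between calibration points, clamped at both ends."""
    xs = [x for x, _ in curve]
    if intensity <= xs[0]:
        return curve[0][1]
    if intensity >= xs[-1]:
        return curve[-1][1]
    i = bisect_left(xs, intensity)  # index of the first calibration point >= intensity
    (x0, y0), (x1, y1) = curve[i - 1], curve[i]
    return y0 + (y1 - y0) * (intensity - x0) / (x1 - x0)


@dataclass
class TaggedResult:
    """A single test result tagged with the time and location of the analysis."""
    analyte: str
    concentration: float
    latitude: float
    longitude: float
    timestamp: str


def tag_result(analyte: str, intensity: float, lat: float, lon: float) -> TaggedResult:
    return TaggedResult(
        analyte=analyte,
        concentration=intensity_to_concentration(intensity),
        latitude=lat,
        longitude=lon,
        timestamp=datetime.now(timezone.utc).isoformat(),
    )


if __name__ == "__main__":
    reading = rgb_to_intensity(120, 110, 100)
    print(tag_result("nitrogen dioxide", reading, 0.0, 0.0))
```

Interpolation keeps the sketch dependency-free; a production app would presumably need a separate calibration per indicator and some correction for lighting conditions before the conversion.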

Microchip 'god mode' flaw: Is it time to rethink security?

This particular vulnerability might be described as a type of hardware backdoor, in which undocumented CPU instructions can take a process from an operating system's Ring 3, the least privileged level of access to resources, directly to Ring 0, the most privileged. Ring 3 is where applications run, and keeping them there prevents them from tampering with the data or code that other applications use. Ring 0 is reserved for the operating system itself, which manages the resources that all running processes can access. An application needs to be in Ring 0 to enable this backdoor, but security researcher Christopher Domas found that some systems seem to have been shipped with the backdoor already enabled. Software running in Ring 0 can potentially bypass the security mechanisms of any other process. If a process uses a password or cryptographic key, another process running in Ring 0 may be able to read that password or key, virtually eliminating the security it provides.

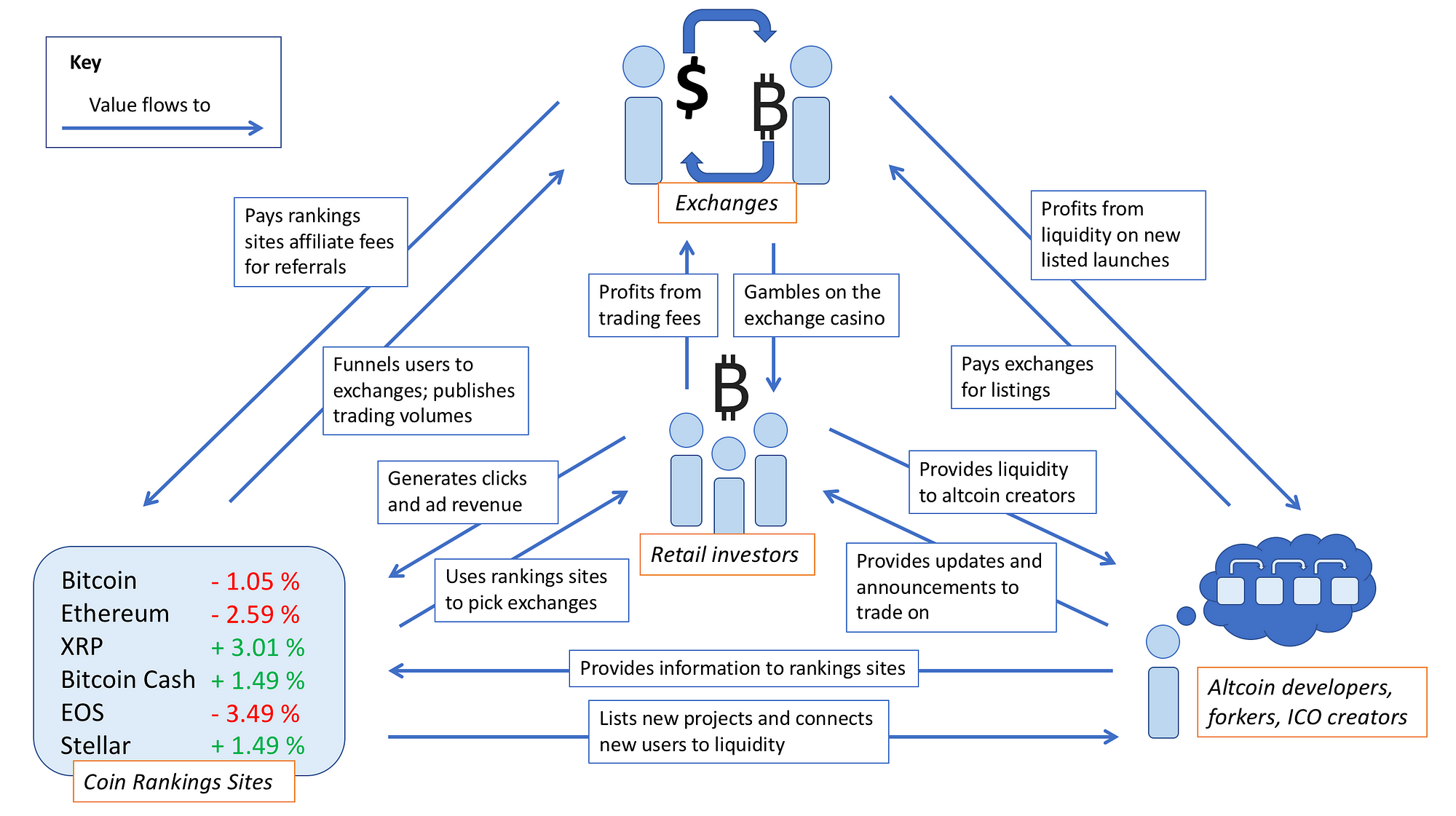

How blockchain technology could aid key data challenges

The current model for storing data is to keep it in one place. For example, a Microsoft Word document is saved to a desktop. Even if that document can be accessed through a server, including remotely, it is still saved in a single, centralized location. Blockchain is, arguably, the exact opposite. Data is stored in the “blocks” of the technology, and the blockchain as a whole is a cryptographically linked ledger that is replicated throughout the network. The data is decentralized through this replication. In other words, it is not saved to a single place, but instead exists on every node in the network. Even though the ledger exists in a public space, a private key is required to control a specific record, so data can be distributed widely without others being able to alter it. This manner of data storage protects information from ransomware and hacking attacks because a hacker would have to breach and alter most copies of the ledger at the same time to do any damage, as opposed to corrupting or stealing just one document in the current centralized model.
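The tamper resistance described above can be sketched in a few lines. This is a simplified model, not any real blockchain's implementation: each block stores the SHA-256 hash of the previous block, every node keeps a full replica, and a plain majority vote over replicas stands in for a real consensus protocol. The `Block`, `build_chain`, `is_valid`, and `majority_head` names are hypothetical.

```python
import hashlib
import json
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Block:
    index: int
    data: str
    prev_hash: str  # the previous block's hash links the chain together

    def hash(self) -> str:
        payload = json.dumps([self.index, self.data, self.prev_hash]).encode()
        return hashlib.sha256(payload).hexdigest()


def build_chain(records: list[str]) -> list[Block]:
    chain, prev = [], "0" * 64  # the first block points at an all-zero hash
    for i, record in enumerate(records):
        block = Block(i, record, prev)
        chain.append(block)
        prev = block.hash()
    return chain


def is_valid(chain: list[Block]) -> bool:
    """Every block must reference the hash of the block before it."""
    return all(chain[i].prev_hash == chain[i - 1].hash() for i in range(1, len(chain)))


def majority_head(replicas: list[list[Block]]) -> str:
    """Hash of the latest block that most internally valid replicas agree on."""
    heads = Counter(r[-1].hash() for r in replicas if is_valid(r))
    return heads.most_common(1)[0][0]


if __name__ == "__main__":
    honest = build_chain(["record A", "record B", "record C"])
    replicas = [list(honest) for _ in range(5)]  # five nodes, five full copies

    # Editing one block in one copy breaks that copy's hash links.
    replicas[0][1] = Block(1, "forged", honest[1].prev_hash)
    print("tampered copy valid?", is_valid(replicas[0]))

    # Rebuilding a whole copy keeps it internally valid, but it is outvoted.
    replicas[1] = build_chain(["record A", "forged", "record C"])
    print("network agrees with honest chain?", majority_head(replicas) == honest[-1].hash())
```

Real networks replace the simple vote with a consensus protocol such as proof of work or proof of stake, which is what makes rewriting most copies at once expensive for an attacker.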

Is a developer career right for you? 10 questions to ask yourself

Developers are among the most in-demand tech professionals in the workforce, with high salaries offered to those with the right skill sets. While learning to code and breaking into a new career may seem daunting, the high number of open jobs and training opportunities could make development a great option for many people. "A lot of developers suffer with imposter syndrome and feeling like they don't have enough knowledge or experience to apply for a developer position," said Cristina Blanchard, a front-end web developer at Brew Agency. "The truth is, if you have a solid knowledge and understanding of the most basic, core concepts of development, you can learn just about anything with the right training and a little tenacity. Don't be afraid to apply for a position you feel you may be under-qualified for, because you never know who may be willing to train you or help you get the experience you need."

Government projects watchdog recommends terminating Gov.uk Verify identity project

Sources suggest that GDS hopes to make a case to continue with Verify. Just this week, it announced that three further digital services using Verify had reached the “private beta” testing stage, although none of the services have a launch date. ... GDS is also understood to be making a case that Verify remains essential to the ongoing roll-out of Universal Credit, the government’s new benefits system. But even there, the Department for Work and Pensions has had to develop an additional identity system after finding that hundreds of thousands of benefits applicants could be unable to register successfully on Verify. ... There are also question marks over the commitment of the identity providers (IDPs) – also known as certified companies. A report by McKinsey for the Cabinet Office showed that more than 80% of users chose two of the seven IDPs – Experian and the Post Office – leaving Digidentity, Royal Mail, Barclays, Citizen Safe and Secure Identity to pick up the remainder between them.

Designing a Usable, Flexible, Long-Lasting API

Most APIs aren't truly RESTful. If you choose to build a RESTful API, do you understand the constraints of REST, including hypermedia (HATEOAS)? If you choose to build a partial REST or REST-like API, do you know why you are choosing not to follow certain constraints of REST? Depending on what your API needs to do and where it will be used, legacy formats such as SOAP may make sense. However, each format comes with tradeoffs in usability, flexibility, and development cost. Finally, as we start to plan our API, it's important to understand how our users will interact with it and how they'll use it in conjunction with other services. Be sure to use tools like RAML or Swagger/OAI during this process to involve your users, provide mock APIs for them to interact with, and ensure your design is consistent and meets their needs. As you design your API, it's also important to remember that you're laying a foundation to build upon at a later time.
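To make the hypermedia/HATEOAS constraint concrete, here is a minimal sketch of a resource representation whose links tell the client which actions are currently available. The `/orders` resource, its states, and the HAL-style `_links` layout (with a `method` hint that is not part of HAL itself) are illustrative assumptions, not part of the original article.

```python
import json


def order_representation(order_id: int, status: str) -> dict:
    """Build a hypermedia response: the client discovers next actions from the links."""
    base = f"/orders/{order_id}"
    links = {"self": {"href": base}}
    # Only advertise the state transitions that are valid right now.
    if status == "pending":
        links["cancel"] = {"href": f"{base}/cancel", "method": "POST"}
        links["pay"] = {"href": f"{base}/payment", "method": "POST"}
    elif status == "paid":
        links["receipt"] = {"href": f"{base}/receipt", "method": "GET"}
    return {"id": order_id, "status": status, "_links": links}


if __name__ == "__main__":
    print(json.dumps(order_representation(42, "pending"), indent=2))
```

Because the client follows `_links` instead of hard-coding URLs and state rules, the server can add or reorganize endpoints later without breaking existing clients, which is one way to keep the API a stable foundation to build on.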

How to Cultivate Security Champions at the Workplace

Some of the things you consider simple can make a big impact on people. Think even smaller: visit with people one-on-one as time and events allow. One last note: there is no better time than an incident debrief to educate users, one-on-one or in a group. The point is to get people’s attention. Show them why security is important. Show how easy it really can be for malicious actors to wreak havoc in your environment. Show how they can have a direct impact in helping to prevent that. A few people will take it to heart and develop a security mindset. Many people in information security are problem solvers, so approach it that way. Demonstrate how a malicious actor could easily attack your AD/Kerberos infrastructure, and see how many ask what can be done to mitigate it. Instead of answering, ask them what they would do and what they can think of. Make it a problem for them to solve. Just keep your audience in mind: demonstrating the intricacies of Kerberoasting may entice your server administrators, but it will be lost on business partners.

Cloud computing: Three reasons why it could be time to go cloud-first

"There's data governance questions and there might be clients that don't allow us to pass their data to the cloud -- and that's why there might be a situation why we can't go on demand. But our default option will always be to take the cloud option first if we can. And that approach will be multi-cloud where we'll use a range of providers." Kay says he doesn't believe the cloud is a one-size-fits-all situation right now. He says there's still a bit of an arms race taking place and that different providers have different strengths. "Some are better at doing things better than others. And we, therefore, want to be able to take advantage of those capabilities," says Kay. "So, we will not be dogmatic and push everything to a single provider. We're trying all of them -- at the SaaS level, we're using Salesforce, Microsoft Dynamics 365 and Workday. When it comes to IaaS and PaaS, we're using AWS, Azure and Google. That's a deliberate strategy. We have a view on which provider is stronger for a particular set of characteristics."

Four Ways to Take Charge in Your First Agile Project

Creating an environment of psychological safety is imperative for a high-performing team. Google conducted a two-year study on team collaboration and found that when individuals felt that they could share their honest opinions without fear of backlash, they performed far better. When employees feel that their opinions matter, their engagement levels peak and, according to Gallup’s research, their productivity increases by an average of 12%. Unfortunately, this doesn’t always happen, especially when teams are new to Agile and Scrum. The Agile Report found that among the top challenges reported when adopting an Agile approach were aligning it with the organization’s cultural philosophy, a lack of leadership support, and trouble with collaboration. All of these issues are related to people’s personalities, including their strengths and weaknesses. If a strong leader is put in place and a strategy is designed to play to each member’s strengths, many of these problems can diminish.

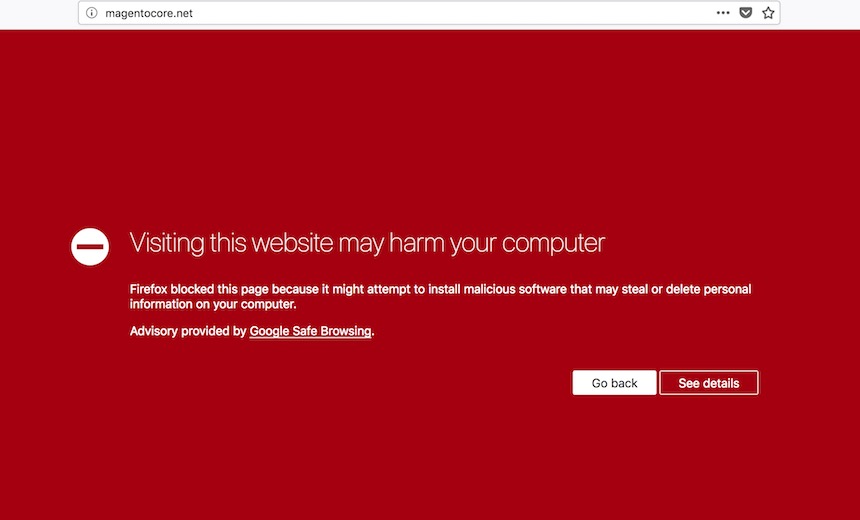

Ransomware Recovery: Don't Make Matters Worse

"Trying to decide whether to pay ransom or not is never an easy decision - the best answer is 'no, never' but that's not always a decision you can make," says former healthcare CIO David Finn, executive vice president of the security consultancy CynergisTek. "You will rarely negotiate from a position of strength with a hacker - or any criminal, for that matter. Having a well thought out plan would have helped, and certainly being able to restore the data yourself, without 'buying' decryption might have avoided the entire nasty event." An organization that chooses to pay attackers to unlock data "should apply the decryption key itself with whatever instruction the criminals can provide - instead of sending a file to the ransomware perpetrators to decrypt as evidence the key works," suggests Keith Fricke, principal consultant at tw-Security. "If possible, it is a good practice to have a third-party vendor pay for the decryption key on behalf of the [organization]," he adds.

Quote for the day:

"Give whatever you are doing and whoever you are with the gift of your attention." -- Jim Rohn