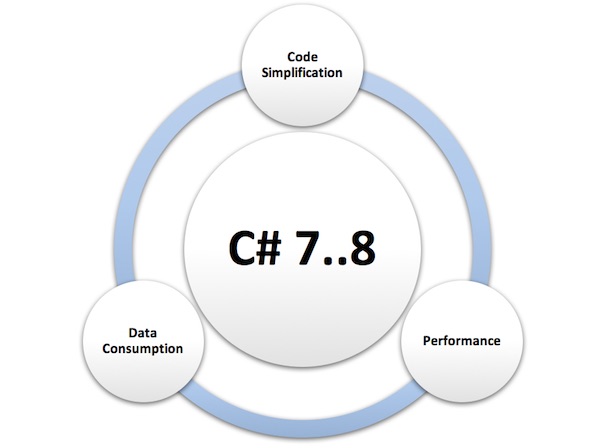

C# 8 Ranges and Recursive Patterns

Ranges make it easy to define a sequence of data. A range is a replacement for Enumerable.Range(), except that it defines the start and stop points rather than a start and a count, and it helps you write more readable code. ... Pattern matching is a powerful construct that is available in many functional programming languages, such as F#. Furthermore, pattern matching provides the ability to deconstruct matched objects, giving you access to parts of their data structures. C# offers a rich set of patterns that can be used for matching. Pattern matching was initially planned for C# 7, but after a while the .NET team found that it needed more time to finish the feature. For this reason, the team divided the task into two main parts: basic pattern matching, which was already delivered with C# 7, and advanced pattern matching for C# 8. In C# 7 we have seen the Const Pattern, Type Pattern, Var Pattern and the Discard Pattern. In C# 8, we will see more patterns, such as the Recursive Pattern, which consists of multiple sub-patterns like the Positional Pattern and the Property Pattern.
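The ideas in the excerpt above can be illustrated with a minimal sketch. It assumes C# 8 as it eventually shipped with .NET Core 3.0 or later, where a range such as `1..4` is used to slice an array and the end index is exclusive. The `Point` class, the `Demo` class and the `Describe` method are hypothetical names invented for this illustration; they do not come from the linked article.

```csharp
using System;

// Hypothetical type used only for this illustration.
public class Point
{
    public int X { get; }
    public int Y { get; }
    public Point(int x, int y) => (X, Y) = (x, y);

    // Deconstruct is what enables the positional pattern below.
    public void Deconstruct(out int x, out int y) => (x, y) = (X, Y);
}

public static class Demo
{
    public static string Describe(object o) => o switch
    {
        Point { X: 0, Y: 0 } => "origin",                     // property pattern
        Point(var x, 0) => $"on the x-axis at {x}",           // positional pattern
        Point { X: var x, Y: var y } => $"point ({x}, {y})",  // property pattern with var sub-patterns
        _ => "not a point"                                    // discard pattern
    };

    public static void Main()
    {
        int[] numbers = { 0, 1, 2, 3, 4, 5 };

        int[] middle = numbers[1..4];   // start inclusive, end exclusive
        int[] lastTwo = numbers[^2..];  // ^2 counts from the end

        Console.WriteLine(string.Join(",", middle));   // prints 1,2,3
        Console.WriteLine(string.Join(",", lastTwo));  // prints 4,5

        Console.WriteLine(Describe(new Point(0, 0)));  // prints origin
        Console.WriteLine(Describe(new Point(3, 0)));  // prints on the x-axis at 3
        Console.WriteLine(Describe(new Point(1, 2)));  // prints point (1, 2)
        Console.WriteLine(Describe("text"));           // prints not a point
    }
}
```

Each arm of the switch expression nests sub-patterns inside a type test, which is what makes the pattern recursive: the outer pattern matches a `Point`, and the inner patterns match its parts.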

There are also several issues to consider in terms of both PCI and HIPAA compliance when working with CSPs. Among them is the requirement that comes up during compliance audits regarding where your data resides and what protective measures are in place. With cloud services, that’s sometimes easier said than done. Many CSPs employ a network of data centers that work together to provide high availability and security of your data. As a result, the data may be moved to different data centers across large geographic spans based on service levels, resource demand, cost, latency, disaster recovery and business continuity needs. For security reasons, CSPs may be reluctant to divulge the location of their data centers or where data is specifically located at any one time. Things can become more complex in the case of global providers. With the European Union's implementation of the General Data Protection Regulation (GDPR), it is important to know where your data resides. Almost every business is touched by the impact of GDPR.

API Governance Models in the Public and Private Sector: Part 5

Beyond the technology, the legal department should have a significant influence over APIs going from development to production. It should provide a structured framework that applies easily across all services, while also allowing granular control over the fine-tuning of legal documents for specific services and use cases, with a handful of clear building blocks in use to help govern the delivery of APIs from the legal side of the equation. ... The legal department will play an important role in governing APIs as they move from development to production, and there needs to be clear guidance for all developers regarding what is required. Similar to the technical and business elements of delivering services, the legal components need to be easy to access, understand, and apply, but must also protect the interests of everyone involved with the delivery and operation of enterprise services.

Data Analytics Is The New Co-Pilot For Every CIO

Measurements are important – you won’t be motivated to improve what you’re not measuring. Organizations must always understand their security posture in order to know how quickly they can react to potential breach events. ... Compliance has become a much bigger deal over the last few years. Where organizations were once concerned about doing only as much as required to tick the box, they are now concerned about doing as much as they practically can to align with both the letter and spirit of the regulations. ... Rely on the experts. There are software packages available that bring you coverage for multiple compliance regimes in a single application. It makes sense to leverage these pre-built tools, as most compliance regime requirements are similar and processing data multiple times to attain related outcomes is not efficient. ... Leverage analytics. As I stated above, ticking the box is no longer enough. You have to put best efforts into solving for the spirit of the regulation, and analytics can help you improve your ability to identify the conditions and incidents that the regulations are really getting at.

Here’s how to make AI inclusive

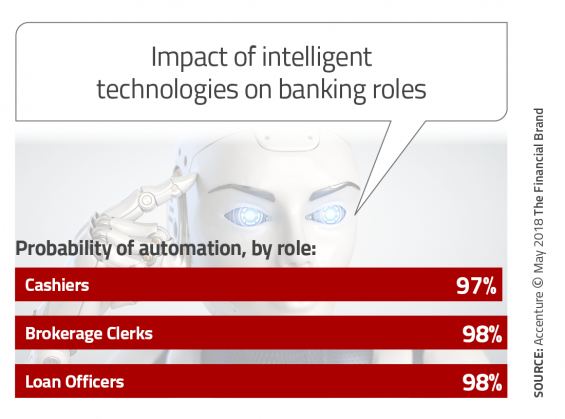

Governments around the world should prioritize preparing citizens for the proliferation of AI. Leaders should create a game plan that addresses the trajectory of job loss by asking difficult questions, including: should we slow down the evolution of technology to buy time to reskill the workforce? We know that certain jobs are going to become extinct. But can we minimize the impact by mapping out the “glide path” and helping prepare workers for new jobs before their current ones reach their natural conclusion? Think about AI like a car. There are two ways that the driver can reach a speed of 200 miles per hour. First, the driver can slam on the accelerator and go from 0 to 200 in a matter of seconds. Or, the driver can control the acceleration by gradually applying pressure and monitoring the speedometer. The second scenario is much safer, of course. The same is true when it comes to monitoring the acceleration of AI. Governments should not try to stop its progression.

7 Ways IoT Is Changing Retail in 2018

With IoT, you can set up sensors around the store that send loyalty discounts to certain customers when they stand near products with their smartphones, if those customers sign up for a loyalty program in advance. Additionally, you can use IoT to track items a customer has been looking at online, and send that customer a personalized discount when she’s in-store. Imagine if your customer perused your purses online, and then, in-store, received a discount on her favorite purse? Rather than offering general discounts on a wide variety of products, you can tailor each discount using IoT to maximize your conversion rates. Ultimately, finding ways to incorporate IoT devices into your day-to-day business requires creativity and foresight, but the benefits of IoT in retail -- as outlined above -- can help your business discover innovative solutions to attract more valuable and loyal long-term customers.

The rise of autonomous systems will change the world

“It’s tough to make predictions, especially about the future”, as physicist Niels Bohr is quoted to have said. One thing will for sure change the world as we know it today, and this is the rise of autonomous systems. I would expect major progress in combining symbolic logic for (explicit) knowledge representation with (implicit) deep neural networks. This development will lead to autonomous systems that learn while interacting with their environment, that are able to generalize, to draw deductions, and to adapt to new, previously unknown situations. ... One of my favourite application areas is exploratory search, i.e. searching where you don’t know exactly where the search process might lead you. Sometimes you might not be able to explicitly phrase your search intention, probably because you lack the vocabulary or are not an expert in the domain in which you are looking for information. Then, first you have to gather information about your domain before you might be able to perform pinpoint retrieval.

AI and Jobs: What’s The Net Effect?

Among workers mostly engaged in non-routine tasks, one in five will soon be using AI to some extent, according to Gartner’s research. However, those excited about using AI systems at work may need to temper their excitement. AI-powered systems will likely help with mundane tasks such as creating a work schedule, or systems might be able to prioritize emails so employees can focus on the most important tasks. However, these systems are likely to evolve into virtual secretaries over time, and those using these systems will find them to be valuable time-saving tools covering an ever-increasing range of tasks. AI seems to perpetually be viewed as a future technology. Research from O’Reilly, however, shows that the foundation for AI-empowered companies already exists. A total of 28 percent of the leaders polled in the early 2018 survey report already using deep learning, which is viewed as perhaps the most important AI technology for typical businesses. Furthermore, 54 percent of respondents plan on using deep learning for future projects.

Understanding Software System Behaviour With ML and Time Series Data

Because they own the memory of the simulation, they have access to the complete state of the system. Theoretically, this means it is possible to analyse the data to try to reverse-engineer what is going on at a higher level of understanding, just from looking at the underlying data. Although this tactic can provide small insights, it is unlikely that only looking at the data will allow you to completely understand the higher level of Donkey Kong. This analogy becomes really important when you are using only raw data to understand complex, dynamic, multiscale systems. Aggregating the raw data into a time-series view makes the problem more approachable. A good resource for this is the book Site Reliability Engineering, which can be read online for free. Understanding complex, dynamic, multiscale systems is especially important for an engineer who is on call. When a system goes down, he or she has to go in to discover what the system is actually doing. For this, the engineer needs both the raw data and the means to visualise it, as well as higher-level metrics that are able to summarise the data.
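As a rough illustration of aggregating raw data into a time-series view, the sketch below groups timestamped samples into fixed-width buckets and averages each bucket. It is not taken from the linked article: the `TimeSeriesSketch` class, the `Aggregate` method and the sample values are hypothetical, and a real monitoring stack would typically do this inside a metrics system rather than in application code.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

public static class TimeSeriesSketch
{
    // Groups raw (timestamp, value) samples into buckets of the given width
    // and returns the mean value per bucket, ordered by bucket start time.
    public static List<(DateTime BucketStart, double Mean)> Aggregate(
        IEnumerable<(DateTime Timestamp, double Value)> samples, TimeSpan width)
    {
        return samples
            // Round each timestamp down to the start of its bucket.
            .GroupBy(s => new DateTime(
                s.Timestamp.Ticks - s.Timestamp.Ticks % width.Ticks,
                s.Timestamp.Kind))
            .OrderBy(g => g.Key)
            .Select(g => (BucketStart: g.Key, Mean: g.Average(s => s.Value)))
            .ToList();
    }

    public static void Main()
    {
        // Made-up samples: two in the first minute, one in the second.
        var start = new DateTime(2018, 6, 1, 12, 0, 0, DateTimeKind.Utc);
        var samples = new List<(DateTime Timestamp, double Value)>
        {
            (start.AddSeconds(5), 1.0),
            (start.AddSeconds(40), 3.0),
            (start.AddSeconds(70), 10.0),
        };

        foreach (var bucket in Aggregate(samples, TimeSpan.FromMinutes(1)))
        {
            Console.WriteLine($"{bucket.BucketStart:o} mean={bucket.Mean}");
        }
    }
}
```

Averaging is only one possible summary; an on-call engineer might equally want the maximum or a percentile per bucket, which would only change the `Select` step.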

The top security and risk management trends facing organizations

“Customer data is the lifeblood of ever-expanding digital business services. Incidents such as the recent Cambridge Analytica scandal or the Equifax breach illustrate the extreme business risks inherent to handling this data,” Gartner noted. “Moreover, the regulatory and legal environment is getting ever more complex, with Europe's GDPR the latest example. At the same time, the potential penalties for failing to protect data properly have increased exponentially.” In the U.S., the number of organizations that suffered data breaches due to hacking increased from under 100 in 2008 to over 600 in 2016. "It's no surprise that, as the value of data has increased, the number of breaches has risen too," said Firstbrook. "In this new reality, full data management programs, not just compliance, are essential, as is fully understanding the potential liabilities involved in handling data."

Quote for the day:

"Be a solution provider and not a part of the problem to be solved" -- Fela Durotoye
