The value of visibility in your data centre

Keeping key enterprise applications up and running well is an absolute requirement for modern business. According to estimates from Gartner, IDC and others, the cost of IT downtime averages around £4,200 per minute. A simple infrastructure failure might cost around £75,000 per hour, while the failure of a critical, public-facing application costs more like £378,000 to £755,000 per hour. When failures impact large-scale global logistics and cause widespread inconvenience to customers, costs can quickly become staggering. Take last May's failure of British Airways' airline operations systems: BA estimated losing $102.19 million USD (£77.08 million GBP) in hard costs, including airfare refunds to stranded passengers, plus incalculable damage to its reputation. BA's parent company, IAG, subsequently lost $224 million USD (£170 million GBP) in value, based on its then-current stock valuation. Preventing such disasters, or intervening effectively and rapidly when they occur, means giving developers and operations staff (DevOps) visibility into IT infrastructure, networks, and applications.
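The per-minute average can be turned into a quick outage estimate. The short Python sketch below is illustrative only: it assumes a flat £4,200-per-minute rate taken from the average quoted above, and the function name `downtime_cost` is hypothetical. Real costs vary widely depending on which system fails and for how long.

```python
# Rough downtime-cost estimate based on the average figure quoted above
# (about £4,200 per minute). This is an order-of-magnitude guide only;
# actual costs depend heavily on the business and the system that fails.

AVERAGE_COST_PER_MINUTE_GBP = 4_200  # average cited from Gartner, IDC and others


def downtime_cost(minutes: float,
                  cost_per_minute: float = AVERAGE_COST_PER_MINUTE_GBP) -> float:
    """Return the estimated cost in GBP of an outage lasting `minutes`."""
    if minutes < 0:
        raise ValueError("outage duration cannot be negative")
    return minutes * cost_per_minute


if __name__ == "__main__":
    # One hour at the average rate: 60 * 4,200
    print(f"1 hour:     £{downtime_cost(60):,.0f}")
    # A 90-minute outage at the same rate
    print(f"90 minutes: £{downtime_cost(90):,.0f}")
```

At that average, an hour of downtime comes to £252,000, and a 90-minute outage reaches £378,000, the low end of the hourly range quoted for a critical application failure.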

Entrepreneurs think differently about risk

What is the worst thing that can happen? This is where a lot of people start, and it's why they don't even bother evaluating the rest. A person who hates their job and doesn't want to work for anyone again might shrug off becoming a freelancer because of the risks involved: quitting a 9–5, losing a steady paycheque and benefits, potentially not finding clients and needing to find a new job. However, I like to ask, “Then what?” Will that kill me? No, it just means I may need to find a new job. Plenty of people get laid off and need to find new jobs; that's not the end of the world. If you're worried that raising money for your startup will mean giving away too much ownership in your company, and that your investors will one day take control and oust you, that's a real fear. But even then, you would still own your shares in the company. And if they do oust you, maybe it's because you're doing an abysmal job as CEO and they need someone who can grow the company. You would still own a big chunk of that company.

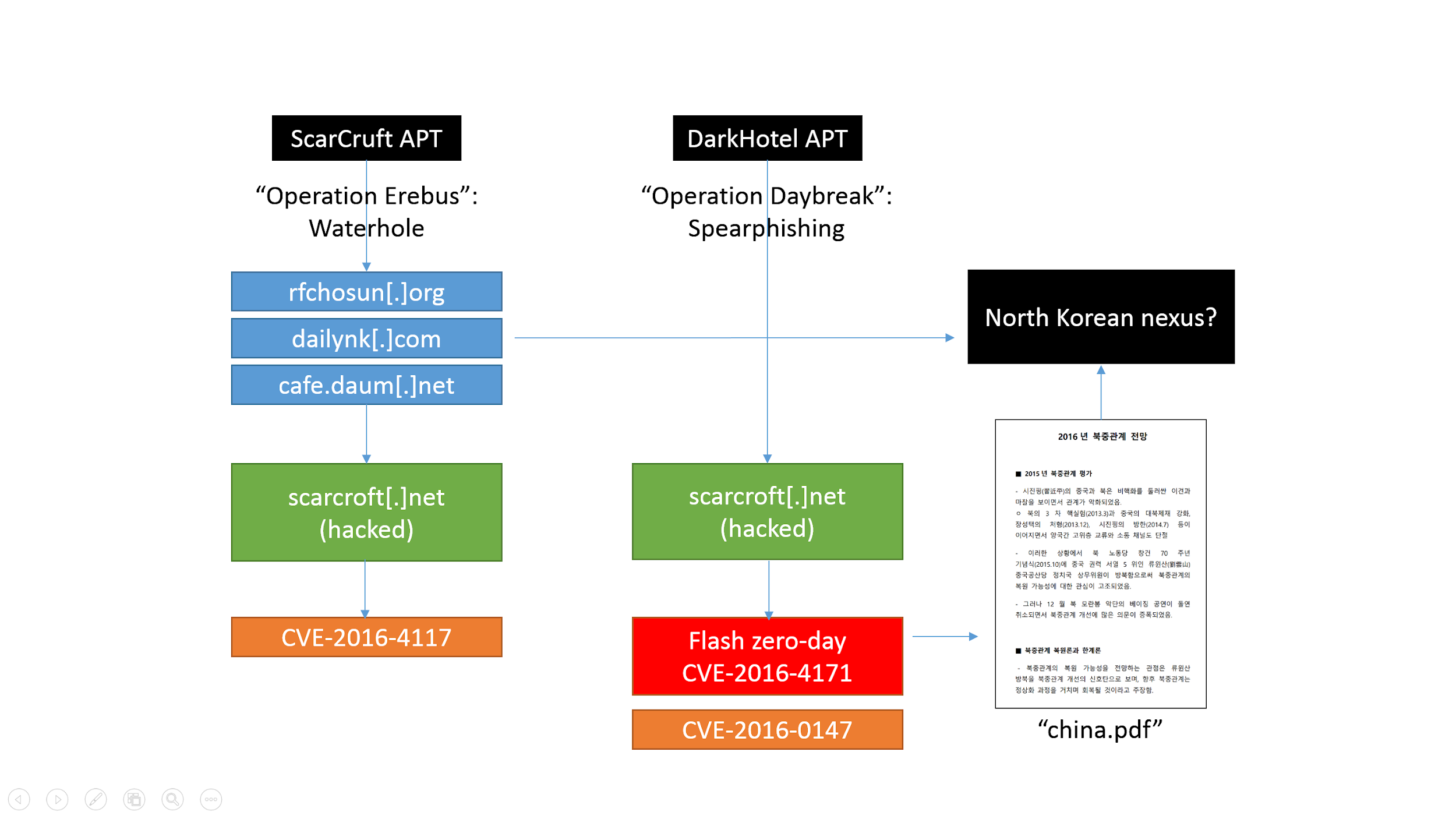

APT Trends Report Q2 2018

We also observed some relatively quiet groups coming back with new activity. A noteworthy example is LuckyMouse (also known as APT27 and Emissary Panda), which abused ISPs in Asia for waterhole attacks on high-profile websites. We wrote about LuckyMouse targeting national data centers in June. We also discovered that LuckyMouse unleashed a new wave of activity targeting Asian governmental organizations just around the time they had gathered for a summit in China. Still, the most notable activity during this quarter is the VPNFilter campaign, attributed by the FBI to the Sofacy and Sandworm (Black Energy) APT groups. The campaign targeted a large array of domestic networking hardware and storage solutions. The malware is even able to inject malicious code into traffic in order to infect computers behind the compromised networking device. We have provided an analysis of the EXIF-to-C2 mechanism used by this malware. This campaign is one of the most relevant examples we have seen of how networking hardware has become a priority for sophisticated attackers. Data provided by our colleagues at Cisco Talos indicates that this campaign was truly global in scale.

Organizations must act to safeguard 'the right to be forgotten'

The immediate need is clear: the capability to delete accounts and any associated personal data. But this is not as simple as it might first appear. Organizations are loath to give up data; it helps them improve their own business models and, quite frankly, it is profitable. One need only look at the recent reselling of user information to third parties to realize its value. Enterprises, then, would need to be compelled to part with what they perceive as valuable, and governments are attempting this with legislation such as GDPR. Beyond the necessary business case, however, lie technological challenges. While many online services have built-in deletion and removal options, lingering personal data is a different matter. If this personal information is located in an application or structured database, then the process is relatively straightforward: eliminate the associated account and its data is also removed. But if the sensitive data is in files detached from applications governed by the business, those files behave like abandoned satellites orbiting the earth, forever floating in the void of network-based file shares and cloud-based storage.
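The "relatively straightforward" database case can be sketched with a foreign key declared `ON DELETE CASCADE`, so that deleting an account row also deletes every row that references it. This is a minimal sketch using Python's built-in `sqlite3` module; the `accounts` and `orders` tables and the `erase_account` helper are hypothetical examples, not any particular product's schema.

```python
import sqlite3

# In-memory database standing in for an application's structured store.
# The table and column names are hypothetical, for illustration only.
conn = sqlite3.connect(":memory:")
conn.execute("PRAGMA foreign_keys = ON")  # SQLite enforces foreign keys only when enabled

conn.executescript("""
    CREATE TABLE accounts (
        id    INTEGER PRIMARY KEY,
        email TEXT NOT NULL
    );
    CREATE TABLE orders (
        id         INTEGER PRIMARY KEY,
        account_id INTEGER NOT NULL
                   REFERENCES accounts(id) ON DELETE CASCADE,
        ship_to    TEXT  -- personal data tied to the account
    );
""")

with conn:  # commits the sample data
    conn.execute("INSERT INTO accounts (id, email) VALUES (1, 'alice@example.com')")
    conn.executemany("INSERT INTO orders (account_id, ship_to) VALUES (?, ?)",
                     [(1, '1 High Street'), (1, '2 Mill Lane')])


def erase_account(conn: sqlite3.Connection, account_id: int) -> None:
    """Delete an account; ON DELETE CASCADE removes its dependent rows too."""
    with conn:  # commit on success, roll back on error
        conn.execute("DELETE FROM accounts WHERE id = ?", (account_id,))


erase_account(conn, 1)
left = conn.execute("SELECT COUNT(*) FROM orders WHERE account_id = 1").fetchone()[0]
print(left)  # 0: the cascade reached every linked row in the database
```

The cascade only reaches rows the database knows are linked. Copies of the same personal data exported to spreadsheets or documents on file shares and cloud storage are untouched, which is exactly the "abandoned satellites" problem.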

Big Data Is A Huge Boost To Emerging Telecom Markets

Big data is playing its biggest role in telecommunications by increasing the reach of major telecommunication brands in these markets. This is especially evident in Africa, where telecommunications market growth has been strongest. In 2004, only 6% of African consumers owned a mobile device. This figure has grown sharply over the past 14 years, and there are now over 82 million mobile users throughout the continent. In some regions of Africa, growth has been faster than even the most ambitious technology economists could have predicted: the number of people in Nigeria who own mobile devices has been doubling every year. A growing number of telecommunications providers are investing more resources trying to reach consumers throughout Africa and other emerging telecom markets. According to an analysis by NobelCom, this will likely lead to cheaper telephone calls between consumers in various parts of the world. Pairing big data and telecom has helped spur growth in the telecommunications industry in several ways.

Be smart about edge computing and cloud computing

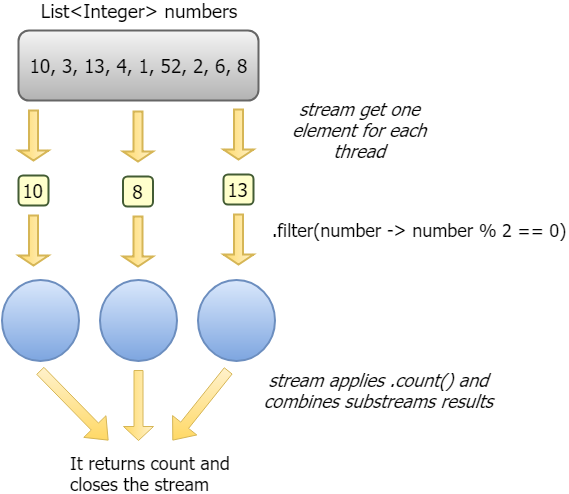

Edge computing is a handy trick. It places processing and data retention on a system that's closer to the target system it's collecting data for, and it allows that system to process data autonomously. The architectural advantages are plenty, including not having to transmit all the data to the back-end systems (typical in the cloud) for processing. This reduces latency and can provide better security and reliability as well. But, and this is a big “but,” edge computing systems don't stand alone. Indeed, they work with back-end systems to collect master data and provide deeper processing. This is how edge computing and cloud computing provide a single symbiotic solution. They are not, and will never be, mutually exclusive. Some best practices are emerging around edge computing that allow enterprises to make better use of both platforms. ... The edge computing hype will drive confusion in the next few years. To avoid that confusion, you need to understand what roles each type of system plays, and you need to understand that very few technologies completely replace existing ones.
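To make the division of labour concrete, here is a minimal sketch of an edge node that processes raw readings locally and forwards only a compact summary to the back end. It assumes a simple sensor feed and a single alert threshold; `EdgeSummary`, `summarize_at_edge` and the payload shape are hypothetical names chosen for illustration, and the actual transmission to a cloud service is left out.

```python
from dataclasses import asdict, dataclass
from statistics import mean
from typing import List


@dataclass
class EdgeSummary:
    """Compact record an edge node forwards to the back end (hypothetical shape)."""
    device_id: str
    count: int
    average: float
    maximum: float
    alerts: List[float]  # individual readings that crossed the local threshold


def summarize_at_edge(device_id: str, readings: List[float],
                      alert_threshold: float) -> EdgeSummary:
    """Process raw readings locally; only the summary leaves the edge node."""
    if not readings:
        raise ValueError("no readings to summarize")
    # Local, autonomous decision: flag out-of-range values without a cloud round trip
    alerts = [r for r in readings if r > alert_threshold]
    return EdgeSummary(device_id, len(readings), mean(readings), max(readings), alerts)


# Example: five raw temperature readings collapse into one small payload.
raw = [20.1, 20.3, 20.2, 35.7, 20.0]
summary = summarize_at_edge("sensor-7", raw, alert_threshold=30.0)
payload = asdict(summary)  # what would be sent to the cloud back end
print(payload)
```

The edge node handles the latency-sensitive decision (flagging the 35.7 reading) on its own, while the cloud back end still receives enough aggregated data for the deeper, cross-device processing the article describes.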

Can Cybersecurity be Entrusted with AI?

The technology can help fill cybersecurity skills gaps, but at the same time it is a powerful tool for hackers as well. In short, AI can act as a guard and a threat at the same time. What matters is who uses it and for what purpose. In the end, it all depends on natural intelligence to make good or bad use of artificial intelligence. There are paid and free tools available that attempt to modify malware to bypass machine-learning antivirus software. The question is how to detect and stop them. Cyberattacks such as phishing and ransomware are said to be much more effective when they are powered by AI. On the other hand, AI is extremely good at recognizing behavioural patterns and anomalies, which makes it an excellent tool for threat hunting. Will AI be the bright future of security, now that the sheer volume of threats is becoming very difficult for humans alone to track? Or might it usher in security's darkest era? It all depends on natural intelligence. Natural intelligence is needed to develop AI and machine-learning tools. Despite popular belief, these technologies cannot replace humans (in my personal opinion). Using them requires human training and oversight.
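The core idea behind anomaly-based threat hunting is to learn what "normal" looks like and flag what deviates from it. The sketch below deliberately swaps in a simple statistical technique (a z-score against a baseline) rather than a machine-learning model, so it illustrates the principle, not how any particular AI security product works. The function name, threshold and login data are all hypothetical.

```python
from statistics import mean, stdev
from typing import Dict, List


def find_anomalies(baseline: List[float], observed: Dict[str, float],
                   z_threshold: float = 3.0) -> List[str]:
    """Return the keys whose observed value is more than `z_threshold`
    standard deviations away from the baseline mean."""
    if len(baseline) < 2:
        raise ValueError("baseline needs at least two data points")
    mu = mean(baseline)
    sigma = stdev(baseline)  # sample standard deviation of "normal" behaviour
    if sigma == 0:
        # Perfectly flat baseline: any deviation at all is unusual
        return [key for key, value in observed.items() if value != mu]
    return [key for key, value in observed.items()
            if abs(value - mu) / sigma > z_threshold]


# Hypothetical data: failed logins per hour during a normal week
baseline_failed_logins = [2, 3, 1, 4, 2, 3, 2, 3]
this_hour = {"alice": 3, "bob": 2, "svc-backup": 40}
print(find_anomalies(baseline_failed_logins, this_hour))  # ['svc-backup']
```

A human analyst still has to decide whether the flagged service account is under attack or simply misconfigured, which matches the point that these tools need human training and oversight.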

How to Adopt a New Technology: Advice from Buoyant on Utilising a Service Mesh

Adopting a technology and deploying it into production requires more than a simple snap of the fingers. Making the rollout successful and delivering real improvements is even tougher. When you're looking at a new technology such as a service mesh, it is important to understand that the organizational challenges you'll face are just as important as the technology. But there are clear steps you can take to navigate the road to production. To get started, identify what problems a service mesh will solve for you. Remember, it isn't just about adopting technology: once the service mesh is in production, there need to be real benefits. This is the foundation of your road to production. Once you've identified the problem to be solved, it is time to go into sales mode. However small the price tag, getting a service mesh into production requires real investment, and that investment will be required of more than just you. Changes impact coworkers in ways that range from learning new technology to disruption of their mission-critical tasks.

How Businesses Can Navigate the Ethics of Big Data

The laws regarding data protection and privacy differ from country to country across the world. The EU has its own distinct set of laws on this matter, and they are noticeably different from those of the United States. Privacy in the EU is often said to be stronger than in the U.S. Although popular claims may exaggerate the difference, the EU is miles ahead of the U.S. when it comes to stringent data and privacy protection. Privacy is considered a fundamental right for all individuals living in the EU, and privacy and data protection are debated there as much as gun control is in the U.S. The U.S. does have privacy protection problems, but the crux of the matter is that the two jurisdictions govern privacy under separate laws. The diversity of data protection laws across countries suggests a need for globally accepted norms governing how privacy and protection are provided to users and their data. Such norms would set the standards and a pathway for others to follow when it comes to data protection.

Selling tech initiatives to the board: Eight success tips for IT leaders

Too many IT leaders, especially if they are busy running multiple projects, underestimate how much time it takes to put together a really persuasive presentation. Some of the most compelling presentations are those built around a demo of the technology being discussed or those with a strong video presentation that draws the audience into the topic. However, demos and videos aren't going to help if you don't have a clear and cogent message for board members who are charged with ensuring that the company is well run, is making the right kinds of investments, and is positioning itself for the future. If what you present doesn't check all of these boxes, it won't succeed. ... It's easy for a technology leader to get mired in tech talk and lose an audience. The board already knows that you know tech. What it wants to know is how well you understand the business and how tech can advance it. The best way to show them that you're focused on the business is to present a clear message in plain English and to avoid technology buzzwords and levels of detail that are extraneous to the business decision that has to be made.

Quote for the day:

"Growth happens when you fail and own it, not until. Everyone who blames stays the same." -- Dan Rockwell
