Developers, rejoice: Now AI can write code for you

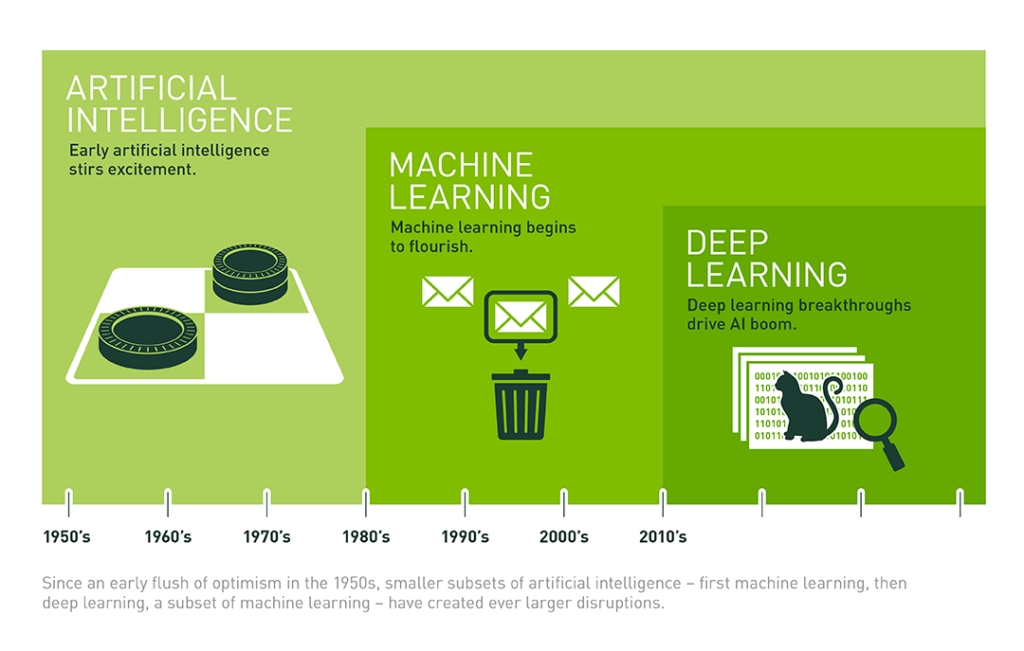

A new deep-learning application for writing software can help human programmers navigate the growing number of increasingly complex APIs, making coding easier for developers. The system, called BAYOU, was developed by Rice University computer scientists with funding from the US Department of Defense's Defense Advanced Research Projects Agency (DARPA) and Google. While the technology is still in its infancy, it represents a major breakthrough in using artificial intelligence (AI) to program software, and it could make coding much less time-intensive for human developers. BAYOU essentially acts as a search engine for coding: developers enter a few keywords and see Java code that will help with their task. Researchers have tried for more than 60 years to build AI systems that can write code, but those efforts failed because the methods required a lot of detail about the target program, which made them inefficient, BAYOU co-creator Swarat Chaudhuri, an associate professor of computer science at Rice, said in a press release.

6 Reasons Why IT Workers Will Quit In 2018

Across every generation, job satisfaction is strong: 70 percent of IT workers say they are content with their current job. But while they enjoy their careers, nearly two-thirds of IT pros said they aren’t happy with their compensation. By generation, 68 percent of millennials (those born between 1981 and 1997) said they feel underpaid, as did 60 percent of Gen Xers (born between 1965 and 1980) and 61 percent of baby boomers (born between 1946 and 1964). Of those who said they were looking for a new job in 2018, 81 percent of millennials said they wanted a higher salary, compared with 70 percent of Gen Xers and 64 percent of baby boomers. Millennials may be more motivated by salary given that they earn an average of $50,000 per year, while Gen Xers in IT earn an average of $65,000 and baby boomers around $70,000. Some companies already appear to be taking steps to retain their junior workers with pay raises: 62 percent of millennials expect a raise from their current employer in 2018, and 31 percent expect a promotion.

Employees still in the dark about data protection

According to the EEF report, a “worryingly large” 12% of manufacturers surveyed have no processes or measures in place to mitigate the threat. Only 62% of respondents said they train staff in cyber security, while 34% said they do not offer cyber security training and 4% said they did not know. “The Beyond the Phish report illustrates the importance of combining the use of assessments and training across many cyber security topic areas, including phishing prevention,” said Joe Ferrara, general manager at Wombat. “Our hope is that by sharing this data, infosec professionals will think more about the ways they are evaluating vulnerabilities within their organisations and recognise the opportunity they have to better equip employees to apply cyber security best practices and, as a result, better manage end-user risk.” According to Wombat, the report validates the need for organisations to use a combination of simulated attacks and question-based knowledge assessments to evaluate their end-users’ susceptibility to phishing.

Organizations gaining new benefits by automating data engineering

Historically, the necessity of data engineering was matched only by its tediousness. Preparing data for analytics and applications involved a good deal of wrangling, which produced two undesirable side effects. First, wrangling tasks such as cleansing, transforming, integrating and curating raw data traditionally monopolized data scientists’ time. Second, the complexity and long duration of these tasks often kept the business from using data. However, a number of advances in data engineering have now reduced data preparation time while freeing up more time for exploration and applications. By automating aspects of the wrangling process, expediting data quality measures, and making these functions both repeatable and easy to share with other users, these alternative solutions are “empowering your more business type users with functionality that maybe would have only been available to a database administrator or DB doers,” explains Noah Kays, director of content subscriptions at Unilog, which offers a product information management platform.
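To make the idea of automated, repeatable wrangling a little more concrete, here is a minimal Python sketch. It assumes records arrive as plain dictionaries; the `Pipeline` class and the step functions (`strip_whitespace`, `drop_missing`, `normalize_case`) are hypothetical illustrations, not Unilog's platform or any vendor's API. Because each cleansing step is an ordinary function, the same ordered sequence can be rerun on fresh data or handed to another user unchanged.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional

Record = Dict[str, Optional[str]]
Step = Callable[[List[Record]], List[Record]]


def strip_whitespace(rows: List[Record]) -> List[Record]:
    """Trim leading/trailing spaces from every string value."""
    return [
        {k: v.strip() if isinstance(v, str) else v for k, v in row.items()}
        for row in rows
    ]


def drop_missing(required: List[str]) -> Step:
    """Build a step that discards rows where any required field is None or empty."""
    def step(rows: List[Record]) -> List[Record]:
        return [r for r in rows if all(r.get(f) not in (None, "") for f in required)]
    return step


def normalize_case(fieldname: str) -> Step:
    """Build a step that lower-cases one field so values can be matched consistently."""
    def step(rows: List[Record]) -> List[Record]:
        return [
            {**r, fieldname: r[fieldname].lower()} if isinstance(r.get(fieldname), str) else r
            for r in rows
        ]
    return step


@dataclass
class Pipeline:
    """Hypothetical helper: an ordered, reusable list of cleansing steps."""
    steps: List[Step] = field(default_factory=list)

    def add(self, step: Step) -> "Pipeline":
        self.steps.append(step)
        return self  # allow chaining

    def run(self, rows: List[Record]) -> List[Record]:
        for step in self.steps:
            rows = step(rows)
        return rows


if __name__ == "__main__":
    raw = [
        {"sku": " A-100 ", "category": "Tools"},
        {"sku": "B-200", "category": None},
        {"sku": "C-300 ", "category": " PAINT "},
    ]
    cleanse = (
        Pipeline()
        .add(strip_whitespace)
        .add(drop_missing(["sku", "category"]))
        .add(normalize_case("category"))
    )
    print(cleanse.run(raw))
    # → [{'sku': 'A-100', 'category': 'tools'}, {'sku': 'C-300', 'category': 'paint'}]
```

Keeping the steps as small, composable functions is one way to get the "repeatable and easily shared" property the article describes; a real platform would add scheduling, logging and data-quality reporting on top.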

Apple Is Struggling To Stop A 'Skeleton Key' Hack On Home Wi-Fi

Even with all of Apple's expertise and investment in cybersecurity, some security problems are so intractable that the tech titan will need a whole lot more time and money to come up with a fix. One such issue has been uncovered by Don A. Bailey, founder of Lab Mouse Security, who described to Forbes a hack that, whilst not catastrophic, exploits iOS devices' trust in Internet of Things devices such as connected toasters and TVs. And, as he describes the attack, it can turn Apple's own chips into "skeleton keys." There's one real caveat: the attack first requires the hacker to take control of an IoT device that's exposed on the internet and accessible to outsiders. But, as Bailey noted, that may not be so difficult, given the innumerable vulnerabilities that have been highlighted in IoT devices, from toasters to kettles and sex toys. Once a hacker has access to one of those compromised IoT machines, they can start exploiting the trust iOS places in them.

“SamSam” ransomware – a mean old dog with a nasty new trick

One cybersecurity catchphrase you’ll hear these days is that “X is the new ransomware”. That’s because the ransomware scene is no longer clearly dominated by long-running, well-known “brand names” (so to speak) such as CryptoLocker, TeslaCrypt or Locky. In other words, many people are convinced that ransomware has had its day, is dying out, and is being overtaken by new threats. A popular value for the variable X in the equation above is cryptojacking, where crooks sneakily insinuate cryptocurrency mining software onto your computer or into your browser. Rather than snatching away your files, as ransomware does, cryptojackers steal your processing power and your electricity instead. This means the crooks earn a tiny bit of money from every victim for as long as they’re infected, rather than taking the all-or-nothing approach of ransomware, where victims face a stark choice: pay and win, or refuse and lose.

Five areas of fintech that are attracting investment

Overall investment and merger and acquisition activity in fintech almost halved from a record high of $46.7bn in 2015 to only $24.7bn last year, according to KPMG. This is partly a natural, even welcome, correction after the initial hype. Uncertainty created by the Brexit vote and Mr Trump’s election has also had an effect, however. Another negative factor was the governance scandal last year at Lending Club, the biggest online lender in the US, combined with disappointing performances by some of its rivals, which turned investors off peer-to-peer lending. Investor interest continues to rise in some areas of fintech, however, including cyber security, artificial intelligence, blockchain technology and insurtech. There has also been positive news from the two winners of last year’s Future of Fintech awards. Paytm, the Indian electronic payments company, has thrived following the country’s withdrawal of high-value banknotes, and Transmit Security, the cyber security start-up, recently announced a $40m self-funding round.

Data and privacy breach notification plans: What you need to know

IT alone does not have all the knowledge needed to execute even the most refined notification plan. Instead, “the lawyers, the security officers, crisis communication specialists and IT professionals all need to be lashed together at the hip,” Bahar said. “It takes their combined expertise and judgment.” Bahar even suggests that your organization’s legal team may need to take a leadership role in the notification process. “The potential litigation and regulatory stakes are so high, not to mention the public relations and reputational stakes, so the lawyers need to be heavily involved,” he said. The legal team can help work out what is said and how it is said, to best meet requirements and minimize risk, and they shouldn't be wasting time on legal research when every minute counts. Many regulations require public disclosure of a breach, whether to customers, shareholders, partners or others. This is where marketing and public relations teams can help.

Best Security Software: How 9 Cutting Edge Tools Tackle Today's Threats

Threats are constantly evolving and, just like everything else, tend to follow certain trends. Whenever a new type of threat is especially successful or profitable, many others of the same type will inevitably follow. The best defenses need to mirror those trends so users get the most robust protection against the newest wave of threats. Along those lines, Gartner has identified the most important categories in cybersecurity technology for the immediate future. We wanted to dive into the newest cybersecurity products and services from those hot categories that Gartner identified, reviewing some of the most innovative and useful from each group. Our goal is to discover how cutting-edge cybersecurity software fares against the latest threats, hopefully helping you to make good technology purchasing decisions. Each product reviewed here was tested in a local testbed or, depending on the product or service, within a production environment provided by the vendor. Where appropriate, each was pitted against the most dangerous threats out there today as we unleashed the motley crew from our ever-expanding malware zoo.

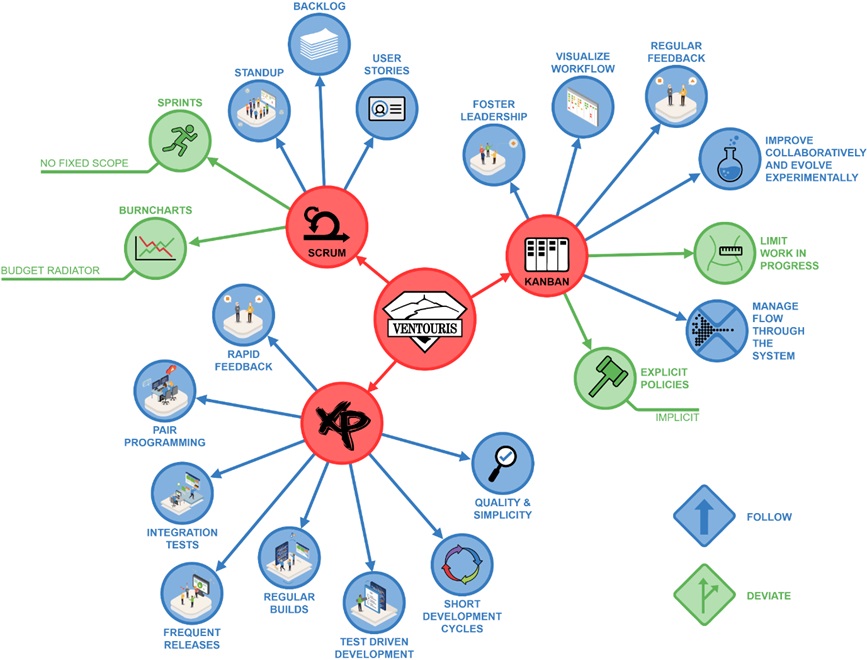

Sustainable Software with Agile

In the Agile Software Factory of Cegeka, every team reports bi-weekly so we can monitor whether we’re still doing the right things right within the agreed budget and timeframe. Teams fill in a PMI-style progress report covering customer, timing, budget, scope, dependencies and quality. The report is shared with software factory management and with the customers. We value transparency and openness about the status of all project activities. The report covers the sprint, the agreed SLAs, the defects… The application is also monitored continuously from several perspectives. The Ventouris team has implemented a continuous build and deploy environment in which the automated tests run on every check-in of new code. If a check-in breaks the code, the information radiator flags it, and someone must claim the fix as the highest priority. With very high test coverage, the team can guard against regressions. The Ventouris team uses New Relic as its application monitoring tool to follow up performance against the SLA for each transaction type.
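The idea of following up performance against an SLA per transaction type can be sketched in a few lines of Python. This is a hypothetical illustration, not New Relic's API or the Ventouris team's actual setup: it assumes response times have already been exported as (transaction type, milliseconds) pairs, and that each SLA is expressed as a 95th-percentile latency limit. The transaction names and thresholds are invented for the example.

```python
import math
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple


def percentile(values: List[float], pct: float) -> float:
    """Nearest-rank percentile: smallest sample with at least pct% of samples at or below it."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


def sla_report(
    samples: Iterable[Tuple[str, float]],
    sla_ms: Dict[str, float],
    pct: float = 95.0,
) -> Dict[str, Tuple[float, bool]]:
    """Return {transaction type: (observed percentile in ms, SLA met?)}."""
    by_type: Dict[str, List[float]] = defaultdict(list)
    for tx_type, ms in samples:
        by_type[tx_type].append(ms)

    report = {}
    for tx_type, limit in sla_ms.items():
        observed = by_type.get(tx_type)
        if not observed:
            continue  # no traffic for this transaction type in the window
        p = percentile(observed, pct)
        report[tx_type] = (p, p <= limit)
    return report


if __name__ == "__main__":
    # Hypothetical SLAs: 95th-percentile latency limits per transaction type.
    slas = {"search": 300.0, "checkout": 800.0}
    samples = [("search", 120), ("search", 180), ("search", 450),
               ("checkout", 500), ("checkout", 650)]
    for tx, (p95, ok) in sla_report(samples, slas).items():
        print(f"{tx}: p95={p95:.0f} ms, SLA {'met' if ok else 'BREACHED'}")
    # prints:
    # search: p95=450 ms, SLA BREACHED
    # checkout: p95=650 ms, SLA met
```

Grouping samples by transaction type before computing the percentile is what lets each SLA be judged on its own, so one slow transaction type cannot hide behind a healthy overall average.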

Quote for the day:

"If you don't demonstrate leadership character, your skills and your results will be discounted, if not dismissed." -- Mark Miller