The coming revolution is about an AI understanding the human brain: our preferences, our choices, our desires. That will require a Herculean effort. For one thing, my preferences change. Today I’m thinking about biking apparel; tomorrow I’m thinking about going to the beach. An AI will have to adapt, respond, adjust, and customize a thousand times per day. It will need to work like the human brain, constantly making micro-adjustments based on changing variables. A true AI is one that serves us and knows us; we no longer have to know or serve it. We speak and it hears us. We don’t need to learn its parameters; it will learn ours. We’re not there yet, of course. Most of us are still tethered to a smartphone all day. By 2030 or so, bots will become adaptive assistants that learn about our behaviors and fit smoothly into our daily routine. We’ll stop being enamored of tech.

The push toward comprehensive endpoint security suites

In a recent research project, ESG asked 385 security professionals the following question: “As new endpoint security requirements arise and your organization considers new endpoint security controls, which of the following choices do you think would be most attractive to your organization?” The results were quite interesting: 44 percent of respondents said they would choose a comprehensive endpoint security suite from a “next-generation” vendor, 43 percent said they would choose a comprehensive endpoint security suite from a single established vendor, 8 percent said they would choose an assortment of endpoint security technologies from different vendors, and 3 percent said they would choose an assortment of endpoint security technologies from vendors that establish technical partnerships for integration.

Science may have cured biased AI

Scientists at Columbia and Lehigh Universities have created a method for error-correcting deep learning networks. With the tool, they’ve been able to reverse-engineer complex AI, thus providing a work-around for the mysterious ‘black box’ problem. Deep learning AI systems often make decisions inside a black box, meaning humans can’t readily understand why a neural network chose one solution over another. This opacity arises because machines can perform millions of tests in a short amount of time, come up with a solution, and move on to performing millions more tests to come up with a better solution. The researchers created DeepXplore, software that exposes flaws in a neural network by tricking it into making mistakes. Co-developer Suman Jana of Columbia University told EurekAlert:

Opening a Bitcoin wallet is just one contingency plan firms can make to prepare for cyber breaches in which client data is stolen, according to John Sweeney, president of IT and cyber security advisors LogicForce. This can be a useful "last resort" when the data is not backed up and cannot be restored unless a ransom is paid. "The firms doing this are smarter," said Sweeney, and are looking to take "conscientious" proactive, rather than reactive, steps. Sweeney stressed he did not generally advocate paying ransoms, but said it "makes sense" for firms to have a Bitcoin wallet to hand. "I certainly don't see it as a bad move," he said. Data breaches at law firms are a growing concern: confidential information, often sent in unencrypted emails, risks being stolen and ransomed back to firms, used for fraud or sold to third parties to be used in crimes such as insider trading.

In actual fact, banks are now competing against every firm in the world that delivers a powerful, positive and engaging digital experience for its customers. If we take customer-centric innovators like Amazon, Netflix, Google and Facebook, and examine what sets them apart from the competition, we see it’s their ability to experiment, scale and deliver new features and functionality on an almost constant basis. And how do they manage this? They leverage the full capabilities and flexibility that cloud technologies can offer. It is this shift that is responsible for the banking world now embracing digital transformation. Once the realm of retail banking, digital transformation is now entering the uncharted territories of front, middle and back office operations of commercial, investment, business and private banks.

The #1 IOT Challenge: Use Case Identification, Validation and Prioritization

So while we have an amazing compilation of technologies, sensors, gateways, connected devices and such for capturing data, understanding ahead of time what you are going to do with that data, and why, is important because it frames what technologies, architectures, data, analytics and applications the organization is going to need in order to “monetize” IOT. So before you jump into the IOT pond, let’s make sure that there are no logs, boulders or sea monsters waiting for you. Let’s start our IOT journey by first creating an “IOT Business Strategy.” ... There is a bounty of business use cases from which the business can choose in order to monetize its IOT efforts. However, this bounty of use cases is both a gift and a curse, because the best way to ensure that you don’t successfully complete any use case is to try to do them all.

Will Machine Learning Make You a Better Manager?

“If you are a credit card processor and you have everyone’s transactions, you could predict whether a particular customer is going to run themselves into debt and default in the future.” Machine learning is even being used to learn more about machines, says Teodorescu, who points out that manufacturers are increasingly using algorithms for preventive maintenance. “You can predict when things are going to break down based on prior performance,” Teodorescu says. “That could preempt costly assembly line shutdowns later.” In all of these ways, it’s clear that while machines may not be taking over the world any time soon, machine learning certainly is. “It will become less and less a mysterious thing and more of a regular topic taught in schools in 20 years,” says Teodorescu. “It will be something everyone learns.”

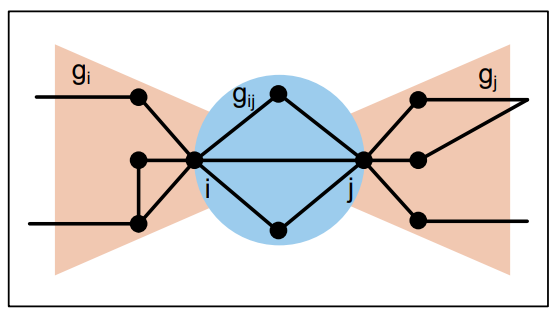

Building Reactive Systems Using Akka’s Actor Model & DDD

The actor model is designed to be message-driven and non-blocking, with throughput as part of the natural equation. It gives developers an easy way to program against multiple cores without the cognitive overload typical in concurrency. Let’s see how that works. Actors act as senders and receivers: simple message-driven objects designed for asynchronicity. Let's revise the ticket counter scenario described above, replacing a thread-based implementation with actors. An actor must of course run on a thread. However, actors only use threads when they have something to do. In our counter scenario, the requestors are represented as customer actors. The ticket count is now maintained by an actor, which holds the current state of the counter. Neither the customer actors nor the ticket actor hold threads when they are idle, that is, when they have no messages to process.
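The ticket counter scenario can be sketched in a few lines of plain Python. This is an illustration of the idea only, not Akka's API: the `Actor`, `ActorSystem`, `TicketCounter` and `Customer` classes, the `"buy"`, `"sold"` and `"sold_out"` messages, and the single-threaded dispatcher loop (standing in for a thread pool) are all assumptions made for the example.

```python
from collections import deque


class ActorSystem:
    """Hypothetical dispatcher: runs only actors that have pending messages."""

    def __init__(self):
        self.ready = deque()

    def schedule(self, actor):
        # An actor is queued for execution only when it has mail to process.
        if actor not in self.ready:
            self.ready.append(actor)

    def run(self):
        # Drain mailboxes until every actor is idle again.
        while self.ready:
            actor = self.ready.popleft()
            while actor.mailbox:
                message, sender = actor.mailbox.popleft()
                actor.receive(message, sender)


class Actor:
    def __init__(self, system):
        self.system = system
        self.mailbox = deque()

    def tell(self, message, sender=None):
        # Non-blocking send: enqueue the message and return immediately.
        self.mailbox.append((message, sender))
        self.system.schedule(self)

    def receive(self, message, sender):
        raise NotImplementedError


class TicketCounter(Actor):
    """Owns the ticket count; only this actor ever mutates it."""

    def __init__(self, system, tickets):
        super().__init__(system)
        self.remaining = tickets

    def receive(self, message, sender):
        if message == "buy":
            if self.remaining > 0:
                self.remaining -= 1
                sender.tell("sold", self)
            else:
                sender.tell("sold_out", self)


class Customer(Actor):
    def __init__(self, system, name):
        super().__init__(system)
        self.name = name
        self.result = None

    def receive(self, message, sender):
        # Record the counter's reply.
        self.result = message


system = ActorSystem()
counter = TicketCounter(system, tickets=2)
customers = [Customer(system, f"customer-{i}") for i in range(3)]

for customer in customers:
    counter.tell("buy", sender=customer)

system.run()
results = [customer.result for customer in customers]
print(results, counter.remaining)
```

Because the counter handles one message at a time and is the only owner of `remaining`, no lock is needed around the decrement. In a real actor runtime the dispatcher would hand ready actors to a pool of threads, which is what lets idle actors consume none.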

Microsoft's open source sonar tool helps developers find security flaws in their websites

Beyond open sourcing the code, Microsoft donated the project to the JS Foundation over the summer to make it more accessible to all. Microsoft intended for sonar to "avoid reinventing the wheel," Molleda wrote, instead tapping and integrating existing tools and services that help developers build for the web. To that end, sonar integrates with aXe Core, AMP validator, snyk.io, SSL Labs, and Cloudinary. The tool could make a real difference for developers in terms of producing higher quality websites: A recent Northeastern University analysis of over 133,000 websites found that 37% had at least one JavaScript library with a known vulnerability. As ZDNet noted, Snyk also ran a scan of the top 5,000 URLs earlier this year, and found that more than 76% were running a JavaScript library with at least one vulnerability as well.

Sony’s big bet on 3D sensors that can see the world

The new 3-D detectors are in a category called time-of-flight sensors, which emit infrared light pulses and measure the time it takes for them to bounce back. The basic technology has been around for a while and forms the basis for the Xbox’s motion-based Kinect, as well as laser-based rangefinders on autonomous vehicles and in military planes. Sony’s big innovation over existing TOF sensors is that its sensors are smaller and calculate depth at greater distances. Used with regular image sensors, they effectively give machines the ability to see like humans. “Instead of making images for the eyes of human beings, we’re creating them for the eyes of machines,” Yoshihara said. “Whether it’s AR in smartphones or sensors in self-driving cars, computers will have a way of understanding their environment.” The most immediate impact from TOF sensors, which will be fabricated at Sony’s factories in Kyushu, will probably be seen in augmented-reality gadgets.
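The time-of-flight principle comes down to one line of arithmetic: the pulse travels to the object and back, so the distance is half the round-trip time multiplied by the speed of light. The sketch below shows only that general relationship; the function name and the 20-nanosecond sample value are made up for illustration, and nothing here describes how Sony's sensors are actually implemented.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458  # exact by SI definition


def distance_from_round_trip(round_trip_seconds: float) -> float:
    """Distance to the object in meters, given the pulse's round-trip time."""
    # The pulse covers the distance twice (out and back), hence the division by 2.
    return SPEED_OF_LIGHT_M_PER_S * round_trip_seconds / 2


# Hypothetical reading: a pulse that returns after 20 nanoseconds.
distance_m = distance_from_round_trip(20e-9)
print(f"{distance_m:.3f} m")
```

A 20-nanosecond round trip corresponds to roughly three meters, which gives a sense of how fine the timing has to be for depth sensing at room scale.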

Quote for the day:

"Education's purpose is to replace an empty mind with an open one." -- Malcolm Forbes

![wireless network - internet of things edge [IoT] - edge computing](https://images.idgesg.net/images/article/2017/09/wireless_network_management_world_map_iot_internet_of_things_edge_computing_thinkstock_684807514_3x2_1200x800-100736491-large.jpg)