Establish AI Governance, Not Best Intentions, to Keep Companies Honest

Transparency is necessary to adapt analytic models to rapidly changing

environments without introducing bias. The pandemic’s seesawing epidemiologic

and economic conditions are a textbook example. Without an auditable,

immutable system of record, companies have to either guess or pray that their

AI models still perform accurately. This is of critical importance as,

say, credit card holders request credit limit increases to weather

unemployment. Lenders want to extend as much additional credit as prudently

possible, but to do so, they must feel secure that the models assisting such

decisions can still be trusted. Instead of ferreting through emails and

directories or hunting down the data scientist who built the model, the bank’s

existing staff can quickly consult an immutable system of record that

documents all model tests, development decisions and outcomes. They can see

what the credit origination model is sensitive to, determine if features are

now becoming biased in the COVID environment, and build mitigation strategies

based on the model’s audit investigation. Responsibility is a heavy mantle to

bear, but our societal climate underscores the need for companies to use AI

technology with deep sensitivity to its impact.

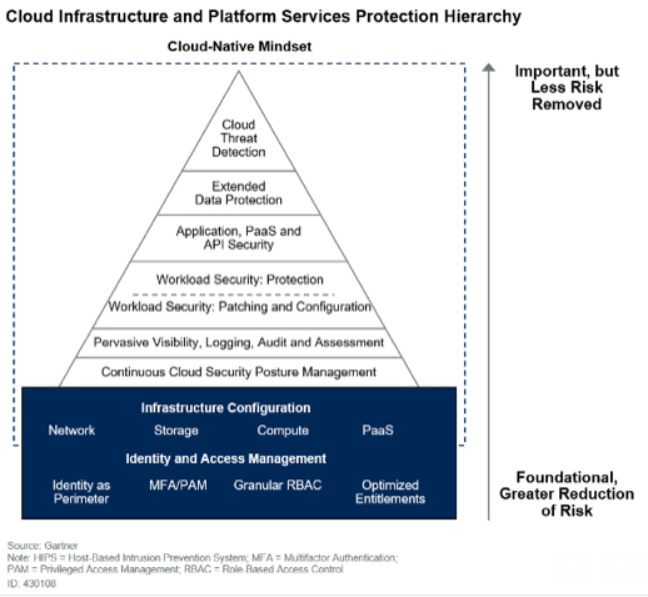

The three stages of security risk reprioritization

As organizations currently undergo planning and budget allocation for 2021, they

are looking to invest in more permanent solutions. IT teams are trying to

understand how they can best invest in solutions that will ensure a strong

security posture. It is also becoming more important to understand the need for

complete visibility into the endpoint, even as devices are operating on remote

networks. Policies are being created around how much work should actually be

done on a VPN, and in the process organizations are creating more forward-looking,

permanent policies and technology solutions. But as security teams embrace new

tools for security and operations to enable continuity efforts, those tools also

generate new attack vectors. COVID-19 has presented the opportunity for the IT

community to evaluate what can and can’t be trusted, even when operating under

Zero Trust architectures. For example, some of the technologies, like VPN, can

undermine what they were designed for. At the beginning of the pandemic, CISA

issued a warning around the continued exploitation of specific VPN

vulnerabilities.

Updates To The Open FAIR Body Of Knowledge Part 2

The Open FAIR BoK Update Project Working Group made a deliberate effort to

more logically present information in O-RA. In Section 4: Risk Measurement:

Modeling and Estimate, the ideas of accuracy and precision are now presented

before the concepts of subjectivity and objectivity, and the section ends with

the concepts of estimates and calibration. O-RA now also emphasizes having

usefully precise estimates; in other words, an estimate is usefully precise if

more precision would not improve or change the decision being made with the

information. The concept of “Confidence Level in the Most Likely Value” as a

parameter to model estimates has been removed from O-RA in bringing it to

Version 2.0. Instead, this concept has been replaced by the choice of

distribution that best represents what the Open FAIR risk analyst knows about

the risk factor being modelled; however, Open FAIR is agnostic on the

distribution type used. O-RA Version 2.0 also takes inspiration from the Open FAIR™

Risk Analysis Process Guide to better define how to do an Open FAIR risk

analysis in Section 5: Risk Analysis Process and Methodology. To do this, O-RA

specifies that a risk analyst must first scope the analysis by identifying a

Loss Scenario (Stage 1). The Loss Scenario is the story of loss that forms a

sentence from the perspective of the Primary Stakeholder.
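
To illustrate what it means to represent a risk factor by a distribution rather than by a single value plus a confidence level, here is a minimal Python sketch. Open FAIR is agnostic on the distribution type, so the triangular distribution used here is only an assumption chosen because it is in the standard library; the function name `simulate_loss` and the minimum, most likely and maximum figures are hypothetical and do not come from O-RA.

```python
import random
import statistics


def simulate_loss(minimum, most_likely, maximum, trials=10_000, seed=0):
    """Draw loss samples from a triangular distribution (an assumed choice)."""
    rng = random.Random(seed)  # fixed seed so repeated runs give the same samples
    # random.triangular takes (low, high, mode), in that order
    return [rng.triangular(minimum, maximum, most_likely) for _ in range(trials)]


# Hypothetical analyst estimate for one loss factor, in currency units
losses = simulate_loss(minimum=10_000, most_likely=50_000, maximum=250_000)

mean_loss = statistics.fmean(losses)
# quantiles(n=10) returns nine cut points; the last is the 90th percentile
p90 = statistics.quantiles(losses, n=10)[-1]

print(f"mean loss: {mean_loss:,.0f}")
print(f"90th percentile loss: {p90:,.0f}")
```

Swapping in a different distribution changes only the sampling line. If the decision being made would be the same across the plausible range of the outputs, the estimate is already usefully precise in the sense described above.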

'Return to Office' Phishing Emails Aim to Steal Credentials

In the phishing campaign uncovered by Abnormal Security, the emails are

disguised as an automated internal notification from the company as indicated by

the sender's display name. "But the sender's actual address is

'news@newsletterverwaltung.de,' an otherwise unknown party," the research report

states. "Further, the IP originates from a blacklisted VPN service that is not

consistent with the corporate IP, which indicates the sender is impersonating

the automated internal system." The emails, sent to specific employees, contain

an HTML attachment that bears the recipient's name, which lures employees into

opening it. The email also contains text that makes it seem as if the recipient

has received a voicemail, researchers state. By clicking on the attachment, the

user is redirected to a SharePoint document with new instructions on the

company's remote working policy. "Underneath the new policy, there is text that

states 'Proceed with acknowledgement here.' Clicking on this link redirects the

user to the attack landing page, which is a form to enter the employee's email

credentials," researchers note. Once a recipient falls victim to this trap, the

login credentials for their email account are harvested.
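
The mismatch the researchers describe, a display name that claims to be internal paired with an address from an unrelated domain, can be checked mechanically. The following is a minimal Python sketch, not Abnormal Security's detection method. The function name `is_suspicious_sender`, the company name "Example Corp" and the domain `example.com` are hypothetical; only the sender address is taken from the report.

```python
from email.utils import parseaddr


def is_suspicious_sender(from_header, company_name, corporate_domain):
    """Flag a From header whose display name claims the company
    while the address belongs to a different domain."""
    display_name, address = parseaddr(from_header)
    domain = address.rpartition("@")[2].lower()
    corporate_domain = corporate_domain.lower()

    # Does the display name present itself as the company?
    claims_internal = company_name.lower() in display_name.lower()
    # Is the address on the corporate domain or one of its subdomains?
    is_internal = (
        domain == corporate_domain
        or domain.endswith("." + corporate_domain)
    )
    return claims_internal and not is_internal


# Hypothetical header modelled on the campaign described above
header = '"Example Corp Notifications" <news@newsletterverwaltung.de>'
print(is_suspicious_sender(header, "Example Corp", "example.com"))
```

A check like this covers only the display-name signal. The report also cites the blacklisted VPN origin of the sending IP, which would need a separate reputation lookup.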

CIO interview: John Davidson, First Central Group

“Intelligent automation means so much more for us than an efficiency tool,”

says Davidson. “We are building an entirely new technical competency into our

business, so that it becomes part of our DNA. This not only changes

operational execution but, importantly, changes the management mindset about

the art of the possible and strategic decision-making.” The automated renewal

process is another area where Blue Prism has been deployed. With the support

of Blue Prism’s partner, IT and automation consultancy T-Tech, the First

Central team can check the accuracy of more than 3,000 renewal

invitations issued daily in just two hours. The new process verifies each renewal

notice, removing the need for costly, time-intensive manual work downstream to

correct anomalies and reducing the risk of a regulatory incident. Along

with driving operational efficiencies, Davidson believes RPA also boosts

business confidence. “Risk mitigation is a lot more intangible, but we can

measure the cost of distraction and can measure our effectiveness from a

robotics perspective,” he says. Davidson’s team has established a robotics

capability for the business. “It is not my job to close down

operational risk,” he says. “That’s the responsibility of the process owner.

My team has to deliver technology that closes down the risk.”

Q&A on the Book The Power of Virtual Distance

Virtual work gives us many options as to where, when and how to work. And this

is highly useful and a positive development. However, as we discovered from the

beginning, the trade-offs and unintended consequences are extensive and need to

be corrected. When we work mainly through screens, the human contextual markers

that guide our cognitive and emotional selves, to know who we can trust and

under what circumstances, disappear behind virtual curtains. We have shown

conclusively that high Virtual Distance is the statistical equivalent of

Distrust, while lower Virtual Distance results in the strong trust bonds we need

to build relationship foundations that ultimately result in both better work

product and higher levels of well-being. Recently a senior executive from

a large global company expressed his concern regarding the fact that many

leaders do not trust their employees to work virtually. And we’ve found that

it’s a two-way street, as many employees don’t trust their leaders to assess or

treat them fairly under these conditions. The erosion of trust was highly

problematic before COVID-19. Now, it’s risen to the level of a “crisis of

distrust”.

Why I'd Take Good IT Hygiene Over Security's Latest Silver Bullet

The most common way to perform lateral movement is to reuse privileges in

the assets that attackers have a foothold on, such as secrets and

credentials stored on breached machines. Vendors will preach that they can

distinguish between legitimate traffic and lateral movement, and even

automatically block such illicit activity. They'll use terms like machine

learning and AI to make their product sound advanced, but these capabilities

are very limited. The product may block well-known malware that performs the

exact same sequence in any invocation and hence was "signed" by them —

making such products glorified, network-based, signature-matching systems.

But because AI and machine learning are based on training, they aren't able

to distinguish between legitimate traffic and lateral movement with an

accuracy that fully supports runtime prevention. Moreover, no one knows how

these applications work in all scenarios. Are you willing to block traffic

just because it hasn't been seen before? Or what about an edge case in the

app it's never seen? On the other hand, managing lateral movement risk

is definitely possible. This can be done by analyzing the secrets and

privileges stored and associated with any given asset and determining if

they're overly permissive.

Automation Justification

The human touch is also recommended in code reviews — yes, please use the code

grammar checkers and test coverage tools, but getting your code reviewed and

reviewing others’ code benefits everyone involved. Sometimes folks worry about

the cost of tools and labor to get the process started. Lastly, when starting

a larger automation project, do not try to do everything at once. Prioritizing

and easing into the automation process makes it simpler and increases the

probability it can be done with no loss of functionality. As for

naysayers, the reasons they give vary: some say “if it ain’t broke, don’t

fix it,” some don’t feel comfortable if they are not in control, some do

not understand the tools needed, and some feel like a

computer will replace their job. So what do we do? Show them the metrics that

can show improvements, teach them how to use the tools, or just let them know

that, now that their time is freed up, they can do more meaningful, fun, cool

stuff with their time. Alluding back to an earlier slide, here are some

metrics that will show your team, your management, and the bean counters some

improvement: cost and time savings; test coverage and speedup; customer

satisfaction; fewer defects; faster time to release, as well as to recover

from issues; and reduced risk.

The vicious cycle of circular dependencies in microservices

In software engineering, modularity refers to the degree to which an

application can be divided into independent, interchangeable modules that

work together to form a single functioning item that can serve a specific

business function. Modularity promotes reusability, maintainability and

manageability, along with low coupling and high cohesion. Despite the

benefits it offers, modular design is still plagued by dependency problems.

In a typical microservices architecture, you'll often encounter dependencies

among the services and components. Although these services are modeled as

isolated, independent units, they still need to communicate for the purpose

of data and information exchange. Ideally, a microservices application

shouldn't contain circular dependencies. This means that one service should

not call another one directly. Instead, those services should operate on

event-based triggers. However, reality dictates that most developers will

still need to closely link certain parts of an application, and problematic

dependencies will persist. A circular dependency is defined as a

relationship between two or more application modules that are codependent.
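
Circular dependencies of this kind can be found automatically once the calls between services are written down as a graph. The sketch below is a minimal depth-first search in Python, offered as an illustration rather than as the article's method. The function name `find_cycle` and the service names are hypothetical.

```python
from typing import Dict, List, Optional


def find_cycle(calls: Dict[str, List[str]]) -> Optional[List[str]]:
    """Return one circular call chain, such as ['a', 'b', 'a'], or None."""
    UNVISITED, ON_PATH, DONE = 0, 1, 2
    state = {service: UNVISITED for service in calls}
    path: List[str] = []  # services on the current depth-first path

    def visit(service: str) -> Optional[List[str]]:
        state[service] = ON_PATH
        path.append(service)
        for callee in calls.get(service, []):
            callee_state = state.get(callee, UNVISITED)
            if callee_state == ON_PATH:
                # The callee is already on the current path: a cycle closes here
                return path[path.index(callee):] + [callee]
            if callee_state == UNVISITED:
                found = visit(callee)
                if found:
                    return found
        path.pop()
        state[service] = DONE
        return None

    for service in list(calls):
        if state[service] == UNVISITED:
            found = visit(service)
            if found:
                return found
    return None


# Hypothetical services: each key calls the services in its list
services = {
    "orders": ["payments"],
    "payments": ["inventory"],
    "inventory": ["orders"],
    "notifications": [],
}
print(find_cycle(services))
# prints ['orders', 'payments', 'inventory', 'orders']
```

Run against a map of declared service dependencies, a check like this can flag a cycle before it reaches production. It only sees direct calls that are listed in the map, so event-based interactions would need to be modelled separately.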

What is cyber insurance? Everything you need to know about what it covers and how it works

Different policy providers might offer coverage of different things, but

generally cyber insurance is likely to cover the immediate costs

associated with falling victim to a cyberattack. "Cyber insurance policies are

designed to cover the costs of security failures, including data recovery,

system forensics, as well as the costs of legal defence and making reparations

to customers," says Mark Bagley, VP at cybersecurity company AttackIQ.

Underwriting data recovery and system forensics, for example, would help cover

some of the cost of investigating and remediating a cyberattack by employing

forensic cybersecurity professionals to aid in finding out what happened – and

fixing the issue. This is the sort of standard procedure that follows in the

aftermath of a ransomware attack, one of the most damaging and disrupting

kinds of incident an organisation can face right now. It is also the case that

some cyber insurance companies cover the cost of actually giving in and

paying a ransom – even though that's something that law enforcement and the

information security industry don't recommend, as it just encourages cyber

criminals to commit more attacks.

Quote for the day:

"Leadership is not a position. It is a combination of something you are (character) and some things you do (competence)." -- Ken Melrose
