It wouldn't make sense for every office environment, of course; having such a gadget in a crowded cubicle farm would probably lead to more annoyances (not to mention mischievous co-worker interference) than anything. But if you have a relatively isolated space in which you work, be it your own executive suite (look at you!) or a more humble home office (like mine), you might be surprised at how handy a Google Home or Smart Display could be. Now, is there a fair amount of overlap between what a Google Home or Smart Display on your desk can do and what you could already do with your phone? You'd better believe it. But performing a task on a permanent, stationary device can often be easier and more effective than futzing around with your phone. Using a smart speaker also doesn't wear down your precious mobile battery, and the device's standalone nature makes it better suited for certain types of tasks.

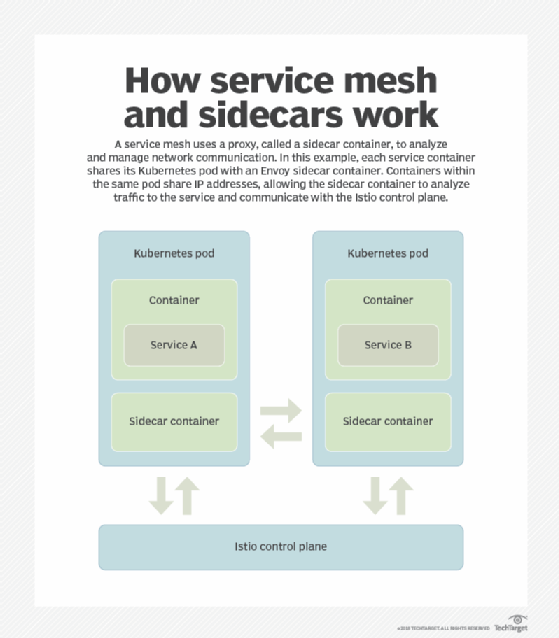

Service mesh architecture radicalizes container networking

To Thomas, true microservices are as independent as possible. Each service handles one individual method or domain function; uses its own separate data store; relies on asynchronous event-based communication with other microservices; and lets developers design, develop, test, deploy and replace this individual function without having to redeploy any other part of the application. "Plenty of mainstream companies are not necessarily willing to invest quite that much time and money into their application architecture," Thomas contended. "They're still doing things in a more coarse-grained manner, and they're not going to use a mesh, at least until the mesh becomes built into the platform as a service that they're using, or until we get brand-new development frameworks." Some early adopters of the service mesh architecture don't believe a slew of microservices is necessary to benefit from the technology. "It allows you to push traffic around in a centralized way that's consistent across many different environments and technologies, and I feel like that's useful at any scale," said Zack Angelo, director of platform engineering at BigCommerce, an e-commerce company based in Austin, Texas, that uses the Linkerd service mesh. "Even if you have 10 or 20 services, that's an immensely useful capability to have."

Redefining work in the digital age

To ensure a successful transition, experts say organizations must figure out the right intersection of humans and intelligent machines. Fifty-four percent of those surveyed by Accenture said human-machine collaboration is important to achieving strategic priorities, while 46 percent believe traditional job descriptions are now obsolete and 29 percent have already redesigned job roles extensively. “We’ve never seen change like this,” says Katherine Lavelle, managing director of Accenture’s Strategy, Talent & Organization practice in North America. “This is about generating new levels of capabilities and results for clients and customers augmented through smart automation and humans. Whoever figures out the collaboration between the two is poised to win the war.” Training and reskilling workers will be essential to creating an enhanced employee experience that redefines the nature of work. “In some ways, we’ll go back to the basics on things we put a value on prior to automation,” Lavelle says.

7 steps to better code reviews

Code review has been demonstrated to significantly speed up the development process. But what are the responsibilities of the code reviewer? When running a code review, how do you ensure constructive feedback? How do you solicit input that will expedite and improve the project? Here are a few tips for running a solid code review. ... Try to get to the initial pass as soon as possible after you receive the request. You don’t have to go into depth just yet. Just do a quick overview and have your team write down their first impressions and thoughts. Use a ticketing system. Most software development platforms facilitate comments and discussion on different aspects of the code. Every proposed change to the code is a new ticket. As soon as any team member sees a change that needs to be made, they create a ticket for it. The ticket should describe what the change is, where it would go, and why it’s necessary. Then the others on your team can review the ticket and add their own comments.
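The ticket described above (what the change is, where it goes, why it's necessary, plus teammates' comments) could be modeled roughly like this. It is only a minimal sketch: the `ReviewTicket` class and its field and method names are hypothetical illustrations, not part of any particular ticketing platform.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class ReviewTicket:
    """One proposed change raised during a code review (hypothetical model)."""
    what: str   # what the change is
    where: str  # where in the code it would go
    why: str    # why it is necessary
    comments: List[str] = field(default_factory=list)

    def add_comment(self, author: str, text: str) -> None:
        # Teammates review the ticket and append their own remarks.
        self.comments.append(f"{author}: {text}")

    def is_complete(self) -> bool:
        # A ticket is usable only if all three descriptions are filled in.
        return all(part.strip() for part in (self.what, self.where, self.why))


ticket = ReviewTicket(
    what="Replace manual retry loop with a shared helper",
    where="billing/client.py",  # illustrative path only
    why="The same retry logic is duplicated in three modules",
)
ticket.add_comment("reviewer", "Agreed, but keep the existing timeout.")
```

Keeping the three descriptions as required fields makes it harder to file a ticket that says what to change without saying why.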

How advanced OCR found new life in big data systems

One reason that OCR was rarely used until recently is that it wasn’t especially reliable. Even when, in the early 2000s, the programs reached about 95% accuracy, businesses ran the risk that software would produce documents containing major mistakes – and particularly with numerals, such errors can be labor intensive to identify and correct. Analysts would do just as well entering the data by hand. However, now that the scan accuracy is significantly improved, the resultant data is more valuable, and analysts need only cross-reference the scans with original documents if something in the content doesn’t make sense. NLP has also helped increase the accuracy of OCR scans. For example, older OCR programs might read chart lines as the letter ‘L’ or number ‘1.’ NLP is context dependent, however, so it can identify if something is a chart or graph, whether it’s reading a bill or an invoice, and other types of nuanced content.

Climb the five steps of a continuous delivery maturity model

A maturity model describes milestones on the path of improvement for a particular type of process. In the IT world, the best known of these is the capability maturity model (CMM), a five-level evolutionary path of increasingly organized and systematically more mature software development processes. The CMM focuses on code development, but in the era of virtual infrastructure, agile automated processes and rapid delivery cycles, code release testing and delivery are equally important. Continuous delivery (CD), sometimes paired with continuous integration to make CI/CD, is an automated process for the rapid deployment of new software versions. A complicated process, CD includes several steps that span multiple departments. CI/CD and DevOps can prove daunting to organizations that view modernization as a dichotomy: Either you're DevOps or you're legacy. But continuous delivery is an efficiency improvement that can evolve in stages.

Analysis: Anthem Data Breach Settlement

"Credit monitoring itself as an award is frankly not that effective, at least in my personal view," DeGraw, who was not involved in the Anthem case, says in an interview with Information Security Media Group. "A persisting problem is that post-breach, [bad actors] can still potentially use the stolen records, including medical information, to cause harm." A more affective approach for most consumers, DeGraw says, is to put a credit freeze on their accounts "which is a bit more cumbersome at times ... but that's a more effective remedy." For breach victims, "there is no easy way to clean up your life," the attorney says. "You have a fair number of out-of-pocket costs, including taking a day off [from work] to file a report ... and maybe hire people to clean up your accounts and other things that have been opened in your name. It can be a hassle and it's time-consuming and it doesn't go away soon because we can't change our Social Security numbers or healthcare numbers relatively easily."

Testing Programmable Infrastructure - a Year On

Worse than the technical challenges, we faced cultural challenges too. Sysadmins and testers aren't used to working with one another! The project made it very clear to me that programmable infrastructure is becoming widespread. There are very specific domain issues that make testing it tricky. But it felt like nobody had the answers. Infrastructure resources are critical to successful software. If there's a problem with your database or your load balancer, it now could be due to committed code. That code is production code, so we should test it! Over a year has passed since I first presented that talk. Even longer since the project which inspired me to present it. I have been on a number of other projects since, and my thinking has changed too. ... When testing anything new, it’s important to revisit fundamentals. When I first gave my talk, I focused a lot on how we tested it, but not a lot on why we tested it. I cannot tell you what your cloud infrastructure landscape looks like. The topic is very broad, and fast changing.

Microsoft Office 365 Turns Data Storage Upside Down

In addition to syncing and storing your own private files, the OneDrive for Business client can also sync corporate data stored elsewhere in SharePoint. So this client provides access to files in both locations. Best of all, you can choose what you “see” in your OneDrive for Business client and what you are going to sync locally to your computer. To muddy the waters just a bit more, Microsoft recently announced that OneDrive for Business will soon start to offer the option to automatically sync your local profile default data locations such as the documents and pictures folders. And it will also have one-button ransomware protection for your files. So now we’re storing personal data, bits of the user profile, and we’re syncing locally some or all of the data in SharePoint. But we’re still upside down from how business has historically stored data because our corporate space is smaller than the personal space.

How security binding choices impact everything in global file search

Few non-software architects understand the outsized impact that security binding has on global file search performance, scalability, multi-tenancy, hardware, supporting infrastructure, capital expenditures, operating expenditures and the total cost of ownership. The wrong security binding choice can add hundreds of thousands to millions of dollars to the TCO. From additional expensive hardware, supporting infrastructure, maintenance, software licensing, training, power, cooling, shelf space, rack space, cables, conduit, transceivers and allocated overhead, the costs can be shockingly high. When we examine the pros, cons, tradeoffs, consequences, and workarounds, for each of three different security binding choices – late binding, early binding, and real-time binding – we find that real-time binding provides the performance of early binding with the accuracy of late binding.
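To make the speed-versus-accuracy tradeoff concrete, here is a toy sketch of the two older approaches as they are commonly understood in enterprise search: early binding copies access lists into the index when a file is indexed, while late binding checks permissions against the source at query time. The excerpt does not define the terms or describe how real-time binding works, so that is left out; every class and name below is hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Document:
    doc_id: str
    text: str
    allowed_users: set  # live permissions in the (hypothetical) source system


class EarlyBoundIndex:
    """Copies each document's access list into the index at indexing time."""

    def __init__(self, docs):
        self._entries = [(d.doc_id, d.text, frozenset(d.allowed_users)) for d in docs]

    def search(self, user, term):
        # Fast: a single pass over the index, no call back to the source.
        # Risk: the stored access list may be stale.
        return [doc_id for doc_id, text, acl in self._entries
                if term in text and user in acl]


class LateBoundIndex:
    """Indexes content only; checks live permissions for every hit."""

    def __init__(self, docs):
        self._source = {d.doc_id: d for d in docs}  # stands in for the source system
        self._entries = [(d.doc_id, d.text) for d in docs]

    def search(self, user, term):
        hits = [doc_id for doc_id, text in self._entries if term in text]
        # Accurate: permissions are read at query time, at the cost of one
        # extra lookup per hit.
        return [doc_id for doc_id in hits
                if user in self._source[doc_id].allowed_users]


docs = [Document("a", "quarterly report", {"alice", "bob"})]
early, late = EarlyBoundIndex(docs), LateBoundIndex(docs)
docs[0].allowed_users.discard("bob")  # access revoked after indexing
stale_hits = early.search("bob", "report")
fresh_hits = late.search("bob", "report")
```

After the revocation, the early-bound index still returns the document to the revoked user until it is re-indexed, while the late-bound index filters it out. That is the accuracy gap the excerpt alludes to.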

Quote for the day:

"Good leaders must first become good servants." -- Robert Greenleaf

![mobile apps crowdsourcing via social media network [CW cover - October 2015]](https://images.techhive.com/images/article/2015/10/mobile_apps_crowdsourcing_via_social_media_network_thinkstock-100618594-large.jpg)