Data Lakes Evolve: Divisive Architecture Fuels New Era of AI Analytics

“Data lakes led to the spectacular failure of big data. You couldn’t find anything when they first came out,” Sanjeev Mohan, principal at the SanjMo tech consultancy, told Data Center Knowledge. There was no governance or security, he said. What was needed were guardrails, Mohan explained. That meant safeguarding data from unauthorized access and respecting governance standards such as GDPR. It meant applying metadata techniques to identify data. “The main need is security. That calls for fine-grained access control – not just throwing files into a data lake,” he said, adding that better data lake approaches can now address this issue. Now, different personas in an organization are reflected in different permissions settings. ... This type of control was not standard with early data lakes, which were primarily “append-only” systems that were difficult to update. New table formats changed this. Table formats like Delta Lake, Iceberg, and Hudi have emerged in recent years, introducing significant improvements in data update support. For his part, Sanjeev Mohan said standardization and wide availability of tools like Iceberg give end-users more leverage when selecting systems.
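
As a rough illustration of the persona-based, fine-grained access control Mohan describes, the sketch below filters the columns of each record according to the reader's persona. The persona names, column names, and the `read_rows` helper are all hypothetical and chosen only for illustration; real data lake engines typically enforce such policies in their catalog or query layer rather than in application code.

```python
# Hypothetical personas and the columns each one may read (illustrative only).
COLUMN_GRANTS = {
    "analyst": {"order_id", "country", "amount"},
    "support": {"order_id", "email"},
    "auditor": {"order_id", "country", "amount", "email"},
}


def read_rows(rows, persona):
    """Return copies of the rows containing only columns granted to the persona."""
    allowed = COLUMN_GRANTS.get(persona)
    if allowed is None:
        # Deny by default: an unknown persona gets no access at all.
        raise PermissionError(f"unknown persona: {persona}")
    return [{k: v for k, v in row.items() if k in allowed} for row in rows]


# Made-up sample records standing in for a table in the lake.
rows = [
    {"order_id": 1, "country": "DE", "amount": 42.0, "email": "a@example.com"},
    {"order_id": 2, "country": "US", "amount": 13.5, "email": "b@example.com"},
]

analyst_view = read_rows(rows, "analyst")
support_view = read_rows(rows, "support")
```

Here the analyst sees amounts but never email addresses, the support persona sees the reverse, and an unrecognized persona is refused outright, which is the opposite of "just throwing files into a data lake."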

Data at the Heart of Digital Transformation: IATA's Story

It's always good to know what the business goals are, from a strategic perspective, which informs the data that is needed to enable digital transformation. Data is at the heart of digital transformation. Business strategy comes first and then data strategy, followed by technology strategy. At IATA, we formed the Data Steering Group and identified critical datasets across the organization. We then set up a data catalog and established a governance structure. This was followed by the launch of the Data Governance Committee and the role of a chief data officer. We're going to be implementing an automated data catalog and some automation tools around data quality. Data governance has allowed us to break down data silos. It has also enabled us to establish IATA's industry data strategy. We treat data as an asset, and that data is not owned by any particular division but looked at holistically at the organizational level. And that has allowed us opportunities to do some exciting things in the AI and analytics space and even in the way we deal with our third-party data suppliers and member airlines.

New Android Warning As Hackers Install Backdoor On 1.3 Million TV Boxes

“This is a clear example of how IoT devices can be exploited by malicious actors,” Ray Kelly, fellow at the Synopsys Software Integrity Group, said. “The ability of the malware to download arbitrary apps opens the door to a range of potential threats.” These range from a TV box botnet for use in distributed denial of service attacks to the theft of account credentials and personal information. Responsibility for protecting users lies with the manufacturers, Kelly said; they must “ensure their products are thoroughly tested for security vulnerabilities and receive regular software updates.” “These off-brand devices discovered to be infected were not Play Protect certified Android devices,” a Google spokesperson said. “If a device isn't Play Protect certified, Google doesn’t have a record of security and compatibility test results.” Whereas Play Protect certified devices have undergone testing to ensure both quality and user safety, other boxes may not have. “To help you confirm whether or not a device is built with Android TV OS and Play Protect certified, our Android TV website provides the most up-to-date list of partners,” the spokesperson said.

Engineers Day: Top 5 AI-powered roles every engineering graduate should consider

Generative AI engineer: They play a pivotal role in analysing vast datasets to extract actionable insights and drive data-informed decision-making processes. This role demands a comprehensive understanding of statistical analysis, machine learning techniques, and programming languages such as Python and R. ... AI research scientist: They are at the forefront of advancing AI technologies through groundbreaking research and innovation. With a robust mathematical background, professionals in this role delve into programming languages such as Python and C++, harnessing the power of deep learning, natural language processing, and computer vision to develop cutting-edge solutions. ... Machine Learning engineer: Machine learning engineers are tasked with developing cutting-edge machine learning models and algorithms to address complex problems across various industries. To excel in this role, professionals must develop a strong proficiency in programming languages such as Python, along with a deep understanding of machine learning frameworks like TensorFlow and PyTorch. Expertise in data preprocessing techniques and algorithm development is also quite crucial here.

Kubernetes attacks are growing: Why real-time threat detection is the answer for enterprises

Attackers are ruthless in pursuing the weakest threat surface of an attack vector, and with Kubernetes containers, runtime is becoming a favorite target. That’s because containers are live and processing workloads during the runtime phase, making it possible to exploit misconfigurations, privilege escalations or unpatched vulnerabilities. This phase is particularly attractive for crypto-mining operations where attackers hijack computing resources to mine cryptocurrency. “One of our customers saw 42 attempts to initiate crypto-mining in their Kubernetes environment. Our system identified and blocked all of them instantly,” Gil told VentureBeat. Additionally, large-scale attacks, such as identity theft and data breaches, often begin once attackers gain unauthorized access during runtime, where sensitive information is in use and thus more exposed. Based on the threats and attack attempts CAST AI saw in the wild and across its customer base, the company launched its Kubernetes Security Posture Management (KSPM) solution this week. What is noteworthy about its approach is how it enables DevOps operations to detect and automatically remediate security threats in real time.

Begun, the open source AI wars have

Open source leader julia ferraioli agrees: "The Open Source AI Definition in its current draft dilutes the very definition of what it means to be open source. I am absolutely astounded that more proponents of open source do not see this very real, looming risk." AWS principal open source technical strategist Tom Callaway said before the latest draft appeared: "It is my strong belief (and the belief of many, many others in open source) that the current Open Source AI Definition does not accurately ensure that AI systems preserve the unrestricted rights of users to run, copy, distribute, study, change, and improve them." ... Afterwards, in a more sorrowful than angry statement, Callaway wrote: "I am deeply disappointed in the OSI's decision to choose a flawed definition. I had hoped they would be capable of being aspirational. Instead, we get the same excuses and the same compromises wrapped in a facade of an open process." Chris Short, an AWS senior developer advocate, Open Source Strategy & Marketing, agreed. He responded to Callaway that he: "100 percent believe in my soul that adopting this definition is not in the best interests of not only OSI but open source at large will get completely diluted."

What North Korea’s infiltration into American IT says about hiring

Agents working for the North Korean government use stolen identities of US citizens, create convincing resumes with generative AI (genAI) tools, and make AI-generated photos for their online profiles. Using VPNs and proxy servers to mask their actual locations (and maintaining laptop farms run by US-based intermediaries to create the illusion of domestic IP addresses), the perpetrators use either Western-based employees for online video interviews or, less successfully, real-time deepfake videoconferencing tools. And they even offer up mailing addresses for receiving paychecks. ... Among her assigned tasks, Chapman maintained a PC farm used to simulate a US location for all the “workers.” She also helped launder money paid as salaries. The group even tried to get contractor positions at US Immigration and Customs Enforcement and the Federal Protective Service. (They failed because of those agencies’ fingerprinting requirements.) They did manage to land a job at the General Services Administration, but the “employee” was fired after the first meeting. A Clearwater, FL IT security company called KnowBe4 hired a man named “Kyle” in July. But it turns out that the picture he posted on his LinkedIn account was a stock photo altered with AI.

Contesting AI Safety

The dangers posed by these machines arise from the idea that they “transcend some of the limitations of their designers.” Even if rampant automation and unpredictable machine behavior may destroy us, the same technology promises unimaginable benefits in the far future. Ahmed et al. describe this epistemic culture of AI safety that drives much of today’s research and policymaking, focused primarily on the technical problem of aligning AI. This culture traces back to the cybernetics and transhumanist movements. In this community, AI safety is understood in terms of existential risks: unlikely but highly impactful events, such as human extinction. The inherent conflict between a promised utopia and cataclysmic ruin characterizes this predominant vision for AI safety. Both the AI Bill of Rights and SB 1047 assert claims about what constitutes a safe AI model but fundamentally disagree on the definition of safety. A model deemed safe under SB 1047 might not satisfy the Safe and Effective principle of the White House AI Blueprint; a model that follows the AI Blueprint could cause critical harm. What does it truly mean for AI to be safe?

Why Companies Should Embrace Ethical Hackers

Security researchers (or hackers, take your pick) are generally good people motivated by curiosity, not malicious intent. Making guesses, taking chances, learning new things, and trying and failing and trying again is fun. The love of the game and ethical principles are two separate things, but many researchers have both in spades. Unfortunately, the government has historically sided with corporations. Scared by the plot of the Matthew Broderick movie WarGames, Ronald Reagan initiated legislation that resulted in the Computer Fraud and Abuse Act of 1986 (CFAA). Good-faith researchers have been haunted ever since. Then there is the Digital Millennium Copyright Act (DMCA) of 1998, which made it explicitly illegal to “circumvent a technological measure that effectively controls access to a work protected under [copyright law],” something necessary to study many products. A narrow harbor for those engaging in encryption research was carved out in the DMCA, but otherwise, the law put researchers in further danger of legal action. All this naturally had a chilling effect as researchers grew tired of being abused for doing the right thing. Many researchers stopped bothering with private disclosures to companies with vulnerable products and took their findings straight to the public.

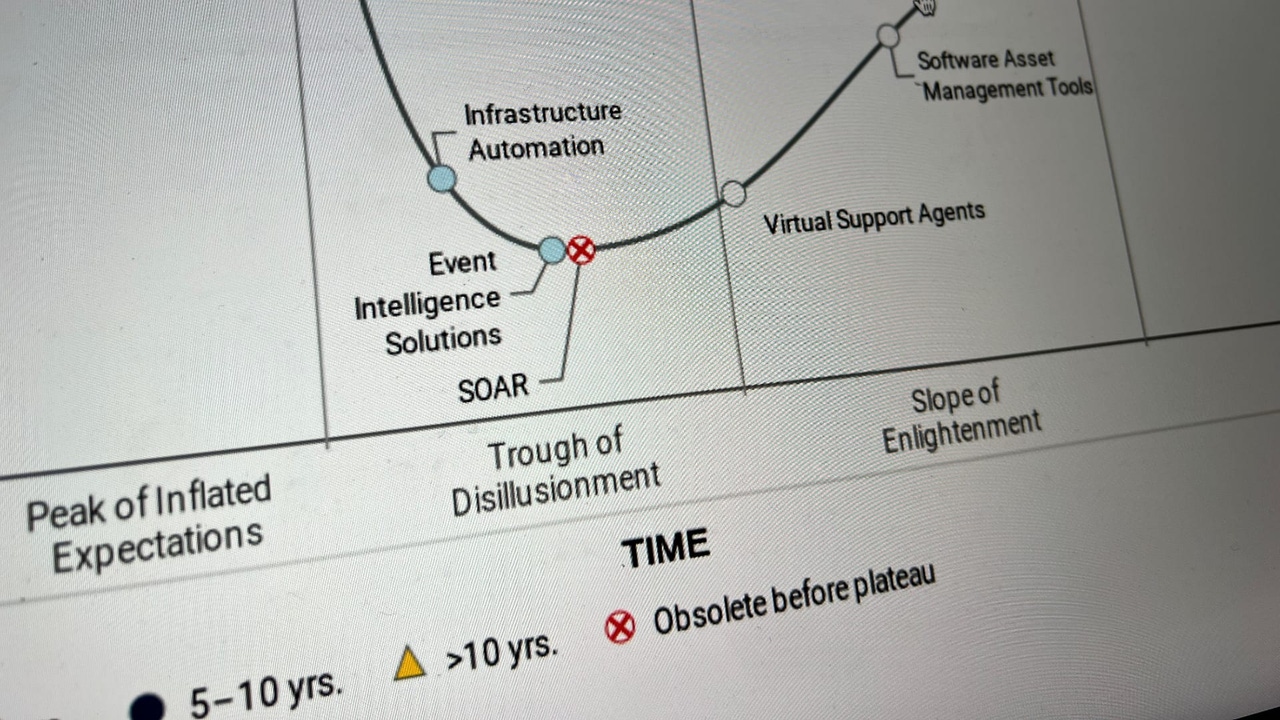

Why AI Isn't Just Hype - But A Pragmatic Approach Is Required

It is far better to take a pragmatic view where you open yourself up to the possibilities but proceed with both caution and some help. That must start with working through the buzzwords and trying to understand what people mean, at least at a top level, by an LLM or a vector search or maybe even a Naive Bayes algorithm. But then, it is also important to bring in a trusted partner to help you move to the next stage to build an amazing new digital product, or to undergo a digital transformation with an existing digital product. Whether you’re in start-up mode, you are already a scale-up with a new idea, or you’re a corporate innovator looking to diversify with a new product – whatever the case, you don’t want to waste time learning on the job, and instead want to work with a small, focused team who can deliver exceptional results at the speed of modern digital business. ... Whatever happens or doesn’t happen to GenAI, as an enterprise CIO you are still going to want to be looking for tech that can learn and adapt from circumstance and so help you do the same. At the end of the day, hype cycle or not, AI is really the one tool in the toolbox that can continuously work with you to analyse data in the wild and in non-trivial amounts.

Quote for the day:

"Your attitude is either the lock on or key to your door of success." -- Denis Waitley
