CISO accountability in the era of software supply chain security

A CISO now needs to start acting like a CFO on their very first day in the

role. CISOs no longer have the freedom to prioritize business interests and

subordinate cybersecurity, because they will be found liable for

misrepresenting security practices in the event of a cyber-incident. CFOs

can’t let some fraud, financial crime, absence of key stated controls, or

insider dealing go while they ease into the role, and CISOs will need to start

acting the same way regarding their company’s security program. While some may

find this new era of CISO accountability a threat, they need to look at the

massive opportunity as well — and the opportunity is quite big! Yes, CISOs

will have more work to do with this new level of scrutiny and accountability.

However, this new era will allow them to take a more senior and influential

role in the organization, receive greater allocations of resources to maintain

an appropriate level of perceived risk, prioritize critical enterprise

security needs, and be fully transparent on what security issues their company

is dealing with. And because CISOs and their respective companies will be more

transparent and accountable, this should lead to greater trust in them from

customers, board members, investors, employees, regulators, and the

communities in which they operate.

From Chaos to Control: Nurturing a Culture of Data Governance

Data architecture encompasses the design, structure, and organization of data

assets. It involves defining the blueprint for how data is collected, stored,

processed, accessed, and managed throughout its lifecycle. Data architecture

sets the foundation for data governance by establishing standards, principles,

and guidelines for data management. It encompasses aspects such as data

models, data flow diagrams, database design, and the integration of data

across different systems. Effective data architecture is crucial for ensuring

data consistency, integrity, and accessibility, aligning data assets with the

organization's goals and objectives. Data modeling is a specific aspect of

data architecture that involves creating visual representations (models) of

the data and its relationships within an organization. This process helps in

understanding and documenting the structure of data entities, attributes, and

their interactions. Data modeling plays a vital role in data governance by

providing a standardized way to communicate and document data requirements,

ensuring a collective understanding among stakeholders.
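
As a rough illustration of what documenting entities, attributes, and relationships can look like in practice, here is a minimal Python sketch. The `Entity` and `Attribute` classes, the `undefined_references` helper, and the Customer/Order example are all hypothetical names chosen for illustration; they do not come from the article or from any particular modeling tool.

```python
from dataclasses import dataclass, field


@dataclass
class Attribute:
    """One documented attribute of an entity."""
    name: str
    data_type: str
    required: bool = True


@dataclass
class Entity:
    """A data entity with its attributes and references to other entities."""
    name: str
    attributes: list[Attribute] = field(default_factory=list)
    # Maps a relationship label to the name of the entity it points to.
    relationships: dict[str, str] = field(default_factory=dict)


def undefined_references(model: list[Entity]) -> list[str]:
    """Return relationships that point to entities missing from the model."""
    known = {entity.name for entity in model}
    return [
        f"{entity.name}.{label} -> {target}"
        for entity in model
        for label, target in entity.relationships.items()
        if target not in known
    ]


# Hypothetical two-entity model; every name here is illustrative only.
customer = Entity(
    "Customer",
    [Attribute("customer_id", "int"), Attribute("email", "str")],
)
order = Entity(
    "Order",
    [Attribute("order_id", "int"), Attribute("total", "decimal")],
    {"placed_by": "Customer", "shipped_to": "Address"},
)

# "Address" is referenced but never defined, so it is reported.
problems = undefined_references([customer, order])
print(problems)
```

A check like this is one simple way a shared model can surface gaps in collective understanding: a relationship that points at an entity nobody has defined is flagged before it reaches a database design.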

Cloud migration is still a pain

The cloud providers sold the cloud as something that needed to be leveraged

ASAP, so massive workloads and data sets were lifted and shifted to this new

“miracle platform.” Three things occurred: First, it was more expensive than

we thought. I use the unproven number of the cloud costing 2.5 times what

enterprises believed it would cost to operate workloads and data sets in the

cloud. This all blew up in 2022, when we also had the accommodation of

workloads moved during the pandemic, many with unimproved applications and

data sets. Second, poorly designed, developed, and deployed applications moved

from enterprise data centers to the cloud, where applications still need to be

better designed, developed, and deployed. We’re paying more for them to run in

the cloud since we’re paying for the existing inefficiencies. ... Finally,

enterprises aren’t learning from their mistakes. I’ve often been taken aback

by the amount of lousy cloud reality that most enterprises accept. Although

some have moved back to enterprise data centers, some are indeed funding

application and data optimization. We’re still getting a C- in returning value

to the business, our shared objective.

The Growing Demand for Infrastructure Resiliency—How Digital Transformation Can Help

According to Bademosi, “Integrating digital technologies is not just a trend,

it is the next frontier in creating sustainable, resilient, and advanced

infrastructure systems. As we look to the future, it is evident that digital

technology will be at the heart of every innovation.” The benefits of

harnessing new technologies and transforming infrastructure seem limitless.

But government agencies and industry partners may not know where to start.

According to Bademosi, it begins by gauging the current state of critical

infrastructure systems and what is needed for the future. What are the

strengths, weaknesses, and potential opportunities available for

infrastructure? Next, it’s important to foster collaborations across

government agencies, industry leaders, and the communities that will be

impacted by the proposed project. Industry partners and government agencies

then need to empower their workforce with the training they need to deploy

these technologies on future projects. Once training is complete, they can

begin to experiment with these new technologies on smaller pilot projects,

using them as workshops to test strategies.

Falling into the Star Gate of Hidden Microservices Costs

We’re not going to argue that monoliths are perfect. But an intentionally

designed monolith has a comprehensible solution to each flaw, and unlike a

microservices architecture, each one you resolve creates a feedback loop of

improvement with internal scope. To improve your monolith in some dimension —

performance scaling, the ease of onboarding for new developers, the sheer

quality of your code — you need to invest in the application itself, not

abstract the problem to a third party or accept a higher cloud computing bill,

hoping that scale will solve your problems. Of their experience, the Amazon

Prime Video team wrote, “Moving our service to a monolith reduced our

infrastructure cost by over 90%. It also increased our scaling capabilities. …

The changes we’ve made allow Prime Video to monitor all streams viewed by our

customers and not just the ones with the highest number of viewers. This

approach results in even higher quality and an even better customer

experience.” Since the Amazon Prime Video engineering team published their

blog post, many have argued about whether their move is a major win for

monoliths, the same-old microservices architecture with new branding or a

semantic misinterpretation of what a “service” is.

The importance of IoT visibility in OT environments

The surge of sensory data volume and network traffic generated by IIoT devices

can overwhelm existing network infrastructure. Outdated hardware and bandwidth

constraints can severely cripple the efficient operation of these

interconnected systems. Scaling up and modernizing infrastructure becomes

imperative in paving the way for a flourishing IIoT ecosystem. ... In the

intricate game of cyber defense, network visibility reigns supreme. The map

and compass guide defenders through the ever-shifting digital landscape,

illuminating the hidden pathways where threats dwell. Organizations navigate

murky waters without it, blind to threat actors weaving through their systems.

Network visibility emerges as the antidote, empowering defenders with a

four-pronged shield: early threat detection, where anomalies transform into

bright beacons revealing potential attacks before they escalate; swift

incident response, allowing isolation and mitigation of the

affected area like quarantining a digital contagion; proactive threat hunting,

where defenders actively scour network data for lurking adversaries and hidden

vulnerabilities, pre-empting attacks before they materialize.

Embrace Change: Navigating Digital Transformation for Sustainable Success

Staying within the confines of one’s comfort zone for an extended period is

ill-advised, especially in the face of disruptive innovations. The world has

little patience for those who cling to past glories and turn a blind eye to

emerging technologies. Historical examples, such as the decline of the Roman

Empire, serve as stark reminders of the perils of stagnation and resistance to

change. In a world that is in a constant state of flux, the choice to adapt or

face extinction rests squarely on the shoulders of individuals and

organizations. The significance of speed as a competitive edge cannot be

overstated. Just as the velocity of an aircraft enables it to soar through the

skies and the dynamic force of a fast-moving car propels it forward, adapting

to the rapid pace of change is imperative for survival in the business realm.

Embracing change willingly is not merely a suggestion; it is a strategic

imperative. In a world characterized by constant evolution, the notion of

being “too big to fail” is a myth. The decision to adapt is not dictated by

external forces; it is entirely within the control of individuals and

organizations.

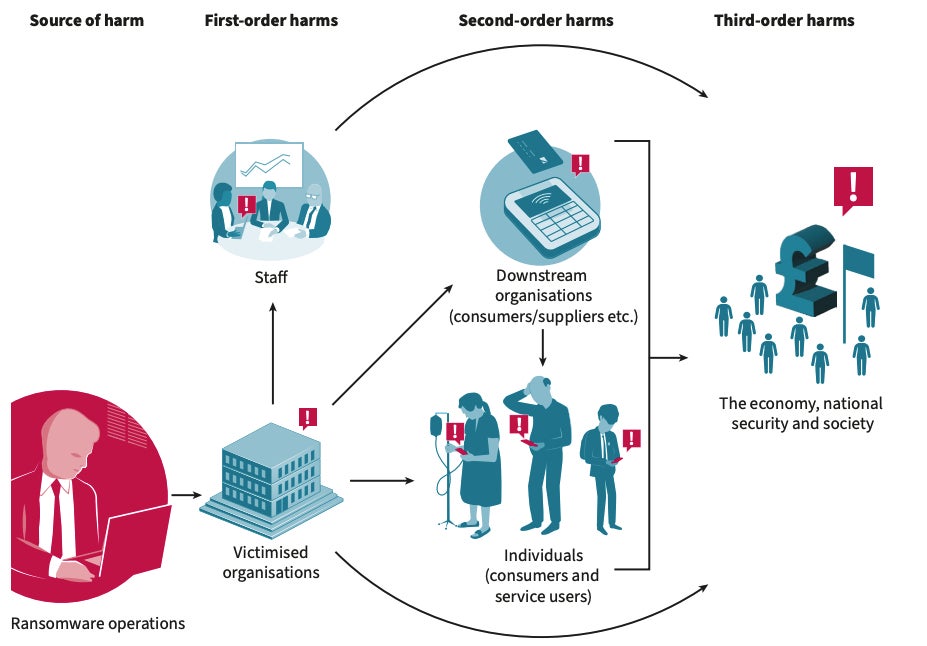

“All About the Basics”: Cyber Hygiene in the Digital Age

In this world, the digital equivalent of leaving your front door unlocked and

the windows wide open is a reality. The result? Well, it’s not pretty. First,

there’s the risk of data breaches. These aren’t just inconveniences; they’re

full-blown catastrophes. When we’re lax with updates and passwords, we’re

essentially rolling out the red carpet for cybercriminals. They waltz in,

pilfer sensitive data, and leave chaos in their wake. The fallout? Compromised

personal information, financial loss, and let’s not forget the ever-lasting

damage to our reputation. It’s the kind of nightmare that keeps grumpy CISOs

up at night. Then there are the phishing attacks. Without proper awareness and

training, our well-meaning but sometimes naïve users might unwittingly invite

trouble right into our digital living room. It starts with an innocent click

on what seems like a legitimate email. And before you know it, malware has

spread through your systems like wildfire. The result? System downtimes,

productivity loss, and a frantic race against time to contain the breach. And

let’s not even get started on unsecured devices; it’s like leaving your secret

plans in a cafe, waiting for the first curious bystander to pick them

up.

Navigating the New Era with Generative AI Literacy

As technology has evolved, that focus on data literacy has quickly

transitioned into a focus on generative AI literacy -- a new breed of data

literacy built on the core tenets of data literacy: data collection and

curation, data visualization, and interpretation. With the advent of

generative AI tools from industry leaders such as OpenAI, Google, Microsoft,

and Anthropic, companies need their employees to know how to leverage these

tools to create business value. Ultimately, data literacy and generative AI

literacy have the same goals -- to drive effective business decision-making

and to create organizational value. ... Generative AI’s power is due in part

to its ability to accept such a wide array of inputs and prompts, but this

also requires that employees learn to expand their thinking. As repetitive

tasks are automated away, employees will be free to think more innovatively,

which is not always intuitive for them. Educational institutions have focused

on teaching students to learn facts for many years but are now being required

to teach students how to think in terms of problem sets, alternative

approaches, and innovative solution discovery.

Risk Management is Never Having to Say, ‘I Am sorry’

Enterprise architecture is largely an exercise in risk management. Unless architecture organizations are willing to take on risk, they are unlikely to be perceived as influential partners in solving problems. Rory established his team as a solver of gnarly problems, not complaining bystanders, by accepting accountability to deliver the mobile commerce platform. One of the biggest categories of risk that architecture leaders must manage is relational risk, i.e. navigating the executive sociology. It wasn’t easy, but between Rory and Loretta the architecture department was able to achieve a key accomplishment, increasing the value of the company’s search and mobile toolkit assets, by establishing empathy with powerful business partners and creating a win / win solution to an urgent business problem. Architects can use what I call “organizational jujitsu” to gain support from agile teams by positioning high quality architecture assets as accelerators of agility. That is, if the architecture department can make the use of existing assets and contracts the fastest route to working tested product frequently delivered to customers, it can leverage the

Quote for the day:

"Your greatest area of leadership often comes out of your greatest area of

pain and weakness." -- Wayde Goodall