Is Strategic Thinking Dead? 5 Ways To Think Better For An Uncertain Future

Strategic thinking is distinguished from tactical thinking because it takes a

longer view rather than reacting to events as they happen. It pushes you to be

proactive in your actions, rather than reactive. And even in addressing

the immediate, strategic thinking can actually increase your

effectiveness—because your advanced planning will have given you the

opportunity to explore potential situations, assess responses and judge

outcomes—and these can prepare you for how you react when you have less

runway. ... One of the hallmarks of a strategic thinker is clarity of purpose.

Be sure you’re clear about where you want to go—as an individual, a team or a

business. Know your true north because it will help you choose wisely among

multiple options. The language you choose to describe where you want to be (or

how you understand a challenge) will constrain or create possibilities, so

also be careful about how you describe your intentions. If your purpose is to

unleash human potential for students, that will likely take you farther than a

goal to simply provide great classroom experiences.

Why Backing up SaaS Is Necessary

Looking at the possibilities to protect their data on those SaaS-platforms,

organisations started to quickly realise that their SaaS solutions were not as

protected as their other applications run in their own datacentre or their

private cloud. Companies that did know that fact had to put up with it as the

product forced them to use it as it was. Users had to learn the hard way that

most SaaS solutions have a shared responsibility model where the customer is

responsible for its own data. ... Even more critically, it’s important

to ensure backups are stored in an independent cloud dedicated to data

protection and not dependent on one of the large hyperscalers. A third-party

cloud gives total control over backed up data and can easily ensure three to

four copies are always made and reside in multiple locations. By retaining

SaaS data in an independent backup-focused cloud, customers can also avoid the

egress charges that come part and parcel with the public cloud. These extra

charges often result in surprise bills after data restores and make it

difficult to budget.
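
The "three to four copies in multiple locations" rule can be expressed as a simple check. The sketch below is illustrative only: the function name, the record layout, and the default thresholds (three copies, two locations) are assumptions, not any vendor's API.

```python
def meets_copy_policy(copies, min_copies=3, min_locations=2):
    """Return True if there are enough backup copies spread over enough locations.

    `copies` is a list of dicts with a "location" key (hypothetical layout).
    """
    locations = {copy["location"] for copy in copies}
    return len(copies) >= min_copies and len(locations) >= min_locations
```

A nightly job could run a check like this against the backup catalogue and alert when a SaaS dataset falls below the policy.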

7 steps to take before developing digital twins

Leaders in any emerging technology area look for stories to inspire adoption.

Some should be inspirational and help illustrate the art of the possible,

while others must be pragmatic and demonstrate business outcomes to entice

supporters. If your business’s direct competitors have successfully deployed

digital twins, highlighting their use cases often creates a sense of urgency.

... Harry Powell, head of industry solutions at TigerGraph, says, “When

creating a digital twin of a moderately sized organization, you will need

millions of data points and relationships. To query that data, it will require

traversing or hopping across dozens of links to understand the relationships

between thousands of objects.” Many data management platforms support

real-time analytics and large-scale machine learning models. But digital twins

used to simulate the behavior across thousands or more entities, such as

manufacturing components or smart buildings, will need a data model that

enables querying on entities and their relationships.
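
To make "hopping across links" concrete, here is a minimal sketch of a multi-hop relationship query using breadth-first search over an adjacency list. The toy `twin` graph and the function name are hypothetical; a real digital twin would likely hold this data in a graph database rather than an in-memory dict.

```python
from collections import deque

def entities_within_hops(graph, start, max_hops):
    """Return {entity: hop_count} for entities reachable from `start` in at most `max_hops` links."""
    distances = {start: 0}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        if distances[node] == max_hops:
            continue  # do not expand past the hop limit
        for neighbor in graph.get(node, ()):
            if neighbor not in distances:
                distances[neighbor] = distances[node] + 1
                queue.append(neighbor)
    return distances

# Hypothetical toy twin: a building, its floors, and their equipment.
twin = {
    "building": ["floor_1", "floor_2"],
    "floor_1": ["hvac_1"],
    "floor_2": ["hvac_2"],
    "hvac_1": ["sensor_a"],
    "hvac_2": [],
}
```

Calling `entities_within_hops(twin, "building", 2)` reaches the floors and HVAC units but stops before `sensor_a`, which is three hops away. The cost of such queries grows with the number of hops and links, which is why the data model matters at scale.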

Enterprise Architecture Management (EAM) in digital transformation

The point is to accompany these “things” throughout their entire life cycle on

the basis of a coherent technology vision, to recognise innovation potential,

to identify technology risks, to derive a technology strategy. And often EAM

already fails because of this corporate language, because the mostly abstract

orders, including abstract or economic business language, come directly from

the board and “have to be implemented”. There is usually no budgeting, because

“everyone has to participate”. This is the reality, and EAM is ground between

the board and development and operations. ... What could be a benefit of

EAM? You always have to think about this question in the context of your own

company! A TOGAF copy of the EAM goals or principles is not helpful, e.g. “The

primary goal of EAM is cost reduction”. That has never worked. Yes, it may be that

costs can be reduced. But EAM always brings more quality, and the cost savings

do not show up in the accounts; they go straight into new methods or procedures: a

better overview of the applications enables projects to start faster, and the time

gained and the effort saved are immediately put into other sensible work.

Online Safety Bill could pose risk to encryption technology used by Ukraine

The Online Safety Bill will give the regulator, Ofcom, powers to require

communications companies to install technology, known as client-side scanning

(CSS), to analyse the content of messages for child sexual abuse and terrorism

content before they are encrypted. The Home Office maintains that client-side

scanning, which uses software installed on a user’s phone or computer, is able

to maintain communications privacy while policing messages for criminal

content. But Hodgson told Computer Weekly that Element would have no choice

but to withdraw its encrypted mobile phone communications app from the UK if

the Online Safety Bill passed into law in its current form. Element supplies

encrypted communications to governments, including the UK, France, Germany,

Sweden and Ukraine. “There is no way on Earth that any of our customers would

ever consider that setup [client-side scanning], so obviously we wouldn’t put

that into the enterprise product,” he said. “But it would also mean that we

wouldn’t be able to supply a consumer secure messaging app in the UK. ...” he

added.

The biggest data security blind spot: Authorization

When authorization is overlooked, companies have little to no visibility into

who is accessing what. This makes it challenging to track access, identify

unusual behavior, or detect potential threats. It also leads to having

“overprivileged” users – a leading cause of data breaches according to many

industry reports. Authorization oversight is critical when employees leave a

company or change roles within the organization, as they might retain access

to sensitive data they no longer need. If access rights never expire,

unauthorized users have access to sensitive data. And with layoffs, the risk

of data theft increases. The lack of proper authorization also puts companies

at risk of non-compliance with privacy laws like the General Data Protection

Regulation (GDPR) and the California Consumer Privacy Act (CCPA), which can

result in significant penalties and reputational damage. Most organizations

store sensitive data in the cloud, and the majority do so without any kind of

encryption, making proper authorization all the more necessary.
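
The leaver and never-expiring-access problems described above lend themselves to a periodic review. The following is a minimal sketch, assuming a hypothetical `AccessGrant` record and a known set of active users; it is not tied to any particular identity or authorization product.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional

@dataclass
class AccessGrant:
    user: str
    resource: str
    expires: Optional[date]  # None means the grant never expires

def grants_to_review(grants, active_users, today):
    """Return (grant, reason) pairs for grants that deserve a closer look."""
    flagged = []
    for grant in grants:
        if grant.user not in active_users:
            flagged.append((grant, "user no longer active"))
        elif grant.expires is None:
            flagged.append((grant, "no expiry date"))
        elif grant.expires < today:
            flagged.append((grant, "expired"))
    return flagged
```

Running a review like this on a schedule gives some of the visibility the excerpt says is missing: who still holds access after leaving, and which grants were never given an end date.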

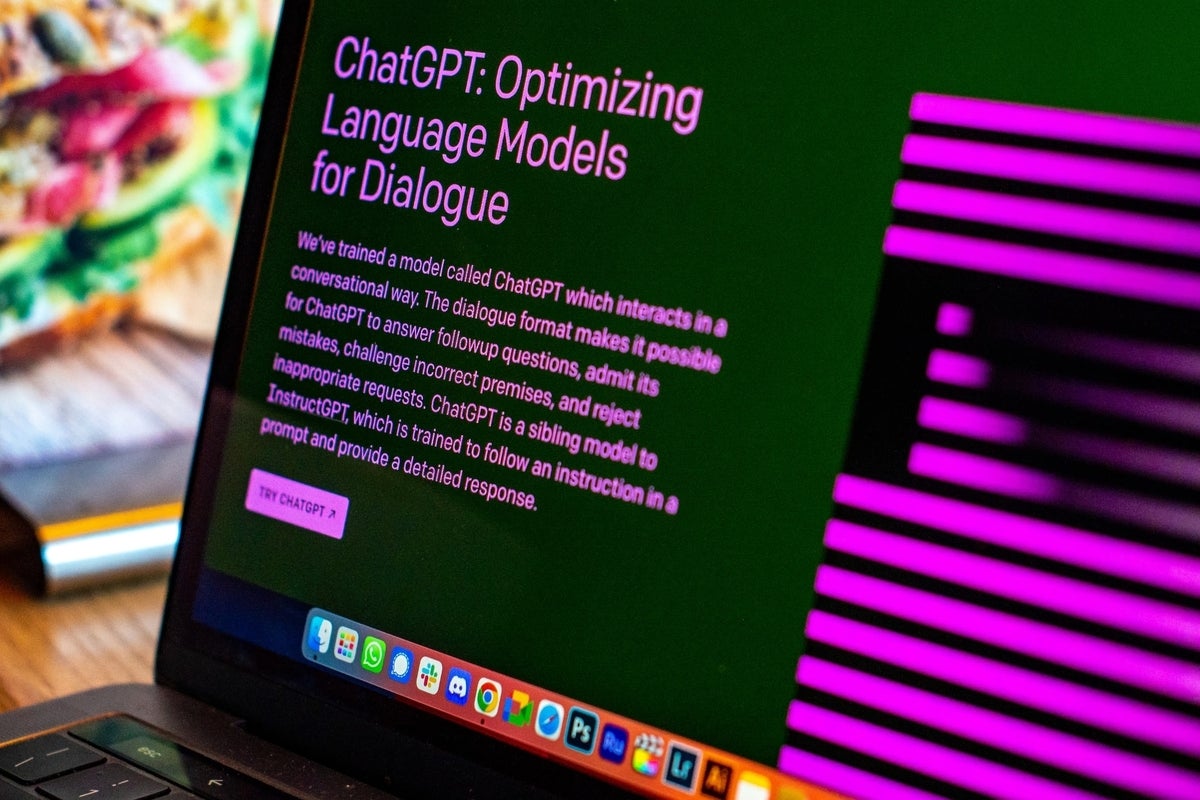

AI can write your emails, reports, and essays. But can it express your emotions? Should it?

What do we lose when we outsource expressing our emotions to an AI chatbot?

We've all heard that sitting on our emotions and feeling them is how we

process them and get the intensity to pass. Speaking from the heart about a

complex, heavy topic is one way we can feel true catharsis. AI can't do that

processing for us. There's a common theme during periods of technological

innovation that technology is supposed to do the mundane, annoying, dangerous,

or insufferable tasks that humans hate doing. Many of us would sometimes

prefer to avoid emotional processing. But experiencing complex emotions is

what makes us human. And it's one of the few things an AI model as advanced as

ChatGPT can't do. If you think of expressing emotions as less of an experience

and more of a task, it might seem clever to automate them. But you can't

conquer human emotions by passing the unsavory parts of them to a language

model. Emotions are critical to the human experience, and denying them their

place within yourself can lead to unhealthy coping mechanisms and poor

physical health.

Benefits of data mesh might not be worth the cost

Data mesh might be a good framework for businesses that acquire companies but

don't consolidate with them, thus wanting a decentralized approach to most or

even all of the individual companies' data, Thanaraj said. It might also be a

good option for large organizations that operate in multiple countries. These

organizations' leaders might want to -- and are sometimes required to --

maintain local data autonomy. "That's where I see data mesh being a much more

appropriate data architecture to apply," Thanaraj said. Still, questions remain

about the long-term value of data mesh. In fact, Gartner labeled data mesh as

"obsolete before plateau" in its 2022 "Hype Cycle for Data Management."

Moreover, organizations could more readily use other better-defined and more

easily implemented approaches to improve their data programs, Aiken said.

Organizations have DataOps, existing data management frameworks and data

governance practices at their disposal. If a data program doesn't follow best

data management practices, data mesh won't improve it. "Those improvements could

be achieved by other practices that don't have a buzz around them like data

mesh," he said.

Do the productivity gains from generative AI outweigh the security risks?

In short, using generative AI to code is dangerous, but its efficiencies are

so great that it will be extremely tempting for corporate executives to use it

anyway. Bratin Saha, vice president for AI and ML Services at AWS, argues the

decision doesn’t have to be one or the other. How so? Saha maintains that the

efficiency benefits of coding with generative AI are so sky-high that there

will be plenty of dollars in the budget for post-development repairs. That

could mean enough dollars to pay for extensive security and functionality

testing in a sandbox — both with automated software and expensive human talent

— and the very attractive spreadsheet ROI. Software development can be

executed 57% more efficiently with generative AI — at least the AWS flavor —

but that efficiency gets even better if it replaces less experienced coders,

Saha said in a Computerworld interview. “We have trained it on lots of

high-quality code, but the efficiency depends on the task you are doing and

the proficiency level,” Saha said, adding that a coder “who has just started

programming won’t know the libraries and the coding.”

The staying power of shadow IT, and how to combat risks related to it

The problem, when it comes to uncovering shadow IT, is that information about

what applications exist and who has access to them is spread across a company,

in many different silos. It lives in the files of sometimes hundreds of

business application owners – end-users in marketing, sales, customer service,

finance, HR, product development, legal and other departments who acquired the

applications. How do most organizations go about finding this data? They send

emails, Microsoft Teams or Slack messages to employees asking them to notify

IT if they have purchased or signed up for a free app, and who they’ve given

access to (and hope everyone will respond). Then IT manually inputs any

information they get into a spreadsheet. ... The data must be automatically

and continuously collected and normalized. It must be made available to all

SaaS management stakeholders, from the people who own and must therefore take

responsibility for managing their apps, to IT leaders and admins, IT security

teams, procurement managers, and more.
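
As a rough illustration of what "collected and normalized" could mean in practice, the sketch below merges app reports from different departments into a single inventory. The record fields (`app`, `owner`, `users`) and the function name are assumptions made for this example, not the schema of any SaaS management tool.

```python
def normalize_app_records(records):
    """Merge per-department app reports into one inventory keyed by normalized app name."""
    inventory = {}
    for record in records:
        # Treat " Slack " and "slack" as the same application.
        key = record["app"].strip().lower()
        entry = inventory.setdefault(key, {"owners": set(), "users": set()})
        entry["owners"].add(record["owner"].strip().lower())
        entry["users"].update(user.strip().lower() for user in record.get("users", []))
    return inventory
```

Feeding such a function from automated sources (expense data, single sign-on logs, and so on) instead of email replies is what removes the manual spreadsheet step; the sources themselves are outside the scope of this sketch.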

Quote for the day:

“Unless we are willing to go through

that initial awkward stage of becoming a leader, we can’t grow.” --

Claudio Toyama