Use cases for AI and ML in cyber security

With more employees working from home, and possibly using their personal

devices to complete tasks and collaborate with colleagues more often, it’s

important to be wary of scams that are afoot within text messages. “With

malicious actors recently diversifying their attack vectors, using Covid-19 as

bait in SMS phishing scams, organisations are under a lot of pressure to

bolster their defences,” said Brian Foster, senior vice-president of product

management at MobileIron. “To protect devices and data from these advanced

attacks, the use of machine learning in mobile threat defence (MTD) and other

forms of managed threat detection continues to evolve as a highly effective

security approach. “Machine learning models can be trained to instantly

identify and protect against potentially harmful activity, including unknown

and zero-day threats that other solutions can’t detect in time. Just as

important, when machine learning-based MTD is deployed through a unified

endpoint management (UEM) platform, it can augment the foundational security

provided by UEM to support a layered enterprise mobile security strategy.

“Machine learning is a powerful, yet unobtrusive, technology that continually

monitors application and user behaviour over time so it can identify the

difference between normal and abnormal behaviour. ...”

Evilnum group targets FinTech firms with new Python-based RAT

The infection chain also adds a rogue scheduled task called “Adobe Update

Task”, which executes yet another malicious downloader that poses as Adobe's

Flash Player and is called Fplayer.exe. This file is a maliciously modified

version of Nvidia's Stereoscopic 3D driver Installer. It seems that the

Evilnum attackers have gone to great lengths to maintain persistence and

stealth by impersonating a variety of legitimate programs that administrators

might not find suspicious on a Windows system. The PyVil RAT talks to the

command-and-control (C&C) server using HTTP but the data inside is

encrypted with a hard-coded key to hide it from network-level Web traffic

inspection products. In the past, Evilnum configured its malware to only talk

to command-and-control servers using IP addresses, not domain names. However,

Cybereason has detected a growing number of domains being associated with the

IP addresses used by the Evilnum C&C infrastructure during the past weeks,

signaling a change in tactics as well as a growing infrastructure. The

researchers also observed PyVil RAT downloading a custom version of an

open-source password dumping tool called LaZagne, a post-exploitation tool

that's written in Python and is popular with penetration testers.

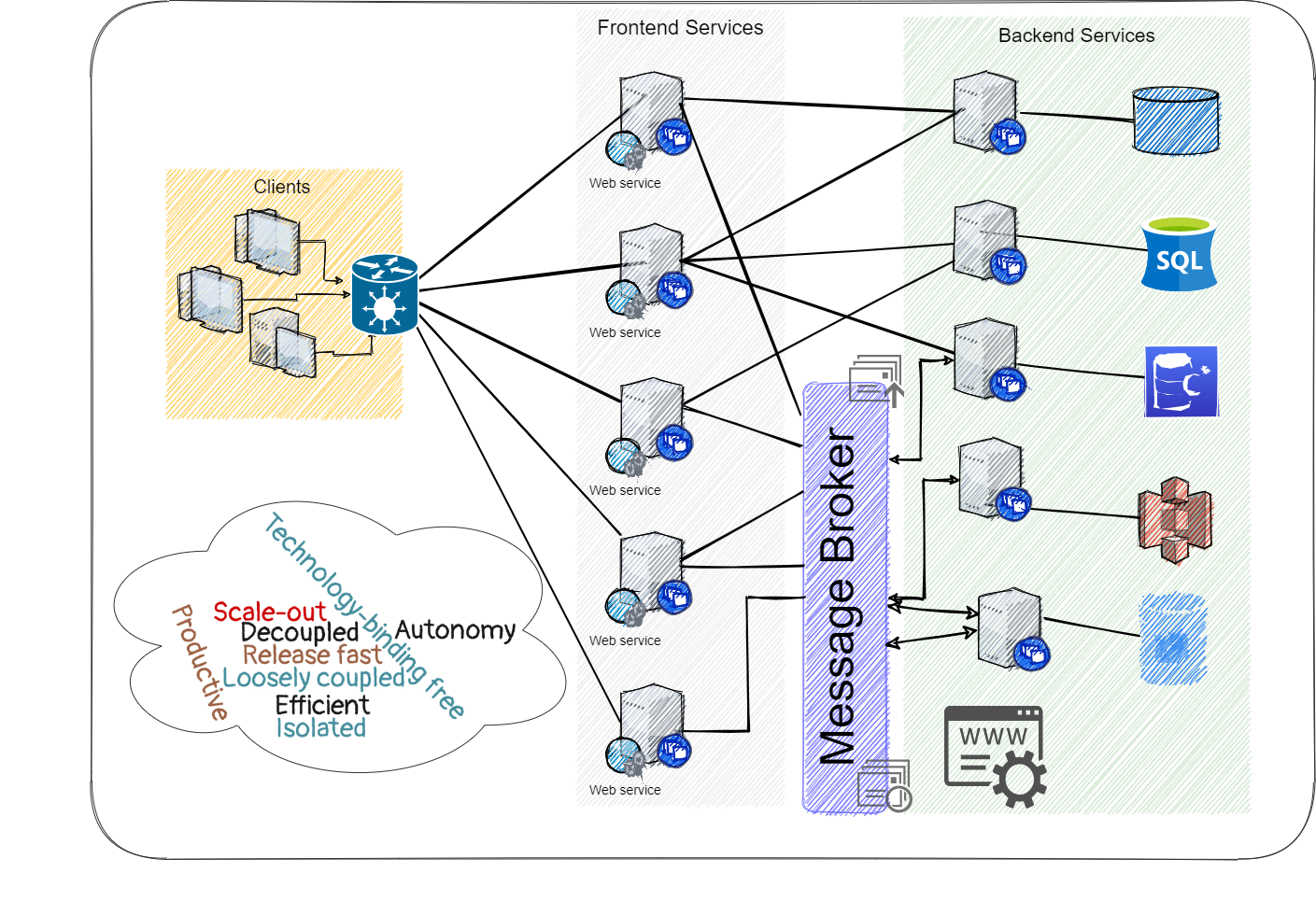

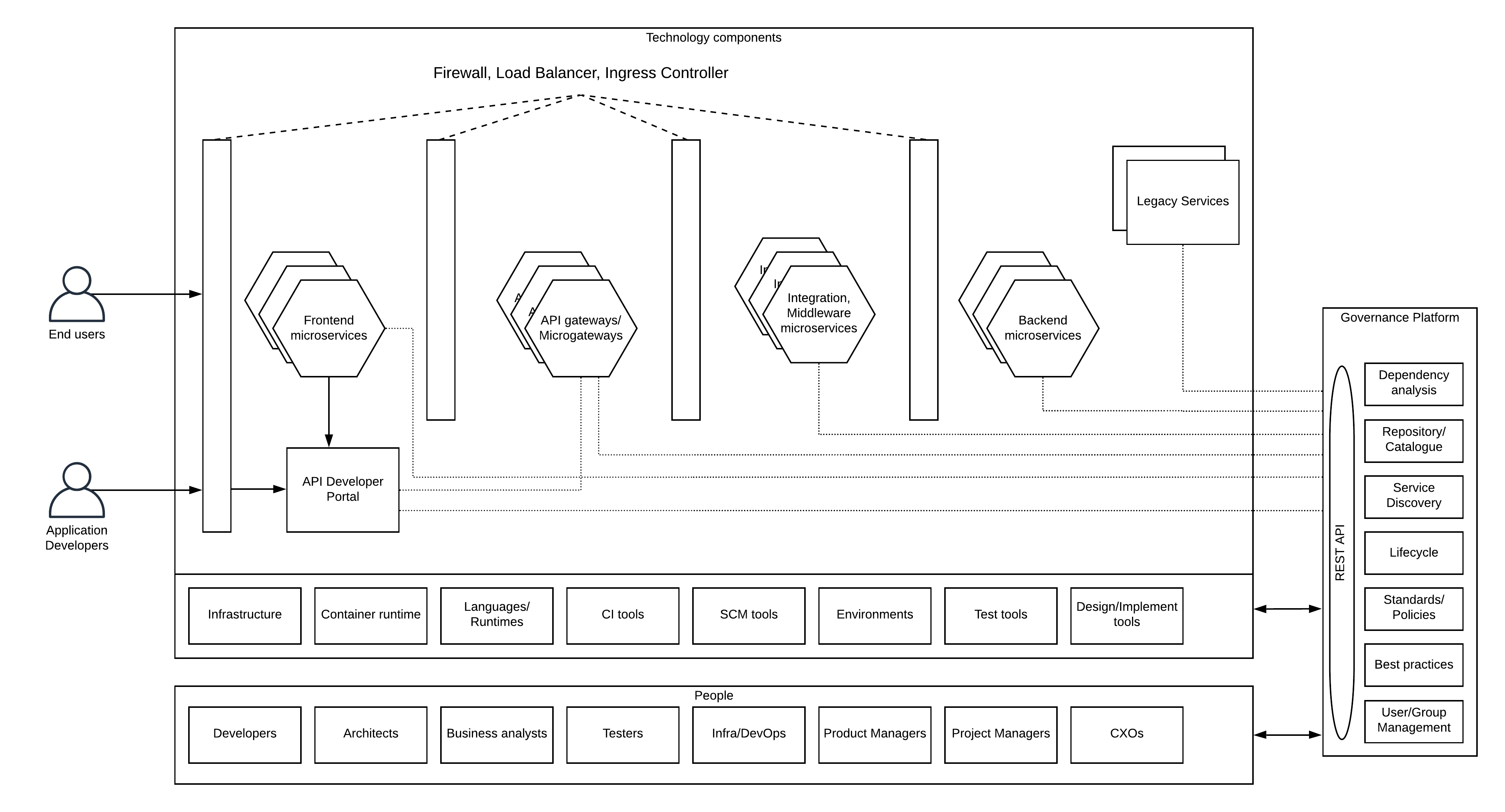

Open source data control for cloud services with Apache Ranger

RBAC is based on the concepts of users, roles, groups, and privileges in an

organization. Administrators grant privileges or permissions to pre-defined

organizational roles—roles that are assigned to subjects or users based on

their responsibility or area of expertise. For example, a user who is assigned

the role of a manager might have access to a different set of objects and/or

is given permission to perform a broader set of actions on them as compared to

a user with the assigned role of an analyst. When the user generates a request

to access a data object, the access control mechanism evaluates the role

assigned to the user and the set of operations this role is authorized to

perform on the object before deciding whether to grant or deny the request.

RBAC simplifies the administration of data access controls because concepts

such as users and roles are well-understood constructs in a majority of

organizations. In addition to being based on familiar database concepts, RBAC

also offers administrators the flexibility to assign users to various roles,

reassign users from one role to another, and grant or revoke permissions as

required. Once an RBAC framework is established, the administrator's role is

primarily to assign users to specific roles or remove them.
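
The evaluation described above (look up the user's roles, then check whether any role is authorized for the requested operation on the object) can be sketched in a few lines of Python. This is a minimal illustration only, not Apache Ranger's API: the `RBACPolicy` class, its method names, and the users, roles and objects below are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class RBACPolicy:
    """Toy in-memory RBAC store (hypothetical; not Apache Ranger's API)."""

    # role -> set of (object, action) privileges granted to that role
    role_privileges: dict = field(default_factory=dict)
    # user -> set of roles assigned to that user
    user_roles: dict = field(default_factory=dict)

    def grant(self, role, obj, action):
        self.role_privileges.setdefault(role, set()).add((obj, action))

    def revoke(self, role, obj, action):
        self.role_privileges.get(role, set()).discard((obj, action))

    def assign(self, user, role):
        self.user_roles.setdefault(user, set()).add(role)

    def unassign(self, user, role):
        self.user_roles.get(user, set()).discard(role)

    def is_allowed(self, user, obj, action):
        # Grant the request only if one of the user's roles holds the privilege.
        return any(
            (obj, action) in self.role_privileges.get(role, set())
            for role in self.user_roles.get(user, set())
        )


# Hypothetical setup: managers may read and update, analysts may only read.
policy = RBACPolicy()
policy.grant("analyst", "sales_table", "read")
policy.grant("manager", "sales_table", "read")
policy.grant("manager", "sales_table", "update")
policy.assign("alice", "manager")
policy.assign("bob", "analyst")
```

With this setup, `policy.is_allowed("bob", "sales_table", "update")` is denied until an administrator reassigns bob to the manager role, which mirrors the point that day-to-day administration mostly consists of moving users between roles.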

Using Measurement to Optimise Remote Work

Citrix’s Remote Works Podcast recently interviewed Laura Giurge, a

post-doctoral researcher at London Business School and Oxford University’s

Wellbeing Research Centre. Giurge explained that the pandemic has created a

"big experiment of working from home." She explained that its findings were

challenging the traditional assumption that productivity is measured in hours

worked, rather than the impact of an employee’s output. Giurge explained that

this required a change in mindset and was particularly challenging for

traditional managers: “It is really hard for managers, if you are really used

to seeing your employees in the office and all of a sudden you’re not. It’s

very difficult. But if you start from a mindset of experimentation and

understanding there are better ways for experimenting with new ways of working

and seeing what works, then you are likely to get your employees to work

better and also be happier.” Longman wrote that he "calculated the average

number of stories" completed "during 2019 and used this as a comparison with

2020 data." By examining trends by month and by quarter he wrote that "both

views suggested that the work completed during lockdown was within ...

expected levels of volatility."
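
One way to picture this kind of comparison is sketched below. The excerpt does not say how "expected levels of volatility" were defined, so the rule used here (the observed value lies within `k` standard deviations of the baseline mean) is an assumption, and the monthly story counts are invented purely for illustration.

```python
from statistics import mean, stdev


def within_expected_volatility(baseline, observed, k=2.0):
    """Return True if `observed` lies within k sample standard deviations
    of the baseline mean. The k-sigma rule is an assumed definition."""
    mu = mean(baseline)
    sigma = stdev(baseline)
    return abs(observed - mu) <= k * sigma


# Hypothetical stories completed per month in 2019 (not real data).
stories_2019 = [40, 44, 38, 42, 46, 41, 39, 43, 45, 40, 42, 44]
```

A lockdown month would then be checked with `within_expected_volatility(stories_2019, observed_count)`; the same function works for quarterly totals if the baseline is aggregated by quarter.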

Data Labeling for Natural Language Processing: a Comprehensive Guide

Once you have identified your training data, the next big decision is in

determining how you’d like to label that data. The labels to be applied can

lead to completely different algorithms. One team browsing a dataset of

receipts may want to focus on the prices of individual items over time and use

this to predict future prices. Another may be focused on identifying the

store, date and timestamp and understanding purchase patterns. Practitioners

will refer to the taxonomy of a label set. What level of granularity is

required for this task? Is it enough to understand that a customer is sending

in a customer complaint and route the email to the customer support team? Or

would you like to specifically understand which product the customer is

complaining about? Or even more specifically, whether they are asking for an

exchange/refund, complaining of a defect, an issue in shipping, etc.? Note

that the more granular the taxonomy you choose, the more training data will be

required for the algorithm to adequately train on each individual label;

phrased differently, each label requires a sufficient number of examples, so

more labels means more labeled data overall.
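
The trade-off between granularity and data volume can be made concrete with a small sketch. It assumes labels are written coarse-to-fine as slash-separated paths; the example emails, label names and the `min_examples` threshold are hypothetical.

```python
from collections import Counter

# Hypothetical labeled emails; each label is a coarse-to-fine path.
labeled = [
    ("My blender arrived cracked", "complaint/blender/defect"),
    ("Blender stopped working after a week", "complaint/blender/defect"),
    ("I want my money back for the blender", "complaint/blender/refund"),
    ("Toaster box was crushed in transit", "complaint/toaster/shipping"),
    ("Toaster never arrived", "complaint/toaster/shipping"),
    ("Please refund the toaster", "complaint/toaster/refund"),
]


def counts_at_depth(examples, depth):
    """Count examples per label after truncating each label to `depth` levels."""
    return Counter("/".join(label.split("/")[:depth]) for _, label in examples)


def underfilled(examples, depth, min_examples):
    """List labels at the given depth that have fewer than `min_examples` examples."""
    counts = counts_at_depth(examples, depth)
    return sorted(label for label, n in counts.items() if n < min_examples)
```

At depth 1 all six emails share one label, while at depth 3 the same six emails are spread over four labels and two of them fall below a threshold of two examples, which is the effect the paragraph above describes.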

Chilean bank shuts down all branches following ransomware attack

The incident is currently being investigated as having originated from a

malicious Office document received and opened by an employee. The malicious

Office file is believed to have installed a backdoor on the bank's network.

Investigators believe that on the night between Friday and Saturday, hackers

used this backdoor to access the bank's network and install ransomware. Bank

employees working weekend shifts discovered the attack when they couldn't

access their work files on Saturday. BancoEstado reported the incident to

Chilean police, and on the same day, the Chilean government sent out a

nationwide cyber-security alert warning about a ransomware campaign targeting

the private sector. While the bank initially hoped to recover from the attack

unnoticed, the damage was extensive, according to sources, with the ransomware

encrypting the vast majority of internal servers and employee workstations.

The bank initially disclosed the attack on Sunday, but as time went by, bank

officials realized employees wouldn't be able to work on Monday, and decided

to keep branches closed while they recovered. Luckily, it appears the bank had

done its job and properly segmented its internal network, which limited what

the hackers could encrypt.

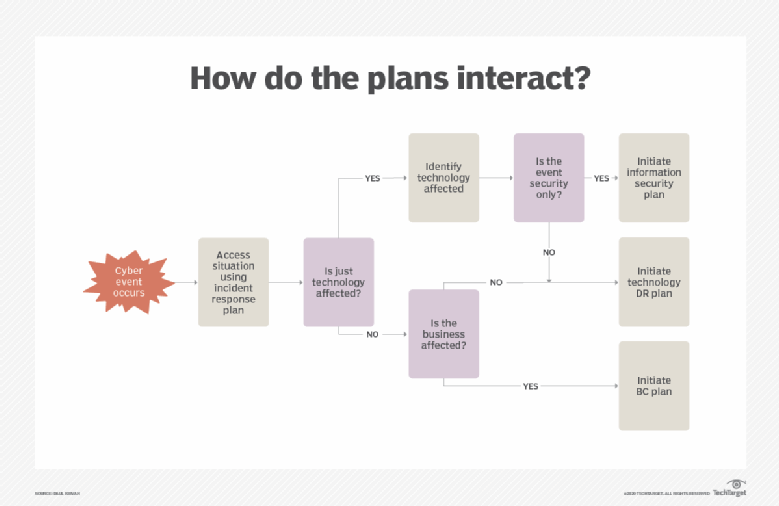

How to ensure cybersecurity and business continuity plans align

Ideally, according to industry good practice, a disruptive incident should

trigger an IR plan that assesses the damage and initiates steps to respond

quickly to the cyber incident. Results of the IR plan can trigger a BC or a DR

plan, or both, based on the nature of the event. BC/DR plans recover and

restore critical assets -- people, processes, technology and facilities -- the

business needs to function. Cybersecurity plans respond to specific disruptive

events and may include an IR plan component to determine the nature of the

event before launching response activities. The key is to determine at what

point the cybersecurity attack threatens the organization and its ability to

conduct business. This suggests that descriptive language should be added to

cybersecurity plans to trigger IR, as well as BC/DR plans. Let's assume

there's a full complement of plans in place that deal with business- and

technology-focused incidents. In some cases, only a specific security strategy

or plan -- e.g., information security -- will be needed. In other situations,

one or more plans may need to be launched. The figure below depicts a simple

decision flow diagram showing how such plan linkages may be arranged and

launched in response to a cybersecurity attack.
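
Independently of the figure, the linkage described in this excerpt (the IR plan assesses the incident, and its results may trigger BC, DR, or both) can be sketched in code. The `Assessment` fields and the mapping of each finding to a plan are assumptions made for illustration; a real organization would define its own trigger criteria.

```python
from dataclasses import dataclass


@dataclass
class Assessment:
    """Hypothetical findings produced by the IR plan's damage assessment."""
    business_operations_threatened: bool
    technology_assets_damaged: bool


def plans_to_launch(assessment):
    """Return the plans to activate, always starting with incident response."""
    plans = ["IR"]
    if assessment.business_operations_threatened:
        plans.append("BC")  # assumed trigger: ability to conduct business at risk
    if assessment.technology_assets_damaged:
        plans.append("DR")  # assumed trigger: technology must be restored
    return plans
```

For example, an incident that damages systems and also threatens operations would return all three plans, while a contained incident returns only the IR plan.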

UK tech sector vacancies up 36% during summer — Tech Nation

“Since lockdown, companies have come to realise that they need

industrial-grade technology to run their businesses and tech companies are

hiring people to service these new customers, expand and build new products,”

said Haakon Overli, co-founder of enterprise software-focused venture fund

Dawn Capital. “We’re seeing it right across our portfolio.” The Tech Nation

research also suggests that recovery from the pandemic is set to be uneven,

with industries such as travel and retail predicted to drastically cut their

workforces, while others are able to prosper from changes in customer

behaviour. Additionally, the report said the trend of remote working will

continue to open up “high-paid, quality” opportunities to residents outside

larger cities. Recent research from Culture Shift found that culture has

improved for tech sector employees while remote working. However, 50% said

they feel isolated while working from home. Despite employment in the UK tech

sector looking promising due to the surge in vacancies, the skills gap remains

an issue, with two-thirds of businesses already having unfilled digital skills

vacancies, while 58% say they’ll need significantly more digital skills in the

next five years, according to the CBI.

Why More Healthcare Providers are Moving to Public Cloud

What may be one of the hardest truths of this extraordinary time -- apart

from the human suffering -- is that the need for dynamic surge capacity will

not disappear when a vaccine is available. As the World Economic Forum has

said, we have entered a new era where the risk of future pandemics is high.

This forever alters the infrastructure needed to support shifting demands on

technology. The public cloud offers the systems resilience that healthcare

providers need in order to sustain operations under severe disruption, flexing

to address highly volatile customer demand and managing vastly increased needs

for remote network access. Providers long viewed investing in the public cloud

as a risky business because of security concerns. But over the past two years,

many have begun their cloud journey buoyed by other industries’ and research

institutions’ embrace of its “deny by default” security posture and, most

importantly, limitless opportunities for innovation. There could not be a

better time for this. An investment in systems resilience via cloud is an

investment in business enablement. A resilient technology infrastructure

scales up or down on demand based on real-time changes in usage to support

care volume variability. It identifies traffic spikes and automatically

adjusts capacity to drive responsiveness with new cost efficiencies.

Cybersecurity Skills Gap Worsens, Fueled by Lack of Career Development

The fundamental causes for the skill gap are myriad, starting with a lack of

training and career-development opportunities. About 68 percent of the

cybersecurity professionals surveyed by ESG/ISSA said they don’t have a

well-defined career path, and basic growth activities, such as finding a mentor,

getting basic cybersecurity certifications, taking on cybersecurity internships

and joining a professional organization, are missing steps in their endeavors.

The survey also found that many professionals start out in IT, and find

themselves working in cybersecurity without a complete skill set. A full 63

percent of respondents in the survey said they’ve worked in cybersecurity for

less than three years, with 76 percent starting as IT professionals before

switching their career to cybersecurity. “Cybersecurity professionals often

muddle through their careers with little direction, jumping from job to job and

enhancing their skill sets on the fly rather than in any systematic way,”

according to the report. To go along with this, the survey asked respondents to

speculate on how long it takes a cybersecurity professional to become proficient

at the job. The highest percentage of respondents (39 percent) believe it takes

anywhere from three to five years to develop real cybersecurity proficiency,

while 22 percent say two to three years, and 18 percent claim it takes more than

five years.

Quote for the day:

"It's fine to celebrate success but it is more important to heed the lessons of failure." -- Bill Gates