Analysis by researchers at cybersecurity company Tessian reveals that 52 percent of employees believe they can get away with riskier behaviour when working from home, such as sharing confidential files via email instead of more trusted mechanisms. ... In some cases, employees aren't purposefully ignoring security practices, but distractions while working from home such as childcare, room-mates and not having a desk set-up like they would at the office are having an impact on how people operate. Meanwhile, some employees say they're being forced to cut security corners because they're under pressure to get work done quickly. Half of those surveyed said they've had to find workarounds for security policies in order to efficiently do the work they're required to do – suggesting that in some cases, security policies are too much of a barrier for employees working from home to adapt to. However, by adopting workarounds employees could be putting their organisation at risk from cyber attacks, especially as hackers increasingly turn their attention to remote workers. "But, all it takes is one misdirected email, incorrectly stored data file, or weak password, before a business faces a severe data breach that results in the wrath of regulations and financial turmoil".

Google, Microsoft most spoofed brands in latest phishing attacks

In form-based phishing attacks, scammers leverage sites such as Google Docs and Microsoft Sway to trap victims into revealing their login credentials. The initial phishing email typically contains a link to one of these legitimate sites, which is why these attacks can be difficult to detect and prevent. Among the nearly 100,000 form-based attacks that Barracuda detected over the first four months of 2020, Google file sharing and storage sites were used in 65% of them. These attacks included such sites as storage.googleapis.com, docs.google.com, storage.cloud.google.com, and drive.google.com. Microsoft brands were spoofed in 13% of the attacks, exploiting such sites as onedrive.live.com, sway.office.com, and forms.office.com. Beyond Google and Microsoft, other sites spoofed in these attacks were sendgrid.net, mailchimp.com, and formcrafts.com. ... criminals try to spoof emails that seem to have been created automatically through file sharing sites such as Microsoft OneDrive. The emails contain links that take users to a legitimate site such as sway.office.com. But that site then leads the victim to a phishing page prompting for login credentials.
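
To make the detection problem concrete, here is a minimal sketch that picks out links pointing at the legitimate services named in the excerpt. The host list is taken from the text; the function name `flag_form_hosts` and the choice to treat a match as "worth a second look" rather than "malicious" are assumptions for illustration, not a description of how Barracuda detects these attacks.

```python
import re
from urllib.parse import urlparse

# Hosts named in the excerpt as being abused in form-based phishing attacks.
FORM_HOSTS = {
    "storage.googleapis.com", "docs.google.com", "storage.cloud.google.com",
    "drive.google.com", "onedrive.live.com", "sway.office.com",
    "forms.office.com", "sendgrid.net", "mailchimp.com", "formcrafts.com",
}

# Deliberately simple URL matcher; real mail parsing would be more careful.
URL_PATTERN = re.compile(r"https?://[^\s<>\"']+")


def flag_form_hosts(email_body):
    """Return links whose host is, or is a subdomain of, a listed host."""
    flagged = []
    for url in URL_PATTERN.findall(email_body):
        host = (urlparse(url).hostname or "").lower()
        if any(host == h or host.endswith("." + h) for h in FORM_HOSTS):
            flagged.append(url)
    return flagged


# Hypothetical message body used only to exercise the function.
sample = "Shared file: https://sway.office.com/abc123 or https://example.com/x"
flagged_links = flag_form_hosts(sample)
```

Because every one of these hosts also carries legitimate traffic, a match on its own proves nothing, which is exactly why the excerpt calls these attacks difficult to detect.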

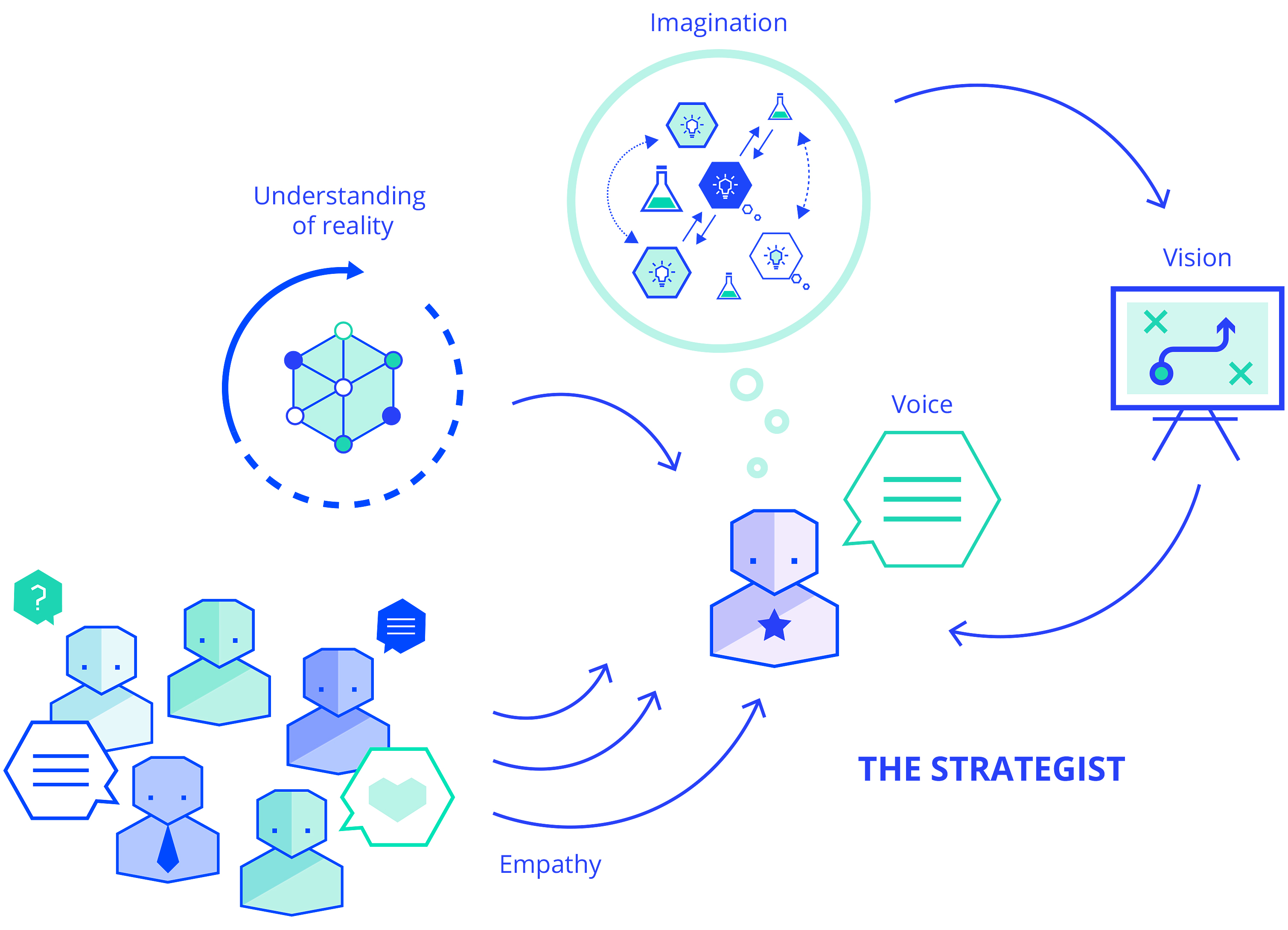

Four ways to reflect that help boost performance

On top of a mountain, a leader retreats to ask him or herself a set of questions about life, stress, and sacrifice, capturing the answers in a beautifully bound notebook. The questions don’t vary much. Where are you going? How are you living your values? What gives you meaning, purpose, or fulfillment? Are all the components of your life managed as you need them to be managed: career, family, friends, finances, health, and spiritual growth? The power of this reflection comes from digging deep and being in touch with your core. It is very much an affair of the heart. With the insights from this exercise, you come back to your role renewed, focused on what matters to you and clearer about how you will lead this year. Although this kind of deep reflection is a useful process, it may not be enough to tackle the range of problems a business encounters in the course of a year because it focuses solely on the leader. In our experience working together and independently coaching leaders, we find that they and their teams benefit from four ways of more targeted reflection that help refocus and reframe challenges.

IT Staffing Guide

After taking the time to write out your job description and put it out there on as many job boards as possible, you can only hope and pray that the right candidate finds you. Meanwhile, your organization loses time and money while operating with less than a full staff and taking time away from work to conduct interviews that may or may not lead to a successful hire. In the best-case scenario, you find someone great, and you are just out the original time and money. In the worst-case scenario, time drags on, and no one who is right for the position ever applies, or you hire someone, and it doesn’t work out, hopefully only once. A thriving, growing company just does not have time for this every time it needs to add to the team. In short, IT staffing agencies will save your company both time and money. IT staffing agencies take the time to get to know the needs of both the company and the potential employees, and to match the two in both technical and cultural aspects.

Flutter: Reusable Widgets

Most of the time, we duplicate many widgets just to make a small change. What is the best way to avoid this? Creating Reusable Widgets. It’s always good practice to use Reusable Widgets to maintain consistency throughout the app. When we are dealing with multiple projects, we don’t want to write the same code multiple times. That creates duplication and, in the end, if any issue comes up we end up with a mess. So, the best way is to create a base widget and use it everywhere. You can modify it based on your requirements, and another advantage is that if a change is needed, you make it in one place and it is reflected everywhere. ... Try to put as little business logic as possible inside a UI widget. All communication between the user and the UI should be done via events. That way, if you need to use the same widget in another project, you can do it quickly. ... Accessing data via callbacks is the best way to separate your View from your business logic (just like View and ViewModel).
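
The callback idea can be sketched independently of any framework. The example below is written in Python rather than Dart, and is not Flutter code; the names `ReusableButton` and `CounterViewModel` are hypothetical and exist only to show a UI component that raises events while the logic lives elsewhere.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class ReusableButton:
    """A UI component that holds presentation details only."""

    label: str
    on_pressed: Callable[[], None]  # supplied by whoever uses the button

    def render(self) -> str:
        # Stand-in for drawing the widget on screen.
        return f"[ {self.label} ]"

    def press(self) -> None:
        # The widget only raises the event; it does not know what happens next.
        self.on_pressed()


class CounterViewModel:
    """Business logic kept outside the widget."""

    def __init__(self) -> None:
        self.count = 0

    def increment(self) -> None:
        self.count += 1


view_model = CounterViewModel()
button = ReusableButton(label="Add", on_pressed=view_model.increment)
button.press()
button.press()
```

Since the button knows nothing about counters, the same class could be handed a different callback in another screen or project without modification.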

The mobile testing gotchas you need to know about

If you’re dealing with a native mobile application, you can find yourself in the wild west. It’s not so bad on iOS, where current OS support is available for devices several years old, but in the Android world, the majority of currently active devices are running versions four or five years old. This presents a huge challenge for testing. In my group, we’re lucky enough to only deliver on iPads, and we set a policy of only supporting the currently shipping version of iOS and one major release back. But if you are trying to be more inclusive or are stuck supporting the much more heterogeneous Android ecology, you have to do a lot of testing across multiple devices and OS versions. You can’t even get away with testing on a lowest common denominator release. Your dev team is probably conditionally taking advantage of new OS features, such as detecting which OS version the device is running and using more modern features when available. As a result, you have to test against pretty much every version of the OS you need to support.
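
A support policy like the one described ("the currently shipping version and one major release back") translates directly into a test matrix. The sketch below assumes major versions are plain integers; the function names, version numbers, and device names are hypothetical placeholders, not the author's actual setup.

```python
from itertools import product


def supported_versions(released_majors, versions_back=1):
    """Return the newest major version plus `versions_back` earlier ones, newest first."""
    ordered = sorted(set(released_majors), reverse=True)
    return ordered[: versions_back + 1]


def build_test_matrix(devices, os_versions):
    """Pair every device with every OS version that must be tested."""
    return list(product(devices, os_versions))


# Hypothetical inputs for illustration only.
ios_targets = supported_versions([11, 12, 13])
matrix = build_test_matrix(["iPad Air", "iPad Pro"], ios_targets)
```

The matrix grows as devices times versions, which is why widening `versions_back` on a fragmented platform gets expensive so quickly.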

Fujitsu delivers exascale supercomputer that you can soon buy

Fujitsu announced last November a partnership with Cray, an HPE company, to sell Cray-branded supercomputers with the custom processor used in Fugaku. Cray has already deployed four systems for early evaluation, located at Stony Brook University, Oak Ridge National Laboratory, Los Alamos National Laboratory, and the University of Bristol in Britain. According to Cray, systems have been shipped to customers interested in early evaluation, and it is planning to officially launch the A64fx system featuring the Cray Programming Environment later this summer. Fugaku is remarkable in that it contains no GPUs but instead uses a custom-built Arm processor designed entirely for high-performance computing. The motherboard has no memory slots; the memory is on the CPU die. If you look at the Top500 list now and the proposed exaFLOP computers planned by the Department of Energy, they all use power-hungry GPUs. As a result, the Fugaku prototype topped the Green500 ranking last fall as the most energy-efficient supercomputer in the world. Nvidia’s new Ampere A100 GPU may best the A64fx in performance, but with its 400-watt power draw it will use a lot more power.

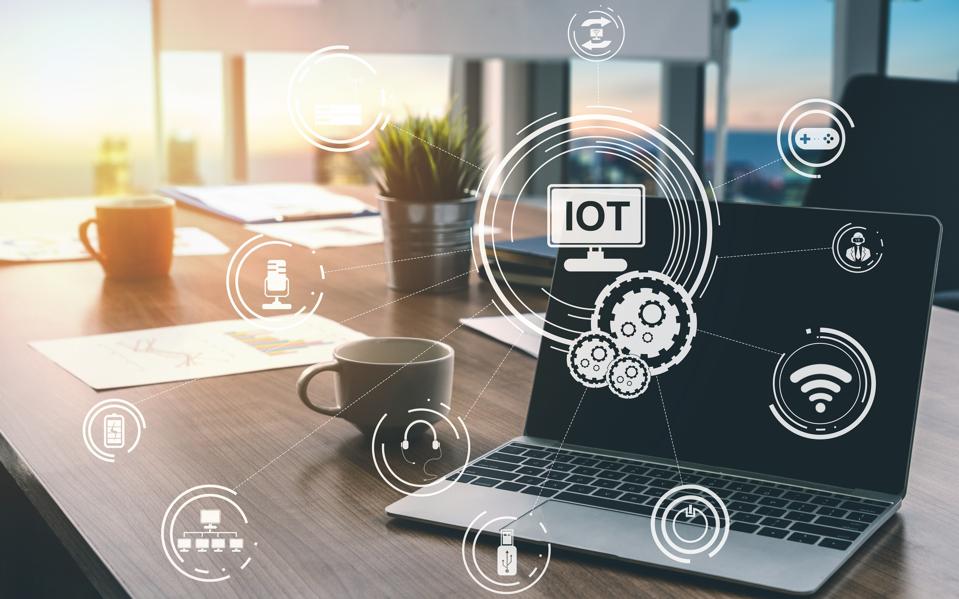

Use of cloud collaboration tools surges and so do attacks

The use rate of certain collaboration and videoconferencing tools has been particularly high. Cisco Webex usage has increased by 600%, Zoom by 350%, Microsoft Teams by 300% and Slack by 200%. Again, manufacturing and education ranked at the top. While this rise in the adoption of cloud services is understandable and, some would argue, a good thing for productivity in light of the forced work-from-home situation, it has also introduced security risks. McAfee's data shows that traffic from unmanaged devices to enterprise cloud accounts doubled. "There's no way to recover sensitive data from an unmanaged device, so this increased access could result in data loss events if security teams aren't controlling cloud access by device type." Attackers have taken notice of this rapid adoption of cloud services and are trying to exploit the situation. According to McAfee, the number of external threats targeting cloud services increased by 630% over the same period, with the greatest concentration on collaboration platforms.

Analytics critical to decisions about how to return to work

"There's a couple of things, and one is understanding your performance," Menninger said. "That's a key aspect of analytics -- understanding your current performance, extrapolating from that performance, planning and looking forward with that information -- and finding some patterns in the past that perhaps might be useful." Doing an internal analysis can also help an organization find ways to cut costs it may not have taken advantage of in the past. Trimming costs, meanwhile, is something many enterprises don't do when the economy is more stable and their profits more predictable, but economic uncertainty forces organizations to more closely examine their spending, said Mike Palmer, CEO of analytics startup Sigma Computing. "One thing to look at is how to optimize the business -- where do I have efficiencies that I can gain, how many do I have?" Palmer said. "There are so many questions that the average company doesn't effectively answer in good times because they don't focus on optimization."

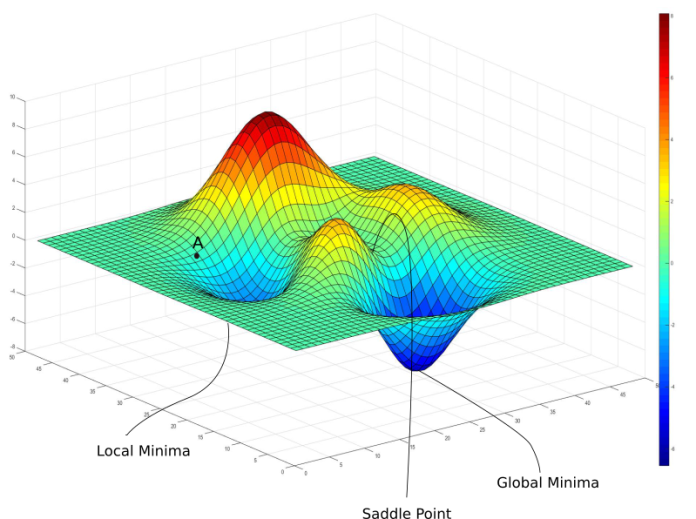

Machine Learning in Java With Amazon Deep Java Library

Interest in machine learning has grown steadily over recent years. Specifically, enterprises now use machine learning for image recognition in a wide variety of use cases. There are applications in the automotive industry, healthcare, security, retail, automated product tracking in warehouses, farming and agriculture, food recognition and even real-time translation by pointing your phone’s camera. Thanks to machine learning and visual recognition, machines can detect cancer and COVID-19 in MRIs and CT scans. Today, many of these solutions are primarily developed in Python using open source and proprietary ML toolkits, each with their own APIs. Despite Java's popularity in enterprises, there aren’t any standards to develop machine learning applications in Java. ... One of these implementations is based on Deep Java Library (DJL), an open source library developed by Amazon to build machine learning in Java. DJL offers hooks to popular machine learning frameworks such as TensorFlow, MXNet, and PyTorch by bundling requisite image processing routines, making it a flexible and simple choice for JSR-381 users.

Quote for the day:

"It is one thing to rouse the passion of a people, and quite another to lead them." -- Ron Suskind