Real Time Matters in Endpoint Protection

And the problem is pervasive. According to a report from IDC, 70% of all successful network breaches start on endpoint devices. The astonishing number of exploitable operating system and application vulnerabilities makes endpoints an irresistible target for cybercriminals. They are not just desirable because of the resources residing on those devices, but also because they are an effective entryway for taking down an entire network. While most CISOs agree that prevention is important, they also understand that 100% effectiveness over time is simply not realistic. In even the most conscientious security hygiene practice, patching occurs in batches rather than as soon as a new patch is released. Security updates often trail behind threat outbreaks, especially those from malware campaigns as opposed to variants of existing threats. And there will always be that one person who can’t resist clicking on a malicious email attachment. Rather than consigning themselves to defeat, however, security teams need to adjust their security paradigm. When an organization begins to operate under the assumption that every endpoint device may already be compromised, defense strategies become clearer, and things like zero trust network access and real-time detection and defusing of suspicious processes become table stakes.

The Problem with Artificial Intelligence in Security

There is a lot of promise for machine learning to augment tasks that security teams must undertake, as long as the need for both data and subject matter experts is acknowledged. Rather than talking about "AI solving a skill shortage," we should be thinking of AI as enhancing or assisting with the activities that people are already performing. So, how can CISOs best take advantage of the latest advances in machine learning, as its usage in security tooling increases, without being taken in by the hype? The key is to come with a very critical eye. Consider in detail what type of impact you want to have by employing ML and where in your overall security process you want this to be. Do you want to find "more bad" or do you want to help prevent user error or one of the other many possible applications? This choice will point you toward different solutions. You should ensure that the trade-offs of any ML algorithm employed in these solutions are abundantly clear to you, which is possible without needing to understand the finer points of the math under the hood.

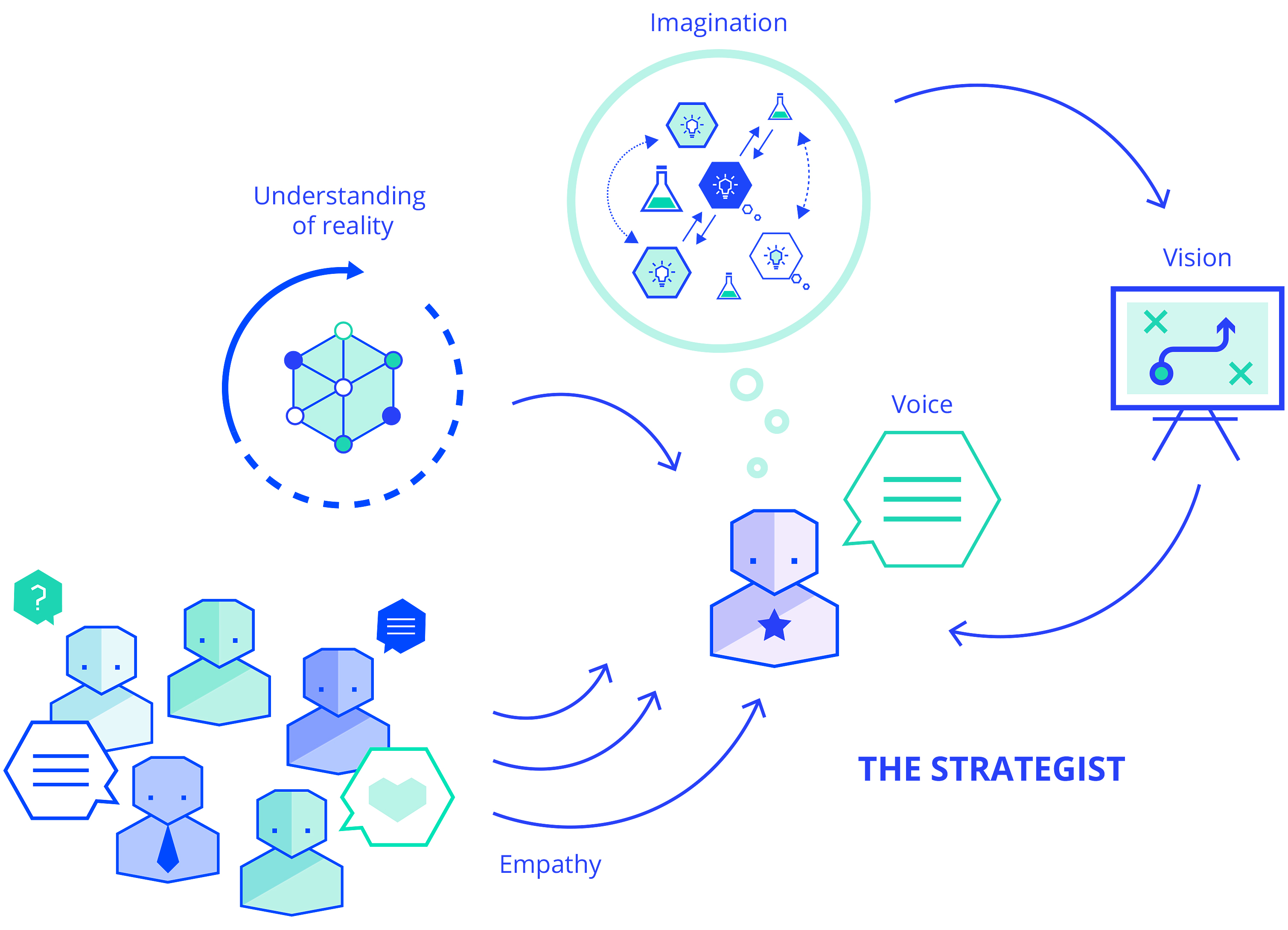

Strategy never comes into existence fully formed. Today, for example, we know that part of Ikea’s strategy is to produce low-cost furniture for growing families. We also know that, behind the scenes, Ikea innovates with its products and supply chain. Once upon a time, the founder of Ikea did not sit at his kitchen table to create this strategy. And he absolutely did not use a Five Forces template or a business-model canvas. What happened was that, once the business had started and as time passed, events shaped Ikea and, of course, Ikea shaped events. ... Strategy patterns form a bridge between expert strategists, those who have walked the walk, and those who are less experienced. They accelerate the production of new strategies, reduce the number of arguments that arise from uncertainty, and help groups to align on their next actions. By using patterns, those less experienced can benefit from knowledge they haven't had time to build on their own. At the same time, patterns give experienced strategists a rubric that lets them teach strategy.

Blazor Finally Complete as WebAssembly Joins Server-Side Component

Blazor, part of the ASP.NET development platform for web apps, is an open source and cross-platform web UI framework for building single-page apps using .NET and C# instead of JavaScript, the traditional nearly ubiquitous go-to programming language for the web. As Daniel Roth, principal program manager, ASP.NET, said in an announcement post today, Blazor components can be hosted in different ways, server-side with Blazor Server and now client-side with Blazor WebAssembly. "In a Blazor Server app, the components run on the server using .NET Core. All UI interactions and updates are handled using a real-time WebSocket connection with the browser. Blazor Server apps are fast to load and simple to implement," he explained. "Blazor WebAssembly is now the second supported way to host your Blazor components: client-side in the browser using a WebAssembly-based .NET runtime. Blazor WebAssembly includes a proper .NET runtime implemented in WebAssembly, a standardized bytecode for the web. This .NET runtime is downloaded with your Blazor WebAssembly app and enables running normal .NET code directly in the browser."

How event-driven architecture benefits mobile UX

At its most basic, the EDA consists of three types of components: event producers, event channels and event consumers. They may be referred to by other names, but most EDA systems follow the same basic outline. The producers and consumers operate without knowledge of or dependencies on each other, making it possible to develop, deploy, scale and update the components independently. The events themselves tie the decoupled pieces together. A producer can be any application, service or device that generates events for publishing to the event channel. Producers can be mobile applications, IoT devices, server services or any other systems capable of generating events. The producer is indifferent to the services and systems that consume the event and is concerned only with passing on formatted events to the event channel. The event channel provides a communication hub for transferring events from the producers to the consumers.
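The three roles described above can be sketched in a few lines of Python. This is a minimal in-memory illustration, not a production design: `Event`, `EventChannel`, `place_order`, and the `order.placed` topic are all hypothetical names chosen for the example, and a real system would typically put a message broker where the channel class sits.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass(frozen=True)
class Event:
    """A formatted event: a topic name plus a payload."""
    topic: str
    payload: Dict[str, object]


Handler = Callable[[Event], None]


class EventChannel:
    """Hypothetical in-memory channel that routes events by topic."""

    def __init__(self) -> None:
        self._handlers: Dict[str, List[Handler]] = defaultdict(list)

    def subscribe(self, topic: str, handler: Handler) -> None:
        """Register a consumer for one topic."""
        self._handlers[topic].append(handler)

    def publish(self, event: Event) -> int:
        """Deliver the event to every subscriber; return how many received it."""
        handlers = list(self._handlers.get(event.topic, []))
        for handler in handlers:
            handler(event)
        return len(handlers)


def place_order(channel: EventChannel, order_id: str) -> int:
    """Producer: emits an event without knowing who consumes it."""
    return channel.publish(Event("order.placed", {"order_id": order_id}))


# Two independent consumers; neither knows about the producer or each other.
notifications: List[str] = []
audit_log: List[Event] = []

channel = EventChannel()
channel.subscribe(
    "order.placed",
    lambda e: notifications.append(f"Order {e.payload['order_id']} received"),
)
channel.subscribe("order.placed", audit_log.append)

delivered = place_order(channel, "A-100")
```

Because the producer only talks to the channel, a third consumer could be subscribed later without touching `place_order`, which is the decoupling the excerpt describes.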

Microsoft Teams Rooms: Switch to OAuth 2.0 by Oct 13 or your meetings won't work

While it is simple to set up, it exposes credentials to attackers capturing them on the network and using them on other devices. Basic Authentication is also an obstacle to adopting multi-factor authentication in Exchange Online, said Microsoft. Microsoft intends to turn off Basic Authentication in Exchange Online for Exchange ActiveSync (EAS), POP, IMAP and Remote PowerShell on October 13, 2020. It's encouraging customers to use the OAuth 2.0 token-based 'Modern Authentication'. After installing the Teams Rooms update, admins will be able to configure the product to use Modern Authentication to connect to Exchange, Teams, and Skype for Business services. This move reduces the need to send actual passwords over the network by using OAuth 2.0 tokens provided by Azure Active Directory. While the change is optional until October 13, Microsoft suggests login problems could arise after the cut-off date for Microsoft Teams Rooms configured with basic authentication. "Modern authentication support for Microsoft Teams Rooms will help ensure business continuity for your devices connecting to Exchange Online," it said. But it will let customers choose when to switch to modern authentication until October 13.

Digital Transformation without the Judgement

CEOs have to focus ruthlessly on a small number of priorities. One customer in the rail industry went for approval of an SAP S/4HANA project, and the CFO saw the 8-figure budget and asked the CIO: would you like me to approve this project, or buy one more locomotive this year? You might be thinking “buy the train,” but it’s not that simple. What if this IT project improved rail network throughput by 2%, or decreased the chances of a derailment by 10%? What if it provided efficiencies in cargo prioritisation that meant two fewer locomotives needed to be in service? What are your priorities? How might they be achieved by IT investments? Today’s new hires are the Instagram generation. They primarily share images on social media, not diatribes about their personal life. Tomorrow’s new hires will be the Snapchat and TikTok generation, and before we know it, there will be a generation of employees who have never used a laptop. That might be an exaggeration, but the new generation of workers expects to have an excellent user experience for the tools they use in the workplace. If you want to hire the best talent, you are going to need to think about their needs.

Introducing Project Tye

Project Tye is an experimental developer tool that makes developing, testing, and deploying microservices and distributed applications easier. When building an app made up of multiple projects, you often want to run more than one at a time, such as a website that communicates with a backend API or several services all communicating with each other. Today, this can be difficult to set up and not as smooth as it could be, and it’s only the very first step in trying to get started with something like building out a distributed application. Once you have an inner-loop experience, there is then a sometimes steep learning curve to get your distributed app onto a platform such as Kubernetes. ... If you have an app that talks to a database, or an app that is made up of a couple of different processes that communicate with each other, then we think Tye will help ease some of the common pain points you’ve experienced.

Containers as an enabler of AI

The use of containers can greatly accelerate the development of machine learning models. Containerized development environments can be provisioned in minutes, while traditional VM or bare-metal environments can take weeks or months. Data processing and feature extraction are a key part of the ML lifecycle. The use of containerized development environments makes it easy to spin up clusters when needed and spin them back down when done. During the training phase, containers provide the flexibility to create distributed training environments across multiple host servers, allowing for better utilization of infrastructure resources. And once they're trained, models can be hosted as container endpoints and deployed either on premises, in the public cloud, or at the edge of the network. These endpoints can be scaled up or down to meet demand, thus providing the reliability and performance required for these deployments. For example, if you're serving a retail website with a recommendation engine, you can add more containers to spin up additional instances of the model as more users start accessing the website.
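To make the scaling point concrete, here is a rough sketch of how the number of model-serving containers might be derived from demand. The function name `replicas_needed`, the per-container capacity, and the minimum and maximum bounds are all assumptions made up for illustration; real orchestrators apply their own autoscaling rules and metrics.

```python
import math


def replicas_needed(
    requests_per_second: float,
    capacity_per_replica: float,
    min_replicas: int = 1,
    max_replicas: int = 20,
) -> int:
    """Return how many container instances cover the current load.

    Assumes each replica handles a fixed, known request rate, which is a
    simplification; the result is clamped to [min_replicas, max_replicas].
    """
    if capacity_per_replica <= 0:
        raise ValueError("capacity_per_replica must be positive")
    wanted = math.ceil(requests_per_second / capacity_per_replica)
    return max(min_replicas, min(max_replicas, wanted))


# Hypothetical numbers: each recommendation-model container serves 100 req/s.
quiet_hours = replicas_needed(40, 100)
busy_hours = replicas_needed(450, 100)
sale_event = replicas_needed(5000, 100)
```

With these assumed figures, the quiet period stays at the one-container floor, the busy period needs five containers, and the sale spike is capped at the configured maximum of twenty.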

Google Open-Sources AI for Using Tabular Data to Answer Natural Language Questions

Co-creator Thomas Müller gave an overview of the work in a recent blog post. Given a table of numeric data, such as sports results or financial statistics, TAPAS is designed to answer natural-language questions about facts that can be inferred from the table; for example, given a list of sports championships, TAPAS might be able to answer "which team has won the most championships?" Previous solutions to this problem convert natural-language queries into software query languages such as SQL, which are then run against the data table. In contrast, TAPAS learns to operate directly on the data and outperforms the previous models on common question-answering benchmarks: by more than 12 points on Microsoft's Sequential Question Answering (SQA) and more than 4 points on Stanford's WikiTableQuestions (WTQ). Many previous AI systems solve the problem of answering questions from tabular data with an approach called semantic parsing, which converts the natural-language question into a "logical form", essentially translating human language into programming language statements.
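For contrast, the older semantic-parsing approach can be illustrated with Python's standard `sqlite3` module. This sketch does not use TAPAS at all: the SQL "logical form" is hand-written to stand in for what a semantic parser would generate, and the table name, column names, and rows are invented placeholder data.

```python
import sqlite3

# Invented placeholder data: (year, winning team).
rows = [
    (2017, "Team A"),
    (2018, "Team B"),
    (2019, "Team A"),
    (2020, "Team C"),
    (2021, "Team A"),
]

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE championships (year INTEGER, winner TEXT)")
conn.executemany("INSERT INTO championships VALUES (?, ?)", rows)

question = "which team has won the most championships?"

# A semantic parser would translate the question into a query like this one;
# here the translation is written by hand for illustration.
logical_form = """
SELECT winner, COUNT(*) AS titles
FROM championships
GROUP BY winner
ORDER BY titles DESC
LIMIT 1
"""

# The query is then executed against the table to produce the answer.
answer = conn.execute(logical_form).fetchone()
conn.close()
```

Here `answer` holds the winning team and its title count. The two-step shape (translate the question, then execute the query) is what the excerpt says TAPAS avoids by operating on the table directly.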

Quote for the day:

"Leadership is not a solo sport; if you lead alone, you are not leading." -- D.A. Blankinship
