Microsoft: .NET 5 preview for Windows 10, iPhone, Android Surface Duo apps is out

Ahead of the final version of .NET 5, Microsoft has a clear message for developers: ".NET Core and then .NET 5 is the .NET you should build all your NEW applications with." "Having a version 5 that is higher than both .NET Core and .NET Framework also makes it clear that .NET 5 is the future of .NET, which is a single unified platform for building any type of application," said Scott Hunter, director of program management at Microsoft .NET. The first preview includes support for Windows Arm64 and the .NET Core runtime, while the second preview will include an SDK with ASP.NET Core but not WPF or Windows Forms, which should arrive in a subsequent preview. The preview should allow developers to update existing projects by updating the target framework. The main goals for .NET 5 include providing a unified .NET SDK with a single Base Class Library (BCL) across all .NET 5 applications, with Xamarin moving to the .NET Core BCL. Since Xamarin is integrated into .NET 5, the .NET SDK will support mobile. Microsoft's ongoing work on Blazor should also mean web application support across platforms, including browsers, on mobile devices and as a native desktop application for Windows 10 and Windows 10X.

IR35 reform delay: how tech companies and contractors should respond

Paul Wright, head of the technology practice at Odgers Interim, has some very important advice on how companies should respond to the regulatory respite: revoke any blanket bans on contractors. He says “businesses have now been given some breathing room to get their houses in order and I cannot stress enough how important it is for them to take this time to revoke any blanket assessment statuses they have enforced and re-evaluate their contingent workforce needs. “As the impact of Covid-19 steers the economy into uncharted waters, the UK’s freelance, independent and contractor workforces will be more important than ever for tech firms – which already rely heavily on this industry.” Wright also sees contractors and freelancers as the solution to absences in the permanent workforce caused by Covid-19. “Many organisations will not only need to procure the specialist skillsets of contractors and independents to help guide them through increasing levels of disruption but will also need to call upon their support to fill in for permanent staff who are either self-isolating or having to look after family members.”

Data Governance: How to Tackle 3 Key Issues

Some security practitioners argue that larger organizations should designate different accountable parties for protecting the privacy of customer, product and financial data - or even designate those in charge in each region. But organizations need someone at the top of the chain, such as a chief data officer, so that federated ownership can be kept in check, Deb says. Deb has also implemented a RACI - responsible, accountable, consulted and informed - matrix that helps him assign data owners. "So respective business units or their heads own the data and the accountability," he says. "For instance, IT is the data custodian, assurance functions are the data governors and so on. That way, an entire RACI matrix is built for every application, platform and data we process internally." One of the major roadblocks in the data governance process is the problem of shadow IT, Deb says. Shadow IT is where development happens either in-house or through an outsourced partner without the supervision and governance of the IT InfoSec and privacy teams.
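As a rough illustration of the RACI approach described above, the matrix can be thought of as a lookup from each application or data set to its four roles. The sketch below is only an assumption about how such a matrix might be represented: the asset names, role holders and the `unowned_assets` helper are hypothetical and are not taken from Deb's implementation. Flagging assets that have no entry is one possible way to surface shadow IT candidates.

```python
from dataclasses import dataclass
from typing import Dict, Iterable, List, Tuple


@dataclass(frozen=True)
class RaciEntry:
    """One row of a RACI matrix for a single application, platform or data set."""
    responsible: str                 # does the day-to-day work
    accountable: str                 # owns the data and answers for it
    consulted: Tuple[str, ...] = ()  # asked for input before decisions
    informed: Tuple[str, ...] = ()   # kept up to date after decisions


# Hypothetical assets and role holders, for illustration only.
raci_matrix: Dict[str, RaciEntry] = {
    "customer-crm": RaciEntry(
        responsible="IT (data custodian)",
        accountable="Head of Sales",
        consulted=("Assurance (data governor)",),
        informed=("Chief Data Officer",),
    ),
    "finance-ledger": RaciEntry(
        responsible="IT (data custodian)",
        accountable="Head of Finance",
        consulted=("Assurance (data governor)", "Privacy team"),
        informed=("Chief Data Officer",),
    ),
}


def unowned_assets(inventory: Iterable[str],
                   matrix: Dict[str, RaciEntry]) -> List[str]:
    """Return inventory items with no RACI entry (assumed shadow IT candidates)."""
    return sorted(asset for asset in inventory if asset not in matrix)


if __name__ == "__main__":
    discovered = ["customer-crm", "finance-ledger", "marketing-microsite"]
    print(unowned_assets(discovered, raci_matrix))
```

Keeping a single accountable party per entry mirrors the point that each business unit, or its head, owns the data and the accountability.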

9 Cybersecurity Takeaways as COVID-19 Outbreak Grows

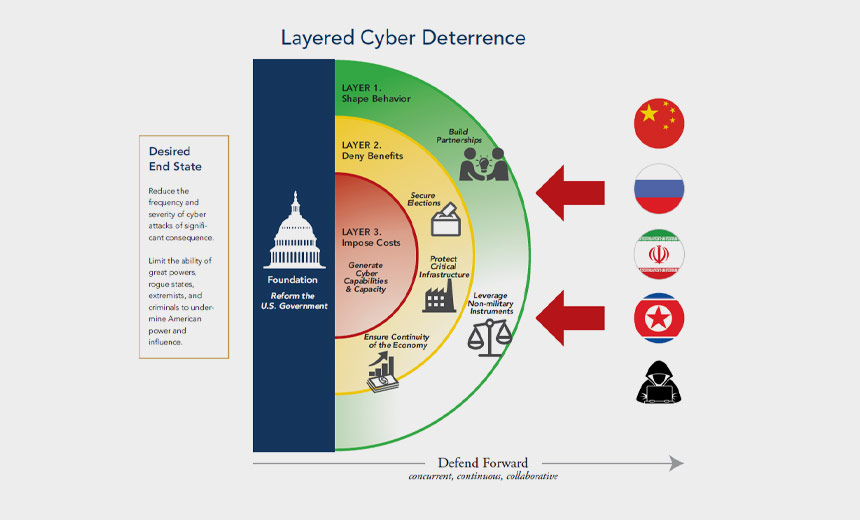

Security experts cite phishing attacks as being one of the biggest threats in this new environment, and warn that existing efforts to safeguard employees are too often inadequate. "Phishing attacks are on the rise, and employees at home might be especially vulnerable," attorneys Jonathan Armstrong and André Bywater say in a client note. "We've expressed concerns before that a lot of 'off-the-shelf' phishing training is not fit for purpose. It's important to make sure employees are trained and that they have regular reminders. Organizations using [Office 365] may be especially vulnerable at this time." To help, many organizations are releasing materials for free. For example, the SANS Institute has released large parts of its commercial awareness materials. But with phishing attacks that prey on coronavirus fears already surging, many organizations are playing catch-up. "Like many phishing scams, these emails are preying on real-world concerns to try and trick people into doing the wrong thing," the U.K.'s National Cyber Security Centre says, noting that shipping, transport and retail industries were being targeted.

Reasons For Transitioning To Cloud Computing In 2020

Cloud computing has now become a common term that all of us have heard of. However, unfortunately, many of us still don’t understand the complete potential of cloud computing. It is high time for all of us to understand how it can make our lives easier. Instead of keeping programs and data on a local computer or hard drive, cloud computing stores them on remote servers that are accessed over the internet. In other words, in order to access your data, you must be connected to the internet. In fact, many of us already use cloud computing unknowingly, while listening to our favorite tunes on Spotify or using Google Drive for data storage. The flexibility and functionality of cloud computing have already proven to be a lifesaver for businesses. However, cloud computing for a business is entirely different from the personal use of the cloud. Before the implementation of cloud computing, businesses need to choose between Software-as-a-Service (SaaS), Platform-as-a-Service (PaaS), or Infrastructure-as-a-Service (IaaS). In a nutshell, PaaS allows users the freedom to come up with customized applications as per their requirements. On the other hand, SaaS requires users to subscribe to a chosen application.

IT Priorities 2020: Digitisation drives IT modernisation growth

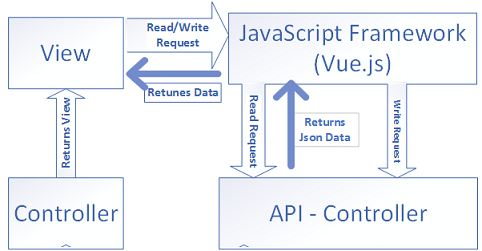

Opening up APIs, with access controlled via an API management platform, is one of the ways IT departments can minimise the effort needed to modernise applications. The survey reported that 47% of IT professionals said they planned to increase the use of cloud infrastructure to support digital transformation initiatives in 2020. Applications can be replatformed from on-premise servers to public cloud-hosted infrastructure-as-a-service (IaaS) platforms. In fact, 38% of the respondents said they would increase their cloud budgets in 2020. This potentially shifts spending from a capital expenditure model for on-premise datacentre hardware to pay-as-you-go in the public cloud. Many of the legacy applications that are migrated to the cloud can only run in virtual machines (VMs). VMs in the public cloud replace physical servers or on-premise VMs. But as organisations move along their journey to become cloud-native, in some instances, IT professionals are looking at splitting legacy code into functional building blocks.

AI adoption in the enterprise 2020

AI adoption is proceeding apace. Most companies that were evaluating or experimenting with AI are now using it in production deployments. It’s still early, but companies need to do more to put their AI efforts on solid ground. Whether it’s controlling for common risk factors—bias in model development, missing or poorly conditioned data, the tendency of models to degrade in production—or instantiating formal processes to promote data governance, adopters will have their work cut out for them as they work to establish reliable AI production lines. Survey respondents represent 25 different industries, with “Software” (~17%) as the largest distinct vertical. The sample is far from tech-laden, however: the only other explicit technology category—“Computers, Electronics, & Hardware”—accounts for less than 7% of the sample. The “Other” category (~22%) comprises 12 separate industries. One-sixth of respondents identify as data scientists, but executives—i.e., directors, vice presidents, and CxOs—account for about 26% of the sample. The survey does have a data-laden tilt, however: almost 30% of respondents identify as data scientists, data engineers, AIOps engineers, or as people who manage them.

Electronics should sweat to cool down, say researchers

Computing devices should sweat when they get too hot, say scientists at Shanghai Jiao Tong University in China, where they have developed a materials application they claim will cool down devices more efficiently and in smaller form-factors than existing fans. It’s “a coating for electronics that releases water vapor to dissipate heat from running devices,” the team explain in a news release. “Mammals sweat to regulate body temperature,” and so should electronics, they believe. The group’s focus has been on studying porous materials that can absorb moisture from the environment and then release water vapor when warmed. MIL-101(Cr) checks the boxes, they say. The material is a metal organic framework, or MOF, which is a sorbent, a material that stores large amounts of water. The higher the material's water capacity, the greater the dissipation of heat when it's warmed. MOF projects have been attempted before. “Researchers have tried to use MOFs to extract water from the desert air,” says refrigeration-engineering scientist Ruzhu Wang, who is senior author of a paper on the university’s work that has just been published in Joule.

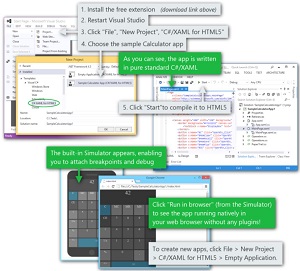

Silverlight Reborn? Check Out 'C#/XAML for HTML5'

Now ... comes C#/XAML for HTML5 from Userware, which today announced its Silverlight-replacement project, also called CSHTML5, has reached release candidate status after a lengthy beta program. The tool comes as a Visual Studio extension in the Visual Studio Marketplace, promising to create HTML5 apps using only C# and XAML -- or migrate existing Silverlight apps to the Web. "Developers are now able to use C# and XAML to write apps that run in the browser," the French company said. "Absolutely no knowledge of HTML5 or JavaScript is required to use the extension, as it compiles your files to HTML5 and JavaScript for you. That means you can now build Web apps with static typing and all the strengths of C# and XAML, and make sure your code is ready when WebAssembly comes out." WebAssembly is an upcoming experimental technology presented as an open standard that lets developers write low-level assembly-like code for the browser in non-JavaScript languages like C, C++ and even .NET languages like C# for improved performance over JavaScript. Until WebAssembly is fully supported in the Web ecosystem, CSHTML5 might be seen as an alternative for .NET-centric developers.

More Business Websites Hit by Credit-card Skimming Malware

A malicious script planted on the NutriBullet website's payment page stole credit card numbers, expiry dates, CVV codes, names, and addresses of unsuspecting blender buyers and sent them to a server under the control of cybercriminals. According to the report, the sensitive data was then sold to other criminals on underground forums. RiskIQ says that although NutriBullet has attempted to clean up the poisoned webpages, the attackers continue to break back in and plant malicious code - suggesting that they still have a way of compromising the blender maker's infrastructure. Peter Huh, the CIO of NutriBullet, confirmed that a security breach had occurred and said that a forensic investigation into the incident had been initiated. There is no word yet as to what plans NutriBullet has to inform affected customers. In both cases it feels like the companies at the centre of the security breaches should be communicating more transparently with their users, ensuring that they are informed promptly and given as much detail as possible about what has occurred.

Quote for the day:

"Leaders must encourage their organizations to dance to forms of music yet to be heard." -- Warren G. Bennis