The augmented city: how technologists are transforming the Earth into theater

Want incredible immersive experiences in your city? The remaining technological hurdles include saving digital content to a location, accessing a real-time 3D semantic map of the world, occluding digital content behind objects in the physical world, and multiplayer support. All of this requires centimeter-level positioning. However, Global Navigation Satellite Systems (GNSS) such as BeiDou, Galileo, and GPS cannot achieve that accuracy without the software and hardware to tap into geodetic infrastructure. The advancing capabilities of consumer cameras, together with dual raw GNSS data, 5G networks, and computer vision, offer potential solutions, including triangulating position from landscape images snapped with a smartphone. Buckingham Palace, London, 2019: the palace is one of the locations augmented (or "enabled", or "activated") via the Landmarker feature of Snap's Lens Studio, which enables real-time immersive AR experiences. Lens Studio has produced over 400,000 AR lenses, which have been played 15 billion times. Snap currently reaches 90% of 13- to 24-year-olds in the US, a higher share than Facebook or Instagram.

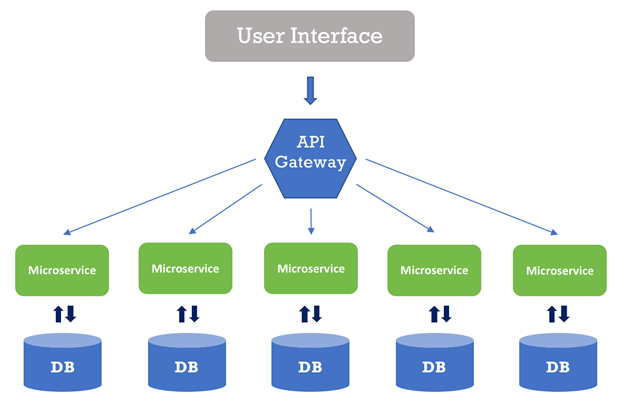
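The article does not say how image-based positioning works. As a rough illustration of the underlying geometry only, the sketch below triangulates a 2D position from the directions (bearings) to two landmarks whose coordinates are already known. The function name `triangulate`, the flat 2D coordinates, and the angle convention (radians, counterclockwise from the +x axis, not compass bearings) are assumptions made for illustration; a real visual positioning system would also need camera calibration, landmark recognition, many more observations, and a least-squares fit.

```python
import math


def triangulate(l1, bearing1, l2, bearing2):
    """Estimate an observer's 2D position from bearings to two known landmarks.

    l1, l2: (x, y) coordinates of landmarks with known positions.
    bearing1, bearing2: direction from the observer to each landmark, in
    radians, measured counterclockwise from the +x axis (an assumed convention).
    """
    # Unit vectors pointing from the observer towards each landmark.
    d1 = (math.cos(bearing1), math.sin(bearing1))
    d2 = (math.cos(bearing2), math.sin(bearing2))

    # The observer P satisfies P + t1*d1 = l1 and P + t2*d2 = l2,
    # so t1*d1 - t2*d2 = l1 - l2: a 2x2 linear system in (t1, t2).
    bx, by = l1[0] - l2[0], l1[1] - l2[1]

    # Determinant of the matrix whose columns are d1 and -d2.
    det = d1[0] * (-d2[1]) - (-d2[0]) * d1[1]
    if abs(det) < 1e-12:
        raise ValueError("bearings are parallel; position is not determined")

    # Cramer's rule for t1 (distance from observer to the first landmark).
    t1 = (bx * (-d2[1]) - (-d2[0]) * by) / det

    # Step back from the first landmark along its bearing.
    return (l1[0] - t1 * d1[0], l1[1] - t1 * d1[1])
```

For example, `triangulate((6.0, 8.0), math.atan2(4, 3), (0.0, 8.0), math.atan2(4, -3))` recovers an observer at approximately (3, 4). With only two bearings there is no redundancy, so any measurement error goes straight into the result; that is one reason practical systems combine many landmarks.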

Origins of Enterprise Architecture Frameworks

Over the last thirty years, one EA framework has risen to become the most popular: The Open Group Architecture Framework, or TOGAF. The Open Group was formed in 1996 through the merger of the Open Software Foundation and X/Open Company, as a consortium that seeks to enable the achievement of business objectives through the development of open, vendor-neutral technology standards. It has grown to over 650 active members who create standards for the field of computer engineering. The Open Group also maintains ArchiMate, a modeling language that breaks systems down into active structures, passive structures, and behaviors. TOGAF is currently in its tenth version, but its most widely recognized feature is the ADM, or Architecture Development Method. The ADM takes a cyclical approach to developing an architecture. The cycle consists of developing a vision; defining the business, application, data, and technology domains; planning; managing change; deploying; and governing the architecture, while keeping requirements as the central focal point.

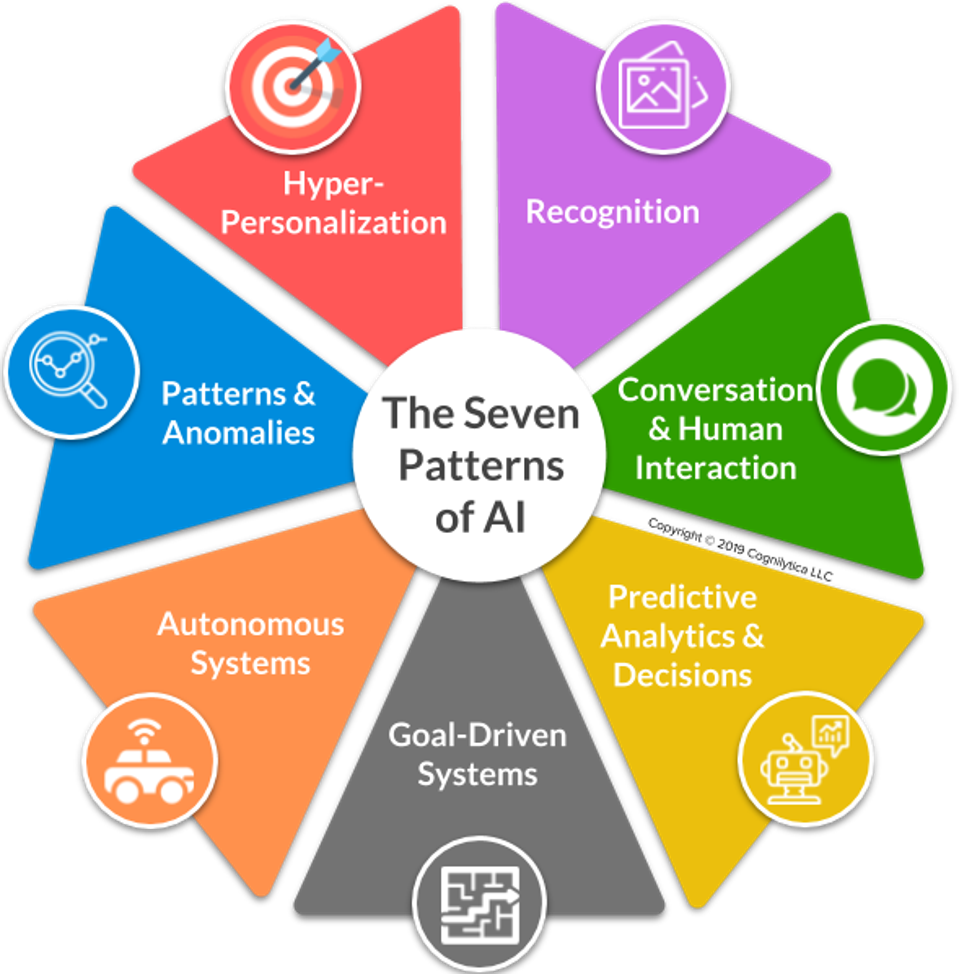
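To make the cyclical shape of the ADM concrete, here is a simplified sketch that walks the TOGAF ADM phases (Preliminary, then phases A to H, looping back to A) and models Requirements Management as one shared list that every phase reads from and adds to. The function names and the `discovered` input are hypothetical; in practice the ADM allows iteration within and between phases rather than a strict loop.

```python
from enum import Enum


class Phase(Enum):
    PRELIMINARY = "Preliminary"
    A = "Architecture Vision"
    B = "Business Architecture"
    C = "Information Systems Architectures (Data, Application)"
    D = "Technology Architecture"
    E = "Opportunities and Solutions"
    F = "Migration Planning"
    G = "Implementation Governance"
    H = "Architecture Change Management"


# Phases A to H form the repeating cycle; Preliminary runs once up front.
CYCLE = [Phase.A, Phase.B, Phase.C, Phase.D, Phase.E, Phase.F, Phase.G, Phase.H]


def next_phase(current: Phase) -> Phase:
    """Return the phase that follows `current` in this simplified loop."""
    if current is Phase.PRELIMINARY:
        return Phase.A
    i = CYCLE.index(current)
    return CYCLE[(i + 1) % len(CYCLE)]  # H wraps back around to A


def run_adm(discovered, iterations=1):
    """Walk Preliminary plus `iterations` full cycles of phases A to H.

    `discovered` maps a phase to requirements found during it (hypothetical
    input). A single shared list stands in for central Requirements
    Management: every phase reads from and adds to the same repository.
    """
    requirements = []
    visited = []
    phase = Phase.PRELIMINARY
    for _ in range(1 + iterations * len(CYCLE)):
        visited.append(phase)
        for req in discovered.get(phase, []):
            if req not in requirements:  # keep each requirement once
                requirements.append(req)
        phase = next_phase(phase)
    return visited, requirements
```

Keeping one requirements list outside the phase loop mirrors the way the ADM places Requirements Management at the center of the cycle rather than inside any single phase.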

Make Artificial Intelligence Work for Your Business Needs

Enterprises beginning their AI journeys often rely on the services of their software provider or an AI development company to provide the necessary customization. Some organizations, however, attempt to tackle the work in house, often with mixed results. "Having internal AI capability (a combination of talent, platforms, tools, knowledge, relationships, and data) offers the option of doing it internally versus outsourcing," said Monika Wilczak, an advisory managing director in artificial intelligence at business services advisory EY. "The stronger the internal AI capability, and the more mature the enterprise is around the application of AI as a strategy for growth, the more likely it is to use its own data scientists and application engineers for customization," she explained. Still, even enterprises with full-fledged AI development teams can find customization to be an expensive and time-consuming undertaking. "Customization of vendors' AI products requires data class inclusiveness, controls to avoid data bias, and the availability of a sufficient volume of labeled data."

How To Drive Innovation During A Recession

Fast-Fail Innovation is technically easy for us to do, but we have no idea if anyone will buy these ideas from our company. This is where entrepreneurs play. Here you must go to market to quickly test and learn. You expect to fail fast and often before succeeding with an offering that may literally be refined by your customers' in-market feedback. Unfortunately, although this type of innovation can be done quickly and inexpensively, your team must be ready to experience many, many, maaaaaaany failures before they find a winning new idea. Under the pressure of a recession, teams are afraid to fail for fear of losing their jobs, so they will actively avoid engaging in the very activity that makes this quadrant successful. ... Differentiation Innovation is technically difficult for us to do, but we know our customers really want it. We know this because we can measure which problems to fix first, second, and third. We can measure the size of each opportunity. We can measure the price customers will pay us if we address a specific need or problem.

Microsoft: Cyberattacks now the top risk, say businesses

This year, the second most widely considered top-five risk is economic uncertainty, followed by brand damage, regulation, and loss of key personnel. The World Economic Forum (WEF) 2019 Global Risks Report ranks data theft and cyberattacks as top-five risks in terms of likelihood, but places them behind extreme weather events and climate change concerns. Of course, since 2017 the world has seen the damage caused by the WannaCry ransomware outbreak, which the US government blamed on North Korea. It was followed shortly afterward by the hugely costly NotPetya malware, which Western governments blamed on Kremlin hackers. Criminal ransomware attacks continue to strike targets too, such as the attack on Norsk Hydro earlier this year that cost it $40m. And over the past few months, multiple US local governments have weathered targeted ransomware attacks, with at least one attacker demanding a ransom payment of $5.3m. Lately, universities across the West have been targeted by state-sponsored hacking groups in search of intellectual property. However, these days business email compromise (BEC) is shaping up to be the most costly and common threat.

Facial recognition technology threatens to end all individual privacy

The consequences can be even more malign. Experts including the London police ethics panel argue that facial recognition could have a racial and gender bias. That is certainly what the American experience with this technology implies. The technology relies on sifting through the biometric data of thousands of people on criminal databases. But the datasets do not have enough information on racial minorities or women to be accurate. Many of these groups already have a deep mistrust of the police. Being wrongly targeted by a racially biased algorithm will not help this. And it is not just the state that is involved. An investigation by Big Brother Watch found that privately owned sites – including shopping centres, property developers, museums and casinos – have been using facial recognition, too. A trial in Manchester’s Trafford Centre scanned more than 15 million faces before ultimately being stopped in its tracks by the surveillance camera commissioner. ... Sadly, the high court in Wales did not grasp the conflict with civil liberties, recently ruling that a facial recognition trial by South Wales police was legal.

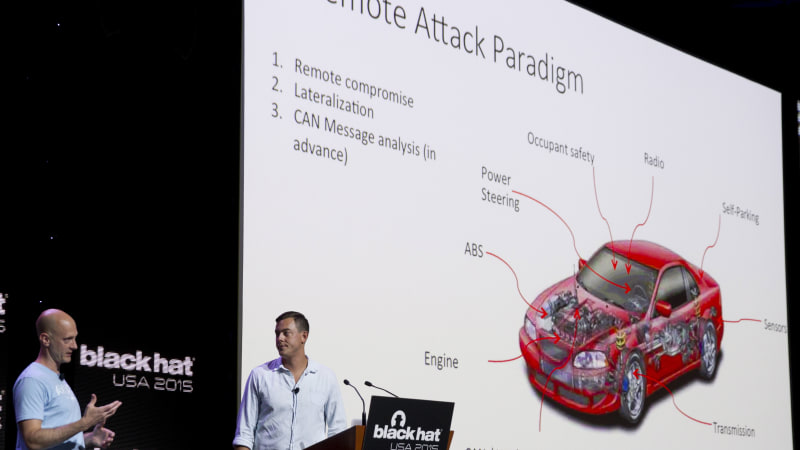

How a hacked Jeep Cherokee led to increased security from cyber carjackers

Harman saw its Jeep hack experience as a viable business opportunity: the supplier today sells cybersecurity software that allows automakers to monitor their fleets and provide over-the-air software updates. Analysts at IHS Markit consider Harman one of the top players in that segment, with some 20 automakers using its over-the-air services. Harman does not break out revenue for that business, but the company does try to recover some costs by charging higher prices for advanced security. "We have to educate our salespeople in conversations with carmakers' purchasing departments and say 'don't let this go without adding cybersecurity to your quote'," said Amy Chu, Harman's senior director of automotive product security. Asaf Atzmon, the Israel-based vice president and general manager for automotive cybersecurity, said Harman has come a long way since he joined in March 2016 as part of the TowerSec deal. At the time, Harman employed only a few security architects; the company later changed its organizational structure, appointing or hiring professionals such as Wood and Chu to oversee cybersecurity efforts, Atzmon said.

Shared resources enable greater collaboration: big science in the cloud

The experience in developing DataLabs has provided a springboard for rolling out similarly collaborative platforms such as solutions supporting the Data and Analytics Facility for National Infrastructure (DAFNI). This is a project that aims to integrate advanced research models with established national systems for modelling critical infrastructure. “Led by Oxford University and funded by the EPSRC, the initiative aspires over the next 10 years to be able to model the UK at a household level, 50 years into the future,” explains Nick Cook, a senior analyst at Tessella. Here, the firm is involved in conceptualizing DAFNI’s capabilities and implementation roadmap. One of the project’s early goals is to create a “digital twin” of a UK city such as Exeter – in other words, to virtually describe a city with a population of several hundred thousand people together with its transport infrastructure, utility services and environmental context. This digital twin would, for example, help planners to decide where to invest in new road or rail networks, and to identify the best sites for housing, schools and doctors’ surgeries.

Automation in the workplace could disproportionately affect women

It wouldn't be unprecedented. Decades ago, roles like "social media manager" and "data scientist" hadn't been conceived, much less sought after. Krishnan said that typically, roughly 10% of employment at any given time is in these newly emerged groups of occupations, amounting to 160 million jobs globally. Whether they take up new work or acquire new skills in their current fields, Krishnan anticipates that tens of millions of workers will have to make some sort of occupational transition by 2030. Many of those workers are women: as many as 40 million to 160 million globally. Encouragingly, in both developed and emerging markets, the new jobs that are expected to come into vogue are likely to be higher-wage, according to Krishnan. Those jobs will also involve less drudgery, which will be traded for tasks that are ostensibly more socially and intellectually stimulating. In fact, Krishnan believes that this future of work will demand more interpersonal know-how from the workers who fill its roles.

How Artificial Intelligence is Changing the Landscape of Digital Marketing

Artificial intelligence tools help digital marketers understand customer behavior and make the right recommendations at the right time. A tool with millions of predefined conditions can predict how customers are likely to react to a particular situation, ad copy, video, or any other touchpoint, whereas humans cannot assess such a large set of data as well as a machine can in a limited timeframe. With the help of AI, you can have these insights at your fingertips. Where do you find an audience? How do you interact with them? What should you send them, and how? What is the right time to connect? When should you send a follow-up? AI-powered digital marketing platforms can help answer all of these questions. By analyzing patterns in the data, AI tools can make better suggestions and support decision-making. A personalized content recommendation delivered to the right audience at the right time greatly improves a campaign's chances of success. Digital marketers are under growing pressure to demonstrate the success of their content and campaigns, and AI tools make it much easier to put the available data to effective use.
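No specific platform or algorithm is named here, so as a hypothetical illustration of the "right time to connect" question, this sketch picks the send hour with the highest historical open rate. It is a simple counting heuristic, not machine learning, and the function `best_send_hour`, its `(hour, opened)` input format, and the `min_sends` threshold are all assumptions.

```python
from collections import defaultdict


def best_send_hour(events, min_sends=1):
    """Pick the hour of day with the highest historical open rate.

    events: iterable of (hour, opened) pairs, hour in 0-23, opened a bool.
    min_sends: ignore hours with fewer sends than this (assumed threshold).
    """
    sent = defaultdict(int)
    opened = defaultdict(int)
    for hour, was_opened in events:
        sent[hour] += 1
        if was_opened:
            opened[hour] += 1

    # Only compare hours with enough data to make the rate meaningful.
    candidates = [h for h in sorted(sent) if sent[h] >= min_sends]
    if not candidates:
        raise ValueError("no hour has enough sends to compare")

    # Highest open rate wins; max() keeps the first maximum, so ties go
    # to the earlier hour because candidates are sorted.
    return max(candidates, key=lambda h: opened[h] / sent[h])
```

The `min_sends` threshold keeps an hour that was tried only once, and happened to be opened, from winning with a 100% rate; a production system would also account for audience segments and time zones.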

Quote for the day:

"We can't understand someone else's ideas while we're busy thinking about our own." -- Tim Fargo