MIT: We're building on Julia programming language to open up AI coding to novices

The system allows coders to create a program that, for example, can infer 3-D body poses and therefore simplify computer vision tasks for use in self-driving cars, gesture-based computing, and augmented reality. It combines graphics rendering, deep-learning, and types of probability simulations in a way that improves a probabilistic programming system that MIT developed in 2015 after being granted funds from a 2013 Defense Advanced Research Projects Agency (DARPA) AI program. The idea behind the DARPA program was to lower the barrier to building machine-learning algorithms for things like autonomous systems. "One motivation of this work is to make automated AI more accessible to people with less expertise in computer science or math," says lead author of the paper, Marco Cusumano-Towner, a PhD student in the Department of Electrical Engineering and Computer Science. "We also want to increase productivity, which means making it easier for experts to rapidly iterate and prototype their AI systems."

Certain Insulin Pumps Recalled Due to Cybersecurity Issues

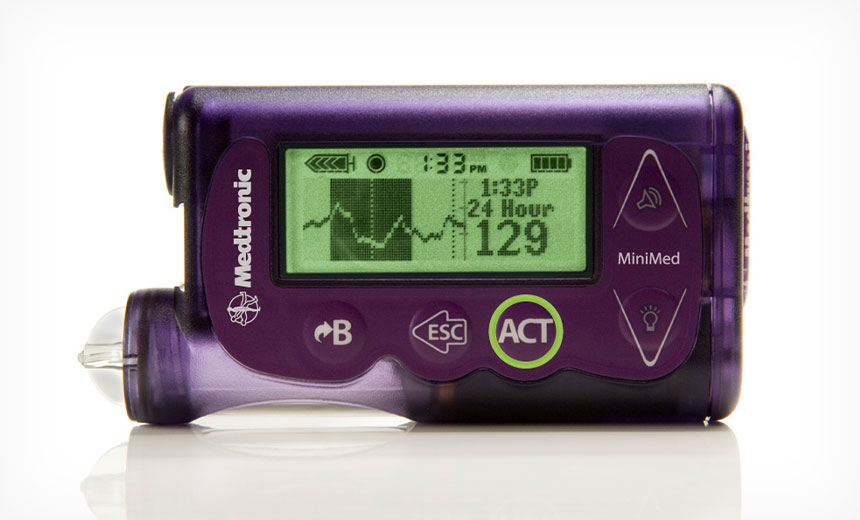

In a statement, the FDA says it is warning patients and healthcare providers that certain Medtronic MiniMed insulin pumps have potential cybersecurity risks. "Patients with diabetes using these models should switch their insulin pump to models that are better equipped to protect against these potential risks," the FDA says. The potential risks are related to the wireless communication between Medtronic's MiniMed insulin pumps and other devices such as blood glucose meters, continuous glucose monitoring systems, the remote controller and CareLink USB device used with these pumps, the FDA warns. "The FDA is concerned that, due to cybersecurity vulnerabilities identified in the device, someone other than a patient, caregiver or healthcare provider could potentially connect wirelessly to a nearby MiniMed insulin pump and change the pump's settings. This could allow a person to over deliver insulin to a patient, leading to low blood sugar (hypoglycemia), or to stop insulin delivery, leading to high blood sugar and diabetic ketoacidosis (a buildup of acids in the blood)," the agency's statement says.

Determining data value by measuring return on effort

I'm always very encouraged when professionals from different architectural disciplines can converge on common ground. This can be a rare event, so when it does happen, I like to call it out. Such an event has happened recently with a contact coming from the business architecture discipline, namely Robert DuWors, with us both trying to put some metrics around the measurement of data value in our respective areas of expertise. I believe that business architecture and information architecture are the two core pillars of the architecture of an enterprise. But practitioners of these interconnected disciplines can frequently rub badly against each other, each side devaluing the other's methods and approaches. So, to reach agreement across the two on what constitutes enterprise value of our efforts is a happy place to be. ...and together, we agreed that this represents the value of a specific item of data to the enterprise from both information and business perspectives. Now, of course, this may be refined over time, but it already contains most of the aspects that together, Robert and I believe are key to this metric... So, what does this equation give us? It's in 3 major sections, which I will call ‘Horizons.’

SoftBank plans drone-delivered IoT and internet by 2023

Why the stratosphere? Well, one reason is that lower altitudes often have strong winds to deal with, including straight after storms. The companies say disaster communications could be a primary use case for the drones, and the stratosphere has a steady current. Also, because of the altitude, LTE and 5G coverage could be much more widespread than any alternative delivery mechanism implemented at a lower altitude. One High Altitude Platform Station (HAPS), as the HAWK30’s genre of base stations is called, could provide service over an area about 125 miles in diameter, and about 40 HAPS could cover the entire Japanese archipelago, whereas a set of older, tethered balloons (SoftBank developed one in 2011) might cover just six miles, SoftBank says. Others are aiming for the stratosphere, too. Newer systems, such as Alphabet’s Loon, which uses tennis court-sized balloons, also fly there. SoftBank is a major provider of telecommunications in Japan, a country on the Pacific rim and prone to earthquakes. It is thus keen to find backup alternatives to wired, or even radio-based, ground assets that can get destroyed in natural disasters.

Five Facts on Fintech

From artificial intelligence to mobile applications, technology helps to increase your access to secure and efficient financial products and services. Since fintech offers the chance to boost economic growth and expand financial inclusion in all countries, the IMF and World Bank surveyed central banks, finance ministries, and other relevant agencies in 189 countries on a range of topics and received 96 responses. A new paper details the results of the survey alongside findings from other regional studies, and also identifies areas for international cooperation (including roles for the IMF and World Bank) and areas in which further work is needed by governments, international organizations, and standard-setting bodies. ... Awareness of cyber risks is high across countries and most jurisdictions have frameworks in place to protect financial systems. Most jurisdictions (79% of those with higher incomes according to the survey results) identified cyber risks in fintech as a problem for the financial sector.

The Windows 10 security guide: How to safeguard your business

Using the Windows Update for Business features built into Windows 10 Pro, Enterprise, and Education editions, you can defer installation of quality updates by up to 30 days. You can also delay feature updates by as much as two years, depending on the edition. Deferring quality updates by seven to 15 days is a low-risk way to avoid a flawed update that can cause stability or compatibility problems. You can adjust Windows Update for Business settings on individual PCs using the controls in Settings > Update & Security > Advanced Options. In larger organizations, administrators can apply Windows Update for Business settings using Group Policy or mobile device management (MDM) software. You can also administer updates centrally by using a management tool such as System Center Configuration Manager or Windows Server Update Services. Finally, your software update strategy shouldn't stop at Windows itself. Make sure that updates for Windows applications, including Microsoft Office and Adobe applications, are installed automatically.

Use event processing to achieve microservices state management

Unfortunately, it's often confusing whether a process close to the event source actually maintains the state itself, or whether that state is somehow provided from outside the service. For this reason, it's essential to create unique transaction IDs for all state-dependent operations. You can use that ID to retrieve specific state information from a database or to drive transaction-specific orchestration. This ID also helps carry data from one phase of a transaction, or flow, to another. This setup is essentially a back-end approach to microservices state management. Front-end state management is another option. Control from the front end means that a user or edge process maintains the state data. This state information passes along through an event flow, and each successive process accumulates more information from the previous ones. Since this state information queues along with the events, you won't lose state data during a failure as long as the event stays intact. Also, when processes get scaled with more instances of the microservices involved, the event flow can provide the state data those processes need.
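The two approaches described above can be contrasted in a minimal Python sketch. Everything named here is hypothetical and for illustration only: `STATE_STORE` is an in-memory stand-in for a real database, and the `reserve_stock_*` and `charge_*` functions stand in for microservices. It is not taken from the article or from any particular framework.

```python
import uuid
from dataclasses import dataclass, field
from typing import Any, Dict

# Hypothetical in-memory stand-in for a database keyed by transaction ID.
STATE_STORE: Dict[str, Dict[str, Any]] = {}


def start_transaction() -> str:
    """Create a unique transaction ID and an empty state record for it."""
    txn_id = str(uuid.uuid4())
    STATE_STORE[txn_id] = {}
    return txn_id


# Back-end approach: each service looks up state by transaction ID.
def reserve_stock_backend(txn_id: str, item: str) -> None:
    STATE_STORE[txn_id]["reserved_item"] = item


def charge_backend(txn_id: str, amount: float) -> None:
    STATE_STORE[txn_id]["charged"] = amount


# Front-end approach: the state travels inside the event itself.
@dataclass(frozen=True)
class Event:
    txn_id: str
    state: Dict[str, Any] = field(default_factory=dict)


def reserve_stock_frontend(event: Event, item: str) -> Event:
    # Return a new event that accumulates this step's information.
    return Event(event.txn_id, {**event.state, "reserved_item": item})


def charge_frontend(event: Event, amount: float) -> Event:
    return Event(event.txn_id, {**event.state, "charged": amount})
```

In the back-end variant, any service instance can recover the state as long as it knows the transaction ID and can reach the store. In the front-end variant, any instance that receives the event has what it needs, which is why the state survives as long as the event itself stays intact.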

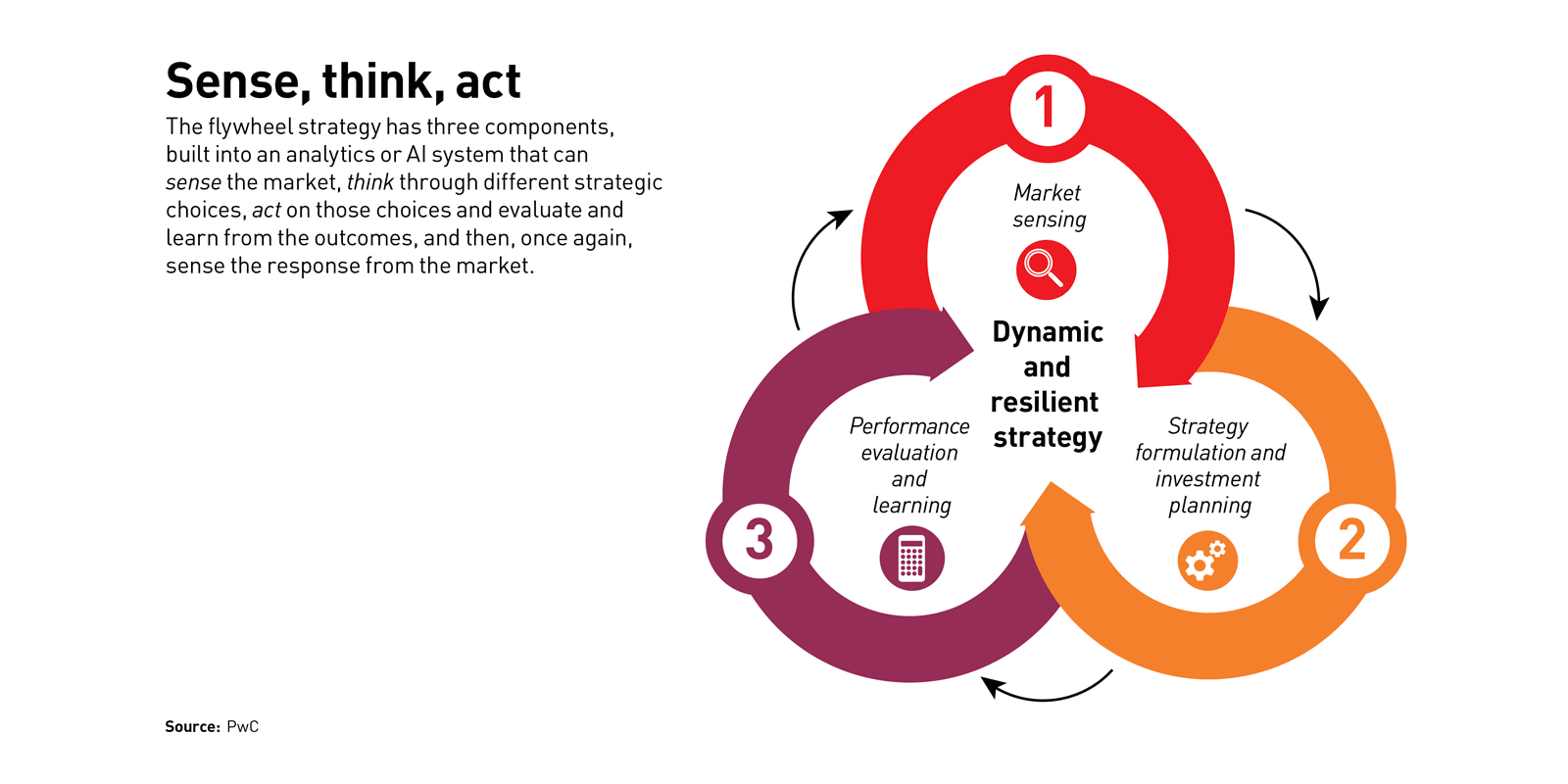

How to build disruptive strategic flywheels

Capabilities-driven strategy suggests that companies that have a clear way to play (WTP) that aligns with market demands, and that invest in a system of four to six differentiating capabilities that enable the company to excel at the WTP, are better positioned for success. But increasing clock speed changes the calculation. Today, the half-life of a competitive advantage may be fleeting. As industries are disrupted, players that have been successful within the context of one business cycle might need to rethink their differentiating capabilities, their investment portfolios, and possibly even their WTP more frequently and dynamically. Ford no longer just makes cars; it focuses instead on mobility solutions. Big oil companies are investing in renewable energy as a hedge against constraints on emissions. Amazon is competing with…everyone. As a result, it behooves organizations and managers to continually assess competitive moves, regulatory and technology evolution, and consumer preferences — and to adapt decisions in a dynamic fashion.

Smart Lock Turns Out to be Not So Smart, or Secure

Researchers are warning that a keyless smart door lock made by U-tec, called Ultraloq, could allow attackers to track down where the device is being used and easily pick the lock – either virtually or physically. Ultraloq is a Bluetooth fingerprint and touchscreen door lock sold for about $200. It allows a user to use either fingerprints or a PIN for local access to a building. Ultraloq also has an app that can be used locally or remotely for access. When Pen Test Partners, with help from researchers identified as @evstykas and @cybergibbons, took a closer look, they found Ultraloq was riddled with vulnerabilities. For starters, researchers found that the application programming interface (API) used by the mobile app leaked enough personal data from the user account to determine the physical address where the Ultraloq device was being used. ... API has no authentication at all,” researchers wrote. “The data is obfuscated by being base64 twice but decoding it exposes that the server side has no authentication or authorization logic. This leads to an attacker being able to get data and impersonate all the users,” researchers wrote.
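To see why base64 encoding applied twice offers no real protection, consider this minimal Python sketch. The payload fields and the `obfuscate`/`deobfuscate` helpers are hypothetical and do not reflect the actual Ultraloq API or its data format; the point is only that base64 is a reversible encoding with no key, so anyone can undo it.

```python
import base64
import json


def obfuscate(payload: dict) -> str:
    """Encode a JSON payload with base64 twice (no secret involved)."""
    raw = json.dumps(payload).encode("utf-8")
    return base64.b64encode(base64.b64encode(raw)).decode("ascii")


def deobfuscate(blob: str) -> dict:
    """Reverse the double encoding; no key or credential is needed."""
    raw = base64.b64decode(base64.b64decode(blob))
    return json.loads(raw.decode("utf-8"))


# Hypothetical record, for illustration only.
record = {"user": "alice", "address": "1 Example Street"}
blob = obfuscate(record)
recovered = deobfuscate(blob)
```

Because decoding requires nothing but the standard library, this kind of obfuscation hides data only from a casual glance. Access control has to come from authentication and authorization checks on the server.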

Quote for the day:

"Humility is a great quality of leadership which derives respect and not just fear or hatred." -- Yousef Munayyer
