3 takeaways to boost your enterprise architect career in 2023

Many people confuse business architecture and IT systems architecture, but they

are different practices. Business architects turn business ideas into

potential projects that align with an organization's strategies and influence a

company's performance as a leader in their market, says Renee Biggs. IT

architects contribute to the technology components of business architecture or

enterprise architecture. ... Finding the right balance between documentation and

implementation is part of an architect's job. But what do you do if your culture

values performance a little too much, asks Evan Stoner, a senior specialist

solutions architect. His advice is to "deliver what is needed." This means

uncovering the current needs and designing for them. Change doesn't happen

overnight. The future is uncertain, so architectures are constantly

evolving. Software development is a key example, say Neal Fishman and Paul

Homan; you must continually redevelop business applications to address new

opportunities. But constant change can produce substandard solutions that

require frequent revision.

Risk and resilience: compliance in 2023

By starting to prepare early, David Tattam, Chief Research & Content Officer

and co-founder of Protecht, expects that “businesses will start to realise the

tangible benefits a holistic actionable view of risk provides. Smart businesses

will track a measurable baseline of risk efficiencies over time, in line with

their profit and returns strategy, to demonstrate the ROI of their risk

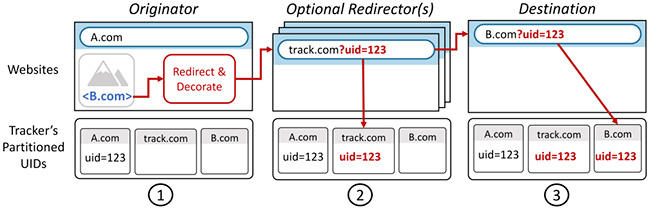

management program”. Some legislation may have complicated and far-reaching

impacts. Lee Biggenden, COO and Co-Founder of Nephos Technologies, has noticed

that “there are ongoing discussions in the European Union about open data

platforms which, if passed, could revolutionise how data is used, shared and

owned. Anticipated to come into force in 2023, it will have a huge impact on

businesses who will need to put the controls and visibility in place over their

data regardless of what industry they're in. Although on paper this may seem

like a step in the right direction, it does raise concerns about personal

privacy as third-party data sharing is a key part of the proposed act. We are

all guilty of clicking privacy boxes without reading the full terms and

conditions”.

The Metaverse Doesn’t Have a Leg to Stand On

Zuckerberg’s not the only mark here. Microsoft also placed a bet on the

metaverse (the avatars in its iteration, Mesh, also lack legs). In the past few

years, a comically wide variety of companies have hired their own “chief

metaverse officer,” from Disney and Procter & Gamble to the Creative Artists

Agency and the accounting firm Prager Metis. Meta placed its bet on the

metaverse in the flashiest way, changing its name, spending all that dough, et

cetera, but it’s not alone in its conviction that these virtual worlds are the

inevitable future. Even writer Neal Stephenson, who coined the word metaverse in

his 1992 novel Snow Crash, founded an actual metaverse company in 2022. In the

past few years, metaverse startups like Decentraland and the Sandbox grabbed

venture capital interest by hyping themselves as hubs for a new NFT-fueled

economy. Despite these companies’ hefty valuations, they have remained decidedly

niche. (Refuting a third-party report that it had only 38 active users one day,

Decentraland said it had an average of 8,000 daily active users—which is still

tiny.) Why did Zuckerberg gamble his business on something so wobbly, so

literally legless?

The year 2022 for Women in Tech

The results of the Toptal survey do not clearly indicate that any real

progress has been made in the last year. These facts reveal that a bigger change

still needs to be achieved. There are certain steps and actions that should be

taken by everyone involved in the ecosystem, in order to overcome the existing

gender inequality in the world and in the domain of technology and STEM

specifically. The first step is to break the stereotypes. Stereotypical

thinking is at the root of misconceptions about the role of women in society

and about the jobs and activities that are ‘appropriate’ for them. The second

step is bridging the gender gap, which is the next big shift that must be

made. The gender gap is most visible in career opportunities: compare the

number of women studying and graduating in STEM fields with the number who

manage to land jobs and build real careers in tech. A significant part of the

existing gender gap is the pay gap, which demonstrably persists even in the

most advanced countries.

The Tech:Forward recipe for a successful technology transformation

Having an approach that is both this comprehensive and detailed was instrumental

in aligning one large OEM’s tech-transformation goals. Previous efforts had

stalled, often because of competing priorities across various business units,

which frequently led to a narrow focus on each unit’s needs. One might want to

push hard for cloud, for example, while another wanted to cut costs. Each unit

would develop its own KPIs and system diagnostics, which made it virtually

impossible to make thoughtful decisions across units, and technical dependencies

between units would often grind progress to a halt. The company was determined

to avoid making that mistake again. So it invested the time to educate

stakeholders on the Tech:Forward framework, detail the dependencies within each

part of the framework, and review exactly how different sequencing models would

impact outcomes. In this way, each function developed confidence that the

approach was both comprehensive and responsive to its needs. Meetings with the

CFO

3 cloud architecture best practices for industry clouds

Make no assumptions about the security of industry-specific clouds. Those sold

by the larger cloud providers may be secure as stand-alone services; however,

they could become a security vulnerability when integrated and operated directly

within your solution. The best practice here is to build and design security

into your custom applications that leverage industry clouds. Also, do so with

integration in mind so no new vulnerabilities are opened. You can take two

things that are assumed to be secure independently, and then add dependencies

that entirely change the security profile. ... However, you’ll often find the

best-of-breed option is on another cloud or perhaps from an independent industry

cloud provider that decided to go it alone. The best practice here is to not

limit the industry-specific services under consideration. As time goes on, there

will be dozens of services to do tasks such as risk analytics for investment

banking, for example. Picking the less optimized choice means you’ll lower the

value that’s returned to the business. In other words, you make less ROI when

you make less optimized decisions.

Benefits of A Technology-Enabled Risk Assessment Process

Organizations need effective risk assessments to manage resources and make

informed business decisions that enable growth. Performing an effective risk

assessment means going beyond an annual, check-the-box activity by implementing

a risk assessment process that can yield actionable results and findings and

serve as a business intelligence tool to inform risk management strategies. In

today’s rapidly evolving market, banks, financial services companies, payment

services providers, and fintechs are focused on improving their processes to

create dynamic and efficient digital experiences for their customers. But the

improvements do not extend just to customers. ... Technology-enabled solutions –

which support the automated or semi-automated collection of data, scoring of

inherent risk, mapping of controls, and scoring of residual risk – can help

organizations streamline and add efficiencies to their risk assessments and

provide a better understanding of real-time risk than the frequently outdated,

once-a-year process can. Organizations then can use the valuable business

intelligence obtained through the risk assessment process to increase revenue

and identify new business opportunities for further growth.
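
The scoring steps described above (inherent risk, mapped controls, residual risk) can be sketched in a few lines of Python. This is only an illustrative sketch, not a method taken from the article: the 1-5 likelihood and impact scales, the multiplicative treatment of control effectiveness, and every name in it (`Risk`, `Control`, `rank_by_residual`) are assumptions made for the example.

```python
from dataclasses import dataclass, field


@dataclass
class Control:
    name: str
    effectiveness: float  # assumed scale: 0.0 (no mitigation) to 1.0 (full mitigation)


@dataclass
class Risk:
    name: str
    likelihood: int  # assumed 1-5 scale
    impact: int      # assumed 1-5 scale
    controls: list[Control] = field(default_factory=list)  # controls mapped to this risk

    def inherent_score(self) -> int:
        # Inherent risk: exposure before any controls are considered.
        return self.likelihood * self.impact

    def residual_score(self) -> float:
        # Residual risk: inherent risk scaled by what the mapped controls leave
        # unmitigated (assumes controls act independently of each other).
        remaining = 1.0
        for control in self.controls:
            remaining *= 1.0 - control.effectiveness
        return self.inherent_score() * remaining


def rank_by_residual(risks):
    # Highest residual risk first, so attention goes where controls fall short.
    return sorted(risks, key=lambda r: r.residual_score(), reverse=True)


risks = [
    Risk("payment fraud", likelihood=4, impact=5,
         controls=[Control("transaction monitoring", 0.5),
                   Control("manual review", 0.5)]),
    Risk("vendor outage", likelihood=2, impact=3),  # no controls mapped yet
]

for risk in rank_by_residual(risks):
    print(risk.name, risk.inherent_score(), risk.residual_score())
```

In this made-up data set the uncontrolled "vendor outage" risk ranks above "payment fraud" once controls are applied, even though its inherent score is lower. Re-running such a calculation whenever the inputs change is the kind of view a once-a-year assessment cannot provide.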

Making the case for an Enterprise Architect in Digital Transformation programs

Large transformation work needs to be addressed in three buckets: Plan, Build &

Run. While “Build” is the largest portion of investments in any transformation

program, it is obvious that organizations are required to safeguard whatever has

been built with minimal “Run” budgets. “Build” and “Run” are cyclical in nature.

For example, you build, then you maintain (run), then you either build more or

something new and then maintain (run) that more or something new. So it is

pretty obvious that leaders responsible for large transformations think of Build

as the starting point and transition to Run as the end. Senior leaders,

however, need to see things being built (and run) as building blocks of a

vision or the journey. This is the Planning function (which is strategic). The

“Plan” function needs to be executed by someone who understands business vision

and maps the journey to that vision. S/he does that by identifying business

capabilities and value chain (not business process), and then developing the

strategy as building blocks. The skill required to do this is called “Enterprise

Architecture”.

EU Cyber Resilience Act: Good for Software Supply Chain Security, Bad for Open Source?

With all of the good that the CRA brings in evolving the regulatory

conversations past SBOMs, the current draft has some problematic language that

could actually hurt the future of open source. But first, what it gets right

about open source. Page 15, Paragraph 10 attempts to exempt, or carve out, open

source software (OSS) from the regulations, saying: “In order not to hamper

innovation or research, free and open-source software developed or supplied

outside the course of a commercial activity should not be covered by this

Regulation. This is in particular the case for software, including

its source code and modified versions, that is openly shared and freely

accessible, usable, modifiable and redistributable.” This is good, even great.

OSS and project maintainers should be exempt from these regulations that apply

liability, as such liability would quash innovation and the sharing of

ideas via code. However, in the same paragraph, the CRA attempts to draw a line

between commercial and non-commercial use of open source software:

Finding the Right Data Governance Model

It is critical to distinguish the term “governance” from the term “management”

in the context of Data Governance. It should be noted that the principal

difference between “governance” and “management” is that governance refers to

the decisions that must be made and who must make them. This is to ensure

effective resource allocation and management of data operations. On the other

hand, Data Management involves implementing those decisions that arise from

assessing and monitoring either existing controls or the environment that

includes advancements in technology and the market. The activities required

for Data Governance can, therefore, be distinguished from those needed for

Data Management since management is influenced by governance. Data Governance

is oversight of Data Management activities to ensure that policy and ownership

of data are enforced in the organization. The emphasis is on formalizing the

Data Management function and associated data ownership roles and

responsibilities.

Quote for the day:

"Give whatever you are doing and

whoever you are with the gift of your attention." -- Jim Rohn