Quote for the day:

"Always remember, your focus determines your reality." -- George Lucas

The AI plateau: What smart CIOs will do when the hype cools

During the early stages of GenAI adoption, organizations were captivated by

its potential -- often driven by the hype surrounding tools like ChatGPT.

However, as the technology matures, enterprises are now grappling with the

complexities of scaling AI tools, integrating them into existing workflows and

using them to meet measurable business outcomes. ... History has shown that

transformative technologies often go through similar cycles of hype,

disillusionment and eventual stabilization. ... Early on, many organizations

told every department to use AI to boost productivity. That approach created

energy, but it also produced long lists of ideas that competed for attention

and resources. At the plateau stage, CIOs are becoming more selective. Instead

of experimenting with every possible use case, they are selecting a smaller

number of use cases that clearly support business goals and can be scaled. The

question is no longer whether a team can use AI, but whether it should. ...

CIOs should take a two-speed approach that separates fast, short-term AI

projects from larger, long-term efforts, Locandro said. Smaller initiatives

help teams learn and deliver quick results. Bigger projects require more

planning and investment, especially when they span multiple systems. ... A key

challenge CIOs face with GenAI is avoiding long, drawn-out planning cycles

that try to solve everything at once. As AI technology evolves rapidly,

lengthy projects risk producing outdated tools.

Middle East Tech 2026: 5 Non-AI Trends Shaping Regional Business

The Middle Eastern biotechnology market is rapidly maturing into a

multi-billion-dollar industrial powerhouse, driven by national healthcare and

climate agendas. In 2026, the industry is marking the shift toward

manufacturing-scale deployment, as genomics, biofuels, and diagnostics

projects move into operational phases. ... Quantum computing has moved past

the stage of academic curiosity. In 2026, the Middle East is seeing the first

wave of applied industrial pilots, particularly within the energy and material

science sectors. ... While commercialization timelines remain long, the

strategic value of early entry is high. Foreign suppliers who offer algorithm

development or hardware-software integration for these early-stage pilots will

find a highly receptive market among national energy champions. ...

Geopatriation, the relocation of digital workloads and data onto

sovereign-controlled clouds and local hardware, stands out as a major

structural shift in 2026. Driven by national security concerns and the massive

data requirements of AI, Middle Eastern states are reducing their reliance on

cross-border digital architectures. This trend has extended beyond data

residency to include the localization of critical hardware capabilities. ...

the region is moving away from perimeter-based security models toward

zero-trust architectures, under which no user, device, or system receives

implicit trust. Security priorities now extend beyond office IT systems to

cover operational technology

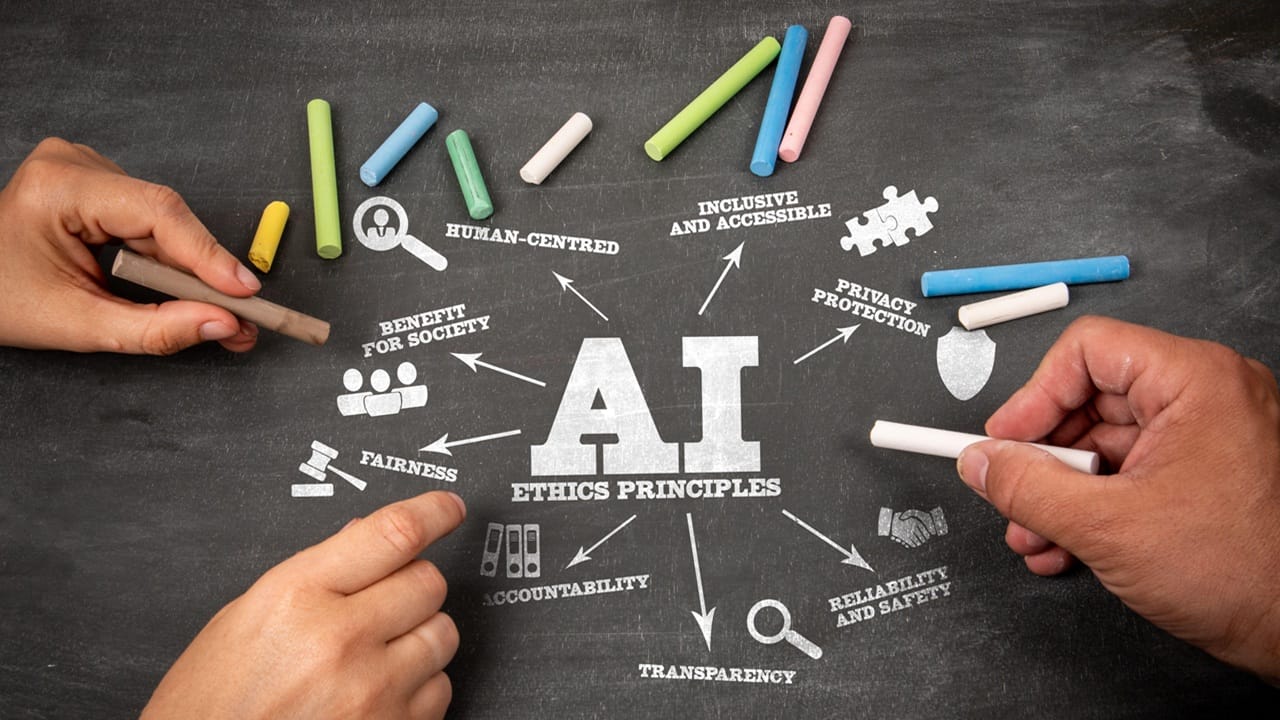

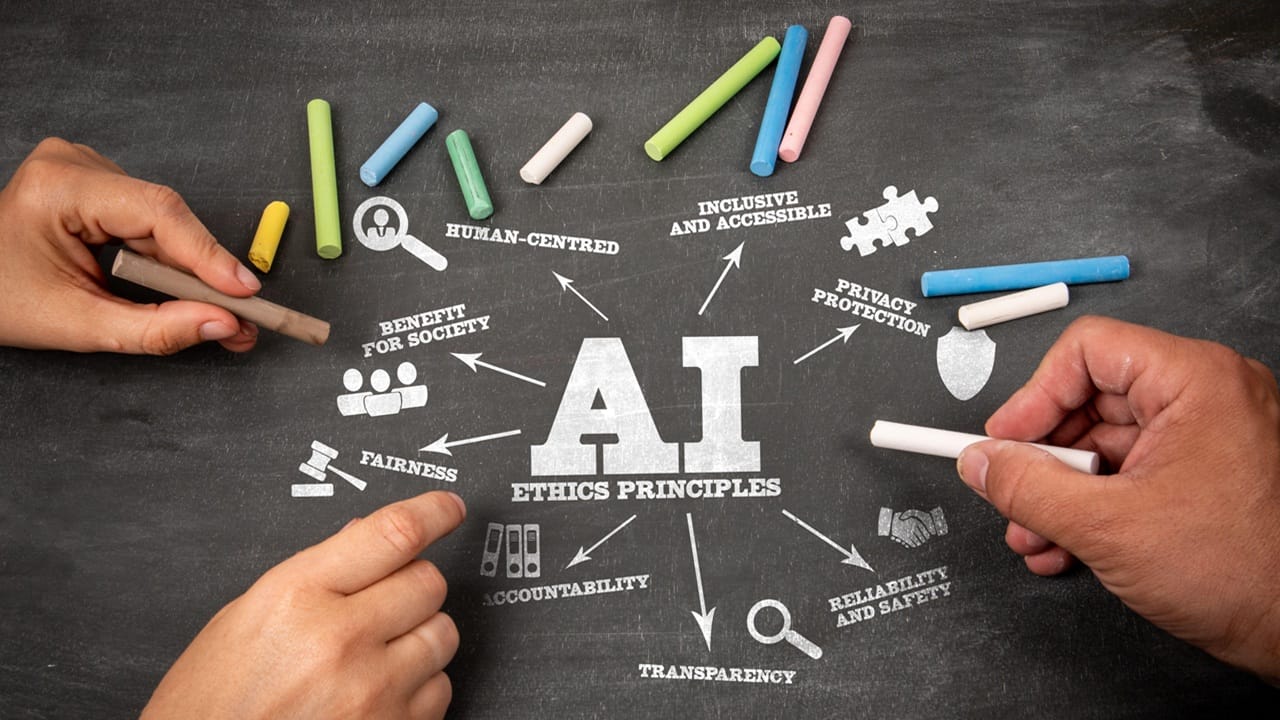

Scaling AI value demands industrial governance

"Capturing AI's value while minimizing risk starts with discipline," Puig

said. "CIOs and their organizations need a clear strategy that ties AI

initiatives to business outcomes, not just technology experiments. This means

defining success criteria upfront, setting guardrails for ethics and

compliance, and avoiding the trap of endless pilots with no plan for scale."

... Puig adds that trust is just as important as technology. "Transparency,

governance, and training help people understand how AI decisions are made and

where human judgment still matters. The goal isn't to chase every shiny use

case; it's to create a framework where AI delivers value safely and

sustainably." ... Data security and privacy emerge as critical issues, cited

by 42% of respondents in the research. While other concerns -- such as

response quality and accuracy, implementation costs, talent shortages, and

regulatory compliance -- rank lower individually, they collectively represent

substantial barriers. When aggregated, issues related to data security,

privacy, legal and regulatory compliance, ethics, and bias form a formidable

cluster of risk factors -- clearly indicating that trust and governance are

top priorities for scaling AI adoption. ... At its core, governance ensures

that data is safe for decision-making and autonomous agents. In "Competing in

the Age of AI," authors Marco Iansiti and Karim Lakhani explain that AI allows

organizations to rethink the traditional firm by powering up an "AI factory"

-- a scalable decision-making engine that replaces manual processes with

data-driven algorithms.

Information Management Trends in the Year Ahead

The digital workforce will make its presence felt. “Fleets of AI agents

trained on proprietary data, governed by corporate policy, and audited like

employees will appear in org charts, collaborate on projects, and request

access through policy engines,” said Sergio Gago, CTO for Cloudera. “They will

be contributing insights alongside their human colleagues.” A potential

oversight framework may effectively be called an “HR department for AI.” AI

agents are graduating from “copilots that suggest to accountable coworkers

inside their digital environments,” agreed Arturo Buzzalino ... “Instead of

pulling data into different environments, we’re bringing compute to the data,”

said Scott Gnau, head of data platforms at InterSystems. “For a long time, the

common approach was to move data to wherever the applications or models were

running. AI depends on fast, reliable access to governed data. When teams make

this change, they see faster results, better control, and fewer surprises in

performance and cost.” ... The year ahead will see efforts to rein in the

huge volume of AI projects now proliferating outside the scope of IT

departments. “IT leaders are being called in to fix or unify fragmented,

business-led AI projects, signaling a clear shift toward CIOs—like myself,”

said Shelley Seewald, CIO at Tungsten Automation. The onus is on IT leaders

and managers to be “more involved much earlier in shaping AI strategy and

governance.”

What is outcome as agentic solution (OaAS)?

The analyst firm Gartner predicts that a new paradigm it has named outcome as

agentic solution (OaAS) will make some of the biggest waves by replacing

software as a service (SaaS). The new model will see enterprises contract for

outcomes instead of simply buying access to software tools. Unlike SaaS,

where the customer is responsible for purchasing a tool and using it to

achieve results, OaAS providers embed AI agents and orchestration so the

work is performed for you. This leaves the vendor responsible for automating

decisions and delivering outcomes, says Vuk Janosevic, senior director analyst

at Gartner. ... The ‘outcome scenario’ has been developing in the market for

several years, first through managed services then value-based delivery

models. “OaAS simply formalizes it with modern IT buyers, who want results

over tools,” notes Thomas Kraus, global head of AI at Onix. OaAS providers are

effectively transforming systems of record (SoR) into systems of action (SoA)

by introducing orchestration control planes that bind execution directly to

outcomes, says Janosevic. ... Goransson, however, advises enterprises to

carefully evaluate several areas of risk before adopting an agentic service

model. Accountability is paramount, he notes, as without clear ownership

structures and performance metrics, organizations may struggle to assess

whether outcomes are being delivered as intended.

Bridging the Gap Between SRE and Security: A Unified Framework for Modern Reliability

SRE teams optimize for uptime, performance, scalability, automation and

operational efficiency. Security teams focus on risk reduction, threat

mitigation, compliance, access control and data protection. Both mandates are

valid, but without shared KPIs, each team views the other as an obstacle to

progress. Security controls — patch cycles, vulnerability scans, IAM

restrictions and network changes — can slow deployments and reduce SRE

flexibility. In SRE terms, these controls often increase toil, create

unpredictable work and disrupt service-level objectives (SLOs). The SRE

culture emphasizes continuous improvement and rapid rollback, whereas security

relies on strict change approval and minimizing risk surfaces. ... This

disconnect impacts organizations in measurable ways. Security incidents often

trigger slow, manual escalations because security and operations lack common

playbooks, increasing mean time to recovery (MTTR). Risk gets mis-prioritized

when SRE sees a vulnerability as non-disruptive while security considers it

critical. Fragmented tooling means that SRE leverages observability and

automation while security uses scanning and SIEM tools with no shared

telemetry, creating incomplete incident context. The result? Regulatory

penalties, breaches from failures in patch automation or access governance and

a culture of blame where security faults SRE for speed and SRE faults security

for friction.

The 2 faces of AI: How emerging models empower and endanger cybersecurity

More recently, the researchers at Google Threat Intelligence Group (GTIG)

identified a disturbing new trend: malware that uses LLMs during execution to

dynamically alter its own behavior and evade detection. This is not

pre-generated code; this is code that adapts mid-execution. ... Anthropic

recently disclosed a highly sophisticated cyber espionage operation,

attributed to a state-sponsored threat actor, that leveraged its own Claude

Code model to target roughly 30 organizations globally, including major

financial institutions and government agencies. ... If adversaries are

operating at AI speed, our defenses must too. The silver lining of this

dual-use dynamic is that the most powerful LLMs are also being harnessed by

defenders to create fundamentally new security capabilities. ... LLMs have

shown extraordinary potential in identifying unknown, unpatched flaws

(zero-days). These models significantly outperform conventional static

analyzers, particularly in uncovering subtle logic flaws and buffer overflows

in novel software. ... LLMs are transforming threat hunting from a

manual, keyword-based search to an intelligent, contextual query process that

focuses on behavioral anomalies. ... Ultimately, the challenge isn’t to halt

AI progress but to guide it responsibly. That means building guardrails into

models, improving transparency and developing governance frameworks that keep

pace with emerging capabilities. It also requires organizations to rethink

security strategies, recognizing that AI is both an opportunity and a risk

multiplier.

Hacker Conversations: Katie Paxton-Fear Talks Autism, Morality and Hacking

“Life with autism is like living life without the instruction manual that

everyone else has.” It’s confusing and difficult. “Computing provides that

manual and makes it easier to make online friends. It provides accessibility

without the overpowering emotions and ambiguities that exist in face-to-face

real-life relationships – so it’s almost helping you with your disability by

providing that safe context you wouldn’t normally have.” Paxton-Fear became

obsessed with computing at an early age. ... During the second year of her

PhD study, a friend from her earlier university days invited her to a bug

bounty event held by HackerOne. She went, not to take part in the event (she

still didn’t think she was a hacker, nor did she understand anything about hacking),

but to meet up with other friends from her university days. She thought

to herself, ‘I’m not going to find anything. I don’t know anything about

hacking.’ “But then, while there, I found my first two vulnerabilities.” ...

She was driven by curiosity from an early age – but her skill was in

disassembly without reassembly: she just needed to know how things work. And

while many hackers are driven to computers as a shelter from social

difficulties, she exhibits no serious or long-lasting social difficulties. For

her, the attraction of computers primarily comes from her dislike of

ambiguity. She readily acknowledges that she sees life as unambiguously black

or white with no shades of gray.

‘A wild future’: How economists are handling AI uncertainty in forecasts

Economists have time-tested models for projecting economic growth. But they’ve

seen nothing like AI, which is a wild card complicating traditional economic

playbooks. Some facts are clear: AI will make humans more productive and

increase economic activity, with spillover effects on spending and employment.

But there are many unknowns about AI. Economists can’t isolate AI’s impact on

human labor as automation kicks in. Nailing down long-term factory job losses

to AI is not possible. ... “We’re seeing an increase in terms of productivity

enhancements over the next decade and a half. While it doesn’t capture AI

directly… there is all kinds of upside potential to the productivity numbers

because of AI.” ... “There are basically two ways this can go. You can get more

output for the same input. If you used to put in 100 and get 120, maybe now

you get 140. That’s an expansion in total factor productivity. Or you can get

the same output with fewer inputs. It’s unclear how much of either will

happen across industries or in the labor market. Will companies lean into AI,

cut their workforce, and maintain revenue? Or will they keep their workforce,

use AI to supplement them, and increase total output per worker?” ... If AI and

automation remove the human element from labor-intensive manufacturing, that

cost advantage erodes. It makes it harder for developing countries to use

cheap labor as a stepping stone toward industrialization.
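
The two paths described in the quote can be worked through with the same numbers. The sketch below is illustrative only: it treats productivity as a plain output-to-input ratio, which is a simplification (economists typically estimate total factor productivity as a residual from a production function), and the function and variable names are assumptions made for this example.

```python
def productivity(output, inputs):
    """Output produced per unit of input (a simplified ratio, not a full TFP estimate)."""
    return output / inputs

# Baseline from the quote: put in 100, get 120.
baseline = productivity(120, 100)

# Path 1: more output for the same input (100 in, 140 out).
more_output = productivity(140, 100)

# Path 2: the same output (120) with fewer inputs. To match the
# productivity of path 1, inputs would have to fall to 120 / 1.4.
inputs_needed = 120 / more_output
fewer_inputs = productivity(120, inputs_needed)

print(f"baseline ratio:        {baseline:.2f}")
print(f"more output ratio:     {more_output:.2f}")
print(f"inputs for same ratio: {inputs_needed:.1f}")
```

Both paths reach the same ratio, but the second does it by using roughly 14% fewer inputs, which is the workforce question the excerpt raises.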

Understanding transformers: What every leader should know about the architecture powering GenAI

Inside a transformer, attention is the mechanism that lets tokens talk to each

other. The model compares every token’s query with every other token’s key to

calculate a weight, a measure of how relevant one token is to another.

These weights are then used to blend the tokens’ value vectors into a new,

context-aware representation of each token. In simple terms: attention allows

the model to focus dynamically. If the model reads “The cat sat on the mat

because it was tired,” attention helps it learn that “it” refers to “the cat,”

not “the mat.” ... Transformers are powerful, but they’re also expensive.

Training a model like GPT-4 requires thousands of GPUs and trillions of data

tokens. Leaders don’t need to know tensor math, but they do need to

understand scaling trade-offs. Techniques like quantization (reducing numerical

precision), model sharding and caching can cut serving costs by 30–50% with

minimal accuracy loss. The key insight: Architecture determines economics.

Design choices in model serving directly impact latency, reliability and total

cost of ownership. ... The transformer’s most profound breakthrough isn’t just

technical — it’s architectural. It proved that intelligence could emerge from

design — from systems that are distributed, parallel and context-aware. For

engineering leaders, understanding transformers isn’t about learning equations;

it’s about recognizing a new principle of system design.
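
The query, key and value comparison described above can be sketched in a few lines of plain Python. This is a minimal illustration of scaled dot-product attention, not how any production model is implemented: the two-dimensional vectors are hand-picked assumptions so that the query for "it" points toward "cat", whereas a real transformer learns these vectors during training and computes them with matrix operations on GPUs.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention(queries, keys, values):
    """Scaled dot-product attention over lists of equal-length vectors."""
    dim = len(keys[0])
    outputs, all_weights = [], []
    for q in queries:
        # Compare this token's query with every token's key.
        scores = [
            sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(dim)
            for k in keys
        ]
        weights = softmax(scores)
        # Blend the value vectors using those weights.
        blended = [
            sum(w * v[j] for w, v in zip(weights, values))
            for j in range(len(values[0]))
        ]
        outputs.append(blended)
        all_weights.append(weights)
    return outputs, all_weights

# Hypothetical toy vectors for three tokens (not learned values).
tokens = ["cat", "mat", "it"]
keys = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]
values = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]
queries = [[1.0, 0.0], [0.0, 1.0], [2.0, 0.0]]

outputs, weights = attention(queries, keys, values)
it_weights = dict(zip(tokens, weights[2]))
print(it_weights)  # the largest weight for "it" falls on "cat"
```

With these toy numbers, the row of weights for "it" is largest on "cat", so the blended output for "it" is pulled toward the value vector for "cat".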
