Quote for the day:

"The measure of who we are is what we do with what we have." -- Vince Lombardi

Your passwordless future may never fully arrive

The challenges are many. Beyond legacy industrial systems, homegrown apps, door/facility access systems, and IoT, even routine workgroup deployment of passwordless solutions is anything but routine. Different operating systems and specialized access requirements typically force enterprises to roll out multiple passwordless packages, which can be expensive and time-consuming and can create operational delays and other friction. Worst of all, this can open new security holes as attackers try to slip between the cracks of those multiple passwordless systems.

... “Passwordless implementations typically leave a dangerous blind spot. Passwords are still there, lurking inside the passkey enrollment and recovery flows,” says Aaron Painter, CEO of Nametag. “Think of it this way: How do you really know who’s enrolling or resetting a passkey? Attackers don’t have to break the cryptography of passkeys. They go after the weakest link, whether it’s a helpdesk call, an SMS code, or a ‘can’t access my passkey’ button. By keeping both a password and a passkey, organizations multiply their attack surface.”

... Part of the passwordless debate focuses on ROI strategies. The proverbial pot of gold at the end of the rainbow is eliminating all password credentials. In that world, an attacker holding a 12-month-old admin password from a breach at a partner company would have nothing of value. But as long as some passwords must be supported, the risk of such an attack remains.

CISOs are cracking under pressure

Most CISOs surveyed experienced a major security incident in the last six months. For most, that level of disruption has become normal. More than half said they are personally blamed when breaches occur and fear their jobs would be at risk if a serious incident happened on their watch. That sense of personal accountability stands out because many breaches occur despite defenses being in place. Fifty-eight percent of CISOs said at least one recent incident happened even though a tool was supposed to stop it. The researchers say this gap between investment and outcome has left security leaders exposed to reputational and career risk for problems that are often beyond their control.

... Most CISOs say they can quantify risk, but more than half admit they lack standardized, business-focused metrics that make sense to leadership. Boards often want trendlines that show risk is declining, or metrics that link incidents to business outcomes. Without these, the conversation between CISOs and directors can break down. This disconnect means security leaders are often held accountable without being equipped to demonstrate progress in the terms boards expect. The researchers note that aligning on a shared understanding of risk is key to reducing tension and helping CISOs do their jobs.

... Many CISOs say they’re being pushed to use AI to cut costs and automate tasks, with some already under formal mandates and others feeling growing pressure from leadership. That puts CISOs in a difficult position.
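Boards, per the survey, often want trendlines showing whether risk is declining. As a minimal sketch, assume a CISO already tracks some monthly risk metric, such as open critical findings. The metric and the function names `trend_slope` and `describe_trend` are hypothetical. The code fits an ordinary least-squares line to the series and turns its slope into a one-line statement a board can read:

```python
from typing import Sequence


def trend_slope(values: Sequence[float]) -> float:
    """Ordinary least-squares slope of values against their index (0, 1, 2, ...).

    A negative slope means the metric trended down over the period.
    """
    n = len(values)
    if n < 2:
        raise ValueError("need at least two data points for a trend")
    xs = range(n)
    mean_x = (n - 1) / 2
    mean_y = sum(values) / n
    # Covariance of (x, y) divided by the variance of x gives the OLS slope.
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, values))
    var = sum((x - mean_x) ** 2 for x in xs)
    return cov / var


def describe_trend(metric_name: str, monthly_values: Sequence[float]) -> str:
    """Turn the slope into a short, board-friendly statement."""
    slope = trend_slope(monthly_values)
    if slope < 0:
        direction = "declining"
    elif slope > 0:
        direction = "rising"
    else:
        direction = "flat"
    return f"{metric_name}: {direction} ({slope:+.2f} per month)"
```

A single slope ignores noise and seasonality, so it works best as a conversation starter alongside metrics that tie incidents to business outcomes.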

Most CISOs surveyed experienced a major security incident in the last six

months. For most, that level of disruption has become normal. More than half

said they are personally blamed when breaches occur, and fear their job would

be at risk if a serious incident happened under their watch. That sense of

personal accountability stands out because many breaches occur despite

defenses being in place. Fifty-eight percent of CISOs said at least one recent

incident happened even though a tool was supposed to stop it. The researchers

say this gap between investment and outcome has left security leaders exposed

to reputational and career risk for problems that are often beyond their

control. ... Most CISOs say they can quantify risk, but more than half admit

they lack standardized, business-focused metrics that make sense to

leadership. Boards often want trendlines that show risk is declining or

metrics that link incidents to business outcomes. Without these, the

conversation between CISOs and directors can break down. This disconnect means

security leaders are often held accountable without being equipped to

demonstrate progress in the terms boards expect. The researchers note that

aligning on a shared understanding of risk is key to reducing tension and

helping CISOs do their jobs. ... Many CISOs say they’re being pushed to use AI

to cut costs and automate tasks, with some already under formal mandates and

others feeling growing pressure from leadership. That puts CISOs in a

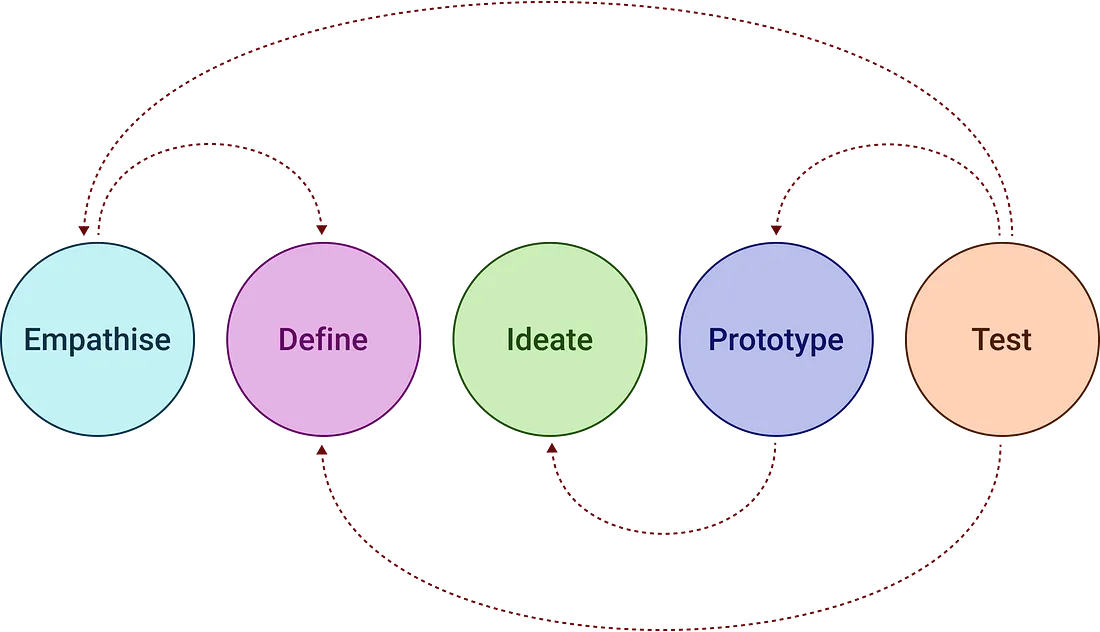

difficult position.The Sustainable Transformation Roadmap: Rethink, Align, Deploy

A significant part of the success stems from weeding out process debt. Like technical debt in IT systems, process debt refers to the accumulation of outdated procedures, inefficient workflows, and redundant steps that have built up over years of incremental changes. These legacy practices hinder productivity and make it hard to fully realize the benefits of digital solutions. Overall, while process debt is rampant, forward-thinking organizations succeed by treating automation as a catalyst for redesign rather than a quick fix, potentially unlocking millions in annual savings per use case. To sidestep potential traps, organizations should prioritize process optimization before they begin automating. This entails a focus on audit and redesign, starting with thorough process mapping to identify process debt.

... Also, start small and iterate by piloting in low-risk areas, such as invoice chasing or design reviews, measuring against baselines to ensure automation resolves, rather than replicates, debt. Failure to confront process debt, Yousufani said, leads to a familiar pitfall associated with “citizen-led transformation.” Organizations distribute productivity tools hoping employees will optimize their own workflows. But at best, this bottom-up innovation yields minor efficiency gains: “If an individual deployed Gen AI, and they gain, in a best-case scenario, 10% productivity, that’s 10% for one employee. The gains are much greater when looking at an entire process transformation,” he said.

Building Resilient Platforms: Insights from Over Twenty Years in Mission-Critical Infrastructure

In technology terms, a platform is a set of integrated technologies used as a base for developing other applications or processes. The best platform builders succeed when their work is taken for granted, measuring success not in recognition but in silence. Users can work without ever thinking about the underlying infrastructure, because the platform simply works, consistently and reliably, to the point of being invisible.

... Successfully hiding complexity while delivering powerful functionality defines platform excellence. The sophisticated engineering underneath should remain invisible to users who simply want to accomplish their tasks without friction.

... Stability means consistent, reliable operation at all times. However, achieving stability through stagnation creates security vulnerabilities from unpatched systems. Patching, in turn, introduces changes that can affect stability even as it improves security.

... The temptation to defer maintenance always exists, but falling behind creates insurmountable technical debt. From a security perspective, the increasing exploitation of zero-day vulnerabilities by bad actors demonstrates how quickly deferred maintenance becomes crisis management. Staying evergreen requires eternal vigilance and commitment. Once you fall behind, catching up becomes nearly impossible. This principle demands upfront planning and unwavering execution.

From Data Transfer to Data Trust

As more businesses move to hybrid and multi-cloud environments, data exchanges span different infrastructures and jurisdictions, which adds complexity and risk. Perimeter-only security models are no longer enough. Instead, companies need a model in which trust is never assumed but always verified. Gartner (2023) says that trust should be built into every transaction, every access request, and every data exchange.

... Businesses need to take a big-picture view based on the following pillars to build a trusted data integration framework:

Authentication and Authorization: Use strict identity controls such as OAuth 2.0, SAML, and context-aware Multi-Factor Authentication (MFA). API gateways should enforce role-based access and rate limiting.

Transport Layer Security (TLS) should encrypt data in transit, and the Advanced Encryption Standard (AES) should encrypt data at rest. Use checksums, digital signatures, and data validation protocols to ensure data integrity.

Monitoring and Observability: Use observability platforms such as the ELK Stack, Prometheus, or Splunk to monitor logs, metrics, and traces in real time. Site Reliability Engineering (SRE) principles call for defining Service-Level Indicators (SLIs) and Service-Level Objectives (SLOs) and setting up automatic incident detection.
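The integrity guidance (checksums, digital signatures, data validation) can be sketched with the Python standard library alone. This is a minimal sketch, not a prescribed design. It swaps in HMAC-SHA256, a shared-key message authentication code, for a public-key digital signature, because the standard library has no signature API. The record fields checked in `validate_record` are hypothetical.

```python
import hashlib
import hmac


def sha256_checksum(payload: bytes) -> str:
    """Plain checksum: detects accidental corruption, but anyone can recompute it."""
    return hashlib.sha256(payload).hexdigest()


def sign(payload: bytes, key: bytes) -> str:
    """HMAC-SHA256 tag (stand-in for a digital signature): needs the shared key."""
    return hmac.new(key, payload, hashlib.sha256).hexdigest()


def verify(payload: bytes, tag: str, key: bytes) -> bool:
    """Recompute the tag and compare in constant time to avoid timing leaks."""
    expected = sign(payload, key)
    return hmac.compare_digest(expected, tag)


def validate_record(record: dict) -> list[str]:
    """Minimal data validation on hypothetical fields, run after integrity passes."""
    errors = []
    if not isinstance(record.get("id"), str) or not record["id"]:
        errors.append("id must be a non-empty string")
    if not isinstance(record.get("amount"), (int, float)) or record["amount"] < 0:
        errors.append("amount must be a non-negative number")
    return errors
```

A checksum catches corruption in transit. The keyed tag also catches deliberate tampering by anyone without the key. A real digital signature (for example Ed25519 via a third-party library) goes further: receivers can verify the data without holding the signing key.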

Who Owns the Cybersecurity of Space?

There is no comprehensive, binding international cybersecurity framework governing satellites, orbital systems, or ground-to-space communications. Meanwhile, Australia's space sector, spanning manufacturing in South Australia, launch facilities in the Northern Territory, and emerging tracking infrastructure in Queensland, is expanding quickly.

... Many satellites, especially those launched before 2020, lack encryption or rely on outdated telemetry protocols. A single compromised ground station could trigger cascading effects across dependent systems. A man-in-the-middle attack in orbit would not simply exfiltrate data; it could spoof navigation, interrupt emergency communications, or feed falsified intelligence to defense networks. We saw a warning sign in the ViaSat KA-SAT attack during the early stages of the Russia-Ukraine conflict, which temporarily crippled satellite communications across Europe.

... For cybersecurity professionals, space is now part of the threat landscape. Whether you work in defense, telecommunications, energy, or government, your organization likely depends on orbital networks.

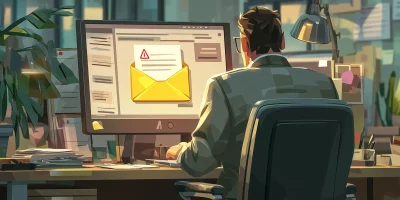

AI & phishing attacks highlight human risk in Australian fraud

Cybercriminals continue to rely on phishing attacks, exploiting trust and human error to initiate breaches. Despite ongoing investment in advanced detection technologies, there is widespread agreement that improving behavioural awareness within organisations is crucial.

... Salehi highlighted the growing sophistication of AI-powered attacks, describing how threat actors automate reconnaissance and deploy harder-to-detect campaigns. "As AI reshapes the threat landscape, these human vulnerabilities become even more exploitable. Threat actors are using AI to automate reconnaissance and craft highly personalised phishing campaigns that are faster, more convincing and far harder to detect," said Salehi. He went on to advocate a risk-based security approach that aligns protection with business priorities and focuses on critical assets. "To counter this, organisations must adopt a risk-based approach that aligns security investments to business context - prioritising protection of the assets most critical to operations and continuity, while investing equally in human-centric education and training to recognise AI-generated phishing and deepfake content," said Salehi.

... Fraud schemes are also evolving beyond traditional IT boundaries, affecting operational processes and supply chains. Complex webs of partners and suppliers increase the risk of unnoticed manipulation and data leaks, particularly as generative AI technology is embedded across business operations.

The AI revolution has a power problem

In the race for AI dominance, American tech giants have the money and the chips, but their ambitions have hit a new obstacle: electric power. "The biggest issue we are now having is not a compute glut, but it's the power and...the ability to get the builds done fast enough close to power," Microsoft CEO Satya Nadella acknowledged on a recent podcast with OpenAI chief Sam Altman. "So if you can't do that, you may actually have a bunch of chips sitting in inventory that I can't plug in," Nadella added.

... Already blamed for inflating household electricity bills, data centers in the United States could account for 7% to 12% of national electricity consumption by 2030, up from 4% today, according to various studies. But some experts say the projections could be overblown. "Both the utilities and the tech companies have an incentive to embrace the rapid growth forecast for electricity use," Jonathan Koomey, a renowned expert from UC Berkeley, warned in September.

... Tech giants are quietly downplaying their climate commitments. Google, for example, promised net-zero carbon emissions by 2030 but removed that pledge from its website in June. Instead, companies are promoting long-term projects. Amazon is championing a nuclear revival through Small Modular Reactors (SMRs), an as-yet experimental technology that would be easier to build than conventional reactors.

Cut Lead Time In Half With Pragmatic Agile

Agility isn’t sprints; it’s small, reversible changes flowing safely to users. We get there by adopting trunk-based development, feature flags, and explicit WIP limits. Trunk-based means branches live for hours, not weeks. We merge small increments behind flags, ship to production early, and turn features on when we’re ready. Review stays fast because the surface area is small. If we need to bail out, we toggle the flag off and fix forward. No hero rollbacks, no 2 a.m. conference bridge. Feature flags don’t need to be fancy at the start, but they must be disciplined: clear names, default off, auditability, and a plan to retire them. Tooling is a matter of preference; the choice of control plane matters less than consistency. We like OpenFeature because it’s vendor-neutral and simple. ...
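The flag discipline listed above (clear names, default off, auditability, a retirement plan) can be illustrated without any vendor SDK. This is a minimal in-process sketch. `Flag` and `FlagRegistry` are hypothetical names. The code is not the OpenFeature API the authors mention, and a real setup would keep flag state outside the process.

```python
from dataclasses import dataclass, field
from datetime import date, datetime, timezone


@dataclass
class Flag:
    name: str              # clear, descriptive name, e.g. "checkout.new-tax-engine"
    owner: str             # who to ask before changing or retiring it
    remove_by: date        # retirement plan: the flag should be gone by this date
    enabled: bool = False  # default off: merged code stays dark until toggled on


@dataclass
class FlagRegistry:
    flags: dict[str, Flag] = field(default_factory=dict)
    # Audit entries: (UTC timestamp, flag name, new state, who changed it).
    audit_log: list[tuple[str, str, bool, str]] = field(default_factory=list)

    def register(self, flag: Flag) -> None:
        if flag.name in self.flags:
            raise ValueError(f"duplicate flag: {flag.name}")
        self.flags[flag.name] = flag

    def is_enabled(self, name: str) -> bool:
        # Unknown flags evaluate to off, so a typo cannot accidentally ship a feature.
        flag = self.flags.get(name)
        return flag.enabled if flag else False

    def set(self, name: str, enabled: bool, actor: str) -> None:
        flag = self.flags[name]  # raises KeyError for unregistered flags
        flag.enabled = enabled
        stamp = datetime.now(timezone.utc).isoformat()
        self.audit_log.append((stamp, name, enabled, actor))

    def overdue(self, today: date) -> list[str]:
        """Flags past their retirement date: candidates for cleanup."""
        return [f.name for f in self.flags.values() if f.remove_by < today]
```

Bailing out is then a single `set(name, False, actor)` call rather than a redeploy. `overdue` gives the retirement plan something concrete to check, for example in CI.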

Boring deploys are the highest compliment. We get them by codifying our path to production and reducing manual gates. Start with a trunk-based pipeline that runs unit tests, security checks, build, and deploy in the same PR context. Then add guardrails: environment protection rules, small canaries, and automatic rollbacks if health checks dip.

... Agile claims to balance speed with quality, but without SLOs we end up arguing about feelings. Service-level objectives anchor our pace to user impact. We pick a few golden signals per service (availability, latency, error rate) and set realistic targets based on current performance and business expectations.
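To make the SLO idea concrete, here is a minimal sketch with three parts:

- an availability SLI computed from request counts;
- the error budget an SLO implies;
- a canary gate that could drive the automatic rollbacks mentioned earlier.

The names are hypothetical. So is the gate rule, "roll back if the canary's availability falls below the SLO"; it is an illustration, not a prescribed policy.

```python
from dataclasses import dataclass


@dataclass
class Window:
    """Request counts observed over some measurement window."""
    total_requests: int
    failed_requests: int

    @property
    def availability(self) -> float:
        """Availability SLI: fraction of requests that succeeded."""
        if self.total_requests == 0:
            return 1.0
        return 1 - self.failed_requests / self.total_requests


def error_budget_remaining(window: Window, slo: float) -> float:
    """Fraction of the error budget left (1.0 = untouched, below 0 = overspent).

    With an SLO of 0.999, up to 0.1% of requests may fail.
    """
    allowed_failures = (1 - slo) * window.total_requests
    if allowed_failures == 0:
        return 1.0 if window.failed_requests == 0 else float("-inf")
    return 1 - window.failed_requests / allowed_failures


def canary_should_roll_back(canary: Window, slo: float) -> bool:
    """Hypothetical gate: roll back if the canary alone would breach the SLO."""
    return canary.availability < slo
```

With a 99.9% SLO over 100,000 requests, the budget is 100 failed requests. Fifty failures leave half the budget, which a team might read as room to keep shipping. An exhausted budget is a signal to slow down and invest in reliability.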
