Should You Buy or Build an AI Solution?

Training an AI model is not cheap; ChatGPT cost $10 million to train in its current form, while the cost to develop the next generation of AI systems is expected to be closer to $1 billion. Traditional AI tends to cost less than generative AI because it runs on fewer GPUs, yet even the smallest scale of AI projects can quickly reach a $100,000 price tag. Building an AI model should only be done if it’s expected that you will recoup building costs within a reasonable time horizon. ... The right partner will help integrate new AI applications into the existing IT environment and, as mentioned, provide the talent required for maintenance. Choosing an existing model tends to be cheaper and faster than building a new one. Still, the partner or vendor must be vetted carefully. Vendors with an established history of developing AI will likely have better data governance frameworks in place. Ask them about policies and practices directly to see how transparent they are. Are they flexible enough to make said policies align with yours? Will they demonstrate proof of their compliance with your organization’s policies? The right partner will be prepared to offer data encryption, firewalls, and hosting facilities to ensure regulatory requirements are met, and to protect company data as if it were their own.
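
The recoup test described above can be made concrete with a simple payback calculation. The following is a minimal sketch: the function names, the monthly figures, and the 24-month horizon are illustrative assumptions rather than figures from the article (only the $100,000 build cost echoes the text).

```python
import math


def payback_months(build_cost, monthly_benefit, monthly_run_cost=0):
    """Whole months needed to recoup build_cost, or None if it is never recouped."""
    net_monthly = monthly_benefit - monthly_run_cost
    if net_monthly <= 0:
        # Running the model costs at least as much as it returns.
        return None
    return math.ceil(build_cost / net_monthly)


def should_build(build_cost, monthly_benefit, monthly_run_cost, horizon_months):
    """True only if the build cost is recouped within the chosen horizon."""
    months = payback_months(build_cost, monthly_benefit, monthly_run_cost)
    return months is not None and months <= horizon_months


# Hypothetical inputs: $100,000 build, $12,000/month benefit, $2,000/month upkeep.
print(payback_months(100_000, 12_000, 2_000))
print(should_build(100_000, 12_000, 2_000, horizon_months=24))
```

If the payback period exceeds the horizon, or the net monthly benefit is not positive, the same numbers argue for buying an existing model instead.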

Business Data Privacy Standards and the Impact of Artificial Intelligence on Data Governance

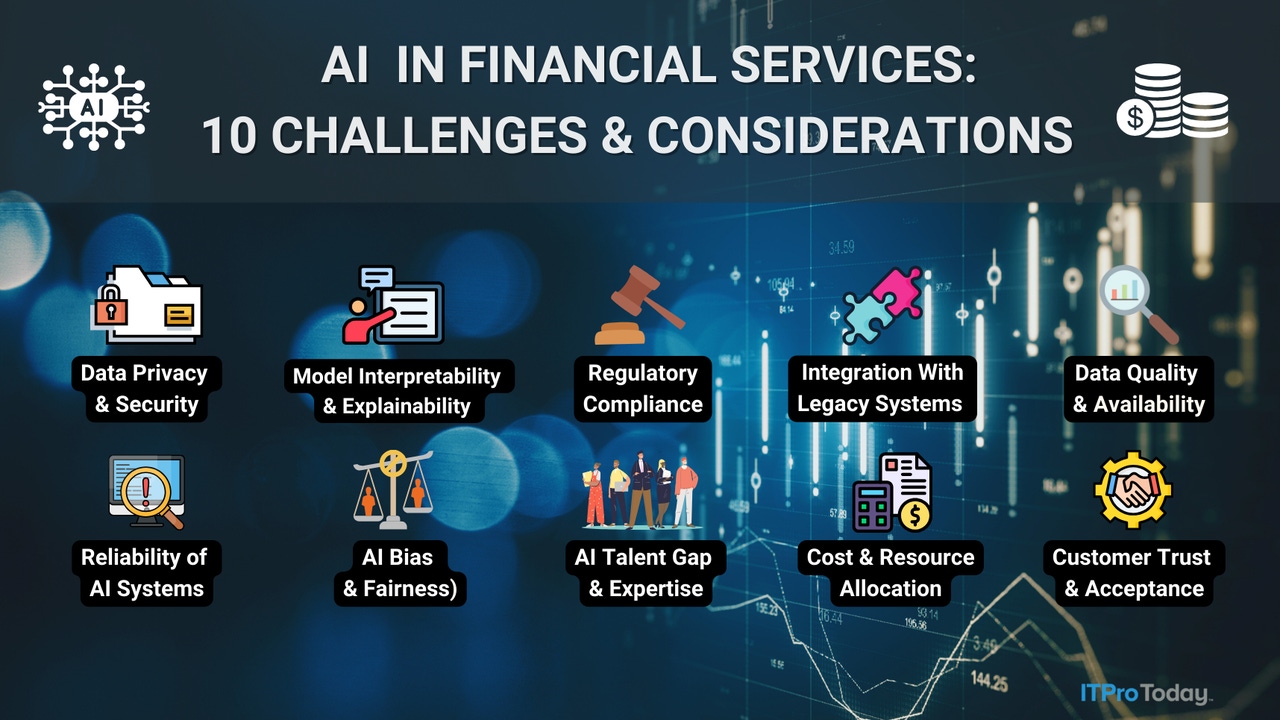

Artificial intelligence technologies, including machine learning and natural language processing, have revolutionized how businesses analyze and utilize data. AI systems can process vast amounts of information at unprecedented speeds, uncovering patterns and generating insights that drive strategic decisions and operational efficiencies. However, the use of AI introduces complexities to data governance. Traditional data governance practices focused on managing structured data within defined schemas. AI, on the other hand, thrives on vast swaths of information and can generate entirely new data. ... As AI continues to evolve, so too must data governance frameworks. Future advancements in AI technologies, such as federated learning and differential privacy, hold promise for enhancing data privacy while preserving the utility of AI applications. Collaborative efforts between businesses, policymakers, and technology experts are essential to navigate these complexities and ensure that AI-driven innovation benefits society responsibly.

Foundations of Forensic Data Analysis

Forensic data analysis faces a variety of technical, legal, and administrative challenges. Technical factors that affect forensic data analysis include encryption issues, the need for large amounts of disk storage space for data collection and analysis, and anti-forensics methods. Legal challenges, such as attribution issues stemming from a malicious program capable of executing malicious activities without the user’s knowledge, can confuse or derail an investigation. Such programs can make it difficult to identify whether cybercrimes were deliberately committed by a user or executed by malware. The complexities of cyber threats and attacks can create significant difficulties in accurately attributing malicious activity. Administratively, the main challenge facing data forensics involves accepted standards and management of data forensic practices. Although many accepted standards for data forensics exist, there is a lack of standardization across and within organizations. Currently, there is no regulatory body that oversees data forensic professionals to ensure they are competent and qualified and are following accepted standards of practice.

Closing the DevSecOps Gap: A Blueprint for Success

Businesses need to start at the top and ensure all DevSecOps team members accept a continuous security focus: security isn't a one-time event; it's an ongoing process. Leaders must encourage open communication between development, security, and operations teams, which can be achieved with regular meetings and shared communication platforms that facilitate constant collaboration. Developers must learn secure coding practices when building their models, while security and operations teams need to better understand development workflows to create practical security measures. Peer-to-peer communication and training are about partnership, not conflict, and effective DevSecOps thrives on collaboration, not finger-pointing. Only once these personnel changes are implemented can a DevSecOps team successfully execute a shift-left security approach and leverage the benefits of technology automation and efficiency. Once internal harmony is achieved, DevSecOps teams can begin consolidating automation and efficiency into their workflows by integrating security testing tools within the CI/CD pipelines.
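
One common way to integrate security testing into a CI/CD pipeline is a gate step that fails the build when a scan reports serious findings. The sketch below is illustrative only: the report shape (a JSON list of objects with `id` and `severity` fields), the severity names, and the function names are assumptions, not the output format of any particular scanner.

```python
import json

# Assumed severity scale, lowest to highest (hypothetical, not tool-specific).
SEVERITY_ORDER = {"low": 0, "medium": 1, "high": 2, "critical": 3}


def load_findings(report_path):
    """Read a scan report assumed to be a JSON list of finding objects."""
    with open(report_path, encoding="utf-8") as fh:
        return json.load(fh)


def blocking_findings(findings, threshold="high"):
    """Return findings at or above the threshold severity.

    Findings with a missing or unknown severity are treated as blocking,
    so the gate fails closed rather than letting them through.
    """
    limit = SEVERITY_ORDER[threshold]
    blockers = []
    for finding in findings:
        severity = str(finding.get("severity", "")).lower()
        if SEVERITY_ORDER.get(severity, limit) >= limit:
            blockers.append(finding)
    return blockers


def gate_exit_code(findings, threshold="high"):
    """Exit code for the pipeline step: 1 blocks the build, 0 lets it pass."""
    return 1 if blocking_findings(findings, threshold) else 0
```

A pipeline step could call `load_findings` on the scanner's report and pass the result of `gate_exit_code` to `sys.exit`, so that a non-zero code stops the deployment stage.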

How micro-credentials can impact the world of digital upskilling in a big way

Micro-credentials, when correctly implemented, can complement traditional degree programmes in a number of ways. Take for example the Advance Centre, in partnership with University College Dublin, Technological University Dublin and ATU Sligo, which offers accredited programmes and modules with the intent of addressing Ireland’s future digital skill needs. “They enable students to gain additional skills and knowledge that supplement their professional field. For example, a mechanical engineer might pursue a micro-credential in cybersecurity or data analytics to enhance their expertise and employability,” said O’Gorman. By bridging very specific skills gaps, micro-credentials can cover materials that may otherwise not be addressed in more traditional degree programmes. “This is particularly valuable in fast-evolving fields where specific up-to-date skills are in high demand.” Furthermore, it is fair to say that balancing work, education and your personal life is no easy feat, but this shouldn’t mean that you have to compromise on your career aspirations.

Edge Data Center Supports Emerging Trends

Adopting AI technologies requires a lot of computational power, storage space and low-latency networking to train and run models. These technologies prefer hosted environments, which makes them highly compatible with data centres. As the demand for AI grows, so will the demand for data centres. However, limits on connecting new data centres to the grid remain a challenge and will affect data centre build-out. This highlights edge data centres as the solution to the data centre capacity problem. ... With this pressure, cloud computing has emerged as a cornerstone for these modernisation efforts, with companies choosing to move their workloads and applications onto the cloud. This shift has brought challenges for companies in managing costs and ensuring data privacy. As a result, organisations are considering cloud repatriation as a strategic option. Cloud repatriation is essentially the migration of applications, data and workloads from the public cloud environment back to on-premises or colocation infrastructure.

How To Get Rid of Technical Debt for Good

“To get rid of it or minimize it, you should treat this problem as a regular task -- systematically. All technical debt should be precisely defined and fixed with a maximum description of the current state and expected results after the problem is solved,” says Zaporozhets. “As the next step, [plan] the activities related to technical debt -- namely, who, when, and how should deal with these problems. And, of course, regular time should be allocated for this, which means that dealing with technical debt should become a regular activity, like attending daily meetings.” ... Regularly addressing technical debt requires discipline, motivation and systematic behavior from all team members. “When the team stops being afraid of technical debt and starts treating it as a regular task, the pressure will lessen, and there will be a sense of control,” says Zaporozhets. “It's important not to put technical debt on hold. I teach my teammates that each team member must remember to take a systematic approach to technical debt and take initiative. When the whole team works together on this, they will realize that technical debt is not so scary, and controlling the backlog will become a routine task.”

New Orleans CIO Kimberly LaGrue Discusses Cyber Resilience

Cities are engrossed in the business of delivering services to constituents. But appreciating that a cyber interruption could knock down a city makes everyone think about that differently. In our cyberattack, we had the support of the mayor, the chief administrative officer and the homeland security office. The problem was elevated to those levels, and we were grateful that they appreciated the importance of the challenges. The most integral part of a good resilience strategy for government, especially for city government, is for city leaders to pay attention to it and buy into the idea that these are real threats, and they must be addressed. ... We learned of cyberattacks across the state through Louisiana’s fusion center. They were very active, very vocal about other threats. We gained a lot of insights, a lot of information, and they were on the ground helping those agencies to recover. The state had almost 200 volunteers in its response arsenal, led by the Louisiana National Guard and the state of Louisiana’s fusion center. During our cyberattack, the group of volunteers that was helping other agencies came from those events straight to New Orleans for our event.

How cyber insurance shapes risk: Ascension and the limits of lessons learned

As research has supported, simple cost-benefit conditions among victims incentivize immediate payment to cyber criminals unless perfect mitigation with backups is possible and so long as the ransom is priced to correspond with victim budgets. Any delay incurs unnecessary costs to victims, their clients, and, cumulatively, to the insurer. The result is the rapid payment posture mentioned above. The singular character of cyber risk for these companies also sets limits on the lessons that can be learned for the average CISO working to safeguard organizations across the vast majority of America’s private enterprise. ... CISOs across the board should support firmer discussions with the federal government about increasingly strict and even punitive rules for limiting the payout of criminal fees. Limiting criminal incident payouts would remove the incentives for consistent high-tempo strikes on major infrastructure providers, which the federal government could compensate for in the near term by providing better resources for Sector Risk Management Agencies and beginning to resolve the abnormal dynamics surrounding the insurer-critical infrastructure relationship.

Transform, don't just change: Palladium India’s Neha Zutshi

The world of work is evolving rapidly, and HR is at the forefront of this transformation. One of the biggest challenges we face is managing change effectively, as poorly planned and communicated changes often meet resistance and fail. To navigate this, organisations must build the capability to manage change quickly and efficiently. This involves fostering an agile, learning culture where adaptability is valued, and employees are encouraged to embrace new ways of working. Upskilling and reskilling are critical in this process, ensuring that our workforce remains relevant and equipped to handle emerging challenges. ... Technology and AI are pervasive, permeating every industry, and HR is no exception. Various aspects of AI, such as machine learning and digital systems, have streamlined HR processes and automated mundane tasks. However, even though there are early adopter advantages, it is crucial to assess the need and risks related to adopting innovative HR technologies. Policy and ethical considerations must be addressed when adopting these technologies. Clear policies governing confidentiality, fairness, and accuracy are essential to ensure a smooth transition.

Quote for the day:

"Successful and unsuccessful people do not vary greatly in their abilities. They vary in their desires to reach their potential." -- John Maxwell
