The tone at the top should drive a risk-aware culture. Top management should ensure that strategies, business models, and processes are looked at collectively through the lens of risk management and that buy-in is achieved across all levels. For this to happen, the one thing that organisations should consciously avoid is people working in silos. Risk management is not a standalone function but a collaborative effort to ensure the enterprise is risk-mature and resilient. Well-thought-out training programs with case studies of successes and failures should be showcased so the entire team understands risk exposures and their impact. Training should be an ongoing process because what worked well historically may not remain relevant in today's dynamic business environment. Adaptability and agility are key cultural traits that top management must foster. While strategies and processes flow from the top, the bottom-up feedback loop is equally important for understanding how processes work in practice in the trenches.

Synthetic ID Fraud Rises 98% in Auto Lending Industry

It is important to note that more than ever, data breaches are targeting insurance in healthcare and government, but the same data is being used in other industries. This emerging trend in auto fraud can be attributed to the appeal of high credit limits and the ease of securing online auto loans without having to visit dealerships in person. At the same time, the practice of credit washing by a few credit repair companies is prevalent. Credit washing involves systematically disputing all negative tradelines on a credit report not as reporting errors but as outright identity theft. ... Not all fraudulent activities in the auto lending industry involve complex schemes such as synthetic identity fraud. Often, borrowers inflate their income or misrepresent their financial status to enhance their chances of securing a loan. Fraudsters also use shell companies to create false employment verifications. The report identifies over 11,000 fake companies circulating within the industry. Although seemingly harmless, 40% of loans secured with a fake employer result in charge-offs by borrowers who never intended to repay.

What is a digital twin and why is it important to IoT?

The terms simulation and digital twin are often used interchangeably, but they are different things. A simulation is designed with a CAD system or similar platform, and can be put through its simulated paces, but may not have a one-to-one analog with a real physical object. A digital twin, by contrast, is built out of input from IoT sensors on real equipment, which means it replicates a real-world system and changes with that system over time. ... Just as digital twins serve different purposes in different industries, the value of digital twins differs depending on the application. In the world of manufacturing, for example, a digital twin can enable product designers to try out prototypes before settling on a final design. It’s a way to use digital resources to develop and refine products instead of tapping physical engineering resources. With a digital replica of a product that simulates the real thing in a virtual space, designers can rapidly generate new iterations, optimize their product designs, and improve product quality along the way. In the semiconductor industry, digital twins can exist in the cloud and replace physical research models.
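To make the distinction concrete, here is a minimal sketch of a digital twin whose state is driven by sensor readings rather than by a design-time model. Everything in it is an assumption for illustration: the `PumpTwin` class, the reading format, and the 80 °C threshold are hypothetical and do not come from the article or any particular IoT platform.

```python
from dataclasses import dataclass, field


@dataclass
class PumpTwin:
    """Hypothetical digital twin of one pump; mirrors the latest sensor state."""
    pump_id: str
    temperature_c: float = 0.0
    vibration_mm_s: float = 0.0
    history: list = field(default_factory=list)

    def ingest(self, reading: dict) -> None:
        """Update the twin from one sensor reading (assumed dict format)."""
        self.temperature_c = reading["temperature_c"]
        self.vibration_mm_s = reading["vibration_mm_s"]
        self.history.append(reading)

    def needs_inspection(self, max_temp_c: float = 80.0) -> bool:
        """Flag the real pump when the mirrored temperature exceeds a threshold."""
        return self.temperature_c > max_temp_c


# Illustrative readings; a real deployment would receive these from IoT sensors.
twin = PumpTwin("pump-7")
for reading in [
    {"temperature_c": 61.5, "vibration_mm_s": 2.1},
    {"temperature_c": 84.0, "vibration_mm_s": 3.4},
]:
    twin.ingest(reading)

print(twin.needs_inspection())  # True: the latest mirrored temperature is 84.0
```

The point of the sketch is that the twin's state changes only when the physical equipment reports new data, whereas a pure simulation would generate its own inputs.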

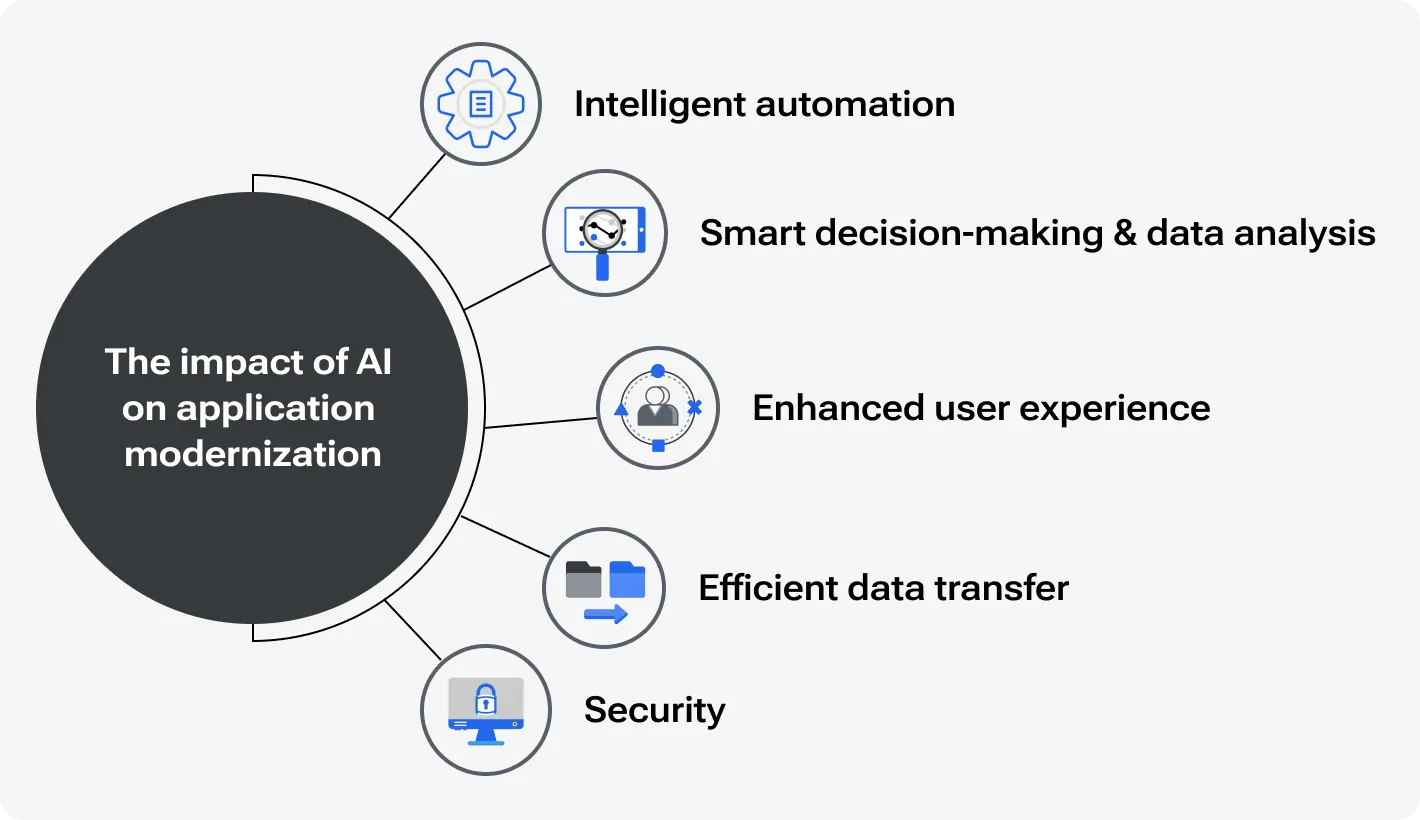

How does Artificial Intelligence Impact the Modernization of Legacy Applications?

Over their years of existence, legacy apps accumulate not only technical debt but also interest on it, which significantly complicates future code optimization: the more the application is used, the more updates it receives, and the more technical debt it eventually accumulates. AI-powered assistance makes refactoring much easier, helping to identify code duplication, excess memory consumption, or other resource usage. To improve app performance, AI can offer code quality enhancements, generate unit test cases, or in some cases refactor parts of monolithic code into composable components. ... Most legacy applications cannot compete with modern ones due to complex, unclear, or confusing architecture that requires additional effort for new integrations and maintenance. AI-powered analysis tools can explore the existing architecture, identify pitfalls and weaknesses, and suggest possible solutions. These can include moving to reliable and cost-efficient cloud-based storage, transitioning to microservices, or replacing outdated components. ... Generative AI identifies bottlenecks and offers a suitable solution for handling high workloads. This can include new configurations for load balancers and algorithms to optimize traffic distribution.

Cybersecurity in a Race to Unmask a New Wave of AI-Borne Deepfakes

Mandia warns that the next wave of AI-generated audio and video will be especially tough to detect as phony. "What if you have a 10-minute video and two milliseconds of it are fake? Is the technology ever going to exist that's so good to say, 'That's fake'? We're going to have the infamous arms race, and defense loses in an arms race." Cyberattacks overall have become more costly financially and reputation-wise for victim organizations, Mandia says, so it's time to flip the equation and make it riskier for the threat actors themselves by doubling down on sharing attribution intel and naming names. "We've actually gotten good at threat intelligence. But we're not good at the attribution of the threat intelligence," he says. The model of continuously putting the burden on organizations to build up their defenses is not working. "We're imposing cost on the wrong side of the hose," he says. Mandia believes it's time to revisit treaties with the safe harbors of cybercriminals and to double down on calling out the individuals behind the keyboard and sharing attribution data in attacks.

Navigating the AI Revolution: Strategies for Success in 2024

As the AI landscape evolves, it becomes evident that there is no one-size-fits-all solution. Organizations will need to adopt a multimodel approach, incorporating a variety of models tailored to specific industries, domains, and use cases. Shawn suggests: "Don't get distracted by a particular LLM brand. Saying ChatGPT is better than Claude, and this one's better than Meta, and so on and so forth, depends on your use case. You're going to end up having multiple models in your environment to achieve different business goals. In addition, models will continue to evolve." ... The rapid advancement of AI has sparked global discussions about regulations, compliance, and ethics. To ensure compliance, familiarize yourself with the European Union AI Act, the National Institute of Standards and Technology (NIST) guidelines, and other relevant regulations. However, it is crucial to prioritize responsible AI practices beyond mere compliance. This involves addressing data privacy, security, human-AI collaboration, and transparency.

Global alarm intensifies as state-sponsored cyberattacks raise risks

“One key factor has been the expansion of connected systems due to the IT/OT convergence, where organizations are having their OT cybersecurity roll under central IT structures. Another factor has been the wider adoption of remote access driven after COVID,” Harshal Haridas, chief architect for Honeywell OT Cybersecurity, told Industrial Cyber. “A lot of attacks involve malware that are often deployed via USB devices. State-sponsored hackers are also using AI to enable more of their capabilities in penetrating sensitive systems.” Bryce Livingston, a senior adversary hunter at Dragos, said that the perceived surge in cyberattacks can likely be attributed to several interconnected factors: elevated geopolitical tensions in multiple regions across the globe, in addition to continued growth in the global cybercriminal ecosystem, where we see specialized criminal economies of scale emerging. “This specialization has lowered the barrier to entry for engaging in cybercrime.” Additionally, Livingston pointed out that “we see the increasing use of cyberattacks by hacktivist personas to influence perceptions around certain events...”

How to Build and Foster High-performing Software Teams

As a leader, you should establish channels for regular communication and collaboration between teams. This could involve project management tools, regular meetings, etc. For us, what works well are informal social events (small and big). Transparency builds trust and helps teams anticipate roadblocks or opportunities to collaborate. It is of course easier said than done, but defining clear, measurable goals for each team that contribute to the overall organizational objectives is a real stepping stone to successful leadership. If there is an opportunity, consider establishing a central coordination team or committee. For instance, a recognition committee that will be responsible for recognizing the achievements of the team members. The most effective strategy will depend on the specific teams, their work styles, and the overall culture. I always focus on empowering teams to achieve the desired outcomes, not micromanaging them. Finally, celebrate successes achieved through collaboration and install policies that reward such successes. This reinforces the value of teamwork and motivates further collaboration across your teams.

Chinese State-Backed Hackers Suspected in Third Party Breach Impacting UK Armed Forces

The full details of the exposed UK armed forces data have yet to be made public, but in addition to triggering an investigation the third party breach also prompted the Ministry of Defence to announce an “eight point plan” to identify security failings and prevent such incidents from happening again. The Ministry did indicate that there was “evidence of potential failings” at SSCL that the hackers took advantage of, but did not elaborate on whether that means an unpatched vulnerability or an employee falling for a phishing approach. It is also not yet clear what the Chinese government would want with UK armed forces payroll data. For the most part these APT groups stick to espionage and theft of beneficial corporate secrets, but generally stop short of taking money or running financial scams. Some of the Chinese APT groups are private sector contractors, however, and several have been observed targeting crypto or other funds seemingly as a side activity for their own benefit. Tom Lysemose Hansen, CTO of Promon, elaborates on what this stolen data might be used for: “Nothing and nobody is unhackable, that’s the lesson from this.

AI within the data center

AI demands even greater computing capacity, higher-capacity data centers, and more energy than legacy applications, all of which lead to greater environmental impacts. Yet, amidst these challenges, there is hope. There is a strong and growing emphasis on standards and consumer preferences for companies that embrace sustainability. By uniting sustainability and the new high-density AI applications, we can pave the way for data center innovation with construction and operational breakthroughs. This approach embraces AI's higher demands and promotes sustainability, offering a promising path forward. Historically, sustainability efforts were a corporate nod to investors and a small faction of society that prioritized these ideas. As time has passed, sustainability has become a critical planning factor, integrating sustainability, finance, and business strategy. Part of the growing sustainability movement is due to impending rules and regulations; part is from societal pressure from those who put their dollars where their ecological priorities lie. Furthermore, part is corporate awareness that businesses must consider climate change in their business strategies, and part, we must believe, is genuinely altruistic.

Quote for the day:

"Smart leaders develop people who develop others, don't waste your time on those who won't help themselves."

-- John C Maxwell
