AI advancements are fueling cloud infrastructure spending

The IDC report offers insights into the evolving landscape of cloud deployment

infrastructure spending, explicitly focusing on AI. I’m not sure that anyone

will push back on that. However, there are some other market dynamics that we

should be paying attention to, namely: Tech leaders’ rapid deployment of AI

capabilities is changing infrastructure requirements, emphasizing the need for

specialized, high-performance hardware. However, this will likely translate

quickly into demand for storage and databases, which are more critical to AI than

processing. Who would have thunk? The shift towards GPU-heavy servers at higher

price points but fewer units sold reflects the evolving market dynamics

influenced by the priorities of cloud providers and enterprise tech behemoths.

As I pointed out, this could be a false objective that leads many, including the

cloud providers, down the wrong path. ... The significant uptick in cloud

infrastructure spending underscores a robust investment in AI-related

capabilities, which has far-reaching implications for technology and business

landscapes.

How to develop your skillset for the AI era

Grounded in a rich understanding of the broader context and enhanced by a

diverse skill set, building specialization will ensure that engineers can bring

unique insights, creativity, and solutions that AI cannot. It's the intersection

of depth and breadth in an engineer's expertise that will define their

irreplaceability in an AI-driven world. This is where Roger Martin's Doctrine of

Relentless Utility comes into play, a career strategy that focuses on finding

your niche and monopolizing it. As you become more adept at navigating between

different roles and perspectives, you'll be better positioned to uncover unique

opportunities where your particular blend of skills and interests intersect with

unmet needs within your team or organization. Aligning what you're good at with

areas where you can make a significant impact allows you to establish a

distinctive role that plays to your strengths and passions. This strategy

promotes an active, value-driven approach, looking for ways to contribute beyond

the usual scope of your role. Your niche could be bridging the gap between

advanced technical knowledge and non-technical stakeholders or clients.

A phish by any other name should still not be clicked

The proper way for enterprises to reach out on these matters is something

like, “There is a new billing matter that requires your attention. Please log

into your portal and look into it.” Why don’t most enterprises do that? Some

blame a lack of training — and there is absolutely a lot of truth in that.

But, it’s often quite deliberate and intentional. More responsible enterprises

have tried doing this the proper way, but too many customers complained along

the lines of, “Do you know how many portals I have to deal with? Give me a

link to the portal you want me to use.” ... This gets us right back to the

security-vs.-convenience nightmare. This problem is complicated because the

situation is two-step. It’s not that the customer will be hurt if they click

on your link. It’s that you’re inadvertently making them comfortable with

clicking on an unknown link and they might get hurt two days from now when

they encounter an actual phishing attack email. Will the enterprise be held

liable, especially if you can’t prove the victim clicked because of what was

sent? It gets even worse. The old advice used to be to mouse over suspicious

links and make sure they’re legitimate. Today, that advice doesn’t

work.

How to keep humans in charge of AI

First, let users choose guardrails through the marketplace. We should

encourage a large multiplicity of fine-tuned models. Different users,

journalists, religious groups, civil organizations, governments and anyone

else who wants to should be able to easily create customized versions of

open-source base models that reflect their values and add their own preferred

guardrails. Users should then be free to choose their preferred version of the

model whenever they use the tool. This would allow companies that produce the

base models to avoid, to the extent possible, having to be the “arbiters of

truth” for AI. While this marketplace for fine-tuning and guardrails will

lower the pressure on companies to some extent, it doesn’t address the problem

of central guardrails. Some content — especially when it comes to images or

video — will be so objectionable that it can’t be allowed across any

fine-tuned models the company offers. ... How can companies impose centralized

guardrails on these issues that apply to all the different fine-tuned models

without coming right back to the politics problem Gemini has run head-long

into?

Managers tend to target loyal workers for exploitation, study finds

The researchers hypothesized that managers might view loyal employees as

more exploitable and so target them for exploitation. Alternatively, they

considered whether managers might protect loyal workers to retain their

allegiance. Four studies were conducted with participants ranging from 211

to 510 full-time managers, recruited via Prolific. In the first study,

managers were split into three groups, with the first group reading about

a loyal employee named John. The survey then described scenarios requiring

someone to work overtime or perform uncomfortable tasks without

compensation, querying the likelihood of assigning John to these tasks.

The second and third groups underwent similar procedures, but with John

described as either disloyal or without any characterization. All

participants assessed John’s willingness to make personal sacrifices. ...

“Given that workers who agree to participate in their own exploitation

also acquire stronger reputations for loyalty, the bidirectional causal

links between loyalty and exploitation have the potential to create a

vicious circle of suffering for certain workers.” The study sheds light on

the relationship between workers’ loyalty and behaviors of

managers.

Mastering the CISO role: Navigating the leadership landscape

CISOs must also cultivate stronger partnerships with their C-suite

counterparts. IDC’s survey revealed discrepancies in how CISOs and CIOs

perceive the CISO’s role, underscoring the need for better alignment.

Creed recounted a recent example where the Allegiant Travel board made

decisions about connected aircraft without involving the CISO, leading to

a last-minute “fire drill” to address cyber security requirements. “Do you

think the board, when they first started talking of going down this path

of ‘we’re going to expand the fleet’, considered that there might be

security implications in that?” he asked. ... To bridge this gap, CISOs

must proactively educate executives on the business implications of

security risks and advocate for a seat at the strategic decision-making

table. As Russ Trainor, Senior Vice President of IT at the Denver Broncos,

suggested, “Sometimes I’ll forward news of the breaches over to my CFO:

here’s how much data was exfiltrated, here’s how much we think it cost.

Those things tend to hit home.” The evolving CISO role demands a delicate

balance of technical expertise, business acumen, and communication

prowess.

How companies are prioritising employee health for organisational success

The HR folks have a critical role in implementing wellness initiatives,

believes Ritika. “Fostering a supportive work culture, providing resources

for physical and mental health, and advocating for policies that

prioritise employee well-being to attract and retain talent effectively

are the key responsibilities of HR

leaders.” According to Ritika, investing in employee health and well-being

is not just a commitment but a cornerstone of organisational ethos. The

Human Resource (HR) department plays a pivotal role in promoting and

protecting the health of employees within an organisation. As per a

report, an alarming 43% of Indian tech workers encounter health issues

directly linked to their job responsibilities. Additionally, the study

indicates that these health issues go beyond physical ailments, with

almost 45% of respondents facing mental health challenges like stress,

anxiety, and depression. Samra Rehman, Head of People and Culture at Hero

Vired, says that HR leaders are responsible for establishing policies and

programs that prioritise employee well-being, such as implementing health

insurance plans, offering gym memberships or fitness classes, and

organising wellness workshops.

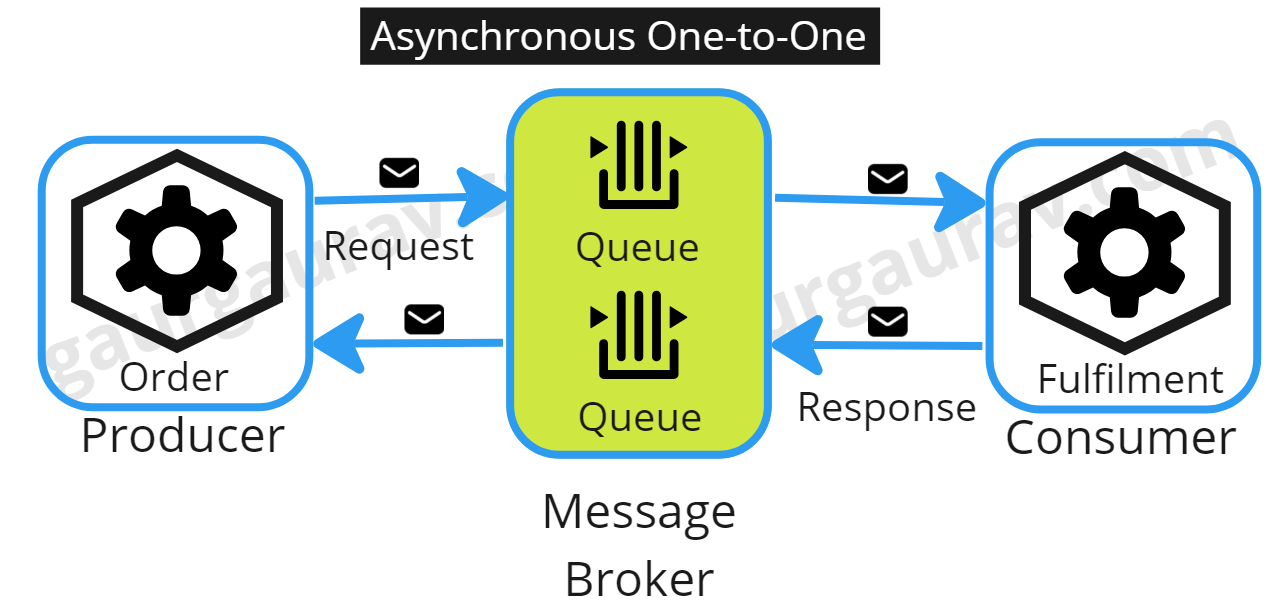

Decoding Synchronous and Asynchronous Communication in Cloud-Native Applications

The choice between synchronous and asynchronous communication patterns is

not binary but rather a strategic decision based on the specific

requirements of the application. Synchronous communication is easy to

implement and provides immediate feedback, making it suitable for

real-time data access, orchestrating dependent tasks, and maintaining

transactional integrity. However, it comes with challenges such as

temporal coupling, availability dependency, and network quality impact. On

the other hand, asynchronous communication allows a service to initiate a

request without waiting for an immediate response, enhancing the system’s

responsiveness and scalability. It offers flexibility, making it ideal for

scenarios where immediate feedback is not necessary. However, it

introduces complexities in resiliency, fault tolerance, distributed

tracing, debugging, monitoring, and resource management. In conclusion,

designing robust and resilient communication systems for cloud-native

applications requires a deep understanding of both synchronous and

asynchronous communication patterns.
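
To make the contrast concrete, here is a minimal sketch in Python. It is not taken from the article: `price_service`, `order_worker`, and `run_async_demo` are hypothetical names, and an in-process `asyncio.Queue` stands in for whatever message broker a real cloud-native system would use. The synchronous call blocks until the reply arrives (temporal coupling), while the asynchronous publisher hands off its messages and carries on before any of them have been processed.

```python
import asyncio
import time


def price_service(item):
    """Hypothetical downstream service; the sleep simulates network latency."""
    time.sleep(0.01)
    return {"item": item, "price": 42}


def call_sync(handler, request):
    """Synchronous style: the caller blocks until the handler returns."""
    return handler(request)


async def order_worker(queue, processed):
    """Consumer side: handles messages whenever they arrive on the queue."""
    while True:
        message = await queue.get()
        await asyncio.sleep(0.01)  # simulated processing time
        processed.append(message)
        queue.task_done()


async def run_async_demo(orders):
    """Asynchronous style: publish without waiting for each message to be handled."""
    queue = asyncio.Queue()  # stand-in for a message broker (assumption)
    processed = []
    worker = asyncio.create_task(order_worker(queue, processed))

    for order in orders:
        queue.put_nowait(order)  # returns immediately, no reply expected

    # The publisher is already done; the worker has not handled anything yet.
    processed_at_publish = len(processed)

    await queue.join()  # wait here only so the demo can report the outcome
    worker.cancel()
    try:
        await worker
    except asyncio.CancelledError:
        pass
    return processed_at_publish, processed


if __name__ == "__main__":
    print(call_sync(price_service, "book"))
    print(asyncio.run(run_async_demo(["a", "b", "c"])))
```

The sketch also hints at the trade-off described above: the asynchronous path needs extra machinery (a queue, a worker, shutdown handling) that the synchronous call does not.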

Hackers Use Weaponized PDF Files to Deliver Byakugan Malware on Windows

Because PDF files are widely trusted and popular, hackers frequently use

weaponized PDFs as attack vectors. Even PDFs can contain harmful

code or exploits that abuse flaws in PDF readers. Once an unsuspecting user

opens the malicious PDF, the payload runs and infiltrates

the system. ... FortiGuard Labs discovered a Portuguese PDF file

distributing the multi-functional Byakugan malware in January 2024. The

malicious PDF tricks people into clicking a link by presenting a blurred

table. Clicking the link activates a downloader that drops a copy of itself

(require.exe) and fetches a DLL for DLL hijacking. This runs require.exe to retrieve

the main module (chrome.exe). In particular, the downloader behaves

differently when it is named require.exe and located in the temp folder; the evasion intent is

evident.

Cybercriminal adoption of browser fingerprinting

While browser fingerprinting has been used by legitimate organizations to

uniquely identify web browsers for nearly 15 years, it is now also

commonly exploited by cybercriminals: a recent study shows one in four

phishing sites using some form of this technique. ... Browser

fingerprinting uses a variety of client-side checks to establish browser

identities, which can then be used to detect bots or other undesirable web

traffic. Numerous pieces of data can be collected as a part of

fingerprinting, including: time zone; language settings; IP

address; cookie settings; screen resolution; browser

privacy; user-agent string. Browser fingerprinting is used by

many legitimate providers to detect bots misusing their services and other

suspicious activity, but phishing site authors have also realized its

benefits and are using the technique to avoid automated systems that might

flag their website as phishing. By implementing their own browser

fingerprinting controls before loading their site content, threat actors are able

to conceal phishing content in real-time. For example, Fortra has observed

threat actors using browser fingerprinting to bypass the Google Ad review

process.
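
As a rough illustration of how the attributes listed above can be combined into a single browser identity, here is a minimal Python sketch. It is an assumption-laden example, not the method used by Fortra, by any fingerprinting vendor, or by any phishing kit: the field names in `FINGERPRINT_FIELDS` and the `fingerprint` function are hypothetical, and real systems collect the values with client-side scripts and typically use many more signals.

```python
import hashlib
import json

# Hypothetical field names mirroring the attributes listed in the text.
FINGERPRINT_FIELDS = (
    "time_zone",
    "language",
    "ip_address",
    "cookies_enabled",
    "screen_resolution",
    "user_agent",
)


def fingerprint(attributes):
    """Return a stable hex digest for a dict of collected browser attributes."""
    # Keep only the known fields so extra keys do not change the result.
    canonical = {field: attributes.get(field) for field in FINGERPRINT_FIELDS}
    # Sorted keys and fixed separators make the serialization deterministic.
    payload = json.dumps(canonical, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


if __name__ == "__main__":
    visitor = {
        "time_zone": "Europe/Lisbon",
        "language": "pt-PT",
        "ip_address": "203.0.113.7",  # documentation-range address
        "cookies_enabled": True,
        "screen_resolution": "1920x1080",
        "user_agent": "ExampleBrowser/1.0",
    }
    print(fingerprint(visitor))
```

A site can compare such a digest against known values to decide what to serve, which is why the same idea works both for bot detection and for hiding phishing content from automated scanners.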

Quote for the day:

"What you do has far greater

impact than what you say." -- Stephen Covey