Quote for the day:

"Success does not consist in never making mistakes but in never making the same one a second time." -- George Bernard Shaw

Expectations from AI ramp up as investors eye returns in 2026

The Next S-Curve of Cybersecurity: Governing Trust in a New Converging Intelligence Economy

Cybersecurity has crossed a threshold where it no longer merely protects

technology; it governs trust itself. In an era defined by AI-driven

decision-making, decentralized financial systems, cloud-to-edge computing, and

the approaching reality of quantum disruption, cyber risk is no longer episodic

or containable. It is continuous, compounding, and enterprise-defining. What

changed in 2025 wasn’t just the threat landscape. It was the architecture of

risk. Identity replaced networks as the dominant attack surface. Software supply

chains emerged as systemic liabilities. Machine intelligence, on both sides of

the attack, began evolving faster than the controls designed to govern it. For

boards, investors, and executives, this marked the end of cybersecurity as a

control function and the beginning of cybersecurity as a strategic mandate. ...

The next S-curve of cybersecurity is not driven by better tooling. It is driven

by a shift in how trust is architected and governed across a converging

ecosystem. This new curve is defined by: Identity-centric security rather than

network-centric defense; Data-aware protection instead of application-bound

controls; Continuous assurance rather than point-in-time audits; and

Integration with enterprise risk, governance, and capital strategy. Cybersecurity

evolves from a defensive posture into a trust architecture discipline, one that

governs how intelligence, identity, data, and decisions interact at scale.

Why Mental Fitness Is Leadership's Next Frontier

The distinction Craze draws between mental health and mental fitness is crucial.

Mental health, he explains, is ultimately about functioning—being sufficiently

free from psychological injury or mental illness to show up and perform one's

job. "Your mental health or illness is a private matter between yourself, and

perhaps your family or physician, and is a matter of respecting your individual

rights," he says. Mental fitness, by contrast, is about capacity. "Assuming you

are mentally healthy enough to show up and perform your job, then mental fitness

is all about how well your mind performs under load, over time, and in

conditions of uncertainty," Craze explains. "Being mentally healthy is a

baseline. Being mentally fit is what allows leaders to think clearly at hour

ten, stay composed in conflict, and recover quickly after setbacks rather than

slowly eroding away," he says. Here, the comparison to elite athletics is

instructive. In professional sports, no one confuses being injury-free with

being competition-ready. Leadership has been slower to make that distinction,

even as today’s executives face sustained cognitive and emotional demands that

would have been unthinkable a generation ago. ... One of the most persistent

myths in leadership development, according to Craze, is the idea that thinking

happens in some abstract cognitive space, detached from the body. "In reality,

every act of judgment, attention and self-control has an underlying

physiological component and cost," he says.

Taking the Technical Leadership Path

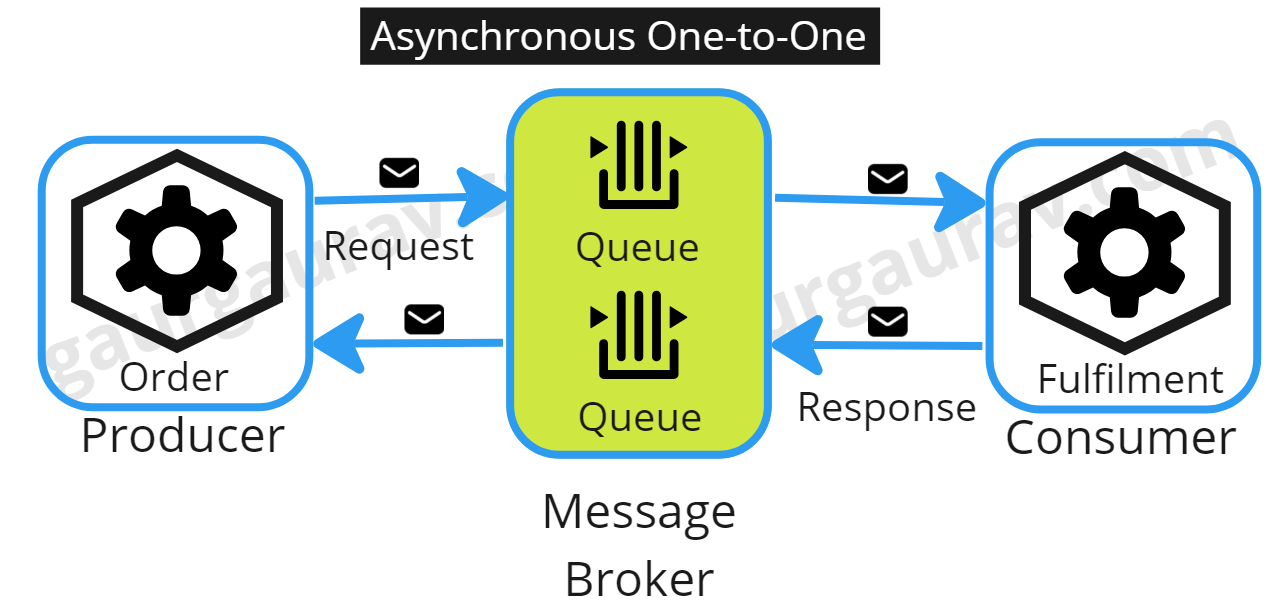

Without technical alignment, individuals constantly touch the same codebase,

adding their feature in the simplest way (for them), often without ensuring

the codebase is kept consistent. Over time, accidental complexity grows, such

as having five different libraries that do the same job or seven different

implementations of how an email or push notification is sent. When someone

wants to make a future change to that area, their work is now much harder.

... There are plenty of resources available to develop

leadership skills. Kua advised breaking broader leadership skills into

specific ones, such as coaching, mentoring, communicating, mediating,

influencing, etc. Even when someone is not a formal leader, there are daily

opportunities to practice these skills in the workplace, he said. ... Formal

technical leaders are accountable for ensuring teams have enough technical

leadership. One way of doing this is to cultivate an environment where

everyone is comfortable stepping up and demonstrating technical leadership.

When you do this well, this means everyone can demonstrate informal technical

leadership. Formal leaders exist because not all teams are automatically

healthy or high-performing. I’m sure every technical person can remember a

team they’ve been on with two engineers constantly debating about which

approach to take, and wish someone had stepped in to help the team reach a

decision. In an ideal world, a formal leader wouldn’t be necessary, but it’s

rare that teams live in the perfect world.

From model collapse to citation collapse: risks of over-reliance on AI in the academy

Model collapse is the slow erosion of a generative AI system's grounding in reality

as it learns more and more from machine-generated data rather than from

human-generated content. As a result of model collapse, the AI model loses

diversity in its outputs, reinforces its misconceptions, increases its

confidence in its hallucinations and amplifies its biases. ... Among all the

writing tasks involved in research, GenAI appears to be disproportionately good

at writing literature reviews. ChatGPT and Google Gemini both have deep research

features that try to take a deep dive into the literature on a topic, returning

heavily sourced and relatively accurate syntheses of the related research, while

typically avoiding the well-documented tendency to hallucinate sources

altogether. In some ways, it should not be too surprising that these

technologies thrive in this area because literature reviews are exactly the sort

of thing GenAI should be good at: textual summaries that stay pretty close to

the source material. But here is my major concern: while nothing is

fundamentally wrong with the way GenAI surfaces sources for literature reviews,

it risks exacerbating the citation Matthew effect that tools like Google Scholar

have caused. Modern AI models largely thrive on a snapshot of the internet circa

2022. In fact, I suspect that verifiably pre-2022 datasets will become prized

sources for future models, largely untainted by AI-generated content, in much

the same way that pre-World War II steel is prized for its lack of radioactive

contamination from nuclear testing.
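
The loss of output diversity described at the start of this excerpt can be illustrated with a toy simulation. This is only a sketch under a strong assumption: resampling with replacement stands in for a model that learns solely from the previous generation's output. It is not how a real generative model is trained, and `simulate_collapse`, `pool_size`, and `generations` are illustrative names rather than anything from the article.

```python
import random


def simulate_collapse(pool_size=50, generations=30, seed=0):
    """Toy model: each generation learns only from the previous generation's output.

    Resampling with replacement is an illustrative stand-in for training on
    machine-generated data; it is an assumption, not a real training procedure.
    """
    rng = random.Random(seed)
    data = list(range(pool_size))  # generation 0: fully diverse "human" data
    distinct_counts = [len(set(data))]
    for _ in range(generations):
        # The next generation sees only samples drawn from the current output.
        data = [rng.choice(data) for _ in range(pool_size)]
        distinct_counts.append(len(set(data)))
    return distinct_counts


counts = simulate_collapse()
print(counts[0], "distinct items at the start,", counts[-1], "after resampling")
```

Because every new generation can only contain items that were present in the previous one, the number of distinct items never grows. Rare items that happen to be missed once are gone for good, which is the same one-way loss the excerpt warns about.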

Why is Debugging Hard? How to Develop an Effective Debugging Mindset

Here’s how most developers debug code: Something is broken; Let me change the line; Let’s refresh (wishing the error would go away); Hmm… still broken!; Now, let me add a console.log(); Let me refresh again (Ah, this time it may…); Ok, looks like this time it worked! This is reaction-based debugging. It’s like throwing a stone in the dark or finding a needle in a haystack. It feels busy, it sounds productive, but it’s mostly guessing. And guessing doesn’t scale in programming. This approach and the guessing mindset make debugging hard for developers. The lack of a methodology and solid approach makes many devs feel helpless and frustrated, which makes the process feel much more difficult than coding. This is why we need a different mental model, a defined skillset to master the art of debugging. ... Good debuggers don’t fight bugs. They investigate them. They don’t start with the mindset of “How do I fix this?”. They start with, “Why must this bug exist?” This one question changes everything. When you ask about the existence of a bug, you go back to the history to collect information about the code, its changes, and its flow. Then, you feed this information through a “mental model” to make decisions that lead you to the fix. ... Once the facts are clear and assumptions are visible, the debugging makes its way forward. Now you’ll need to form a hypothesis. A hypothesis is a simple cause-and-effect statement: If this assumption is wrong, then the behaviour makes sense. If not, provide a fix.

Promptware Kill Chain – Five-Step Kill Chain Model for Analyzing Cyberthreats

While the security industry has focused narrowly on prompt injection as a

catch-all term, the reality is far more complex. Attacks now follow systematic,

sequential patterns: initial access through malicious prompts, privilege

escalation by bypassing safety constraints, establishing persistence in system

memory, moving laterally across connected services, and finally executing their

objectives. This mirrors how traditional malware campaigns unfold, suggesting

that conventional cybersecurity knowledge can inform AI security strategies. ...

The promptware kill chain begins with Initial Access, where attackers insert

malicious instructions through prompt injection—either directly from users or

indirectly through poisoned documents retrieved by the system. The second phase,

Privilege Escalation, involves jailbreaking techniques that bypass safety

training designed to refuse harmful requests. ... Traditional malware achieves

persistence through registry modifications or scheduled tasks. Promptware

exploits the data stores that LLM applications depend on. Retrieval-dependent

persistence embeds payloads in data repositories like email systems or knowledge

bases, reactivating when the system retrieves similar content. Even more potent

is retrieval-independent persistence, which targets the agent’s memory directly,

ensuring the malicious instructions execute on every interaction regardless of

user input.
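
One way to make the five stages above concrete is to treat them as an ordered enumeration and ask how far along the chain an incident has progressed. The sketch below is an assumption-laden illustration: the stage names paraphrase the excerpt, while the observation labels and the `furthest_stage` helper are hypothetical and do not come from the article or from any real detection product.

```python
from enum import IntEnum


class Stage(IntEnum):
    """The five stages named in the excerpt, in order."""
    INITIAL_ACCESS = 1
    PRIVILEGE_ESCALATION = 2
    PERSISTENCE = 3
    LATERAL_MOVEMENT = 4
    OBJECTIVE_EXECUTION = 5


# Hypothetical observation labels, invented purely for illustration.
OBSERVATION_TO_STAGE = {
    "direct_prompt_injection": Stage.INITIAL_ACCESS,
    "injected_instruction_in_retrieved_doc": Stage.INITIAL_ACCESS,
    "jailbreak_bypassed_refusal": Stage.PRIVILEGE_ESCALATION,
    "payload_stored_in_knowledge_base": Stage.PERSISTENCE,  # retrieval-dependent
    "payload_written_to_agent_memory": Stage.PERSISTENCE,   # retrieval-independent
    "instruction_forwarded_to_connected_service": Stage.LATERAL_MOVEMENT,
    "objective_executed": Stage.OBJECTIVE_EXECUTION,
}


def furthest_stage(observations):
    """Return the latest kill-chain stage seen, or None if nothing is recognized."""
    stages = [OBSERVATION_TO_STAGE[o] for o in observations if o in OBSERVATION_TO_STAGE]
    return max(stages) if stages else None


incident = [
    "injected_instruction_in_retrieved_doc",
    "jailbreak_bypassed_refusal",
    "payload_written_to_agent_memory",
]
print(furthest_stage(incident).name)  # PERSISTENCE
```

Ordering the stages lets a responder reason the way they would about conventional malware: an incident that has reached persistence needs memory and data-store cleanup, not just a blocked prompt.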

AI SOC Agents Are Only as Good as the Data They Are Fed

If your telemetry is fragmented, your schemas are inconsistent, or your context

is missing, you won’t get faster responses from AI SOC agents. You’ll just get

faster mistakes. These agents are being built to excel at cybersecurity analysis

and decision support. They are not constructed to wrangle data collection,

cleansing, normalization, and governance across dozens of sources. ... Modern

SOCs integrate telemetry from EDRs, cloud providers, identity, networks, SaaS

apps, data lakes, and more. Normalizing all that into a common schema eliminates

the constant “translation tax.” An agent that can analyze standardized fields

once, and doesn’t have to re-learn CrowdStrike vs. Splunk Search Processing

Language vs. vendor-specific JavaScript Object Notation, will make faster, more

reliable decisions. ... If the agent must “crawl back” into five source systems

to enrich an alert on its own, latency spikes and success rates drop. The right

move is to centralize, normalize, and clean security data into an accessible

store, like a data lake, for your AI SOC agents and continue streaming a

distilled, security-relevant subset to the Security Information and Event

Management (SIEM) platform for detections and cybersecurity analysts. Let the

SIEM be the place where detections originate; let the lake be the place your

agents do their deep thinking. The problem is that the industry’s largest SIEM,

Endpoint Detection and Response (EDR), and Security Orchestration, Automation,

and Response (SOAR) platforms are consolidating into vertically integrated

ecosystems. ...
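
The "translation tax" argument can be sketched in a few lines: map each source's payload into one common record once, so downstream analysis never touches vendor-specific fields. Everything below is an assumption made for illustration. The `NormalizedEvent` schema, both payload layouts, and the field names are hypothetical and do not reflect any real vendor's format.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class NormalizedEvent:
    """Hypothetical common schema shared by every telemetry source."""
    timestamp: datetime
    source: str
    host: str
    user: str
    action: str


def from_edr(raw: dict) -> NormalizedEvent:
    # Assumed EDR-style payload: epoch seconds and nested device info.
    return NormalizedEvent(
        timestamp=datetime.fromtimestamp(raw["event_time"], tz=timezone.utc),
        source="edr",
        host=raw["device"]["hostname"].lower(),
        user=raw["user_name"].lower(),
        action=raw["event_type"],
    )


def from_identity(raw: dict) -> NormalizedEvent:
    # Assumed identity-provider payload: ISO 8601 timestamp and flat fields.
    return NormalizedEvent(
        timestamp=datetime.fromisoformat(raw["published"]),
        source="identity",
        host=raw["client_host"].lower(),
        user=raw["actor"].lower(),
        action=raw["eventType"],
    )


edr_event = from_edr({
    "event_time": 1700000000,
    "device": {"hostname": "LAPTOP-7"},
    "user_name": "ALICE",
    "event_type": "process_start",
})
idp_event = from_identity({
    "published": "2023-11-14T22:13:25+00:00",
    "client_host": "laptop-7",
    "actor": "alice",
    "eventType": "login",
})

# After normalization, correlation is a plain field comparison.
same_entity = (edr_event.host, edr_event.user) == (idp_event.host, idp_event.user)
print(same_entity)
```

The per-source adapters run once at ingestion, so an agent querying the store compares `host` and `user` directly instead of re-learning each vendor's casing, nesting, and timestamp conventions for every alert.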

IT portfolio management: Optimizing IT assets for business value

The enterprise’s most critical systems for conducting day-to-day business are a

category unto themselves. These systems may be readily apparent, or hidden deep

in a technical stack. So all assets should be evaluated as to how

mission-critical they are. ... The goal of an IT portfolio is to contain assets

that are presently relevant and will continue to be relevant well into the

future. Consequently, asset risk should be evaluated for each IT resource. Is

the resource at risk for vendor sunsetting or obsolescence? Is the vendor itself

unstable? Does IT have the on-staff resources to continue running a given

system, no matter how good it is (a custom legacy system written in COBOL and

Assembler, for example)? Is a particular system or piece of hardware becoming

too expensive to run? Do existing IT resources have a clear path to integration

with the new technologies that will populate IT in the future? ... Is every IT

asset pulling its weight? Like monetary and stock investments, technologies

under management must show they are continuing to produce measurable and

sustainable value. The primary indicators of asset value that IT uses are total

cost of ownership (TCO) and return on investment (ROI). TCO is what gauges the

value of an asset over time. For instance, investments in new servers for the

data center might have paid off four years ago, but now the data center has an

aging bay of servers with obsolete technology and it is cheaper to relocate

compute to the cloud.
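
The server example above comes down to simple arithmetic, sketched here with the textbook forms of both metrics. The formulas are deliberately simplified (a real TCO would also count migration, staffing, and decommissioning costs), and every figure is made up for illustration rather than taken from the article.

```python
def total_cost_of_ownership(acquisition, annual_operating, years):
    """Simplified TCO: upfront cost plus operating cost over the holding period."""
    return acquisition + annual_operating * years


def return_on_investment(total_benefit, total_cost):
    """ROI as a fraction: net gain divided by cost."""
    return (total_benefit - total_cost) / total_cost


# All numbers below are hypothetical.
years = 4
on_prem_tco = total_cost_of_ownership(acquisition=200_000, annual_operating=90_000, years=years)
cloud_tco = total_cost_of_ownership(acquisition=20_000, annual_operating=110_000, years=years)
benefit = 700_000  # assumed business value delivered over the same period

print(on_prem_tco, cloud_tco)  # 560000 460000
print(round(return_on_investment(benefit, on_prem_tco), 2))  # 0.25
print(round(return_on_investment(benefit, cloud_tco), 2))    # 0.52
```

With these assumed inputs, the cloud option has higher yearly running costs but a lower four-year TCO and a better ROI, which is the kind of comparison the portfolio review is meant to surface.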
