Why adversarial AI is the cyber threat no one sees coming

Don’t settle for doing red teaming on a sporadic schedule, or worse, only when

an attack triggers a renewed sense of urgency and vigilance. Red teaming needs

to be part of the DNA of any DevSecOps supporting MLOps from now on. The goal

is to preemptively identify any system and pipeline weaknesses and work to

prioritize and harden any attack vectors that surface as part of MLOps’ System

Development Lifecycle (SDLC) workflows. ... Have a member of the DevSecOps

team stay current on the many defensive frameworks available today. Knowing

which one best fits an organization’s goals can help secure MLOps, saving time

and securing the broader SDLC and CI/CD pipeline in the process. Examples

include the NIST AI Risk Management Framework and OWASP AI Security and

Privacy Guide. ... Consider using a combination of biometric modalities,

including facial recognition, fingerprint scanning, and voice recognition,

combined with passwordless access technologies to secure systems used across

MLOps. Gen AI has proven capable of helping produce synthetic data.

300K Internet Hosts at Risk for 'Devastating' Loop DoS Attack

The attack exploits a novel traffic-loop vulnerability present in certain user

datagram protocol (UDP)-based applications, according to a post by the

Carnegie Mellon University's CERT Coordination Center. An unauthenticated

attacker can use maliciously crafted packets against a UDP-based vulnerable

implementation of various application protocols such as DNS, NTP, and TFTP,

leading to DoS and/or abuse of resources. ... The researchers put the attack

on par with amplification attacks in the volumes of traffic they can cause,

with two major differences. One is that attackers do not have to continuously

send attack traffic due to the loop behavior, unless defenses terminate loops

to shut down the self-repetitive nature of the attack. The other is that

without a proper defense, the DoS attack will likely continue for a while.

Indeed, DoS attacks are almost always about resource consumption in Web

architecture, but until now it's been extremely tricky to use this type of

attack to take a Web property completely offline because "you have to have

systems smart enough to gather an army of hosts that will call upon the victim

web architecture all at once," explains Jason Kent.
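
The loop behavior described above can be illustrated with a toy model. The sketch below is an in-memory simulation only: it sends no packets, and `ToyServer`, `replies_to_errors`, and `simulate` are hypothetical names chosen for illustration, not taken from the CERT/CC post or any real protocol implementation. It assumes the simplest form of the flaw, where each server answers an error message with another error message.

```python
"""Toy, in-memory model of an application-layer traffic loop (no network I/O)."""
from collections import deque


class ToyServer:
    """Hypothetical UDP-style service that answers bad input with an error."""

    def __init__(self, name, replies_to_errors=True):
        self.name = name
        self.replies_to_errors = replies_to_errors

    def handle(self, payload):
        # A hardened implementation stays silent when the input is itself an error.
        if payload == "ERROR" and not self.replies_to_errors:
            return None
        return "ERROR"


def simulate(a, b, max_hops=1000):
    """Deliver one message to `a` that claims to come from `b`; count messages handled."""
    servers = {a.name: a, b.name: b}
    # Each entry is (claimed source, destination, payload).
    queue = deque([(b.name, a.name, "garbage")])
    hops = 0
    while queue and hops < max_hops:
        src, dst, payload = queue.popleft()
        reply = servers[dst].handle(payload)
        hops += 1
        if reply is not None:
            # The reply is sent back to whoever the message claimed to be from.
            queue.append((dst, src, reply))
    return hops


looping = simulate(ToyServer("a"), ToyServer("b"), max_hops=50)
stopped = simulate(ToyServer("a"), ToyServer("b", replies_to_errors=False))
print(looping, stopped)
```

In this model a single trigger message keeps two error-answering servers talking until the hop cap is reached, which mirrors the point that the attacker does not have to keep sending traffic. Making either side ignore incoming errors ends the exchange after a few messages.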

Security Flaw Can Open Over 3 Million Door Locks, Mainly at Hotels

The researchers have not released technical details to prevent hackers from

exploiting the threat. Nevertheless, the vulnerability is relatively easy for

a bad actor to abuse. “An attacker only needs to read one keycard from the

property to perform the attack against any door in the property. This keycard

can be from their own room, or even an expired keycard taken from the express

checkout collection box,” they wrote. ... The vulnerability affects all locks

under the Saflok brand, including the Saflok MT, the Quantum Series, the RT

Series, the Saffire Series and the Confidant Series, among others.

Unfortunately, it’s impossible for a hotel guest to visually tell if a lock

has been patched, the researchers say. Whether anyone else knows about the

flaw remains unclear. ... In a statement, Dormakaba confirmed that the flaw

exists. "As soon as we were made aware of the vulnerability by a group of

external security researchers, we initiated a comprehensive investigation,

prioritized developing and rolling out a mitigation solution, and worked to

communicate with customers systematically," the company said.

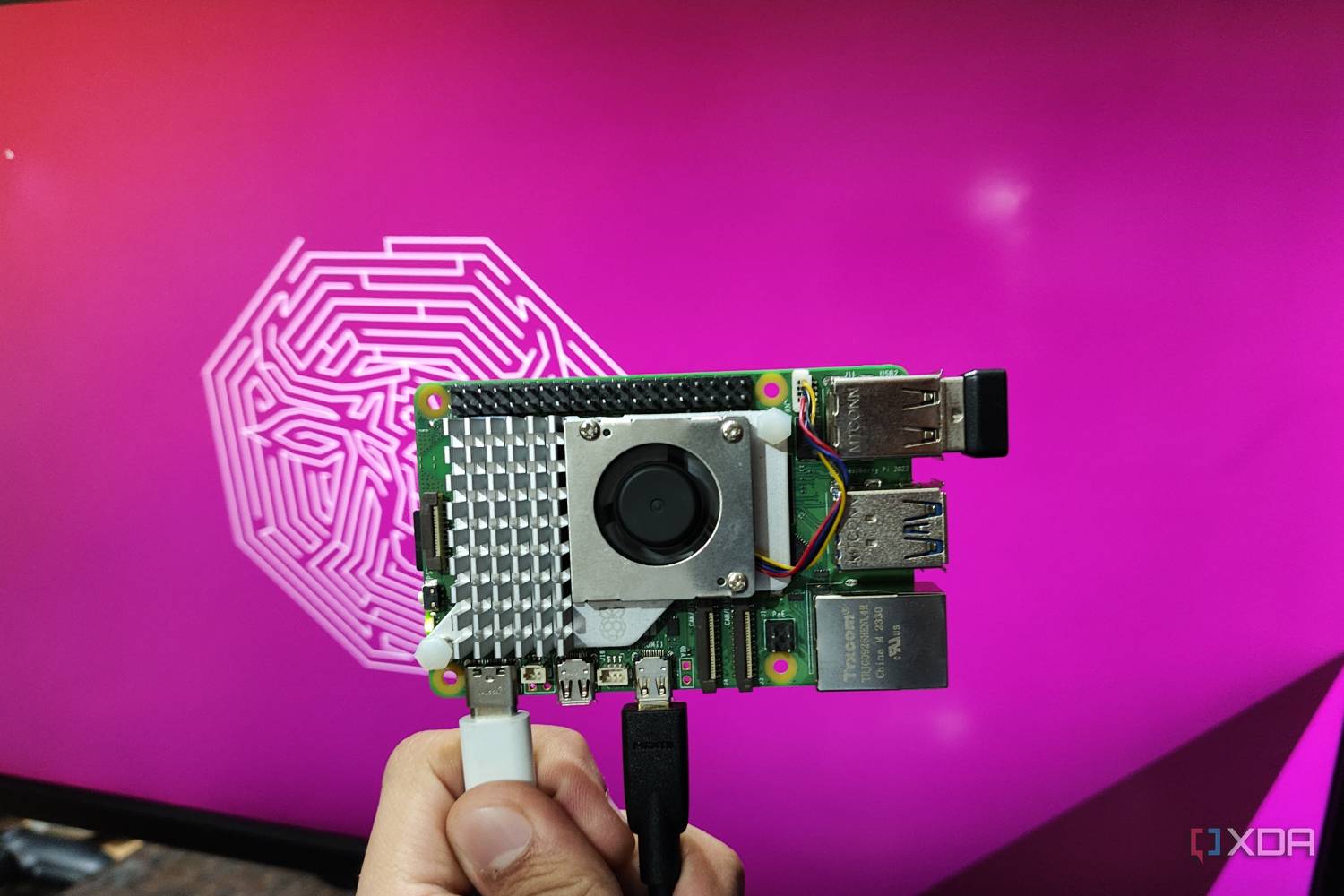

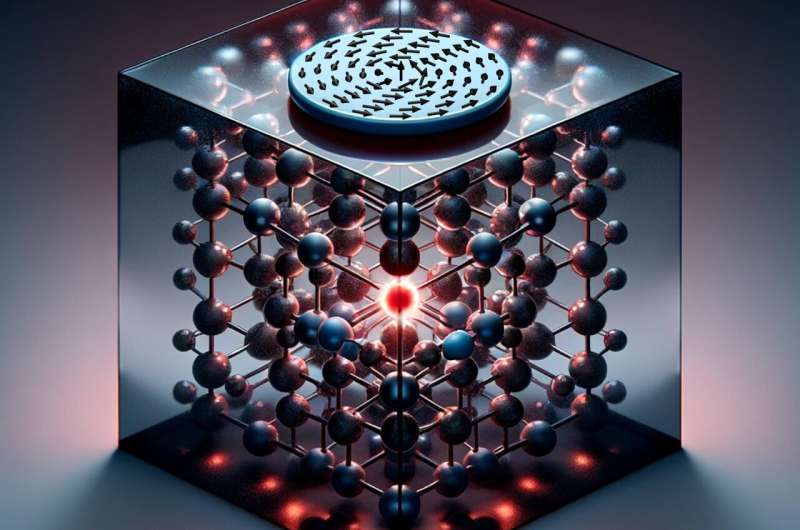

Quantum talk with magnetic disks

While much attention has been directed towards the computation of quantum

information, the transduction of information within quantum networks is

equally crucial in materializing the potential of this new technology.

Addressing this need, a research team at the Helmholtz-Zentrum

Dresden-Rossendorf (HZDR) is now introducing a new approach for transducing

quantum information. The team has manipulated quantum bits, so-called qubits,

by harnessing the magnetic field of magnons—wave-like excitations in a

magnetic material—that occur within microscopic magnetic disks. The

researchers have presented their results in the journal Science Advances. The

construction of a programmable, universal quantum computer stands as one of

the most challenging engineering and scientific endeavors of our time. The

realization of such a computer holds great potential for diverse industry

fields such as logistics, finance, and pharmaceutics. However, the

construction of a practical quantum computer has been hindered by the

intrinsic fragility of how the information is stored and processed in this

technology.

Relational Data at the Edge: How Cloudflare Operates Distributed PostgreSQL Clusters


The team opted for stolon, an open-source cluster management system written in

Go, running as a thin layer on top of the PostgreSQL cluster, with a

PostgreSQL-native interface and support for multiple-site redundancy. It is

possible to deploy a single stolon cluster distributed across multiple

PostgreSQL clusters, whether within one region or spanning multiple regions.

Stolon's features include stable failover with minimal false positives, with

the Keeper Nodes acting as the parent processes managing PostgreSQL changes.

Sentinel Nodes function as orchestrators, monitoring Postgres components'

health and making decisions, such as initiating elections for new primaries.

... Cloudflare chose PgBouncer as the connection pooler due to its

compatibility with PostgreSQL protocol: the clients can connect to PgBouncer

and submit queries as usual, simplifying the handling of database switches and

failovers. PgBouncer, a lightweight single-process server, manages connections

asynchronously, allowing it to handle more concurrent connections than

PostgreSQL.
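
The idea behind a connection pooler can be sketched in a few lines. This is a conceptual model only, not PgBouncer's implementation or configuration: `run_clients`, `n_clients`, and `pool_size` are hypothetical names, and an `asyncio.Semaphore` stands in for the limited set of backend connections that many clients share.

```python
import asyncio


async def run_clients(n_clients, pool_size):
    """Run n_clients queries through at most pool_size shared backend slots."""
    pool = asyncio.Semaphore(pool_size)  # stands in for pooled server connections
    in_use = 0
    peak = 0

    async def client(i):
        nonlocal in_use, peak
        async with pool:  # wait until a backend slot is free
            in_use += 1
            peak = max(peak, in_use)
            await asyncio.sleep(0)  # placeholder for the query round trip
            in_use -= 1
        return i

    results = await asyncio.gather(*(client(i) for i in range(n_clients)))
    return len(results), peak


served, peak = asyncio.run(run_clients(n_clients=200, pool_size=10))
print(f"served={served} peak_backend_slots={peak}")
```

All 200 simulated clients are served while the number of backend slots in use never exceeds the pool size, which is roughly why a single asynchronous process in front of the database can accept more client connections than the database would handle directly.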

Downtime Cost of Cyberattacks and How to Reduce It

“IT leaders and other business decision makers must think critically about

their support teams, identifying and encouraging continual upskilling via

real-world scenarios to mirror the threats they’re likely to experience,”

Hynes advises. “Staying skilled in parallel to increasingly complex and

intelligent cyberattacks can make recovery more efficient and alleviate

unnecessary downtime that puts the company reputation and stakeholder

relationships at risk.” This will often necessitate de-siloing an

organization. As one paper observes, cybersecurity analysts are sometimes not

looped into continuity plans, making those plans next to worthless when

something actually happens. Conversely, analysts do not necessarily share the

findings of their risk assessments with the necessary departments. So, nobody

can plan accordingly. As previously referenced, planning for alternate means

of communication, whether it be in a hospital or in another business, is

crucial. Ensuring that an immediate fallback to typical communication channels

is in place will almost assuredly save time in the event of an attack.

Are cobots collaborative enough to protect your cyberspace?

Critics argue that the rise of collaborative robots in modern manufacturing

brings about cybersecurity concerns. These sophisticated machines, integrated

with advanced sensors and AI, work alongside humans in shared spaces, posing

risks that must be addressed. Unauthorised access to sensitive data in cobots

can lead to intellectual property theft and operational disruptions, while

cyber attackers manipulating cobot programming can cause product and equipment

damage and physical harm to workers. “Moreover, disabling safety mechanisms

through cyber attacks exposes workers to injury risks. To safeguard human

labour in collaborative environments, comprehensive cybersecurity strategies

are imperative. This entails regular software updates, encryption methods, and

continuous monitoring for swift responses to potential breaches,” Vineet

Kumar, global president and founder of CyberPeace, a non-profit cybersecurity

organisation, explained. Experts believe that the firmware, controlling

lower-level operations such as sensors and actuators, is often updated over

the air, leaving robots vulnerable to penetration through the network or its

peripherals.

Legal Issues for Data Professionals: AI Creates Hidden Data and IP Legal Problems

From a legal perspective, the core risks are a) that analytics run on the

database will disclose customer information in violation of the

confidentiality agreement and b) that the use of the customer information

could be outside the scope of permitted use. It is common for confidentiality

and nondisclosure obligations to be integrated into the governing agreement

and not in a standalone nondisclosure agreement (NDA). Further, in many

industries, the terms of customer confidentiality will be tailored

specifically to the transaction and the agreement. As a result, it requires

both business and legal analysis to determine the permitted, the prohibited,

and the gray areas for scope of use. It is important to note that the company

and the customer entered into an NDA at the beginning of the transaction or

before the transaction. The NDA may have different terms than the final

agreement, but both the NDA and the agreement, with their different terms,

will be in the database. In addition, a company’s use of confidential

information outside its permitted scope of use constitutes a breach of

contract and could result in liability and the award of monetary damages

against the company.

CDOs, data science heads to fill Chief AI Officer positions in India

The refactoring of C-level technology roles across Indian enterprises,

according to CK Birla Hospitals’ CIO Mitali Biswas, can be chalked up to the

dearth of talent or skills presently available to take on the responsibilities

for the role or create an efficient team under that position. “While larger

enterprises may still want to create a new position and a team around it,

small and medium businesses will look up to their existing technology leaders,

such as the CIO or the CTO or the CDO to take up the CAIO mantle,” Biswas

explained, adding that maturity and pervasiveness of the CAIO role, at least

in the Indian healthcare sector, is two to three years away. Santanu Ganguly,

who is the CEO of advisory firm StrategINK, said he believes that other

industry sectors, including healthcare, will see the role of CAIO being

adopted in the next one to three years, driven by the boards and CEOs’ agenda

of shaping the future of customer-centricity, offering innovation, enhanced

productivity, and efficient operations. Along the same lines, Gaurav Kataria,

vice president of digital manufacturing and CDIO at PSPD, ITC Limited, said

that the evolution of the CAIO role is already happening in India.

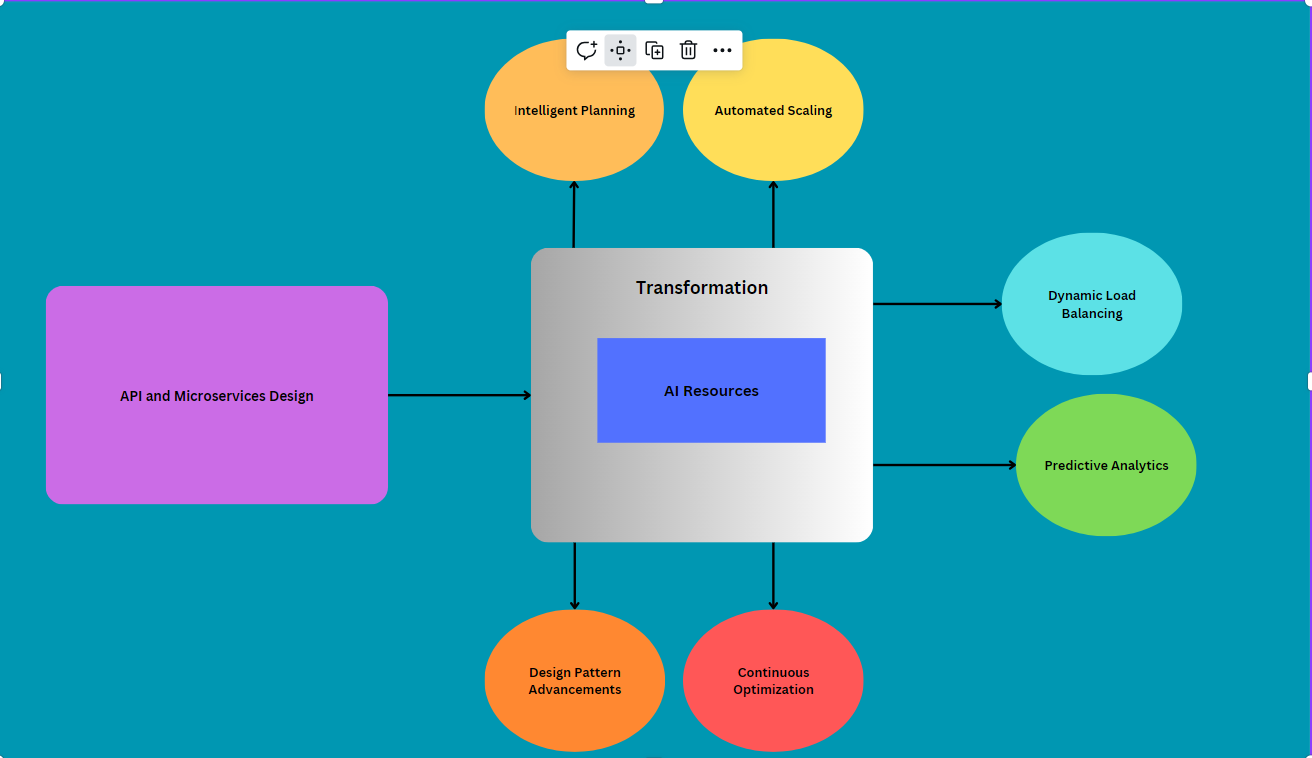

The Changing Face of Consumption and End User Experience

IT architecture has evolved through several distinct epochs that supported the

evolution of business technology. This business technology shift is more than

a “consumption gap … the idea that technology companies can add features and

complexity to their products much faster than consumers can consume

them.” Indeed, we have seen a trend that argues that increased technology

results in improved flexibility and intuitive product use. As Steve Jobs said,

“Simple can be harder than complex: You must work hard to get your thinking

clean to make it simple. But it’s worth it in the end because once you get

there, you can move mountains.” As business technology evolves, it

delivers simplicity with more flexible consumption models that support

building a more intuitive and contextual end-user experience. The

architectural skills that support this evolution are also changing. This

article discusses how those IT architecture skills are evolving. It suggests

how the architecture toolkit for the future is also evolving so that we can

continue to evolve technologies that “can make life easier … [we] touch the

right people. [with] things [that] can profoundly influence life.”

Quote for the day:

"Holding on to the unchangeable past

is a waste of energy, and serves no purpose in creating a better future." --

Unknown
