What is the cost of not doing enterprise architecture?

Without an EA, an organisation may struggle to show how its IT projects and technology decisions align with its business goals, leading to initiatives that do not support the overall business strategy or deliver optimal value. A company favouring growth through acquisition should be buying systems and negotiating contracts that support onboarding more users and more data and transactions without costs increasing significantly. The EA should make it possible to understand which processes and technology the strategy would affect, to model that impact, and to feed it into the decision process. Equally, the architecture can anticipate strategic trends and be designed to support them. For example, Sears, the now-bankrupt US retailer, was slow to adopt e-commerce, allowing competitors to capture the growing online shopping market. ... Your Enterprise Architecture provides a framework for making informed decisions about IT investments and strategies. Without the holistic view that EA offers, decision-makers may lack the full context for their decisions, leading to choices that are suboptimal or that fail to consider the interdependencies and long-term implications for the organisation.

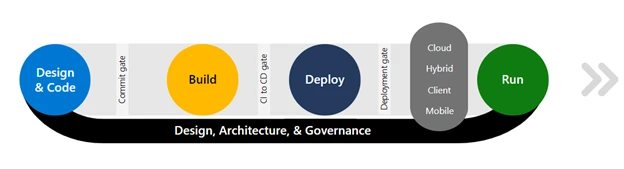

Making Software Development Boring to Deliver Business Value

Boerman argued that software development should become boring. He distinguished between boring software and exciting software. Boring software, in that categorization, is software that has been built countless times and will be built a billion times more. He was thinking specifically of back-end systems, though he said this rings true for front-end systems as well. Exciting software covers the projects that require creativity to build: purpose-built algorithms, automations, AI integrations, and the like. Making software development boring again means putting the primary focus on delivering business value and making that delivery predictable and repeatable, Boerman argued. This requires moving infrastructure out of the way so that it is still there but does not burden the day-to-day development process. While infrastructure takes most of the development time, it technically delivers the least business value, which lies in the data and the operations executed against it. New, exciting experiments may be fast-moving and unstable, while the boring core is meant to be, and to remain, of high quality so that it can withstand outside disruptions, Boerman concluded.

New TDWI Assessment Examines the State of Data Quality Maturity Today

“With data becoming such a critical part of a business’s ability to compete, it’s no wonder there’s a growing emphasis on data quality,” Halper began. “Organizations need better and faster insights in order to succeed, and for that they need better, more enriched data sets for advanced analytics -- such as predictive analytics and machine learning.” She explained that to do this, organizations are not only increasing the amount of traditional, structured data they’re collecting, they’re also looking for newer data types, such as unstructured text data or semistructured data from websites. Taken together, these various types of data can offer significantly more opportunities for insights, she added. As an example, Halper mentioned the idea of an organization using notes from its call center -- typically unstructured or semistructured text data -- to analyze customer satisfaction, either with a particular product or with the company as a whole. This information can then be fed back into an analytics or machine learning routine and reveal patterns or other insights meaningful to the company. “Regardless of the type of data or its end use,” she said, “the original data must be high quality. It must be accurate, complete, timely, trustworthy, and fit for purpose.”
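Two of the dimensions Halper lists, completeness and timeliness, are straightforward to measure. The following is a minimal sketch rather than anything taken from the TDWI assessment: the record layout, the field names (`customer_id`, `note`, `created`), and the 30-day freshness window are all assumptions made for illustration.

```python
from datetime import datetime, timedelta


def completeness(records, required_fields):
    """Fraction of records in which every required field is present and non-empty."""
    if not records:
        return 0.0
    complete = sum(
        all(r.get(field) not in (None, "") for field in required_fields)
        for r in records
    )
    return complete / len(records)


def timeliness(records, timestamp_field, now, max_age):
    """Fraction of records whose timestamp is no older than max_age."""
    if not records:
        return 0.0
    fresh = sum(
        1
        for r in records
        if r.get(timestamp_field) is not None
        and now - r[timestamp_field] <= max_age
    )
    return fresh / len(records)


# Hypothetical call-center notes; the field names are illustrative only.
now = datetime(2024, 3, 25)
notes = [
    {"customer_id": "C1", "note": "Happy with product", "created": datetime(2024, 3, 24)},
    {"customer_id": "C2", "note": "", "created": datetime(2024, 3, 20)},
    {"customer_id": None, "note": "Refund delayed", "created": datetime(2023, 12, 1)},
    {"customer_id": "C4", "note": "Long wait time", "created": datetime(2024, 3, 1)},
]

completeness_score = completeness(notes, ["customer_id", "note"])
timeliness_score = timeliness(notes, "created", now, timedelta(days=30))
print(f"complete: {completeness_score:.0%}, timely: {timeliness_score:.0%}")
```

Scores like these only flag where to look. Accuracy, trustworthiness, and fitness for purpose usually need reference data or a human judgment that a simple ratio cannot supply.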

The Five Biggest Challenges with Large-Scale Cloud Migrations

Several issues can arise when attempting to migrate legacy systems to the cloud. The system may not be optimized for cloud performance and scalability, so it is important to develop and implement solutions that boost the system’s speed and capacity to get the most from the cloud migration. Other issues common with legacy system integration include data security, data integrity, and cost management. The last of these is often a particular concern because companies may also be required to pay for training and maintenance in addition to the cost of migration. ... The risks of migrating data to the cloud include data security, data corruption, and excessive downtime, which can cost money and negatively impact performance. To optimize migration success and minimize downtime, it is vital for companies to understand the amount of data involved and the bandwidth necessary to complete the transfer with minimal work disruption. ... Due to poor infrastructure and configuration, many companies cannot take advantage of the benefits of cloud computing. Often, companies fail to make the most of the move from fixed infrastructure to scalable and dynamic cloud resources.
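The relationship between data volume, bandwidth, and transfer time mentioned above is simple arithmetic. The sketch below is a rough back-of-the-envelope estimate, not a method from the article: the function name, the decimal-terabyte convention, and the default 70% sustained link utilization are assumptions.

```python
def transfer_hours(data_terabytes, link_mbps, utilization=0.7):
    """Estimate the hours needed to move data over a network link.

    data_terabytes: volume to move, in decimal terabytes (1 TB = 10**12 bytes)
    link_mbps:      nominal link speed in megabits per second
    utilization:    assumed fraction of the link the transfer can sustain
    """
    if data_terabytes < 0 or link_mbps <= 0 or not 0 < utilization <= 1:
        raise ValueError("need data >= 0, link speed > 0, and 0 < utilization <= 1")
    total_bits = data_terabytes * 1e12 * 8
    effective_bits_per_second = link_mbps * 1e6 * utilization
    return total_bits / effective_bits_per_second / 3600


# Hypothetical migration: 50 TB over a 1 Gbps link at 70% sustained utilization.
hours = transfer_hours(50, 1000)
print(f"{hours:.1f} hours, or {hours / 24:.1f} days")
```

Under these assumptions the transfer takes about 159 hours, a little under a week. An estimate of this kind helps decide whether a migration fits a maintenance window or needs an incremental sync or an offline transfer instead.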

Getting the BELT: Empowering Executive Leadership in Data Governance

The active engagement of the executive leadership team (ELT) in the data governance process is critical not only for setting a strategic direction, but also for catalyzing a shift in organizational mindset. By championing the principles of Non-Invasive Data Governance (NIDG), the ELT paves the way for a governance model that is both effective and sustainable. This leadership commitment helps break down silos, promote cross-departmental collaboration, and establish a shared vision that recognizes data as a pivotal asset. Through their actions and decisions, executive leaders serve as role models, demonstrating the value of data governance and encouraging a culture of continuous improvement. Their involvement ensures that data governance initiatives are aligned with business strategies, driving the organization toward achieving its goals while maintaining data integrity and compliance. ... The journey towards effective data governance begins with buy-in, not just from the ELT, but across the entire organization. Achieving this requires the ELT to understand the strategic importance of data governance and to communicate this value convincingly.

Going passwordless with passkeys in Windows and .NET

Passkeys managed by Windows Hello are “device-bound passkeys” tied to your PC. Windows can support other passkeys, for example passkeys stored on a nearby smartphone or on a modern security token. There’s even the option of using third parties to provide and manage passkeys, for example via a banking app or a web service. Windows passkey support allows you to save keys on third-party devices. You can use a QR code to transfer the passkey data to the device, or if it’s a linked Android smartphone, you can transfer it over a local wireless connection. In both cases the devices need a biometric identity sensor and secure storage. As an alternative, Windows will work with FIDO2-ready security keys, storing passkeys on a YubiKey or similar device. A Windows Security dialog helps you choose where to save your keys and how. If you’re saving the key on Windows, you’ll be asked to verify your identity using Windows Hello before the passkey is saved locally. If you’re using Windows 11 22H2 or later, you can manage passkeys through Windows settings.

Generative AI on its own will not improve the customer experience

Businesses around the world hope that, beyond the hype of generative AI, there lies a near-term path to improving business efficiency and in parallel a longer-term ability to grow revenue. There is one, not insignificant, consideration to weigh before the true savings can be measured. In 2024, as in 2023, generative AI and ChatGPT both trail "Customer Service / Telephone number" as search terms on Google in most countries. Most of those searches involve a quest by a customer to reach a human being. There is great frustration because most businesses are working hard to make it difficult to reach a person. This gap between the corporate commitment to removing the human connection in customer service and the customer's desire for a human connection almost always points to a bad business process. The business must examine why the customer doesn't use the self-service channel. This discovery process is a precursor to deeper self-service powered by generative AI. Our first recommendation is to step back and ensure the customer service process you want to supercharge with generative AI satisfies customers.

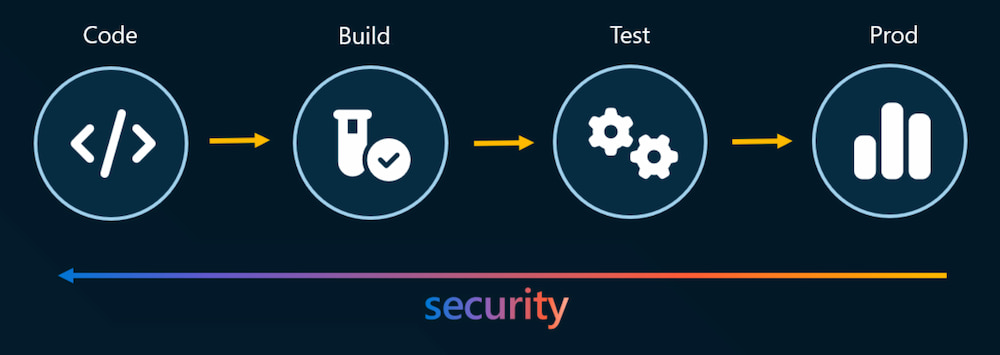

How continuous SDL can help you build more secure software

Beyond making the SDL automated, data-driven, and transparent, Microsoft is also focused on modernizing the practices that the SDL is built on to keep up with changing technologies and ensure our products and services are secure by design and by default. In 2023, six new requirements were introduced, six were retired, and 19 received major updates. We’re investing in new threat modeling capabilities, accelerating the adoption of new memory-safe languages, and focusing on securing open-source software and the software supply chain. We’re committed to providing continued assurance to open-source software security, measuring and monitoring open-source code repositories to ensure vulnerabilities are identified and remediated on a continuous basis. Microsoft is also dedicated to bringing responsible AI into the SDL, incorporating AI into our security tooling to help developers identify and fix vulnerabilities faster. We’ve built new capabilities like the AI Red Team to find and fix vulnerabilities in AI systems. By introducing modernized practices into the SDL, we can stay ahead of attacker innovation, designing faster defenses that protect against new classes of vulnerabilities.

Rethinking SDLC security and governance: A new paradigm with identity at the forefront

Poorly governed identities have become a gateway for substantial incidents. High-profile breaches at companies like LastPass and Okta have illuminated the attackers' method: exploiting the identity attack vector to orchestrate some of the most notable breaches, using compromised accounts to potentially alter source code and extract valuable information. These events underscore a clear and present trend of identity theft through phishing or ransomware attacks, which then pave the way for attackers to infiltrate the software development lifecycle (SDLC), leading to the insertion of malicious code and the theft of data. Despite the clear risks, organizations continue to fumble in securing and managing these identities, making identity the riskiest yet most overlooked attack vector facing SDLC security and governance today. As we pivot to address this critical oversight, it's imperative to understand the role of identity within the SDLC. The “Inverted Pyramid” analogy is a useful conceptual framework that captures the essence of the old and new paradigms and how reorienting our approach can better protect against these insidious threats.

Analyzing the CEO–CMO relationship and its effect on growth

It’s estimated that only 10 percent of Fortune 250 CEOs have marketing experience. There’s also a dramatic acceleration of digital technology in the world of marketing. We’re no longer judging marketing by television commercials. There’s a whole slew of different components to think through. And the data piece that you hinted at is that these customers’ signals are now everywhere. It’s incumbent upon us as marketers to interpret them and feed them back to our organizations in such a way that we don’t talk about data but we talk about insights and are able to connect the dots. ... As we come up with a means to measure marketing, the CEO or CFO needs to learn the measurement systems in place to understand what it means when I cut budget, what it means when I invest in it, and how we tie those activities to outcomes. That robust measurement system can help you understand your brand, how your customers perceive your brand, and what level of fidelity they give you credit for. That’s where the brand scores are really helpful. But you also need an econometric model to connect how the money you’re spending on different channels such as video, content, and search, all working in tandem, helps create the results you want.

Quote for the day:

"Success is the sum of small efforts,

repeated day-in and day-out." -- Robert Collier
