Evolving Landscape of ISO Standards for GenAI

The burgeoning field of Generative AI (GenAI) presents immense potential for
innovation and societal benefit. However, navigating this landscape responsibly
requires addressing potential concerns regarding its development and
application. Recognizing this need, the International Organization for
Standardization (ISO) has embarked on the crucial task of establishing a
comprehensive set of standards. ... A shared understanding of fundamental
terminology is vital in any field. ISO/IEC 22989 serves as the cornerstone by
establishing a common language within the AI community. This foundational
standard precisely defines key terms like “artificial intelligence,” “machine
learning,” and “deep learning,” ensuring clear communication and fostering
collaboration and knowledge sharing among stakeholders. ... Similar to the need
for blueprints in construction, ISO/IEC 23053 provides a robust framework for AI
development. This standard outlines a generic structure for AI systems based on
machine learning (ML) technology. This framework serves as a guide for
developers, enabling them to adopt a systematic approach to designing and
implementing GenAI solutions.

Your Face For Sale: Anyone Can Legally Gather & Market Your Facial Data

We need a range of regulations on the collection and modification of facial
information. We also need a stricter status of facial information itself.
Thankfully, some developments in this area are looking promising. Experts at the
University of Technology Sydney have proposed a comprehensive legal framework
for regulating the use of facial recognition technology under Australian law. It
contains proposals for regulating the first stage of non-consensual activity:
the collection of personal information. That may help in the development of new
laws. Regarding photo modification using AI, we’ll have to wait for
announcements from the newly established government AI expert group working to
develop “safe and responsible AI practices”. There are no specific discussions
about a higher level of protection for our facial information in general.
However, the government’s recent response to the Attorney-General’s Privacy Act
review has some promising provisions. The government has agreed further
consideration should be given to enhanced risk assessment requirements in the
context of facial recognition technology and other uses of biometric
information.

Affective Computing: Scientists Connect Human Emotions With AI

Affective computing is a multidisciplinary field integrating computer science,
engineering, psychology, neuroscience, and other related disciplines. A new and
comprehensive review on affective computing was recently published in the
journal Intelligent Computing. It outlines recent advancements, challenges, and
future trends. Affective computing enables machines to perceive, recognize,
understand, and respond to human emotions. It has various applications across
different sectors, such as education, healthcare, business services and the
integration of science and art. Emotional intelligence plays a significant role
in human-machine interactions, and affective computing has the potential to
significantly enhance these interactions. ... Affective computing, a field that
combines technology with the nuanced understanding of human emotions, is
experiencing surges in innovation and related ethical considerations.
Innovations identified in the review include emotion-generation techniques that
enhance the naturalness of human-computer interactions by increasing the realism
of the facial expressions and body movements of avatars and robots.

The open source problem

Over the years, I’ve trended toward permissive, Apache-style licensing,
asserting that it’s better for community development. But is that true? It’s
hard to argue against the broad community that develops Linux, for example,
which is governed by the GPL. Because freedom is baked into the software, it’s
harder (though not impossible) to fracture that community by forking the
project. To me, this feels critical, and it’s one reason I’m revisiting the
importance of software freedom (GPL, copyleft), and not merely developer/user
freedom (Apache). If nothing else, as tedious as the internecine bickering was
in the early debates between free software and open source (GPL versus Apache),
that tension was good for software, generally. It gave project maintainers a
choice in a way they really don’t have today because copyleft options
disappeared when cloud came along and never recovered. Even corporations, those
“evil overlords” as some believe, tended to use free and open source licenses in
the pre-cloud world because they were useful. Today companies invent new
licenses because the Free Software Foundation and OSI have been living in the
past while software charged into the future. Individual and corporate developers
lost choice along the way.

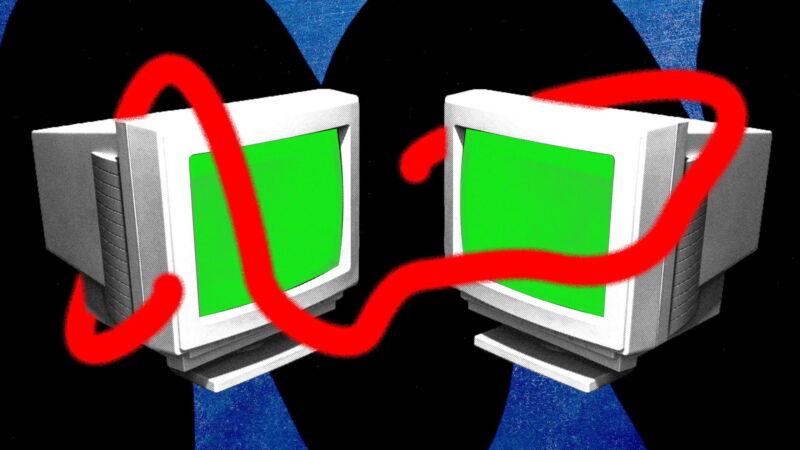

Researchers create AI worms that can spread from one system to another

Now, in a demonstration of the risks of connected, autonomous AI ecosystems, a
group of researchers has created one of what they claim are the first
generative AI worms, which can spread from one system to another, potentially
stealing data or deploying malware in the process. “It basically means that
now you have the ability to conduct or to perform a new kind of cyberattack
that hasn't been seen before,” says Ben Nassi, a Cornell Tech researcher
behind the research. ... To create the generative AI worm, the researchers
turned to a so-called “adversarial self-replicating prompt.” This is a prompt
that triggers the generative AI model to output, in its response, another
prompt, the researchers say. In short, the AI system is told to produce a set
of further instructions in its replies. This is broadly similar to traditional
SQL injection and buffer overflow attacks, the researchers say. To show how
the worm can work, the researchers created an email system that could send and
receive messages using generative AI, plugging into ChatGPT, Gemini, and the
open source LLM LLaVA. They then found two ways to exploit the system: by using
a text-based self-replicating prompt and by embedding a self-replicating prompt
within an image file.

Do You Overthink? How to Avoid Analysis Paralysis in Decision Making

Welcome to the world of analysis paralysis. This phenomenon occurs when an
influx of information and options leads to overthinking, creating a deadlock
in decision-making. Decision makers, driven by the fear of making the wrong
choice or seeking the perfect solution, may find themselves caught in a loop
of analysis, reevaluation, and hesitation, consequently losing sight of the
overall goal. ... Analysis paralysis impacts decision making by stifling risk
taking, preventing open dialogue, and constraining innovation, all of which are
essential elements for successful technology development. It often leads to
mental exhaustion, reduced concentration, and increased stress from endlessly
evaluating information, also known as decision fatigue. The implications of
analysis paralysis include missed opportunities due to ongoing hesitation and
innovative potential being restricted by cautious decision making. ... In the
technology sector, the consequences of poor decisions can be far-reaching,
potentially unraveling extensive work and achievements. Fear of this happening
is heightened due to the sector’s competitive nature. Teams worry that a
single misstep could have a cascading negative impact.

30 years of the CISO role – how things have changed since Steve Katz

Katz had no idea what the CISO job was when he accepted it in 1995. Neither
did Citicorp. “They said you’ve got a blank cheque, build something great,
whatever the heck it is,” Katz recounted during the 2021 podcast. “The CEO
said, ‘The board has no idea, just go do something.’” Citicorp gave Katz just
two directives after hiring him: “Build the best cybersecurity department in
the world” and “go out and spend time with our top international banking
customers to limit the damage.” ... today’s CISO must be able to communicate
cyber threats in terms that line of business can understand almost instantly.
“It’s the ability to articulate risk in a way that is related to the business
processes in the organization,” says Fitzgerald. “You need to be able to
translate what risk means. Does it mean I can’t run business operations? Does
it mean we won’t be able to treat patients in our hospital because we had a
ransomware attack?” Deaner says CISOs have an obvious role to play in core
infosec initiatives such as implementing a business continuity plan or
disaster recovery testing. ... “People in CISO circles absolutely talk a lot
about liability. We’re all concerned about it,” Deaner acknowledges. “People
are taking the changes to those regulations very seriously because they’re
there for a reason.”

Vishing, Smishing Thrive in Gap in Enterprise, CSP Security Views

There is a significant gap between enterprises’ high expectations that their
communications service provider will provide the security needed to protect
them against voice and messaging scams and the level of security those CSPs
offer, according to telecom and cybersecurity software maker Enea. Bad actors
and state-sponsored threat groups, armed with the latest generative AI tools,
are rushing to exploit that gap, a trend that is apparent in the skyrocketing
numbers of smishing (text-based phishing) and vishing (voice-based frauds)
that are hitting enterprises and the jump in all phishing categories since the
November 2022 release of the ChatGPT chatbot by OpenAI, according to a report
this week by Enea. ... “Maintaining and enhancing mobile network security is a
never-ending challenge for CSPs,” the report’s authors wrote. “Mobile networks
are constantly evolving – and continually being threatened by a range of
threat actors who may have different objectives, but all of whom can exploit
vulnerabilities and execute breaches that impact millions of subscribers and
enterprises and can be highly costly to remediate.”

Causal AI: AI Confesses Why It Did What It Did

Traditional AI models are fixed in time and understand nothing. Causal AI is a
different animal entirely. “Causal AI is dynamic, whereas comparable tools are
static. Causal AI represents how an event impacts the world later. Such a
model can be queried to find out how things might work,” says Brent Field at
Infosys Consulting. “On the other hand, traditional machine learning models
build a static representation of what correlates with what. They tend not to
work well when the world changes, something statisticians call nonergodicity,”
he says. It’s important to grok why this one point of nonergodicity is such a
crucial difference to almost everything we do. “Nonergodicity is everywhere.
It’s this one reason why money managers generally underperform the S&P 500
index funds. It’s why election polls are often off by many percentage points.
... Without knowing the cause of an event or potential outcome, the knowledge
we extract from AI is largely backward facing even when it is forward
predicting. Outputs based on historical data and events alone are by nature
handicapped and sometimes useless. Causal AI seeks to remedy that.
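
As a rough illustration of the gap between "what correlates with what" and "how
an event impacts the world," the toy sketch below may help. It is not taken from
the article: the model, the numbers, and the function names (`observe`,
`intervene`, `slope`) are all hypothetical. A hidden driver `z` causes both `x`
and `y`, so the two look tightly linked in historical data, yet forcing `x` to
new values leaves `y` unchanged.

```python
# Toy structural model (hypothetical, for illustration only):
# a hidden driver z causes both x and y; x itself has no effect on y.

def observe(z_values):
    """Passive observation: x and y both follow z, so they move together."""
    return [(z, 3 * z) for z in z_values]  # (x, y) pairs

def intervene(z_values, forced_x):
    """Intervention: x is set by hand, while y still depends on z only."""
    return [(x, 3 * z) for z, x in zip(z_values, forced_x)]

def slope(pairs):
    """Ordinary least-squares slope of y on x."""
    n = len(pairs)
    mean_x = sum(x for x, _ in pairs) / n
    mean_y = sum(y for _, y in pairs) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in pairs)
    var = sum((x - mean_x) ** 2 for x, _ in pairs)
    return cov / var

z = [1, 2, 3, 4]

# What a purely correlational model would learn from historical data.
observed_slope = slope(observe(z))

# What actually happens to y when x is changed independently of z.
intervened_slope = slope(intervene(z, [1, 2, 2, 1]))

print("slope learned from observation: ", observed_slope)
print("slope measured under intervention:", intervened_slope)
```

In this sketch the observational slope is 3 while the slope under intervention
is 0, which is the kind of difference a causal model is meant to expose and a
correlation-only model would miss.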

Leveraging power quality intelligence to drive data center sustainability

The challenge is that some data centers lack the power monitoring capabilities
necessary for achieving heightened efficiency and sustainability. Moreover,
there needs to be more continuous power quality monitoring. Many rely on
rudimentary measurements, such as voltage, current, and power parameters,
gathered by intelligent rack power distribution units (PDUs), which are then
transmitted to DCIM, BMS, and other infrastructure management and monitoring
systems. Some consider power quality only during initial setup or occasionally
revisit it when reconfiguring IT setups. This underscores the critical role of
intelligent PDUs in delivering robust power quality monitoring and the
imperative for data center and facility managers to steer efforts toward
increased efficiency and sustainability. Certain power quality issues can have
detrimental effects on the electrical reliability of a data center, leading to
costly unplanned downtime and posing challenges in enhancing sustainability.
... These power quality issues can profoundly affect a data center's
functionality and dependability. They may result in unforeseen downtime, harm
to equipment, data loss or corruption, and reduced network efficiency.

Quote for the day:

"If you want to achieve excellence, you can get there today. As of this second,
quit doing less-than-excellent work." -- Thomas J. Watson