What is RAG? More accurate and reliable LLMs

Retrieval-Augmented Generation (RAG) is an AI framework that significantly impacts the field of Natural Language Processing (NLP). It is designed to improve the accuracy and richness of content produced by language models. Here’s a synthesis of the key points regarding RAG from various sources:

- RAG is a system that retrieves facts from an external knowledge base to provide grounding for large language models (LLMs). This grounding helps ensure that the information generated by the LLMs is based on accurate and current data, which is particularly important given that LLMs can sometimes produce inconsistent outputs.
- The framework operates as a hybrid model, integrating both retrieval and generative models. This integration allows RAG to produce text that is not only contextually accurate but also rich in information. Because RAG can draw from extensive databases of information, it can contribute contextually relevant and detailed content to the generative process.
- RAG addresses a limitation of foundational language models, which are generally trained offline on broad domain corpora and are not updated with new information after training.
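The retrieve-then-generate flow described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions: a tiny in-memory knowledge base, bag-of-words cosine similarity standing in for the dense embeddings and vector stores that real RAG systems typically use, and no actual LLM call. The function names `retrieve` and `build_prompt` and the sample documents are hypothetical; the output is the grounded prompt that would be handed to a model.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    # Lowercase and split on anything that is not a letter or digit.
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a: Counter, b: Counter) -> float:
    # Cosine similarity between two term-frequency vectors.
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, docs: list[str], k: int = 2) -> list[str]:
    # Retrieval step: rank documents by similarity to the query, keep the top k.
    q = Counter(tokenize(query))
    ranked = sorted(docs, key=lambda d: cosine(q, Counter(tokenize(d))), reverse=True)
    return ranked[:k]


def build_prompt(query: str, passages: list[str]) -> str:
    # Grounding step: place the retrieved passages in the prompt and
    # instruct the (hypothetical) generator to answer only from them.
    context = "\n".join(f"- {p}" for p in passages)
    return (
        "Answer using only the context below. If the answer is not there, say so.\n"
        f"Context:\n{context}\n\nQuestion: {query}\nAnswer:"
    )


# Hypothetical external knowledge base.
knowledge_base = [
    "The 2024 policy allows remote work up to three days per week.",
    "Office hours are 9am to 5pm on weekdays.",
    "The cafeteria serves vegetarian meals on Fridays.",
]

question = "How many days of remote work does the policy allow?"
passages = retrieve(question, knowledge_base, k=1)
print(build_prompt(question, passages))
```

Because the knowledge base lives outside the model, updating it changes what the model is grounded on without any retraining, which is the limitation the last point above refers to.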

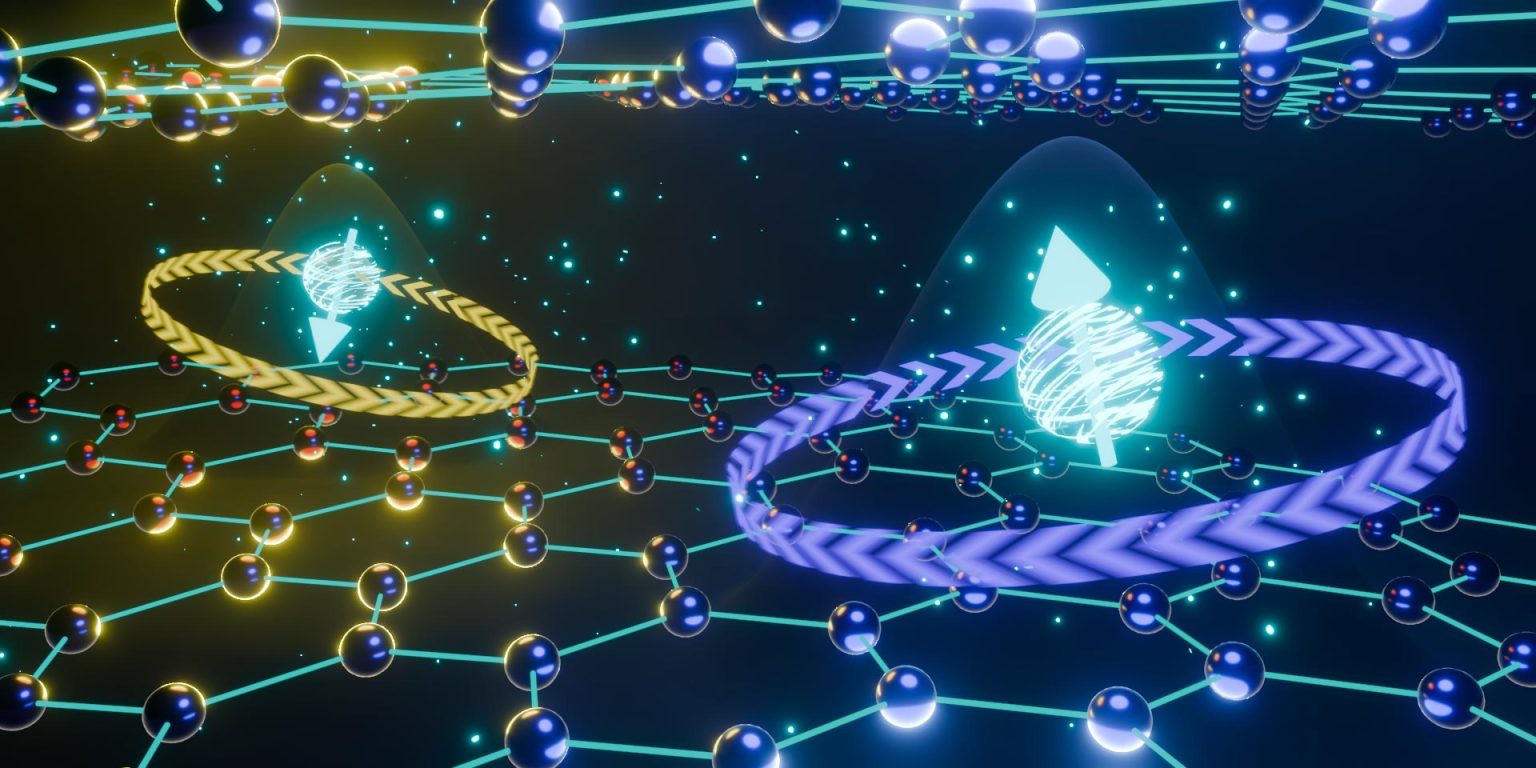

Redefining Quantum Bits: The Graphene Valley Breakthrough

Because quantum information is much more prone than its classical counterpart to being corrupted by the surrounding environment, and therefore becoming unsuitable for computational tasks, researchers who study different qubit candidates must characterize their coherence properties: these tell them how well and for how long quantum information can survive in their qubit system. In most traditional quantum dots, electron spin decoherence can be caused by the spin-orbit interaction, which introduces an unwanted coupling between the electron spin and the vibrations of the host lattice, and by the hyperfine interaction between the electron spin and the surrounding nuclear spins. In graphene, as in other carbon-based materials, spin-orbit coupling and hyperfine interaction are both weak: this makes graphene quantum dots especially appealing for spin qubits. The results reported by Garreis, Tong, and co-authors add one more promising facet to the picture. ... The hexagonal symmetry observed in this so-called real space is also present in momentum space, where the vertices of the lattice don’t correspond to the spatial locations of carbon atoms but to values of momentum associated with the free electrons on the lattice.

5 Ways AI Can Make Your Human-To-Human Relationships More Effective

Understanding your audience is a major challenge for many business leaders. After all, if you knew what did or didn’t appeal to your audience, it would be much easier to speak to them in a meaningful, engaging way that sparks lasting connections. And AI can help here, too. This was illustrated to me during a recent conversation with James Webb, co-founder and CTO of Comb Insights, whose app uses proprietary AI to provide sentiment scores on comments on social media posts. “Using AI to quickly evaluate the overall sentiment of the comments on a post can give business leaders an immediate understanding of whether their content resonated with their audience,” he told me in an interview. “Seeing the ratio of positive to neutral or negative comments, and seeing the most common words that show up in the comments, can provide quick insights into why a post succeeded or failed. With this instant understanding of their audience, businesses can pivot in the type of social media content they produce so they can strengthen these important digital relationships.”
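The two signals Webb mentions, the ratio of positive to neutral or negative comments and the most common words, are simple aggregates once each comment carries a sentiment label. The Python sketch below assumes those labels already exist (Comb Insights’ model is proprietary and is not reproduced here); the sample comments, the small stopword list, and the function names are hypothetical.

```python
import re
from collections import Counter

# Hypothetical comments with sentiment labels already attached. In practice
# the labels would come from a sentiment model, which is not shown here.
comments = [
    ("Love this update, great work!", "positive"),
    ("Great tips, thanks", "positive"),
    ("Not sure this applies to us", "neutral"),
    ("The update broke my workflow", "negative"),
]

# Tiny illustrative stopword list; a real one would be much larger.
STOPWORDS = {"this", "the", "to", "us", "my", "not", "sure"}


def sentiment_breakdown(labeled):
    # Share of comments carrying each sentiment label.
    counts = Counter(label for _, label in labeled)
    total = sum(counts.values())
    return {label: counts[label] / total for label in ("positive", "neutral", "negative")}


def top_words(labeled, n=3):
    # Most frequent non-stopword terms across all comments.
    words = Counter(
        w
        for text, _ in labeled
        for w in re.findall(r"[a-z']+", text.lower())
        if w not in STOPWORDS
    )
    return words.most_common(n)


print(sentiment_breakdown(comments))
print(top_words(comments))
```

Here the word counts point at “update” appearing in both praise and complaints, the kind of quick clue about why a post landed the way it did that the quote describes.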

The missing link of the AI safety conversation

From a practical standpoint, the high cost of AI development means that companies are more likely to rely on a single model to build their product, but product outages or governance failures can then cause a ripple effect of impact. What happens if the model you’ve built your company on no longer exists or has been degraded? Thankfully, OpenAI continues to exist today, but consider how many companies would be out of luck if OpenAI lost its employees and could no longer maintain its stack. Another risk is relying heavily on systems that are inherently probabilistic. We are not used to this: the world we live in has so far been engineered and designed to function with definitive answers. Even if OpenAI continues to thrive, its models are fluid in terms of output, and it constantly tweaks them, which means the code you have written to support these models and the results your customers rely on can change without your knowledge or control. Centralization also creates safety issues. These companies are working in their own best interest. If there is a safety or risk concern with a model, you have much less control over fixing that issue and less access to alternatives.

Intro to Digital Fingerprints

Digital fingerprinting is a technique used to identify users across different websites based on their unique device and browser characteristics. These characteristics, known as fingerprint parameters, can include various software attributes, hardware (CPU, RAM, GPU, media devices such as cameras, mics, and speakers), location, time zone, IP address, screen size/resolution, browser/OS languages, network and internet-provider-related details, and other attributes. The combination of these parameters creates a unique identifier, the fingerprint, that can be used to track a user’s online activity. Fingerprints play a crucial role in online security, enabling services to identify and authenticate unique users. Conversely, by manipulating their fingerprints, users can trick such systems and stay anonymous online. However, the same manipulation lets someone run tens, hundreds, or more accounts while passing each one off as a unique, authentic user. While this may sound cool, it has serious implications: it makes it possible to create an army of bots that spread spam and fake content all over the internet, potentially resulting in fraud.
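As a rough illustration of how a combination of parameters becomes a single identifier, the Python sketch below serializes a dictionary of attributes in a fixed key order and hashes it with SHA-256. The attribute names and values are hypothetical, and an exact-match hash is a simplification: production fingerprinting systems usually collect many more signals and tolerate small changes rather than relying on one hash. The sketch also shows why manipulation works, since altering a single parameter yields a completely different fingerprint.

```python
import hashlib
import json


def fingerprint(attributes: dict) -> str:
    # Serialize deterministically (sorted keys, compact separators) so the
    # same set of parameters always produces the same string.
    canonical = json.dumps(attributes, sort_keys=True, separators=(",", ":"))
    # Hash the combined parameters into a fixed-length hex identifier.
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


# Hypothetical fingerprint parameters collected from one device/browser.
device = {
    "user_agent": "Mozilla/5.0 (X11; Linux x86_64)",
    "screen": "1920x1080",
    "timezone": "Europe/Berlin",
    "languages": ["de-DE", "en-US"],
    "cpu_cores": 8,
}

fp = fingerprint(device)

# Spoofing just one parameter (here the time zone) looks like a new device.
spoofed = {**device, "timezone": "America/New_York"}

print(fp)
print(fingerprint(spoofed) != fp)
```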

Looking at a data-driven financial future for India

In the intricate landscape of financial services, managing vast data, complex silos, and strict compliance demands a strategic solution. A hybrid data mesh is an innovative approach to financial operations that brings flexibility and coherence. This method combines a distributed architecture with a single source of truth (SSOT), ensuring accurate, secure, and compliant data handling. Distributing data across systems and functions facilitates quick insights while adhering to quality and privacy standards. The hybrid data mesh concept integrates the advantages of a distributed architecture tailored to domain-specific data with the SSOT, providing enhanced flexibility and scalability. This fusion ensures data coherence and accuracy while allowing domain independence, reinforcing security, and streamlining traceable and auditable compliance. By harnessing AI and ML tools, predictive models can be tailored to specific products or customer segments, enhancing decision-making in a dynamic market. This streamlined approach identifies growth opportunities and nurtures a culture of adaptability and innovation.
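To make the pairing of domain-owned data with an SSOT more concrete, here is a toy Python sketch. It assumes a hypothetical in-memory customer registry acting as the SSOT and two hypothetical domain data products (lending and cards) that keep their own fields but must reference canonical customer IDs; the class and field names are illustrative and not taken from any specific data mesh product. `validate` flags records that do not resolve to the SSOT, and `enrich` attaches the shared canonical attributes so every domain reports the same customer facts.

```python
from dataclasses import dataclass

# Hypothetical SSOT: canonical customer records shared by every domain.
SSOT_CUSTOMERS = {
    "C001": {"name": "A. Sharma", "kyc_verified": True},
    "C002": {"name": "R. Iyer", "kyc_verified": False},
}


@dataclass
class DataProduct:
    # A domain-owned dataset: each domain chooses its own fields, but every
    # record must reference a canonical customer_id from the SSOT.
    domain: str
    records: list

    def validate(self, ssot: dict) -> list:
        # Records whose customer_id does not resolve in the SSOT.
        return [r for r in self.records if r["customer_id"] not in ssot]

    def enrich(self, ssot: dict) -> list:
        # Attach canonical SSOT attributes (e.g. KYC status) to valid records.
        return [{**r, **ssot[r["customer_id"]]} for r in self.records if r["customer_id"] in ssot]


loans = DataProduct("lending", [
    {"customer_id": "C001", "loan_amount": 500000},
    {"customer_id": "C003", "loan_amount": 120000},  # not in the SSOT
])
cards = DataProduct("cards", [
    {"customer_id": "C002", "credit_limit": 150000},
])

for product in (loans, cards):
    print(product.domain, "orphaned records:", product.validate(SSOT_CUSTOMERS))
print(cards.enrich(SSOT_CUSTOMERS))
```

The design choice being illustrated is that domains stay independent in what they store, while identity and compliance-relevant facts come from one shared place, which is what makes the result traceable and auditable.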

L&D trends that will define 2024

AI-assisted coding/software development employs AI to help write and review code. The technology can help new developers improve their code and save time. The edtech sector, in particular, will employ AI to create customised learning experiences, in addition to using tools that offer instant feedback on code. We could be looking at automated assessments for unbiased, error-free evaluations. Manually identifying personalised learning journeys for numerous individuals is time-consuming and extremely difficult. AI-assisted coding can help solve this operational challenge. Soon, we’ll give users quick, accurate responses and allow them to accelerate their learning journeys. ... Organisations will focus on data-driven, business-aligned learning initiatives for specific job-role competencies. The aim is to quantify L&D impact by easily tracking employee metrics such as job performance, efficiency, engagement, and employee satisfaction in new ways. When properly implemented, the accumulated data can raise confidence levels among higher-ups and lead to sustained investment in training practices. Organisations also analyse the information to identify areas of positive impact and focus L&D efforts on those areas to achieve better outcomes more often.

New Guidance Urges US Water Sector to Boost Cyber Resilience

"Cyber threat actors are aware of - and deliberately target - single points of failure," the guidance states. "A compromise or failure of a water and wastewater sector organization could cause cascading impacts throughout the sector and other critical infrastructure sectors." The incident response guide aims to provide organizations with best practices for all four stages of the incident response life cycle, from preparation through detection, recovery, and post-incident activities. The guidance says "the cyber incident reporting landscape is constantly evolving" and encourages water sector officials to review their reporting obligations and "consider engaging in additional voluntary reporting and/or information sharing" measures. Eric Goldstein, CISA's executive director for cybersecurity, said in a statement announcing the joint guidance that the U.S. water and wastewater sector "is under constant threat from malicious cyber actors." "In the new year, CISA will continue to focus on taking every action possible to support 'target-rich, cyber-poor' entities like WWS utilities by providing actionable resources and encouraging all organizations to report cyber incidents," he said.

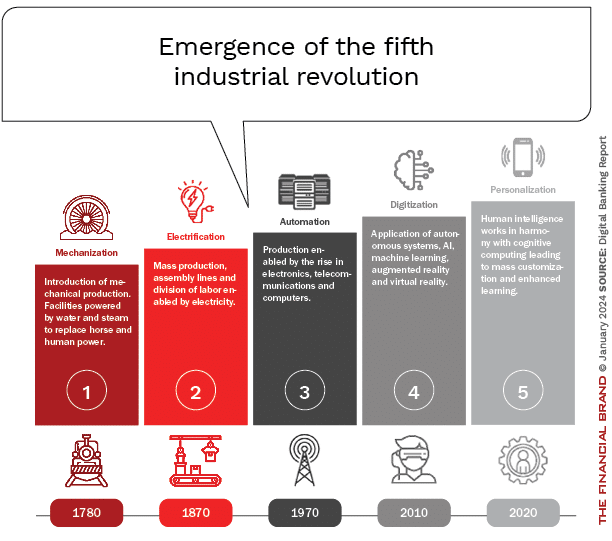

Banking at the Precipice: Navigating the Fifth Industrial Revolution

As retail banking stands amid the Fourth Industrial Revolution’s digital transformation, leaders must now prepare for an imminent Fifth Industrial Revolution poised to profoundly reshape markets and experiences. The emerging revolution is expected to be defined by extreme personalization, mass customization and precision augmentation, yet its exact disruptions remain somewhat unclear. Still, advancements in generative artificial intelligence, ambient interfaces and hyper-connectivity hint at consumer-in-command days ahead. ... Most of these Fifth Industrial Revolution financial applications seem unimaginable today. Imagine augmented live views that overlay physical spaces such as a retail store, billboard or car dealership with tailored offers based on persona identification and real-time transactional and behavioral data. Going further, imagine a ‘digital twin agent’ seamlessly negotiating a personalized deal or instantly securing pre-approved financing. In this world, augmented and mixed reality interfaces, bridging physical and virtual worlds, will be able to move money experiences from transactions to value-based propositions based on where your eyes focus and the engagements you have had in the past.

How generative AI is changing entrepreneurship

Entrepreneurs are expected to do a wide range of time-consuming tasks, from writing emails and answering phone calls to orchestrating product demonstrations and coding a website. “AI does all of those things well,” Mollick said. “It lets you focus more on what your top skill is, and it kind of handles everything else.” Generative AI can also serve as a guide. “A third of Americans have a business idea that they haven’t acted on because they don’t know what to do next,” Mollick said. “The AI can tell you what to do next, help you write the emails, [and] help you build the product.” Mollick noted that users should be aware of both the benefits and the limitations of the technology. “It’s kind of like an intern who wants to make you happy and therefore lies a lot and is kind of naive [and] never admits that they made a mistake,” he said. “Once you think about [AI] that way, you end up in much better shape.” Mollick said generative AI is a new general-purpose technology, like electricity, computers, and the internet: one that comes around once in a generation and touches just about everything humans do. For entrepreneurs, generative AI can assist with researching ideas, coming up with logos and names, creating a website, and more, Mollick said.

Quote for the day:

"Leadership is not about titles, positions, or flow charts. It is about one life influencing another." -- John C. Maxwell
