Cloud infrastructure spending is growing

Although I love to be right about the strong cloud spending, that does not mean it’s suitable for all enterprises. Indeed, the trend will be to overspend, even after net-new finops deployments that closely monitor where the dollars are spent. We must focus on accountability, automation, and discipline around allocating and paying for cloud resources. I suspect many cloud deployments are hugely underoptimized and need a tune-up. Even though some of this shared infrastructure spending is unavoidable, CIOs need to review how the spending occurs and look for opportunities to save dollars without reducing the value generated by these systems. I suggest companies consider all other options, such as bringing some processing into enterprise data centers. On-premises prices have been falling, while public cloud prices have been stable or rising. Also, many systems function in isolation and don’t benefit much from existing within a public cloud. Simple storage is one example, and many enterprises are putting those systems on-premises these days.
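
As a rough illustration of the accountability point above, the sketch below rolls up monthly spend by owner and measures how much of it has no accountable owner. The line items, owner tags, and the `UNALLOCATED` label are all hypothetical; a real finops tool would read them from a provider's billing export.

```python
from collections import defaultdict

# Hypothetical billing line items: (resource_id, owner_tag or None, monthly_cost_usd)
LINE_ITEMS = [
    ("vm-001", "payments", 1200.0),
    ("vm-002", "analytics", 800.0),
    ("bucket-17", None, 450.0),
    ("db-003", "payments", 300.0),
    ("vm-099", None, 250.0),
]


def allocate_costs(line_items):
    """Sum monthly cost per owner tag; untagged spend is grouped as UNALLOCATED."""
    totals = defaultdict(float)
    for _resource_id, owner, cost in line_items:
        totals[owner or "UNALLOCATED"] += cost
    return dict(totals)


def unallocated_share(totals):
    """Return the fraction of total spend that has no accountable owner."""
    total = sum(totals.values())
    return totals.get("UNALLOCATED", 0.0) / total if total else 0.0


totals = allocate_costs(LINE_ITEMS)
share = unallocated_share(totals)

for owner, cost in sorted(totals.items(), key=lambda item: -item[1]):
    print(f"{owner:12s} ${cost:,.2f}")
print(f"Unallocated share of spend: {share:.1%}")
```

Tracking the unallocated share over time is one simple way to tell whether tagging discipline is improving.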

Business analysts (BAs) are responsible for creating new models that support business decisions by working closely with finance and IT teams to establish initiatives and strategies aimed at improving revenue and/or optimizing costs. Business analysts need a “strong understanding of regulatory and reporting requirements as well as plenty of experience in forecasting, budgeting, and financial analysis combined with understanding of key performance indicators,” according to Robert Half Technology. ... Business analysts need to know how to pull, analyze and report data trends, share that information with others, and apply it to business goals and needs. Not all business analysts need a background in IT if they have a general understanding of how systems, products, and tools work. Alternatively, some business analysts have a strong IT background and less experience in business but are interested in shifting away from IT into this hybrid role. The role often acts as a communicator between the business and IT sides of the organization, so having extensive experience in either area can be beneficial for business analysts.

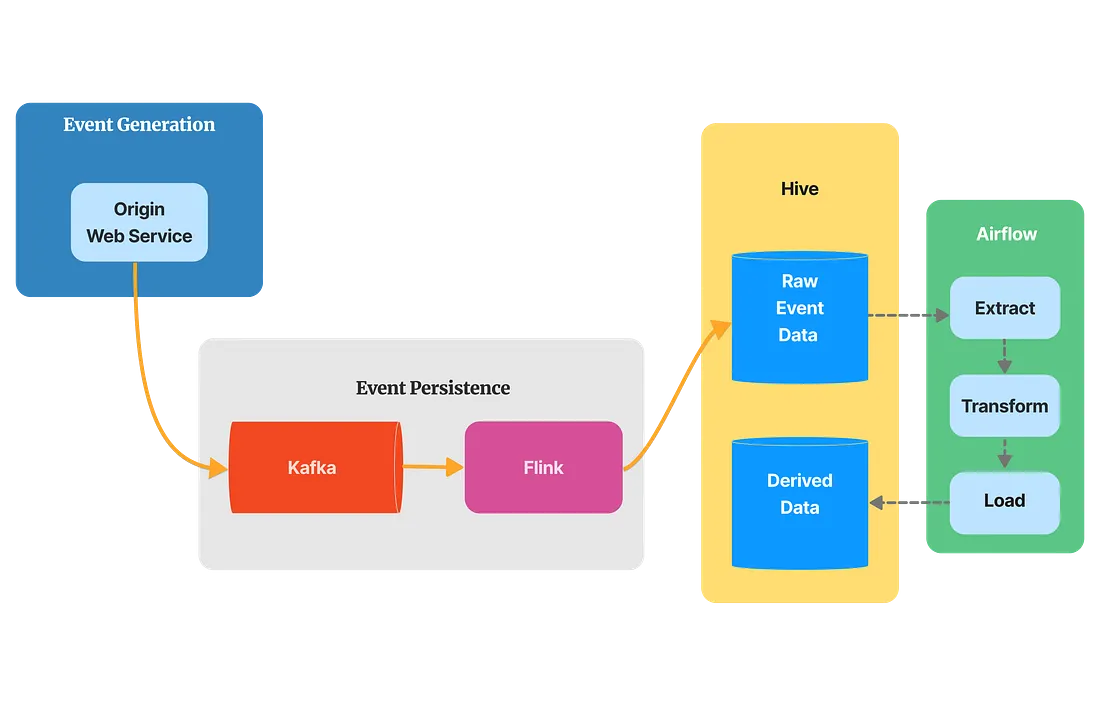

AI Needs Data More Than Data Needs AI

While data plays a foundational role in AI, the reverse is not true. Data doesn't inherently need AI to exist or be valuable. Data, in various forms, has been collected and analyzed for centuries without the need for sophisticated AI algorithms. Data on its own can provide valuable insights and inform decision-making processes. Therefore, organizations should not blindly chase the AI hype at the cost of ignoring the importance of data management and data quality. The role of AI is to take the computation and insights of good quality data to the next level and not necessarily attempt to fix the decades-old data management processes. ... While AI relies heavily on data for its operation and evolution, data can benefit from AI in several ways. Data Management: AI can help automate data management tasks, making it easier to process, clean and organize large datasets. Predictive Insights: AI can uncover patterns and insights in data that may not be immediately apparent to humans, enhancing the value of the data.

Enterprises see AI as a worthwhile investment

Despite prior industry research indicating that 90% of AI initiatives fail to produce substantial ROI and roughly half never leave the prototype stage, the overwhelming majority of respondents to this survey (92%) find business value from their models in production and 66% feel their models have delivered results that are outstanding or exceed expectations. Common use cases for AI among these leading-edge organizations include personalizing the customer experience, fraud detection, optimizing sales and marketing and improving real-time decision making. The success of this group offers a basic roadmap that other organizations should consider when developing their own best practices, including: Approach: A majority of responding organizations have a robust, defined approach and a dedicated team for monitoring ML models in production. In fact, among larger enterprises, 71% have at least 100 people working in ML while over half have more than 250.

5 Strategies for Cloud Security in Health Care

Adopting data security in the cloud doesn’t mean merely uploading patient data to S3 and enabling encryption. There are many security controls that need to be in place before a single patient record is migrated. For instance, there is particular concern about data security on medical devices and wireless body area networks (devices that are embedded in a patient’s body). Obviously, it’s vital to secure such devices from exploits. When running services on the cloud, you should review all relevant data privacy considerations and encryption controls, including data encryption, public-key encryption, identity-based encryption, identity-based broadcast encryption and attribute-based encryption. Then adopt a framework for achieving secure and controlled identity access using federation (like SAML or OpenID Connect, which is not the same as OpenID). Finally, you should ensure that monitoring and audit controls are in place to maintain confidentiality. You should also have an incident response plan in place to handle crisis scenarios.

Financial Institutions Turn to AI and Cloud to Solve Data Challenges

In data management, the potential uses of GenAI, powered by large language models, have been recognised by many financial institutions, including State Street. For instance, it can help in the cross-mapping of datasets, the classifying of data and more generalist applications such as summarising reports and responding to plain English inquiries. ... The Alpha platform uses GenAI with Snowflake as a strategic partner providing the data foundation of the platform. Snowflake’s cloud-native architecture streamlines data sharing and governance, enables faster time to market for data-centric applications, and offers a rich environment of AI and machine learning-based capabilities for data scientists, quants and engineers. “Every few years, the technology landscape re-sets, creating a small window of opportunity that in turn enables a giant leap in innovation; GenAI is the opportunity that will define the new set of industry leaders over the next decade,” State Street Executive Vice President and Chief Architect Aman Thind tells A-Team Group.

Building data center networks for GenAI fabric enablement

Building GenAI data centers from a network perspective differs greatly from traditional data center buildouts -- or even those that were designed to support high-performance computing (HPC). ... After all, the pace of a GenAI application is only as fast as its slowest component. If properly built, the network can be eliminated as a potential performance bottleneck. Building a highly scalable network is also key to GenAI data centers as it enables future growth capacity. Network switch fabrics must include hardware that can expand horizontally and vertically, as well as use network OSes on switching hardware that include advanced features, such as packet spraying, load awareness and intelligent traffic redirection. These features provide automated rerouting of traffic within the network and between GPUs that may become overloaded. ... Early GenAI adopters have concluded that the use of multisite or micro data centers is the best option to accommodate this level of density. And, yet again, this puts pressure on the network interconnecting these sites to be as high-performing and resilient as possible.
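
To make the packet spraying idea above more concrete, here is a minimal sketch that compares it with conventional per-flow hashing, where every packet of a flow follows the same link. The flow names, packet counts, and link count are hypothetical, and the round-robin loop is a simplified model. Real switch fabrics also have to deal with packet reordering and live load feedback, which are not modeled here.

```python
import zlib

NUM_LINKS = 4

# Hypothetical traffic: (flow_id, packet_count). One large "elephant" flow dominates.
FLOWS = [
    ("gpu0->gpu7", 9000),
    ("gpu1->gpu4", 300),
    ("gpu2->gpu5", 400),
    ("gpu3->gpu6", 300),
]


def per_flow_hashing(flows, num_links):
    """Pin each flow to a single link chosen by hashing its flow ID."""
    load = {link: 0 for link in range(num_links)}
    for flow_id, packets in flows:
        link = zlib.crc32(flow_id.encode()) % num_links
        load[link] += packets
    return load


def packet_spraying(flows, num_links):
    """Spread individual packets across all links in round-robin order."""
    load = {link: 0 for link in range(num_links)}
    next_link = 0
    for _flow_id, packets in flows:
        for _ in range(packets):
            load[next_link] += 1
            next_link = (next_link + 1) % num_links
    return load


hashed = per_flow_hashing(FLOWS, NUM_LINKS)
sprayed = packet_spraying(FLOWS, NUM_LINKS)

print("per-flow hashing, busiest link:", max(hashed.values()), "packets")
print("packet spraying, busiest link: ", max(sprayed.values()), "packets")
```

With per-flow hashing, the link that carries the elephant flow handles at least 9,000 of the 10,000 packets whatever the hash assigns, while spraying spreads the same traffic evenly across all four links.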

Breach Roundup: Still Too Much ICS Exposed on the Internet

Apple responded to an actively exploited zero-day flaw in iOS and iPadOS on Wednesday with the release of security patches. The identified vulnerability, tracked as CVE-2023-42824, exists in the kernel and may allow an attacker to elevate privileges. "Apple is aware of a report that this issue may have been actively exploited against versions of iOS before iOS 16.6," the company said. The update also addresses CVE-2023-5217, a WebRTC component issue. WebRTC is an open-source project that supports real-time communication between browsers and mobile applications, powering uses such as video and voice calling. ... Sony Interactive Entertainment alerted around 6,800 individuals about a cybersecurity breach. The intrusion resulted from an unauthorized party exploiting a zero-day vulnerability, tracked as CVE-2023-34362, in the MOVEit file transfer platform. This critical-severity SQL injection flaw, leading to remote code execution, was used by the Clop ransomware gang in widespread attacks in late May.

8 Ways to Combat Ageism in Your Job Search

Workplace experts say candidates can combat this by showing what efforts they've made to quickly pick up new skills and by showing enthusiasm for future learning. That might mean enrolling in extra training courses, getting new certifications and highlighting them in your résumé or interview, North said. Younger workers may need to show that they have taken proactive measures to learn new job skills they may lack. Older workers may want to show that they can keep up with fast-paced environments and various tech tools. ... "If you don't have to input this information, don't volunteer it," he said, adding that phrases like "40-plus years of experience" also may not be best. Instead, stick to your skills and experiences. If you lack experience in one area, show how your skills are transferable for this specific job. You can also be clear about any kind of transition, like a career change, or gap in employment by placing it in an executive summary section at the top of your résumé, Freeman said. Quantify your previous work's impact with numbers or qualify it by explaining how it affected the results.

Ransomware Crisis, Recession Fears Leave CISOs in Tough Spot

With a new ransomware target being attacked every 14 seconds, organizations must prioritize ransomware prevention. As ransomware grows more sophisticated, mitigating it is increasingly challenging. There's no silver bullet to eradicate attacks, and having to operate in a tight market adds a layer of complexity. CISOs and security leaders must focus on the best return on investment while building out a multilayered approach for improving their overall IT security. One strategy to accomplish this is managing attack vectors using encrypted channels with preventive technologies that can stop adversaries before they have a chance to compromise networks or while they are executing their multistep campaigns. ... Ransomware gangs also take advantage of legitimate websites that are encrypted with SSL/TLS, and so look secure, but have been infected with drive-by downloads. And cybercriminals leech onto browser vulnerabilities that can lead to infection when the entry point is encrypted, allowing encrypted threats embedded with malicious payloads to go unnoticed.

Quote for the day:

“People are not lazy. They simply have impotent goals – that is, goals that do not inspire them.” -- Tony Robbins