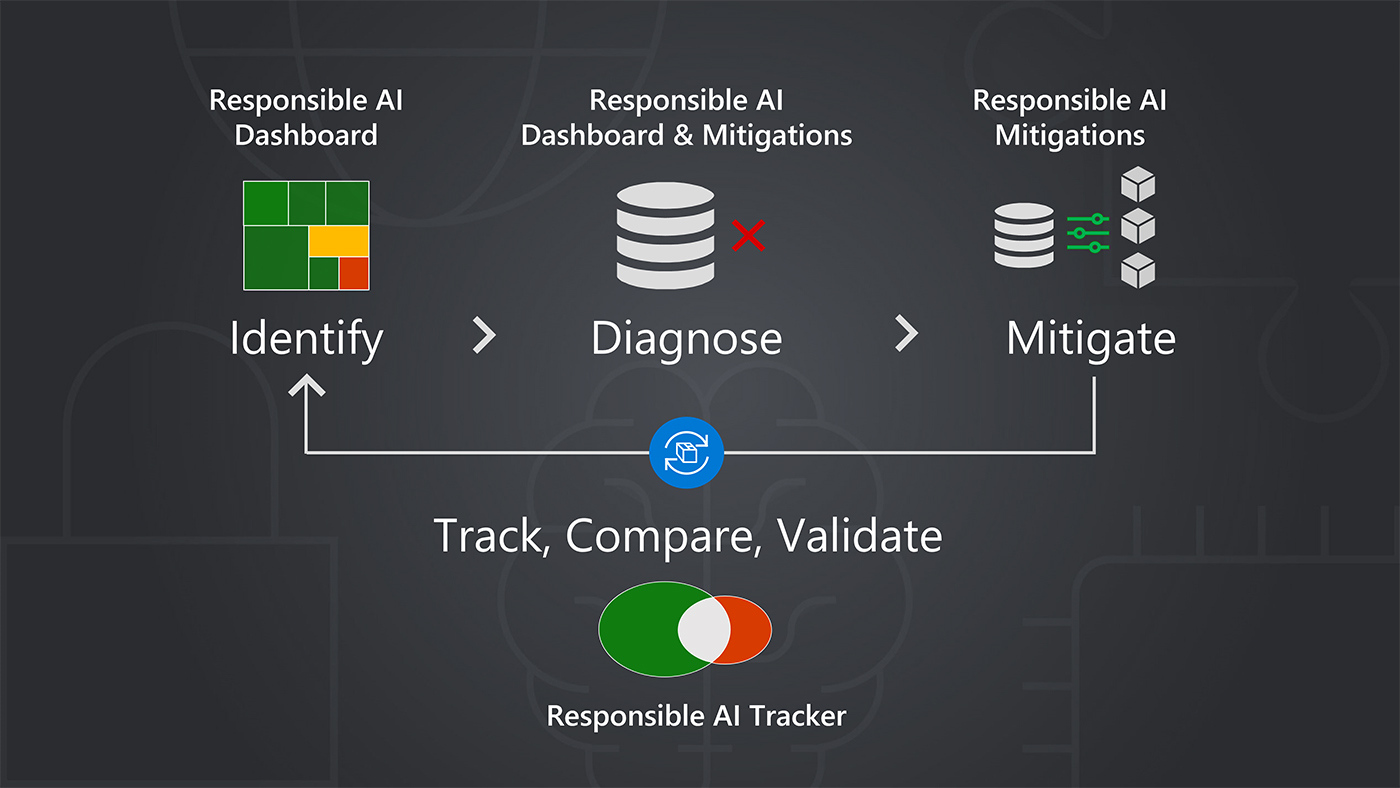

Computer says no. Will fairness survive in the AI age?

A number of risks fall outside these existing laws and regulations, so while lawmakers wrestle with the far-reaching ramifications of AI, industry bodies and other groups are driving the adoption of guidance, standards and frameworks, some of which might become standard industry practice even without the force of law. One example is the US National Institute of Standards and Technology's AI risk management framework, which is intended "for voluntary use and to improve the ability to incorporate trustworthiness considerations into the design, development, use, and evaluation of AI products, services, and systems". ... Bias is one particularly important element. The algorithms at the centre of AI decision making may not be human, but they can still imbibe the prejudices that colour human judgement. Thankfully, policymakers in the EU appear to be alive to this risk. The bloc's draft Artificial Intelligence Act addressed a range of issues around algorithmic bias, arguing that technology should be developed to avoid repeating “historical patterns of discrimination” against minority groups, particularly in contexts such as recruitment and finance.

12 programming mistakes to avoid

Some say that a good programmer is someone who looks both ways when crossing a one-way street. But, like playing it fast and loose, this tendency can backfire. Software that is overly buttoned up can slow your operations to a crawl. Checking a few null pointers may not make much difference, but some code is just a little too nervous, checking that the doors are locked again and again so that sleep never comes. ... Scaling well is a challenge, and it is often a mistake to overlook the ways that scalability might affect how the system runs. Sometimes it’s best to consider these problems during the early stages of planning, when thinking is more abstract. Some features, like comparing each data entry to every other entry, are inherently quadratic, which means the work grows with the square of the data size: doubling the data roughly quadruples the running time. Dialing back on what you promise can make a big difference. Thinking about how much theory to apply to a problem is a bit of a meta-problem, because complexity often increases exponentially. Sometimes the best solution is careful iteration with plenty of time for load testing.
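The quadratic trap is easy to see in a small Python sketch that finds duplicate entries two ways: by comparing every entry with every other entry, and by remembering seen values in a set. The function names are illustrative, not from the article. The pairwise version performs n(n-1)/2 comparisons; the set-based version touches each entry once, assuming the entries are hashable.

```python
from itertools import combinations

def duplicates_pairwise(entries):
    """Compare every entry with every other one: n*(n-1)/2 comparisons."""
    found, comparisons = set(), 0
    for a, b in combinations(entries, 2):
        comparisons += 1
        if a == b:
            found.add(a)
    return sorted(found), comparisons

def duplicates_hashed(entries):
    """One pass with a set of seen values: n membership checks (entries must be hashable)."""
    seen, found = set(), set()
    for entry in entries:
        if entry in seen:
            found.add(entry)
        seen.add(entry)
    return sorted(found), len(entries)

data = [3, 1, 4, 1, 5, 9, 2, 6, 5, 3]
print(duplicates_pairwise(data))  # ([1, 3, 5], 45)
print(duplicates_hashed(data))    # ([1, 3, 5], 10)
# Doubling the input roughly quadruples the pairwise work.
print(duplicates_pairwise(list(range(200)))[1], duplicates_pairwise(list(range(400)))[1])  # 19900 79800
```

Swapping the algorithm changes the growth curve itself, which is usually worth more than tuning the inner loop of the quadratic version.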

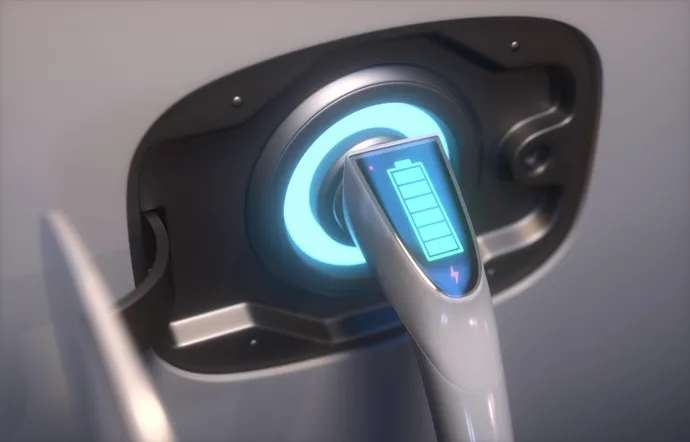

EV Charging Infrastructure Offers an Electric Cyberattack Opportunity

The risks are not just theoretical: a year ago, after Russia invaded Ukraine, hacktivists compromised charging stations near Moscow to disable them and display their support for Ukraine and their contempt for Russian President Vladimir Putin. ... In many ways, EV charging infrastructure represents a perfect storm of technologies. The devices are connected via mobile applications and carry the same risks as other IoT devices, but, like other operational technology (OT), they're also set to become a critical part of the transportation network in the United States. And because EV charging stations must be connected to public networks, ensuring that their communications are encrypted will be critical to maintaining the security of the devices, says Dragos' Tonkin. "Hacktivists will always be looking for poorly secured devices on public networks; it's important that the owners of EV [chargers] put in place controls to ensure they are not easy targets," he says. "The crown jewels of the operators of EV chargers have to be their central platforms; the chargers themselves intrinsically trust the instructions pushed down from the center."
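Tonkin's point that chargers intrinsically trust the central platform suggests one complementary control: have each charger check that a command really came from the operator before acting on it. The Python sketch below assumes a shared per-charger key and a made-up JSON command format (neither comes from the article or from any charging protocol) and uses HMAC-SHA256 from the standard library. It complements, rather than replaces, encrypting the link itself.

```python
import hashlib
import hmac
import json
from typing import Optional

# Hypothetical per-charger secret provisioned out of band (an assumption for illustration).
CHARGER_KEY = b"example-key-provisioned-at-install"

def sign_command(key: bytes, command: dict) -> dict:
    """Central platform side: serialize the command and attach an HMAC-SHA256 tag."""
    payload = json.dumps(command, sort_keys=True, separators=(",", ":"))
    tag = hmac.new(key, payload.encode(), hashlib.sha256).hexdigest()
    return {"payload": payload, "tag": tag}

def verify_command(key: bytes, message: dict) -> Optional[dict]:
    """Charger side: return the command only if its tag matches, otherwise None."""
    expected = hmac.new(key, message["payload"].encode(), hashlib.sha256).hexdigest()
    # compare_digest avoids leaking, via timing, how many characters matched.
    if not hmac.compare_digest(expected.encode(), message["tag"].encode()):
        return None
    return json.loads(message["payload"])

msg = sign_command(CHARGER_KEY, {"action": "stop_session", "connector": 1})
print(verify_command(CHARGER_KEY, msg))       # {'action': 'stop_session', 'connector': 1}
tampered = dict(msg, payload=msg["payload"].replace("stop", "start"))
print(verify_command(CHARGER_KEY, tampered))  # None
```

A real deployment would also need replay protection (for example a nonce or timestamp) and proper key management, both of which this sketch leaves out.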

Can WebAssembly Solve Serverless’s Problems?

Wasm's computing structure is designed in such a way that it has “shifted” the potential of the serverless landscape, Butcher said. This is due, he said, to WebAssembly’s nearly instant startup times, small binary sizes, and platform and architectural neutrality: Wasm binaries can be executed with a fraction of the resources required to run today’s serverless infrastructure. “Contrasted with heavyweight [virtual machines] and middleweight containers, I like to think of Wasm as the lightweight cloud compute platform,” he noted. “Developers package up only the bare essentials: a Wasm binary and perhaps a few supporting files. And the Wasm runtime takes care of the rest.” An immediate benefit of relying on Wasm’s runtime for serverless is lower latency, especially when extending Wasm’s reach not only beyond the browser but also away from the cloud. This is because Wasm binaries can be distributed directly to, and run on, edge devices with relatively little data-transfer and computing overhead.
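To give a sense of how small "the bare essentials" can be, here is a complete WebAssembly module exporting a single `add(i32, i32) -> i32` function, assembled by hand from the Wasm binary format and held in a Python `bytes` object. The article includes no code; this module, the `list_sections` helper, and the section labels are illustrative assumptions. The helper only walks the top-level section headers. It does not validate or execute the module, which would require a Wasm runtime.

```python
# A complete Wasm module exporting add(i32, i32) -> i32, assembled by hand.
ADD_WASM = bytes([
    0x00, 0x61, 0x73, 0x6D,  # magic "\0asm"
    0x01, 0x00, 0x00, 0x00,  # binary format version 1
    # Type section (id 1): one function type (i32, i32) -> i32
    0x01, 0x07, 0x01, 0x60, 0x02, 0x7F, 0x7F, 0x01, 0x7F,
    # Function section (id 3): one function using type 0
    0x03, 0x02, 0x01, 0x00,
    # Export section (id 7): export function 0 under the name "add"
    0x07, 0x07, 0x01, 0x03, 0x61, 0x64, 0x64, 0x00, 0x00,
    # Code section (id 10): body = local.get 0, local.get 1, i32.add, end
    0x0A, 0x09, 0x01, 0x07, 0x00, 0x20, 0x00, 0x20, 0x01, 0x6A, 0x0B,
])

SECTION_NAMES = {1: "type", 3: "function", 7: "export", 10: "code"}

def read_uleb128(data: bytes, pos: int) -> tuple:
    """Decode an unsigned LEB128 integer; return (value, next_position)."""
    result, shift = 0, 0
    while True:
        byte = data[pos]
        pos += 1
        result |= (byte & 0x7F) << shift
        if byte & 0x80 == 0:
            return result, pos
        shift += 7

def list_sections(module: bytes) -> list:
    """Walk the top-level sections of a Wasm binary and report (name, size)."""
    if module[:4] != b"\x00asm" or module[4:8] != b"\x01\x00\x00\x00":
        raise ValueError("not a version-1 WebAssembly binary")
    pos, sections = 8, []
    while pos < len(module):
        section_id = module[pos]
        size, pos = read_uleb128(module, pos + 1)
        sections.append((SECTION_NAMES.get(section_id, f"id {section_id}"), size))
        pos += size
    return sections

print(len(ADD_WASM))            # 41
print(list_sections(ADD_WASM))  # [('type', 7), ('function', 2), ('export', 7), ('code', 9)]
```

Real modules compiled from higher-level languages carry more code and metadata, so 41 bytes is a lower bound for illustration, not a typical size.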

Tracking device technology: A double-edged sword for CISOs

Clearly, on the logistics side of the equation, vehicles and other things can be tagged and tracked with relative ease. Not only will this help with locating and counting inventory, but the technology can also be used to trigger an alert when items that are supposed to stay within a specific geographic footprint leave it. Then there is the negative side of the equation, in which employees might use the corporate tracking capability for nefarious purposes or bring their own tracking devices into the corporate environment. But don’t stop with the employee. What of the vendor or the competition? How might they wish to use these tracking devices to garner a bit of competitive intelligence? Tracking the movements of gear or people might be prudent in specific circumstances, such as visitors to a corporate building. A badge outfitted with the technology can be monitored to ensure visitors stay within the areas to which they are granted access and, if escorts are required, an escort tag can be issued to confirm that the visitor's corporate escort is within proximity.
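The geographic-footprint alert described above reduces to a distance check. Below is a minimal Python sketch assuming tags report latitude and longitude and the permitted footprint is a circle; the tag IDs, the circular fence, and the function names are illustrative assumptions, and real deployments often use polygon fences or indoor positioning instead.

```python
import math

EARTH_RADIUS_M = 6_371_000  # mean Earth radius in metres

def haversine_m(lat1, lon1, lat2, lon2):
    """Great-circle distance in metres between two points given in degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    d_phi = math.radians(lat2 - lat1)
    d_lambda = math.radians(lon2 - lon1)
    a = math.sin(d_phi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(d_lambda / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))

def geofence_alerts(readings, centre, radius_m):
    """Return (tag_id, rounded distance in metres) for each reading outside the fence."""
    alerts = []
    for tag_id, lat, lon in readings:
        distance = haversine_m(centre[0], centre[1], lat, lon)
        if distance > radius_m:
            alerts.append((tag_id, round(distance)))
    return alerts

# Hypothetical tags around a fence centred on (0, 0) with a 500 m radius.
readings = [("forklift-7", 0.0, 0.001), ("pallet-42", 0.01, 0.0)]
print(geofence_alerts(readings, (0.0, 0.0), 500))  # [('pallet-42', 1112)]
```

The same distance function could back the escort check, for example by raising an alert when a visitor badge and its escort tag are farther apart than some chosen threshold.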

US Official Reproaches Industry for Bad Cybersecurity

Easterly specifically called out Google's August 2022 debut of Android 13, the first Android release in which a majority of the new code was written in a memory-safe language. Easterly said there wasn't a single memory safety vulnerability discovered in the Rust code added to Android 13. Rust, whose development Mozilla sponsored and which reached version 1.0 in 2015, is also used in parts of Mozilla's Firefox web browser. Amazon Web Services has begun to build critical services in Rust, which Easterly said has resulted in both security benefits and time and cost savings for the public cloud behemoth. Making memory-safe languages ubiquitous within universities will serve as a building block for companies migrating their key libraries to memory-safe languages, Easterly said. This effort hinges on the technology industry containing, and eventually rolling back, the prevalence of C and C++ in key systems. C and C++ are still written and taught because of the belief that migrating away from them would harm performance.

A key post-quantum algorithm may be vulnerable to side-channel attacks

Quantum computers have the potential to crack the cryptographic algorithms in use today, which is why “post-quantum” cryptographic algorithms are designed to be so strong that they can survive huge leaps in computing power. A team in Sweden, however, says it’s possible to attack some of the new algorithms with other methods. Researchers at the KTH Royal Institute of Technology say they found a vulnerability in a specific implementation of CRYSTALS-Kyber, a “quantum safe” algorithm that the U.S. National Institute of Standards and Technology has selected as part of its potential standards for future cryptographic systems. According to the Swedish team, that implementation of CRYSTALS-Kyber is vulnerable to side-channel attacks, which use information leaked by a computer system to gain unauthorized access or extract sensitive information. Instead of trying to guess a secret key, a side-channel technique analyzes data such as small variations in power consumption or electromagnetic radiation to reconstruct what the machine is doing and find clues that would enable access.
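Side channels are easier to grasp with a small, deterministic example. The Python sketch below uses timing rather than the power or electromagnetic measurements mentioned above, and it models neither CRYSTALS-Kyber nor the KTH team's attack. An early-exit byte comparison stands in for leaky code, with the number of bytes examined playing the role of measured time; the hypothetical `recover_secret` helper then rebuilds the secret one byte at a time without ever guessing it whole.

```python
import hmac
from typing import Callable, Tuple

def leaky_equals(secret: bytes, guess: bytes) -> Tuple[bool, int]:
    """Early-exit comparison returning (match, bytes_examined).

    bytes_examined stands in for running time: it grows with the length of
    the correct prefix, which is what a timing side channel would reveal.
    """
    if len(secret) != len(guess):
        return False, 0
    examined = 0
    for s, g in zip(secret, guess):
        examined += 1
        if s != g:
            return False, examined
    return True, examined

def recover_secret(length: int, oracle: Callable[[bytes], Tuple[bool, int]]) -> bytes:
    """Recover a secret byte by byte using only the leaked work count."""
    known = b""
    for pos in range(length):
        padding = bytes(length - pos - 1)
        best_byte, best_work = 0, -1
        for candidate in range(256):
            guess = known + bytes([candidate]) + padding
            match, work = oracle(guess)
            if match:
                return guess
            # The correct byte lets the comparison run one step further.
            if work > best_work:
                best_byte, best_work = candidate, work
        known += bytes([best_byte])
    return known

def safe_equals(secret: bytes, guess: bytes) -> bool:
    """Comparison designed so its timing does not reveal where the inputs differ."""
    return hmac.compare_digest(secret, guess)

SECRET = b"k3y!"  # hypothetical secret for the demo
print(recover_secret(len(SECRET), lambda g: leaky_equals(SECRET, g)))  # b'k3y!'
```

Recovering the 4-byte secret takes at most 1,024 queries instead of the roughly 4.3 billion a blind search could need, which is why constant-time code matters. `hmac.compare_digest` is the standard library's comparison intended to resist this kind of timing leak.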

How to achieve and shore up cyber resilience in a recession

With cybercriminals waiting in the wings, there is growing concern about whether cutting cybersecurity investment is a false economy. However, investing in expensive security tools will be ineffective if organizations neglect to put the right foundational security practices in place. When it comes to elevating organizational resilience, CIOs don’t need to choose between savings and safety. By reviewing processes, revisiting the basics, making the most of existing resources, and focusing on internal training, organizations can increase their security and digital resilience. Selectively deploying cybersecurity tools and product kits can then complement these good practices in a highly cost-effective way. In a downturn, it pays to reset cybersecurity priorities and review how and where finite resources can best be deployed. Unfortunately, all too often organizations conflate good security practices with good security purchases, in the misbegotten belief that, somehow, it’s possible to “buy security”.

Companies can’t stop using open source

Freely downloadable code has never been truly free (as in cost). The bits might be free, but there’s a cost to managing those bits. Developers always cost more than the code they write or manage. This may be one reason that, when enterprises were asked what they most value in “open source leadership,” they responded with “makes it easy to deploy my preferred open source software in the cloud.” Companies increasingly want the benefits of open source without the expense of managing it themselves. ... Despite these problems and despite open source costs, even those who think open source is more expensive than proprietary alternatives say its benefits outweigh those costs. Chesbrough, when conducting the survey for the Linux Foundation, asked about this seemingly counterintuitive finding. “If you think [open source is] more expensive, why are you still using it?” he asked one respondent. Their response? “The code is available.” Meaning, “If we were to construct the code ourselves, that would take some amount of time. ...”

Do you have the courage of your convictions?

A courageous leader also has a healthy appreciation for the fact that sticking your neck out carries the risk of being wrong or failing. Many CEOs and senior leaders are looking to promote managers who have failed and can show they have learned from the experience. They want leaders who take big swings and, if they stumble, figure out what went wrong. But still, we’re all too prone to put up facades of invincibility and perfection, polishing resumes that show a smooth trajectory and a consistent record of success. In job interviews, candidates are unwilling to acknowledge any failures or weaknesses beyond the predictable non-answers of “I work too hard” or “I care too much.” “People who don’t make bad decisions are indecisive and risk-averse,” said David Kenny, who was CEO of the Weather Company when I interviewed him years ago (he now runs market research firm Nielsen). “I love hiring people who’ve failed. We’ve got some great people here with some real flameouts.”

Quote for the day:

"When you accept a leadership role, you take on extra responsibility for your actions toward others." -- Kelley Armstrong