Preparing for Compliance With AI, Data Privacy Laws

Even though enforcement of data privacy laws in California and New York

has been slightly delayed, and California regulations implementing the new AI

law are not yet fully baked, businesses should be employing expert consultants

now to be ready when enforcement begins. Platz notes that in the working

world -- and especially in an environment that is often largely remote with

employees around the country and the world -- these new privacy laws will affect

employees beyond the states that enacted the laws if they live and work in

different locations. “With flexibility to work from virtually anywhere,

this legislation will have wide-reaching impact across states and sectors and

will only highlight the need for employers to look closely at their path to

compliance across a significant amount of data,” Platz says. ... “As

almost always happens, many other jurisdictions will follow suit, as New York

City already has,” he says. “So, businesses should be preparing to deal not just

with these two new laws but, ultimately, with similar ones in most or all states

and perhaps other cities.”

While governments pass privacy laws, companies struggle to change

No single approach can ward off all dangers—it takes a potent combination of

technologies, policies, and practices, all with boardroom support. Remember,

employees often represent the weakest link in the data security chain since a

simple phishing email can bypass the most sophisticated defenses. Strong

protection starts with practical training and enforcement. Management can also

help ensure every strategy builds on a solid foundation. Many enterprises are

now engaged in major digital transformation and cloud migration initiatives.

However, some still need help answering basic questions: Do we know where every

piece of data in the house resides? Do we know how much of it contains PII, and

who has access to it? How is the data managed in the cloud? What kind of

encryption has been applied? Where are the encryption keys stored, and who has

access to those? ... This way, there are no shared network resources, and the

enhanced security is matched with greater flexibility to ensure a

company-specific deployment—a dedicated cloud tenant and custom software to

address specific needs.
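The inventory questions raised above (where data resides and how much of it contains PII) can be approached with a first-pass scan. The following is only a minimal sketch: the `PII_PATTERNS` table, the `scan_records` helper, and the sample records are hypothetical, and two regular expressions are nowhere near a complete PII classifier.

```python
import re

# Hypothetical, deliberately small pattern table; real PII discovery
# needs far broader coverage (names, addresses, phone numbers, etc.).
PII_PATTERNS = {
    "email": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def scan_records(records):
    """Return {record_id: sorted list of PII kinds found in that record}."""
    findings = {}
    for record_id, text in records.items():
        kinds = sorted(
            kind for kind, pattern in PII_PATTERNS.items() if pattern.search(text)
        )
        if kinds:
            findings[record_id] = kinds
    return findings


if __name__ == "__main__":
    sample = {
        "crm/1": "Contact jane@example.com about renewal",
        "hr/7": "SSN 123-45-6789, email bob@example.org",
        "ops/3": "Rotate logs weekly",
    }
    for record_id, kinds in scan_records(sample).items():
        print(record_id, kinds)
```

A scan like this answers only the "how much contains PII" question; who has access and how keys are stored still have to come from access-control and key-management records.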

Is the Answer to Your Data Science Needs Inside Your IT Team?

Allowing data scientists and developers to work together in real time provides

multiple benefits. First, it allows for more expeditious and agile development

of intelligent apps. Second, it allows developers and data scientists to learn

about each other’s needs and processes. When the two groups are closely

connected and understand each other, it improves the chances of project

success. Agile application development requires everyone to work in sync. When

Red Hat began exploring ways to bridge the gap that has traditionally existed

between developers and data scientists, we expanded on the idea of creating a

common platform for real-time collaboration between them. Within this common

platform, development and data science teams would have access to all the

tools they need to perform their tasks, and could quickly build and share

production pipelines. ... Open Data Hub was so effective at solving our

internal data science and development challenges that we ultimately evolved it

into a commercial offering called Red Hat OpenShift Data Science.

20 Ways to Achieve Street Smart Wisdom for Leaders and Entrepreneurs

Adaptive thinking highlights the need to cultivate an open mindset and to

adjust swiftly to changing circumstances and obstacles. To succeed, leaders

need to be able to think quickly on their feet and modify their plans as

necessary. Adaptive thinking also means maintaining persistence and focus in

the face of difficulty. Creative problem-solving emphasizes the need to think

outside the usual paradigm and come up with unique solutions to challenging

problems. To create novel solutions, leaders need to be able to spot trends

and think creatively, which underlines how important it is to stay abreast of

recent trends and advancements. Lastly,

strategic planning emphasizes the need for a well-thought-out strategy and the

capacity to picture the desired outcome. Leaders must be able to foresee

possible difficulties and be ready to modify their plans as necessary. This

highlights the need to maintain organization and concentrate on long-term

objectives.

The Case for a Strong Data Governance Program in 2023

Effective data governance is also critical for complying with data-focused

regulations, especially data privacy laws. Following in the steps of the EU’s

General Data Protection Regulation, several U.S. states have introduced

privacy laws, with more states poised to do the same. Existing regulations

include California’s Privacy Rights Act and Consumer Privacy Act, along with

similar regulations in Colorado, Connecticut, Utah, and Virginia. In addition,

because many organizations today anticipate incorporating artificial

intelligence into decision making, they must make efforts to comply with

emerging AI regulations. The standard-bearer is the EU’s AI Act, which aims to

prevent potential data misuse and privacy violations. Acts like these depend

on organizations adopting strong data governance practices. Clearly, every

company today must have a data governance program. Lack of one can cause data

inconsistencies, complicate data integration efforts, and create data

integrity challenges. These issues can lead to a slew of negative outcomes:

reputational damage, fines for noncompliance, reduced efficiency, and, of

course, missed opportunities for business growth.

Government plans to catch tax fraudsters with help of AI

Cabinet Office minister Baroness Neville-Rolfe said fraud against “the public

purse is unacceptable and we’re stepping up the fight against those who wish

to profit off the backs of taxpayers”. “Through the use of cutting-edge

technology, the PSFA will use data and AI to help us in the fight against

fraudsters,” she added. The government previously signed another deal with

Quantexa, in October 2021, to help combat Covid-19 loan scheme fraud. During

the pandemic, fraudsters abused the government’s loan scheme, with a number of

businesses making fraudulent claims. The contract with Quantexa was part of

the government’s response to those criminal activities. As part of the

contract, the government used Quantexa’s Contextual Decision Intelligence

(CDI) platform, which enables customers to “create a connected view of [their]

data to reveal relationships between people, places and organisations”. It

analysed an initial set of 250 networks of people, organisations and places,

processing more than 100 million data items.

Insurance IT leaders herald new era for digital customer experience

With new platforms evolving, insurance CIOs are eyeing new possibilities for

the future. Liberty Mutual, which has been an industry leader in digital

transformation, operates a hybrid cloud infrastructure built primarily on

Amazon Web Services but with specific uses of Microsoft Azure and, to a lesser extent,

Google Cloud Platform. ... The insurance company under his direction spent 17

years developing a robust platform that today enables consumers to access an

automated claims system that uses chatbots, cameras, and e-mail to initiate a

claim and rent a car while a machine learning model analyzes the photograph of

the damaged vehicle to detect whether its airbag has been deployed, for

instance, and to determine immediately whether a vehicle is totaled or the

damage is limited to a fender bender. That’s today. The platform will enable

data scientists to build the next generation of applications for its consumers

tomorrow. “We’re really trying to understand the metaverse and what it might

mean for us,” said McGlennon.

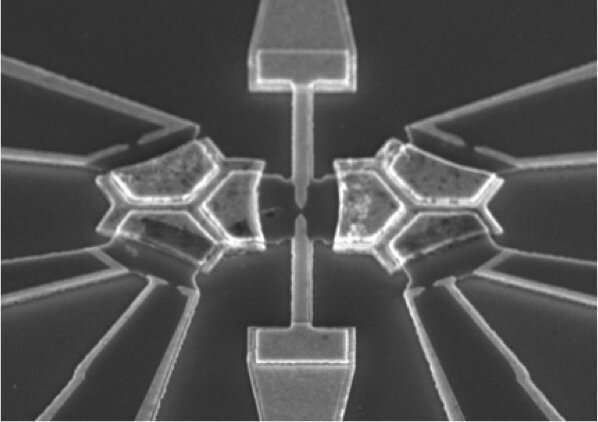

Lambda Throttling - How to Avoid It?

When your Lambda function reaches its maximum concurrent execution limit, it

is throttled and Lambda returns a throttling error. For asynchronous

invocations, Lambda has a retry mechanism with exponential backoff: the retry

interval starts at 1 second and grows to a maximum of 5 minutes, and retries

can continue for up to 6 hours (by default) to try to complete the execution

of a failed event. We should also mention that, to better error-proof your

code, you could use a dead-letter queue (DLQ), which other queues can target

for messages that can’t be processed or consumed successfully. A DLQ covers

the cases where the event still fails to execute, but that is just for

reference, and we will not dive into that now. The implications are

tremendous. It doesn’t matter if we send a message with SQS, EventBridge, or

other async services; you will practically never need to think about handling

throttling issues. ... However, in contrast to synchronous invocation, this

will not impact your application and service level agreement (SLA), as the

events will be kept in the internal Lambda service queue and handled in time

when the resources have freed up to manage them. Every single one of them.
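To make the retry window described above concrete, here is a minimal sketch of a capped exponential backoff schedule. The 1-second start, 5-minute cap, and 6-hour default window come from the text; the doubling factor and the function names `backoff_delays` and `retry_schedule` are assumptions for illustration, not a description of Lambda's internal implementation.

```python
def backoff_delays(base=1.0, cap=300.0, factor=2.0):
    """Yield retry delays in seconds: base, base*factor, ... capped at `cap`."""
    delay = base
    while True:
        yield min(delay, cap)
        delay = min(delay * factor, cap)


def retry_schedule(max_event_age=6 * 3600, base=1.0, cap=300.0):
    """Collect the delays that fit inside the maximum event age (seconds)."""
    schedule = []
    elapsed = 0.0
    for delay in backoff_delays(base, cap):
        if elapsed + delay > max_event_age:
            break  # the next retry would fall outside the window
        elapsed += delay
        schedule.append(delay)
    return schedule


if __name__ == "__main__":
    schedule = retry_schedule()
    print("first delays:", schedule[:10])
    print("retries that fit in the window:", len(schedule))
    print("total wait (s):", sum(schedule))
```

Under these assumptions the delay reaches the cap after a handful of attempts, so most of the window is spent retrying at the 5-minute interval.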

Will your incident response team fight or freeze when a cyberattack hits?

CISOs shouldn’t be surprised to hear that even well-prepared teams can have

moments of paralysis; it’s just human nature, McKeown says. She says sometimes

responders may experience cognitive narrowing, where they’re so focused on the

situation directly in front of them that they can’t consider the full

circumstances—an experience that can stop responders from thinking as they

normally would. Niel Harper, an enterprise cybersecurity leader who serves as

a board director with the governance association ISACA, witnessed a team

freeze in response to a ransomware attack on his first day working with a

company as an advisor. “They literally did not know what to do, even though

they had some experience with [incident response] walkthroughs,” he recalls.

“They were in panic mode.” Harper says he has seen other situations where the

response was stymied and thus delayed. In some cases, teams were afraid that

they’d be seen as overreacting. In others, they were paralyzed with the fear

of being blamed.

Why 2023 is the time to consider security automation

Security automation done right doesn’t usually mean replacing human

intelligence and ability – rather, it aims to give people the requisite power

to strengthen the organization’s security posture and mitigate threats.

Security automation doesn’t necessarily have to be exotic. Especially if

you’re just starting out, some of the simplest automation can have

considerable impacts. ... “Over the last several years, engineering teams have

automated nearly all of their development and deployment processes across APIs

in CI/CD pipelines and unfortunately, security has oftentimes been an

afterthought,” says Paul Nguyen, co-founder and co-CEO of Permiso.

“Accordingly, attackers have leveraged stolen API keys and compromised service

tokens as methods to infiltrate a network or service and move laterally.” The

course correction isn’t to dump DevOps and CI/CD pipelines, obviously – it’s

to better secure them, and automation is key. So is DevSecOps. “It’s time for

security teams to embrace automation and bolster their defenses in order to be

able to respond to the modern tactics of bad actors,” Nguyen says.
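As one example of the simple automation mentioned above, a pipeline step can scan committed text for strings that look like AWS access key IDs. This is a hedged sketch only: the `find_leaked_key_ids` helper is hypothetical, it checks just the well-known `AKIA`/`ASIA` prefix format, and it is not a substitute for a dedicated secret scanner.

```python
import re

# AWS access key IDs are 20 characters: a 4-letter prefix such as AKIA
# (long-term) or ASIA (temporary) followed by 16 uppercase alphanumerics.
AWS_KEY_ID = re.compile(r"\b(?:AKIA|ASIA)[0-9A-Z]{16}\b")


def find_leaked_key_ids(text):
    """Return (line_number, key_id) pairs for each suspected key ID in text."""
    hits = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        for match in AWS_KEY_ID.finditer(line):
            hits.append((lineno, match.group(0)))
    return hits


if __name__ == "__main__":
    # AKIAIOSFODNN7EXAMPLE is the placeholder key ID used in AWS documentation.
    config = 'region = "us-east-1"\naccess_key = "AKIAIOSFODNN7EXAMPLE"\n'
    for lineno, key_id in find_leaked_key_ids(config):
        print(f"line {lineno}: possible AWS access key ID {key_id}")
```

A CI job could fail the build whenever this returns a non-empty list, which turns a manual review step into an automatic gate.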

Quote for the day:

"You can't delegate accountability" --

Gordon Tredgold