7 ways to foster IT innovation

Asking your team to be innovative is like asking an athlete to play better.

While it may feel motivational and instructive to say it, it’s most often

taken as disapproving and vague by the person receiving it. So if you want

people to innovate, define specifically what you’re looking for them to do.

Think specificity. My definition of IT innovation: The successful creation,

implementation, enhancement, or improvement of a technical process, business

process, software product, hardware product, or cultural factor that reduces

costs, enhances productivity, increases organizational competitiveness, or

provides other business value. ... Building an innovative culture is not only

people-oriented but process-oriented. You must develop a formalized process

that identifies, collects, evaluates and implements innovative ideas. Without

this process, great ideas and potential innovations die on the vine. There

also has to be an appreciation and understanding that innovative ideas can

come from many directions, including your employees, internal business

partners, customers, vendors, competitors, or through accidental discovery.

Shift Left Approach for API Standardization

Having clear and consistent API design standards is the foundation for a good

developer and consumer experience. They let developers and consumers

understand your APIs quickly and effectively, reduce the learning

curve, and enable them to build to a set of guidelines. API standardization

can also improve team collaboration, provide guiding principles that reduce

inaccuracies and delays, and contribute to a reduction in overall development

costs. Standards are so important to the success of an API strategy that many

technology companies, like Microsoft, Google, and IBM, as well as industry

organizations like SWIFT, TMForum and IATA, use and support the OpenAPI

Specification (OAS) as their foundational standard for defining RESTful APIs.

... The term “shift left” refers to a practice in software development in

which teams begin testing earlier in the development cycle so that they can

focus on quality and on preventing problems instead of detecting them.
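
To make the shift-left idea concrete, here is a minimal sketch of a design-time check that could run against an OpenAPI document before any implementation exists. The two rules it enforces (kebab-case path segments, and an `operationId` and `summary` on every operation) are illustrative assumptions, not standards taken from the article, and `lint_openapi` and the sample `spec` are hypothetical names invented for this example.

```python
import re

# HTTP methods that may appear as operation keys in an OpenAPI path item.
HTTP_METHODS = {"get", "put", "post", "delete", "patch", "head", "options", "trace"}

# Assumed house rules: lowercase kebab-case segments, or a {pathParameter}.
KEBAB_SEGMENT = re.compile(r"^[a-z0-9]+(-[a-z0-9]+)*$")
PATH_PARAM = re.compile(r"^\{[A-Za-z0-9_]+\}$")


def lint_openapi(spec: dict) -> list[str]:
    """Return a list of human-readable rule violations for an OpenAPI document."""
    problems = []
    for path, item in spec.get("paths", {}).items():
        # Rule 1: every path segment is kebab-case or a path parameter.
        for segment in filter(None, path.split("/")):
            if not (KEBAB_SEGMENT.match(segment) or PATH_PARAM.match(segment)):
                problems.append(f"{path}: segment '{segment}' is not kebab-case")
        # Rule 2: every operation carries an operationId and a summary.
        for method, operation in item.items():
            if method not in HTTP_METHODS:
                continue  # skip non-operation keys such as "parameters"
            for field in ("operationId", "summary"):
                if field not in operation:
                    problems.append(f"{method.upper()} {path}: missing {field}")
    return problems


# Hypothetical specification: the first path follows the rules, the second does not.
spec = {
    "openapi": "3.0.3",
    "info": {"title": "Orders API", "version": "1.0.0"},
    "paths": {
        "/orders/{orderId}": {
            "get": {
                "operationId": "getOrder",
                "summary": "Fetch one order",
                "responses": {"200": {"description": "OK"}},
            }
        },
        "/Order_Items": {
            "post": {"responses": {"201": {"description": "Created"}}}
        },
    },
}

for problem in lint_openapi(spec):
    print(problem)
```

Run as a pre-merge check, a script along these lines would flag the second path at design time, which is the point of shifting left: the inconsistency is caught before consumers ever see it.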

3 ways CIOs can empower their teams during uncertainty

By understanding data from different parts of the business, CIOs are in a unique

position to see first-hand what efforts are producing the highest return. They

can also identify gaps in knowledge and efficiency. Data analytics provide

information used to set goals and expectations that allow the company to adapt

in real-time as priorities change. As data stewards, CIOs will determine the

origin of the most relevant data points and must be able to present these to

other C-suite executives to help them make the best-informed choices. ... As the

face of the IT department, CIOs can set the tone for a company’s culture, both

inside and outside the building’s walls. They can articulate why new digital

technologies are implemented and foster a forward-thinking environment.

Additionally, they can connect the day-to-day actions of IT with their greater

strategic vision. ... CIOs can help drive enterprise agility by always putting

the customer at the center of decisions. The CIO can collaborate closely with

business leaders to understand the business priorities and then develop a plan

for how technology can drive the most value for the customer.

In defense of “quiet working”

Leaders need to raise their game and do their part to make work more engaging

and crack down on bad managers who make life miserable for their teams. They

need to more clearly articulate how people can contribute and what is expected

of them. Companies need to rethink the “why” behind return-to-office policies,

for example, so they don’t just feel like ham-handed directives based on a lack

of trust in employee productivity. This issue of quiet quitting is fraught, and

I want to be clear that there is a balance of shared responsibility here. Bad

bosses give their employees plenty of reasons to throw up their hands and

disengage. Companies need to make work more engaging beyond just coming up with

lofty purpose statements. But let’s also give a shout-out to the value of a

strong work ethic. A lot of companies are making progress and doing their part

to try to figure out the new world of work. And so are the #quietworking

employees. Green’s story captures a quality I’ve always admired in many people:

they own their job, whatever it is.

Cancer Testing Lab Reports 2nd Major Breach Within 6 Months

The narrow time span between CSI's two major health data breaches will

potentially raise red flags with regulators, says Greene, a former senior

adviser at HHS OCR. "HHS OCR will often look at what actions the entity took in

response to the first data breach and whether the multiple breaches were due to

a similar systematic failure, such as a failure to conduct an enterprisewide

risk analysis," he says. While there are definite negatives involving major

breaches being reported within a short time frame, there can also be a sliver of

optimism related to the subsequent incident. ... "While multiple breaches may

reflect widespread information security issues, I have also seen it occur

for more positive reasons, such as an entity improving already-good audit

practices and, as a result, detecting more cases of users abusing their access

privileges." ... "We believe the access to a single employee mailbox occurred

not to access patient information, but rather as part of an effort to commit

financial fraud on other entities by redirecting CSI customer health care

provider payments to an account posing as CSI using a fictitious email address,"

CSI says.

Ransomware: This is how half of attacks begin, and this is how you can stop them

While over half of ransomware incidents examined started with attackers

exploiting internet-facing vulnerabilities, compromised credentials – usernames

and passwords – were the entry point for 39% of incidents. There are several

ways that usernames and passwords can be stolen, including phishing attacks or

infecting users with information-stealing malware. It's also common for

attackers to simply breach weak or common passwords with brute-force attacks.

Other methods that cyber criminals have used as the initial entry point for

ransomware attacks include malware infections, phishing, drive-by downloads, and

exploiting network misconfigurations. No matter which method is used to initiate

ransomware campaigns, the report warns that "ransomware remains a major threat

and one that feeds on gaps in security control frameworks". Despite the

challenges that can be associated with preparing for ransomware and other

malicious cyber threats – especially in large enterprise environments –

Secureworks researchers suggest that applying security patches is one of the key

things organisations can do to help protect their networks.

Landmark US-UK Data Access Agreement Begins

“The Data Access Agreement will allow information and evidence that is held by

service providers within each of our nations and relates to the prevention,

detection, investigation or prosecution of serious crime to be accessed more

quickly than ever before,” noted a joint statement penned between Washington and

London. “This will help, for example, our law enforcement agencies gain more

effective access to the evidence they need to bring offenders to justice,

including terrorists and child abuse offenders, thereby preventing further

victimization.” However, legal experts have also warned that any UK service

providers responding to requests from US law enforcers would have to consider

whether there was a “legal basis” for data transfers under the GDPR. Data

flowing the other way would not be subject to the same concerns given the

European Commission’s adequacy decision regarding the UK. That said, Cooley

predicted that Overseas Production Orders (OPOs) would still come under intense legal scrutiny.

What IT will look like in 2025

To succeed, both now and as the future unfolds, CIOs will need to synthesize a

range of technologies cohesively to deliver experiences, functionalities, and

services to employees, partners, and most definitely customers. “When you

think about 2025, our teams will continue to focus on serving both customers,

internal and external, and to find ways to make our business better on a daily

basis,” says Richard A. Hook, executive vice president and CIO of Penske

Automotive Group and CIO of Penske Corp. “In addition, our teams will continue

to evolve their skills to ensure everyone has at least a security baseline of

knowledge (deeper depending on roles), increased depth on various cloud

platforms and configurations, and the skills necessary to build automation

within IT and the business.” ... “We see that leaders increasingly recognize

the next phase of new value will come from transformational efforts — seeking

to change their business models, finding new forms of digitalized products and

services, new ways to reach new customer segments, etc.,” says Gartner’s

Tyler.

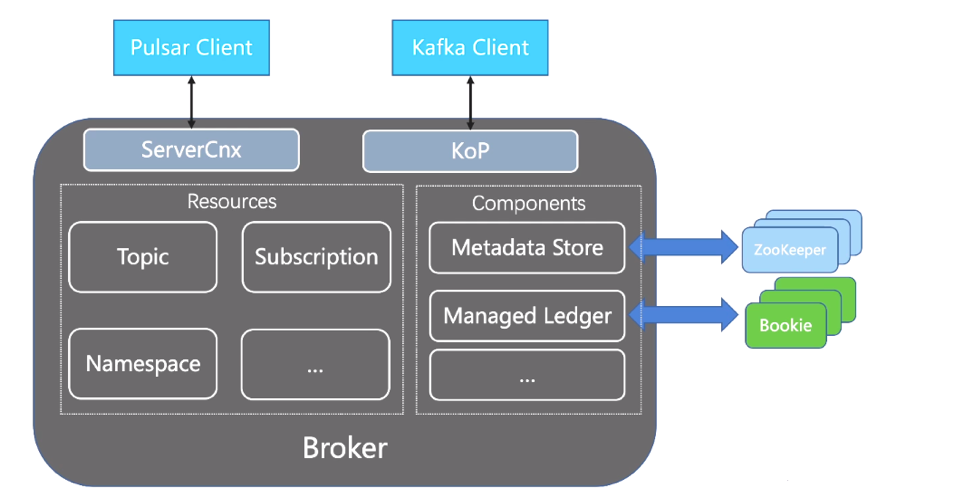

Understanding Kafka-on-Pulsar (KoP): Yesterday, Today, and Tomorrow

To provide a smoother migration experience for users, the KoP community came

up with a new solution. They decided to bring the native Kafka protocol

support to Pulsar by introducing a Kafka protocol handler on Pulsar brokers.

Protocol handlers were a new feature introduced in Pulsar 2.5.0. They allow

Pulsar brokers to support other messaging protocols, including Kafka, AMQP,

and MQTT. Compared with the above-mentioned migration plans, KoP features the

following key benefits:

No Code Change: Users do not need to modify any code in their Kafka

applications, including clients written in different languages, the

applications themselves, and third-party components.

Great Compatibility: KoP is compatible with the majority of tools in the Kafka

ecosystem. It currently supports Kafka 0.9+.

Direct Interaction With Pulsar Brokers: Before KoP was designed, some users

tried to make the Pulsar client serve the request sent by the Kafka client by

creating a proxy layer in the middle. This might impact performance as it

entailed additional routing requests. By comparison, KoP allows clients to

directly communicate with Pulsar brokers without compromising performance.
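
The "No Code Change" benefit can be pictured with a small sketch: if the claim holds, the only thing that differs between talking to a Kafka cluster and talking to a KoP-enabled Pulsar broker is the bootstrap address in the client's settings. The host names, port, topic, and helper functions below are hypothetical, and the sketch assumes the third-party `kafka-python` client; it has not been verified against a real KoP deployment.

```python
# Hypothetical listener addresses; substitute the ones from your own clusters.
KAFKA_CLUSTER = "kafka.example.internal:9092"
KOP_BROKER = "pulsar.example.internal:9092"


def producer_settings(bootstrap_servers: str) -> dict:
    """Build Kafka producer settings; only the address is environment-specific."""
    return {"bootstrap_servers": bootstrap_servers, "acks": "all"}


def send_greeting(settings: dict, topic: str = "demo-topic") -> None:
    """Send one message with an ordinary Kafka client (needs a reachable broker)."""
    from kafka import KafkaProducer  # third-party: pip install kafka-python

    producer = KafkaProducer(**settings)
    producer.send(topic, b"hello from an unmodified Kafka client")
    producer.flush()
    producer.close()


# The application code above stays the same; only the address it is given changes.
before = producer_settings(KAFKA_CLUSTER)
after = producer_settings(KOP_BROKER)
changed = {key for key in before if before[key] != after[key]}
print(changed)
```

Calling `send_greeting(after)` would exercise the same producer code path against the Pulsar broker, provided a Kafka protocol handler is listening at that address.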

How to Adapt to the New World of Work

Burnout is a real and serious issue facing the workforce, with 43% of

employees stating they are somewhat or very burnt out. Burnout is a

combination of exhaustion, cynicism, and lack of purpose at work. This burden

results in employees feeling worn out both physically and mentally, unable to

bring their best to work. It often causes employees to take long leaves of

absence in an attempt to recover and is a key driver of turnover, as they seek

new roles that they hope will reinvigorate their passion and drive. Sources of

burnout might include overwork, lack of necessary support or resources, or

unfair treatment. Feedback tools can help find the root causes of burnout and

how to mitigate them. Implementing wellness tools is another way to address

this issue and demonstrate that the company prioritizes mental health.

Employees whose organizations provide wellness tools are less likely to be

extremely burnt out. Currently, only 26% of tech employees say their company

provides wellbeing support tools.

Quote for the day:

"Leadership is the wise use of power.

Power is the capacity to translate intention into reality and sustain it."

-- Warren Bennis