How edge computing will support the metaverse

Edge computing supports the metaverse by minimizing network latency, reducing

bandwidth demands and storing significant data locally. Edge computing, in this

context, means compute and storage power placed closer to a metaverse

participant, rather than in a conventional cloud data center. Latency increases

with distance—at least for current computing and networking technologies.

Quantum physics experiments can convey information at a distance without

significant delay, but those aren’t systems we can scale or use for standard

purposes—yet. In a virtual world, you experience latency as lag: A character

might appear to hesitate a bit as it moves. Inconsistent latency produces

movement that might appear jerky or communication that varies in speed. Lower

latency, in general, means smoother movement. Edge computing can also help

reduce bandwidth, since calculations get handled by either an on-site system or

one nearby, rather than a remote location. Much as a graphics card works in

tandem with a CPU to handle calculations and render images with less stress on

the CPU, an edge computing architecture moves calculations closer to the

metaverse participant.
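The link between distance and latency can be put into rough numbers. The sketch below estimates only the propagation delay over fiber, assuming signals travel at about 200,000 km/s (roughly two-thirds of the speed of light in a vacuum). The site names and distances are illustrative, and real round trips add routing, queuing and processing time on top.

```python
# Rough propagation-delay estimate; ignores routing, queuing and processing time.
SPEED_IN_FIBER_KM_PER_S = 200_000  # assumption: about 2/3 of light speed in vacuum


def round_trip_ms(distance_km: float) -> float:
    """Return the minimum round-trip time in milliseconds over fiber."""
    return 2 * distance_km / SPEED_IN_FIBER_KM_PER_S * 1000


# Illustrative distances, not measurements.
for label, km in [("edge site", 50), ("distant cloud region", 2_000)]:
    print(f"{label}: {round_trip_ms(km):.1f} ms")
```

Under these assumptions, the distant region costs tens of times more propagation delay per round trip than the nearby edge site, before any other overhead is counted.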

Big Gains In Tech Slowed By Talent Gaps And High Costs, Executive Survey Finds

Survey participant Andrew Whytock, head of digitalization in Siemens’

pharmaceutical division, crystallized the criticality of employee recruitment,

training and retention, explaining, “It’s great having a big tech strategy, but

employers are struggling to find the people to execute their plans.” In addition

to growth needs, staffing problems extend to fortifying cybersecurity. Nearly

60% of respondents reported that cybersecurity objectives are behind schedule.

When asked to identify the “internal challenges” driving delays, executives

ranked “lack of key skills” and “cultural obstacles” highest. That’s

inexcusable. Lax tech controls and strategy acceleration pressure make a

dangerous mix. To thrive, “digitally mature” enterprises need top talent in

supportive cultures to unlock the transformative value of their sizable IT

modernization investments. ... Despite huge investments in job training and

leadership development, broad business perspective remains a widespread skill

gap.

How to design a data architecture for business success

“Data architecture is many things to many people and it is easy to drown in an

ocean of ideas, processes and initiatives,” says Tim Garrood, a data

architecture expert at PA Consulting. Firms need to ensure that data

architecture projects deliver value to the business, he adds, and this needs

knowledge and skills, as well as technology. However, part of the challenge for

CIOs and CDOs is that technology is driving complexity in both data management

and how it is used. As management consultancy McKinsey put it in a 2020 paper:

“Technical additions – from data lakes to customer analytics platforms to stream

processing – have increased the complexity of data architectures enormously.”

This is making it harder for firms to manage their existing data and to deliver

new capabilities. The move away from traditional relational database systems to

much more flexible data structures – and the ability to capture and process

unstructured data – gives organisations the potential to do far more with data

than ever before. The challenge for CIOs and CDOs is to tie that opportunity

back to the needs of the business.

What Is Cloud Orchestration?

Cloud orchestration is the coordination and automation of workloads,

resources, and infrastructure across public and private cloud environments, so

that the cloud system as a whole is automated. Each part should work together to

produce an efficient system. Cloud automation is a subset of cloud

orchestration focused on automating the individual components of a cloud

system. Cloud orchestration and automation complement each other to produce an

automated cloud system. ... Cloud orchestration supports the DevOps framework

by allowing continuous integration, monitoring, and testing. Cloud

orchestration solutions manage all services so that you get more frequent

updates and can troubleshoot faster. Your applications are also more secure as

you can patch vulnerabilities quickly. The journey towards full cloud

orchestration is hard to complete. To make the transition more manageable, you

can find benefits along the way with cloud automation. For example, you might

automate the database component to speed up manual data handling or install a

smart scheduler for your Kubernetes workloads.
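One way to picture the difference between automation and orchestration is a minimal sketch in which each function is a single automated task and the orchestrator only decides the order in which they run. The task names and the dependency map are hypothetical, and Python's standard `graphlib` module stands in for a real orchestration tool.

```python
from graphlib import TopologicalSorter

# Hypothetical automated tasks; each stands in for one "cloud automation" step.
TASKS = {
    "network": lambda: "network ready",
    "database": lambda: "database ready",
    "app": lambda: "app deployed",
    "monitoring": lambda: "monitoring attached",
}

# Each task maps to the tasks that must finish before it starts.
DEPENDS_ON = {
    "database": {"network"},
    "app": {"database"},
    "monitoring": {"app"},
}


def orchestrate(tasks, depends_on):
    """Run every task once, in an order that respects the dependencies."""
    order = TopologicalSorter(depends_on).static_order()
    return [(name, tasks[name]()) for name in order]


for name, result in orchestrate(TASKS, DEPENDS_ON):
    print(f"{name}: {result}")
```

Automating any one task already pays off on its own; the orchestration layer adds the ordering between them.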

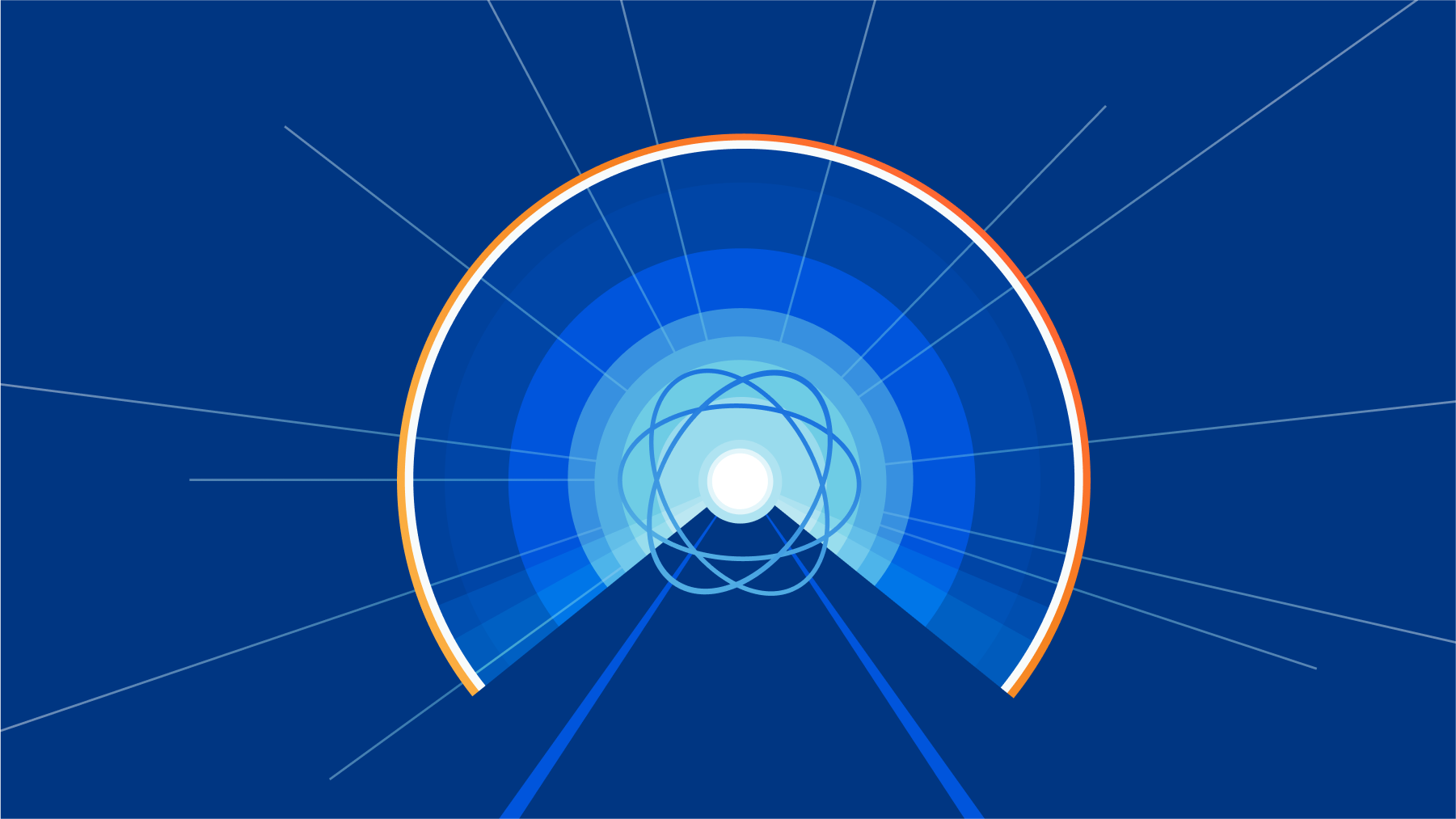

Introducing post-quantum Cloudflare Tunnel

From tech giants to small businesses: we will all have to make sure our

hardware and software are updated so that our data is protected against the

arrival of quantum computers. It seems far away, but it’s not a problem for

later: any encrypted data captured today can be broken by a sufficiently

powerful quantum computer in the future. ... How does it work? cloudflared

creates long-running connections to two nearby Cloudflare data centers, for

instance San Francisco and one other. When your employee visits your domain,

they connect to a Cloudflare server close to them, say in Frankfurt. That

server knows that this is a Cloudflare Tunnel and that your cloudflared has a

connection to a server in San Francisco, and thus it relays the request to it.

In turn, via the reverse connection, the request ends up at cloudflared,

which passes it to the webapp via your internal network. In essence,

Cloudflare Tunnel is a simple but convenient tool, but the magic is in what

you can do on top of it: you get Cloudflare’s DDoS protection for free,

fine-grained access control with Cloudflare Access, and request logs, just

to name a few.
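The relay path described above can be modeled in a few lines. This is a toy, in-process sketch and not Cloudflare's implementation: the class names, the `accept_outbound_connection` method and the hostname are all hypothetical, and no real networking or encryption is involved.

```python
class Connector:
    """Stands in for cloudflared, running next to the web app on the internal network."""

    def __init__(self, webapp):
        self.webapp = webapp

    def handle(self, path):
        # Pass the relayed request to the web app and return its response.
        return self.webapp(path)


class EdgeServer:
    """Stands in for one data center that holds long-running tunnel connections."""

    def __init__(self, city):
        self.city = city
        self.tunnels = {}  # hostname -> Connector that dialed out to this server

    def accept_outbound_connection(self, hostname, connector):
        self.tunnels[hostname] = connector


class Network:
    """Hypothetical lookup of which data center holds the tunnel for a hostname."""

    def __init__(self, servers):
        self.servers = servers

    def request(self, entry_city, hostname, path):
        hops = [entry_city]  # the visitor connects to a server close to them
        for server in self.servers:
            if hostname in server.tunnels:
                if server.city != entry_city:
                    hops.append(server.city)  # relay to the server holding the tunnel
                hops.append("cloudflared")  # back down the reverse connection
                return hops, server.tunnels[hostname].handle(path)
        raise LookupError(f"no tunnel registered for {hostname}")


san_francisco, frankfurt = EdgeServer("San Francisco"), EdgeServer("Frankfurt")
network = Network([san_francisco, frankfurt])
san_francisco.accept_outbound_connection(
    "intranet.example.com", Connector(lambda path: f"200 OK {path}")
)
print(network.request("Frankfurt", "intranet.example.com", "/wiki"))
```

The point of the model is that the connector always dials out, so the web app never has to accept inbound connections from the internet.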

What are the benefits of a microservices architecture?

The benefit of a microservice architecture is that developers can deploy

features that prevent cascading failures. A variety of tools are also

available, from GitLab and others, to build fault-tolerant microservices that

help improve the resilience of the infrastructure. A microservice application

can be programmed in any language, so dev teams can choose the best language

for the job. The fact that microservices architectures are language agnostic

also allows the developers to use their existing skill sets to maximum

advantage – no need to learn a new programming language just to get the work

done. Using cloud-based microservices gives developers another advantage, as

they can access an application from any internet-connected device, regardless

of its platform. A microservices architecture lets teams deploy independent

applications without affecting other services in the architecture. This

feature will enable developers to add new modules without redesigning the

system's complete structure. Businesses can efficiently add new features as

needed under a microservices architecture.
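The excerpt does not say which features prevent cascading failures. One common pattern is a circuit breaker, sketched here as an assumption rather than as the article's method. The class name, thresholds and injectable clock are illustrative choices.

```python
import time


class CircuitOpenError(RuntimeError):
    """Raised when calls are rejected because the downstream service looks unhealthy."""


class CircuitBreaker:
    def __init__(self, max_failures=3, reset_after_s=30.0, clock=time.monotonic):
        self.max_failures = max_failures
        self.reset_after_s = reset_after_s
        self.clock = clock  # injectable so the cool-down can be tested without sleeping
        self.failures = 0
        self.opened_at = None  # None means the circuit is closed (calls allowed)

    def call(self, func, *args, **kwargs):
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_after_s:
                # Fail fast instead of piling more load on a struggling service.
                raise CircuitOpenError("circuit open; failing fast")
            # Cool-down elapsed: allow one trial call; a single failure reopens.
            self.opened_at = None
            self.failures = self.max_failures - 1
        try:
            result = func(*args, **kwargs)
        except Exception:
            self.failures += 1
            if self.failures >= self.max_failures:
                self.opened_at = self.clock()
            raise
        self.failures = 0  # a success closes the circuit fully
        return result
```

A caller would wrap each remote call, for example `breaker.call(fetch_profile, user_id)` with a hypothetical `fetch_profile`, and treat `CircuitOpenError` as a signal to serve a fallback.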

Tips for effective data preparation

According to TechRepublic, data preparation is “the process of cleaning,

transforming and restructuring data so that users can use it for analysis,

business intelligence and visualization.” AWS’s definition is even simpler:

“Data preparation is the process of preparing raw data so that it is suitable

for further processing and analysis.” But what does this actually mean in

practice? Data doesn’t typically reach enterprises in a standardized format

and, thus, needs to be prepared for enterprise use. Some of the data is

structured, like customer names, addresses and product preferences, while most

is almost certainly unstructured, like geospatial data, product reviews, mobile

activity and tweets. Before data scientists can run machine learning models to

tease out insights, they’re first going to need to transform the data,

reformatting it or perhaps correcting it, so it’s in a consistent format that

serves their needs. ... In addition, data preparation can help to reduce data

management costs that balloon when you try to apply bad data to otherwise good

ML models. Now, given the importance of getting data preparation right, what

are some tips for doing it well?
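As a small illustration of what "reformatting or correcting" can look like, the sketch below normalizes names, dates and amounts and drops duplicate rows using only the standard library. The sample records, field names and accepted date formats are made up for the example.

```python
from datetime import datetime

# Made-up raw records with inconsistent formatting and one exact duplicate.
RAW = [
    {"name": "  alice SMITH ", "signup": "2022-10-03", "spend": "120.50"},
    {"name": "Bob Jones", "signup": "03/10/2022", "spend": "n/a"},
    {"name": "  alice SMITH ", "signup": "2022-10-03", "spend": "120.50"},
]

DATE_FORMATS = ("%Y-%m-%d", "%d/%m/%Y")  # assumption: the only formats present


def parse_date(text):
    """Return an ISO date string, or None if no known format matches."""
    for fmt in DATE_FORMATS:
        try:
            return datetime.strptime(text, fmt).date().isoformat()
        except ValueError:
            continue
    return None


def parse_amount(text):
    """Return a float, or None for placeholders such as 'n/a'."""
    try:
        return float(text)
    except ValueError:
        return None


def prepare(rows):
    """Normalize each row and keep the first occurrence of each (name, signup) pair."""
    seen, cleaned = set(), []
    for row in rows:
        record = {
            "name": " ".join(row["name"].split()).title(),
            "signup": parse_date(row["signup"]),
            "spend": parse_amount(row["spend"]),
        }
        key = (record["name"], record["signup"])
        if key not in seen:
            seen.add(key)
            cleaned.append(record)
    return cleaned


for record in prepare(RAW):
    print(record)
```

Unparseable values become `None` rather than being guessed, so downstream models can decide how to treat missing data.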

Optimizing Isolation Levels for Scaling Distributed Databases

The SnapshotRead isolation level, although not an ANSI standard, has been

gaining popularity. It is typically implemented using MVCC. The advantage of this

isolation level is that it is contention-free: it creates a snapshot at the

beginning of the transaction. All reads are sent to that snapshot without

obtaining any locks. But writes follow the rules of strict Serializability. A

SnapshotRead transaction is most valuable for a read-only workload because you

can see a consistent database snapshot. This avoids surprises while loading

different pieces of data that depend on each other transactionally. You can

also use the snapshot feature to read multiple tables at a particular time and

then later observe the changes that have occurred since that snapshot. This

functionality is convenient for Change Data Capture tools that want to stream

changes to an analytics database. For transactions that perform writes, the

snapshot feature is not that useful. You mainly want to control whether to

allow a value to change after the last read. If you want to allow the value to

change, it will be stale as soon as you read it because someone else can

update it later.
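The snapshot behavior described above can be sketched with a toy multi-version store. This is a single-threaded, in-memory illustration and not how any particular database implements it. The class and method names are hypothetical, and a simple counter stands in for commit timestamps.

```python
class VersionedStore:
    """Keeps every committed version of each key, tagged with a commit timestamp."""

    def __init__(self):
        self.versions = {}  # key -> list of (commit_ts, value), oldest first
        self.clock = 0  # logical timestamp, incremented on every write

    def write(self, key, value):
        self.clock += 1
        self.versions.setdefault(key, []).append((self.clock, value))
        return self.clock

    def snapshot(self):
        """Pin the current timestamp; no locks are taken."""
        return Snapshot(self, self.clock)

    def read_at(self, key, ts):
        # Return the newest version committed at or before ts.
        for commit_ts, value in reversed(self.versions.get(key, [])):
            if commit_ts <= ts:
                return value
        return None

    def changes_since(self, ts):
        """List (commit_ts, key, value) for versions newer than ts, as a CDC tool might."""
        return sorted(
            (commit_ts, key, value)
            for key, history in self.versions.items()
            for commit_ts, value in history
            if commit_ts > ts
        )


class Snapshot:
    def __init__(self, store, ts):
        self.store = store
        self.ts = ts

    def read(self, key):
        return self.store.read_at(key, self.ts)


store = VersionedStore()
store.write("balance", 100)
store.write("stock", 5)
snap = store.snapshot()
store.write("balance", 80)  # a later write does not disturb the snapshot
print(snap.read("balance"), store.snapshot().read("balance"))
print(store.changes_since(snap.ts))
```

Reads through `snap` keep seeing the values as of the snapshot, while `changes_since` exposes what was committed afterwards.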

Why IT leaders should embrace a data-driven culture

Data tells the story of what works – and perhaps more importantly, what

doesn’t work – for your team. It provides a clear and unbiased picture of how

new transformations are netting out and where opportunities lie to increase

efficiency and value. Utilizing the right metrics reveals which innovations

are most effective for the team, letting IT managers know how transformations

are running. Focusing on these results helps organizations streamline business

processes and leads to higher team productivity. It also puts IT leaders on

the path to sunset legacy solutions that require large budgets or lots of

manual work to keep them functional. These changes impact all business areas,

allowing employees anywhere and everywhere – not just those in IT – to be more

innovative and effective. ... As business leaders focus on meeting the needs

of today’s evolving workforce and customers’ desires, operating with a

data-driven strategy lets managers stay agile and confident in their next

steps. Allowing data to drive decisions also provides a means to back those

decisions with clear evidence.

Who is responsible for cyber security in the enterprise?

Alarmingly — or perhaps unfairly — only 8 per cent of executives said that

their CISO or equivalent performs above average in communicating the

financial, workforce, reputational or personal consequences of cyber threats.

At the same time, under 15 per cent of executives gave their CISOs or

equivalent a top rating on a scale of one to ten. Maintaining a bridge

between business and tech is vital when it comes to ensuring all are on the

same page regarding security. “It is no surprise that one of the main

challenges companies face when implementing a cyber risk mitigation or

resiliency plan is the communication gap between the board and the CISO,” said

Anthony Dagostino, founder and CEO of cyber insurance and risk management

provider Converge. “Cyber resiliency starts with the board because they

understand risk and can help their organisations set the appropriate strategy

to effectively mitigate that risk. However, while CISOs are security

specialists, most of them still struggle with adequately translating security

threats into operational and financial impact to their organisations – which

is what boards want to understand.”

Quote for the day:

"You may be good. You may even be better than everyone else. But without a

coach you will never be as good as you could be." -- Andy Stanley
