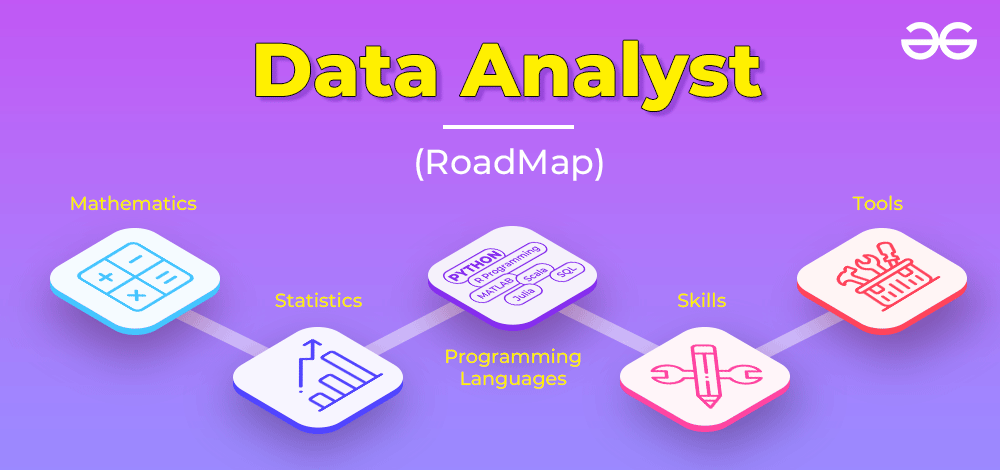

Roadmap to RPA Implementation: Thinking Long Term

Ted Kummert, executive vice president of products and engineering at UiPath,

says robotic process automation (RPA) should be viewed as a long-range capability meant to empower

organizations to evolve strategically and increase business value. It is a

journey that can start small, within one division or one department, and grow

organically across the business as additional ideas form and the organization’s

vision for automation’s potential comes to fruition. He says RPA can clear

backlog, create new capacity, free up resources, and improve data quality by

integrating software robots into workflows. “It is a truly transformative

technology that can reduce or eliminate manual tasks and elevate creative,

high-value work,” Kummert says. “Digital transformation is often talked about,

but many times can fall short of its goals. Automation is the driver to achieve

true digital transformation.” Adam Glaser, senior vice president of engineering

for Appian, says many businesses use one automation technology, adding

third-party capabilities in patchwork fashion to automate complex end-to-end

processes.

How to start and grow a cybersecurity consultancy

To be successful, an entrepreneur must be resilient. Any comment that runs along

the lines of “That’s not possible,” or “That can’t be done” should be treated as

a challenge to prove the speaker wrong. An entrepreneur needs to have the

ability to see through what’s not important. Entrepreneurs don’t just need money

– they also need support in the form of encouragement and advice. I would advise

budding entrepreneurs to attend meetups within their industry or local community

and seek out online support via forums and groups. You’ll be surprised just how

willing others will be to help and offer advice for free. Asking questions,

getting reassurance and sanity checks from peers can be invaluable at all stages

of your business's journey. There will always be someone a little further down

the path you’re taking. Starting a business can be exhilarating, rewarding and

fun, but can be exhausting, relentless and stressful in equal measure.

Surveillance tech firms complicit in MENA human rights abuses

“When operating in conflict-affected or high-risk regions as the MENA region,

the surveillance sector must undertake heightened human rights due diligence

and, if it cannot do so or it identifies evidence of harm, it should stop

selling its technology to companies or governments,” said Dima Samaro, MENA

regional researcher and representative at the Business & Human Rights

Resource Centre. “Lack of adequate due diligence measures by private companies

will only worsen the situation for those from marginalised communities, putting

their lives in jeopardy as the absence of robust regulation and effective

mechanisms in the region allows surveillance technologies to be operated freely

and without scrutiny.” The report added that, although the United Nations’ (UN)

Guiding Principles on Business and Human Rights – which establish that companies

must take proactive and ongoing steps to identify and respond to the potential or

actual human rights impacts of their business – were adopted a decade ago, the

principles’ non-binding, voluntary nature means there are “glaring gaps in

human rights safeguards” at the firms.

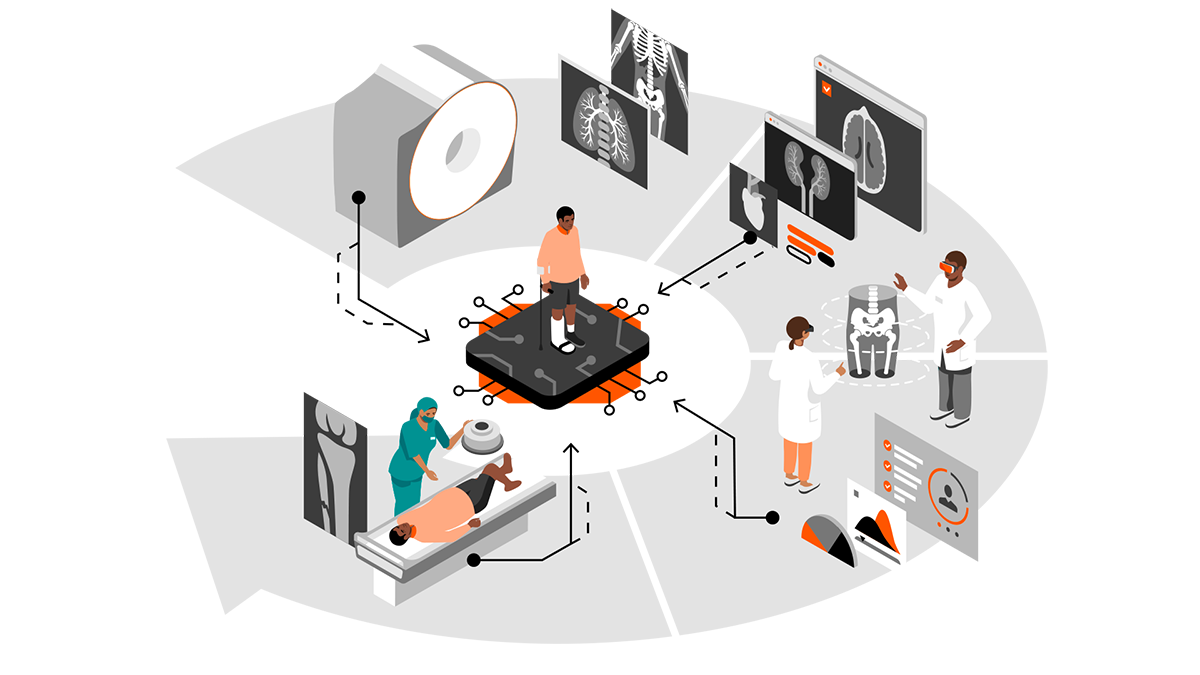

How companies can accelerate transformation

Ensuring that customer value drives technology architecture and investment is

one way to optimize technology usage. Another way is to ensure that an

organization is getting the most out of the investments it has already made.

Inefficiency in any aspect of technology usage represents a drag on businesses’

ability to change quickly. ... While enterprise architects (EAs) play a central

role in identifying opportunities for this type of technology optimization, they

have an even greater role to play when it comes to optimizing the entire IT

landscape. A “business capability” perspective makes this possible. ...

Efficiency doesn’t improve on its own. The business needs to decide to improve

it. Making those decisions, however, is not always easy. As mentioned, relying

on business capabilities to evaluate technology needs is one way to simplify the

decision process. The other is visibility. Business leaders can’t make decisions

if they can’t see the problem. In terms of business architecture, EAs help guide

leaders in the decisions they make by showing them business capability maps,

data-rich process diagrams and dashboards highlighting the connection between

architectural issues and business value.

Optus reveals extent of data breach, but stays mum on how it happened

Optus says its recent data breach impacted 1.2 million customers with at least

one form of identification number that is valid and current. The Australian

mobile operator has also brought in Deloitte to lead an investigation on the

cybersecurity incident, including how it occurred and how it could have been

prevented. Optus said in a statement Monday that Deloitte's "independent

external review" of the breach would encompass the telco's security systems,

controls, and processes. It added that the move was supported by the board of

its parent company Singtel, which had been "closely monitoring" the situation.

Elaborating on Deloitte's forensic assessment, Optus CEO Kelly Bayer Rosmarin

said: "This review will help ensure we understand how it occurred and how we can

prevent it from occurring again. It will help inform the response to the

incident for Optus. This may also help others in the private and public sector

where sensitive data is held and risk of cyberattack exists." In its statement,

Optus added that it had worked with more than 20 government agencies to

determine the extent of the data breach.

Why cyber security strategy must be more than a regulatory tick-box exercise

While technology plays a critical role in an effective cyber security strategy,

it alone does not provide the solution. Business leaders must also consider the

organisation’s processes and people. If organisations don’t have the right

processes or people in place to manage new technologies, it can be easy to

revert to old habits. Many organisations opt for a hybrid Security Operations

Centre to underpin their managed detection and response (MDR) strategy, which combines the cyber skills of

in-house engineers, cyber security teams and a managed security service provider (MSSP) to create a single

facility. MSSPs fill in the gaps in defences while upskilling in-house teams to

stay on top of changing threats and technologies. This approach can also free

in-house staff to drive projects and internal improvements while the MSSP takes

the lead on high-value incidents. If the goal is to improve cyber security

whilst meeting your organisational goals, then regulations will only ever go so

far in tackling the issue. Attacks will continue to plague all sectors and

proper detection, response and remediation will be what makes the difference

between those that make the news and those that don’t.

Mozilla is looking for a scapegoat

Not so long ago, Microsoft’s Internet Explorer dominated market share. Antitrust

authorities helped change that, but Google, not Mozilla, stepped up to take

Microsoft’s place, yet without the bully pulpit of a dominant operating system.

Meanwhile, as far back as 2008, I was writing about Mozilla’s chance to make

Firefox a true community-developed web platform. It didn’t succeed, though

Mozilla has gifted us incredible innovations such as Rust. Clearly there are

smart people at Mozilla and they have demonstrated the ability to push the

envelope on innovation. But not with Firefox. DuckDuckGo has carved out a

growing, sizeable niche in privacy-oriented search, but Mozilla keeps losing

similar ground in browsers. Why? In its report, Mozilla says browser freedom has

been “suppressed for years through online choice architecture and commercial

practices that benefit platforms and are not in the best interest of consumers,

developers, or the open web.” This would be more credible in Mozilla’s mouth if

this weren’t the same company that completely mismanaged its entrance into the

mobile market.

Indonesia Data Protection Law Includes Potential Prison Time

The Indonesia data protection law took some eight years to come to fruition,

with contentious ongoing debate about what government body should oversee the

new regulations and exactly how strong the penalties should be. A recent wave of

cyber attacks and data breaches in the country seems to have prompted

legislative action; Kaspersky reports that the country experienced 11.8 million

cyberattacks in the first quarter of 2022, a 22% increase from the prior year,

and the country has become the leading target for ransomware attacks in

Southeast Asia. This includes data breaches of various government agencies, one

of which exposed the vaccination records of President Joko Widodo. Stats from

SurfShark indicate that Indonesia now has the third-highest rate of data

breaches in the world. Regulation oversight has fallen to the executive branch,

with the President slated to form an oversight body tasked with determining and

administering fines. Similar to the EU’s General Data Protection Regulation

(GDPR), which the Indonesia data protection law drew from substantially, there

is a maximum potential fine of 2% of global annual turnover for violations.

How To Protect Your Reputation After A Hack Or Data Breach

Part of transparency and recovery is working with the relevant authorities and

experts to track the scope of the breach. A post-mortem analysis can be

critical. For one thing, it can determine what data was stolen, by whom and how.

It can also help track where that data ends up and how it is used. In cases

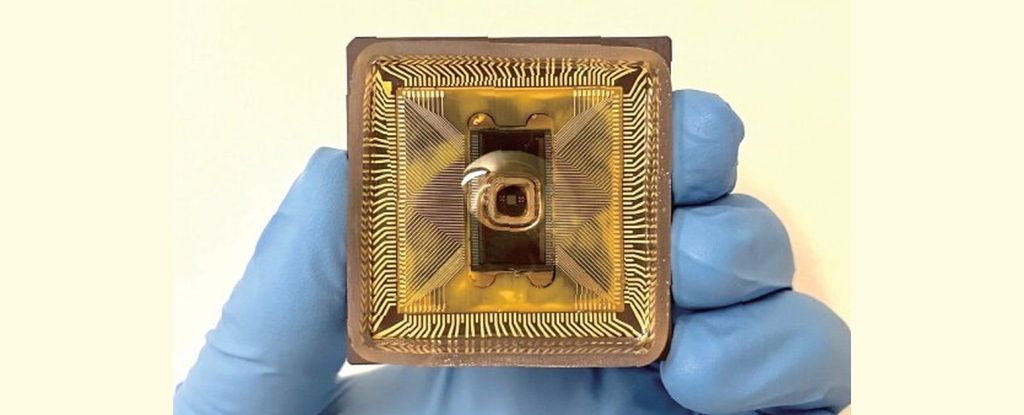

where the cause has something to do with software or hardware being exploited,

it can be essential to inform the developers or manufacturers of the breach and

how it occurred. They may also need to issue patches or recalls to prevent other

businesses using that hardware or software from being compromised. No business

stands alone. ... Recovery after a breach is a sensitive time. You will

undoubtedly see a deluge of negative reviews and bad press, which will be

difficult to counteract. Clear and transparent messaging is part of it; breaches

happen, and there's no surefire way to avoid them. Demonstrating that your data

security policies prevented usable data from being stolen or that you've been

able to protect users proactively can be critical to repairing your

reputation.

Data quality is at the heart of successful data governance

The downstream effects of data quality have ramifications felt throughout data

governance efforts. Recent findings from a survey by Enterprise Strategy Group

showed that data management is greatly challenged by a lack of visibility and

compounded by data quality issues. Concerningly, 42 percent of all respondents

indicated at least half of their data was “dark data” - retained by the

organization, but unused, unmanageable, and unfindable. An influx of dark data

and a lack of data visibility often lead to downstream bottlenecks, impeding

the accuracy and effectiveness of operational data. Data quality was the top

driver for organizations’ data governance programs but was also the top

challenge that these organizations have to overcome to maximize the return on

their data governance efforts. When you consider the fact that many

organizations are experiencing data quality issues, which are difficult to

manage, and in many cases have significant amounts of data that is dark, there

is a clear need for more robust data governance solutions providing data

landscape transparency united with business context and guidance.

Quote for the day:

"Perhaps the ultimate test of a leader

is not what you are able to do in the here and now - but instead what

continues to grow long after you're gone" -- Tom Rath